The prisoner's dilemma game originated in 1950, and thus marks its 70th anniversary this year.

The underlying ideas are remarkably important to social scientists, and to economists in particular, because they describe a situation in which the pursuit of self-interest can make all parties worse off. More broadly, I think the prisoner's dilemma game captures a popular intuition about why society would be better off with more cooperation and less pursuit of self-interest.

But when you look into the prisoner's dilemma game more closely, it also offers insights about what it takes to sustain cooperative behavior, and can help in understanding why the lines between self-interest and cooperation can get so blurry. My goal here is to review the concepts of the prisoner's dilemma game for those not especially familiar with it, and to explain how the prisoner's dilemma helps to illuminate the possibility that the concepts of self-interest and cooperation may often overlap.

I'm not going to provide an intellectual history of the prisoner's dilemma game: the origins of formal game theory in the work of John von Neumann and Oskar Morgenstern, especially in their 1944 classic Theory of Games and Economic Behavior; how this approach spread to the RAND Corporation, where Merrill Flood and Melvin Dresher experimented with a series of different games in 1950, and Flood wrote up the results that included a game with the structure and payoffs of a prisoner's dilemma game in a 1952 working paper; how Albert Tucker was asked to give a talk on these issues to the Stanford psychology department in 1950, and came up with the idea of discussing the game as a dilemma facing a prisoner as a way of describing the ideas to a nontechnical audience. For those stories and a detailed follow-up on applications of the idea over time, I recommend William Poundstone's 1992 book, Prisoner's Dilemma. Poundstone writes:

I asked Flood if he realized the importance of the prisoner's dilemma when he and Dresher conceived it. "I must admit," he answered, "that I never foresaw the tremendous impact that this idea would have on science and society, although Dresher and I certainly did think that our result was of considerable importance ..."

For those interested in a more algebraic overview of the prisoner's dilemma and its relationship to a double-handful of applications and games that are close cousins, Steven Kuhn provides a nice overview in the Stanford Encyclopedia of Philosophy on the "Prisoner's Dilemma" (updated April 2, 2019).

Here's my own description of the prisoner's dilemma game lifted from my Principles of Economics textbook:

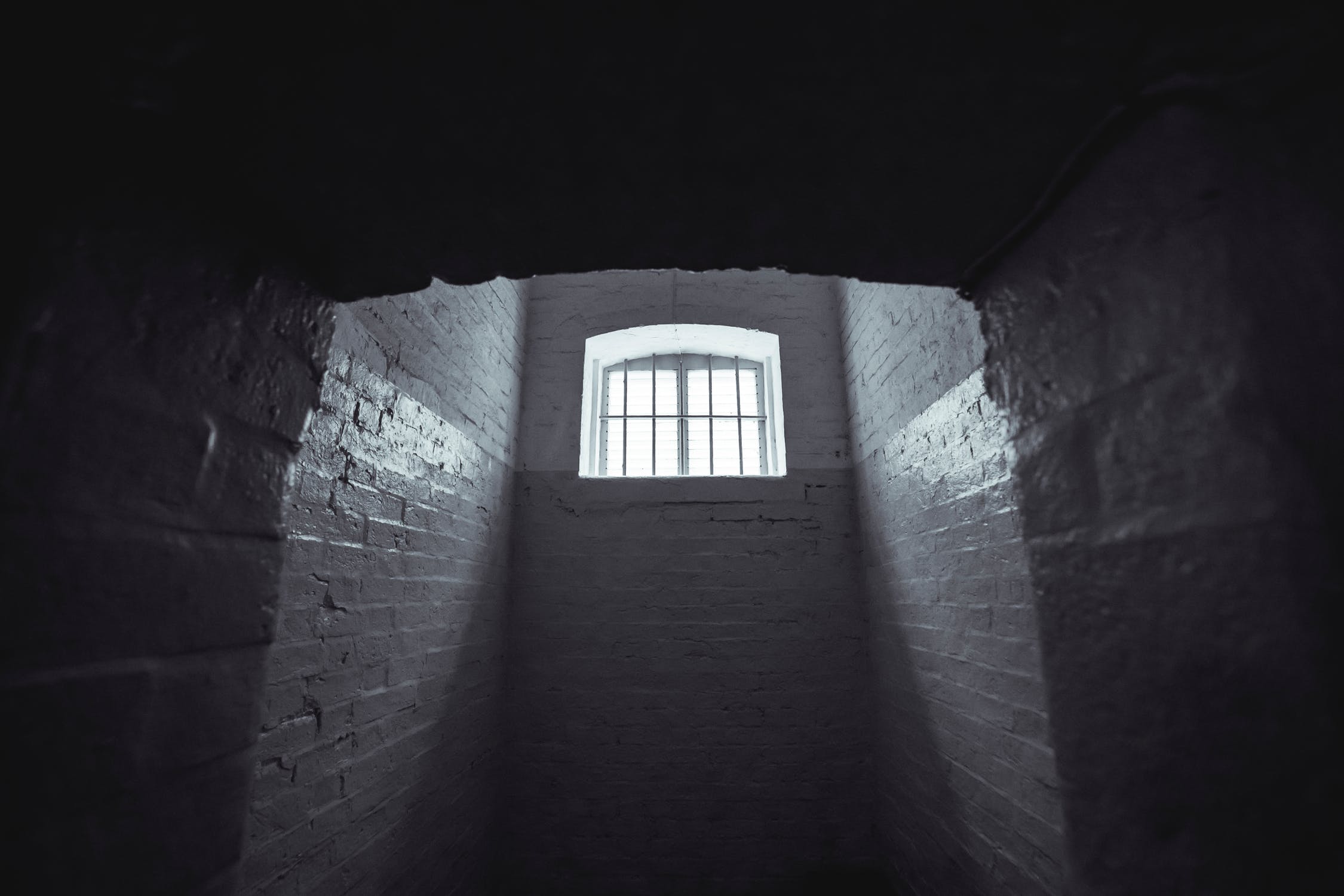

Two prisoners are arrested. When they are taken to the police station, they refuse to say anything and are put in separate interrogation rooms. Eventually, a police officer enters the room where Prisoner A is being held and says: “You know what? Your partner in the other room is confessing. So your partner is going to get a light prison sentence of just one year, and because you’re remaining silent, the judge is going to stick you with eight years in prison. Why don’t you get smart? If you confess, too, we’ll cut your jail time down to five years.” Over in the next room, another police officer is giving exactly the same speech to Prisoner B. What the police officers don’t say is that if both prisoners remain silent, the evidence against them is not especially strong, and the prisoners will end up with only two years in jail each. ...

To understand the prisoner’s dilemma, first consider the choices from Prisoner A’s point of view. If A believes that his partner B will confess, then A ought to confess, too, so as to not get stuck with the eight years in prison. But if A believes that B will not confess, then A will be tempted to act selfishly and confess, so as to serve only one year. The key point is that A has an incentive to confess, regardless of what choice B makes! B faces the same set of choices and thus will have an incentive to confess regardless of what choice A makes. The result is that if the prisoners each pursue their own self-interest, both will confess, and they will end up doing a total of 10 years of jail time between them.

But if the two prisoners had cooperated by both remaining silent, they would only have had to serve a total of four years of jail time between them. If the two prisoners can work out some way of cooperating so that neither one will confess, they will both be better off than if they each follow their own individual self-interest, which in this case leads straight into longer jail terms for both.

So this is the underlying structure of the basic prisoner's dilemma game: two players, each with two choices that can be phrased as "cooperate" or "defect," where defecting means following one's own immediate self-interest. If the game is played one time, neither player has a reason to cooperate. But if both players recognize that the logic of the game applies to both parties, and that if both parties follow the self-interested logic they end up in the worst possible outcome, then they may also recognize that by cooperating they can avoid this worst-for-everyone outcome.
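For readers who like to check the logic mechanically, here is a minimal Python sketch of the two-prisoner example. The jail terms are the ones from the textbook passage above; the names (`YEARS`, `best_reply_for_a`, `total_years`) are just labels I made up for illustration.

```python
# Jail terms in years, taken from the textbook example above.
# Keys are (A's choice, B's choice); values are (A's years, B's years).
SILENT, CONFESS = "silent", "confess"
YEARS = {
    (SILENT, SILENT): (2, 2),
    (SILENT, CONFESS): (8, 1),
    (CONFESS, SILENT): (1, 8),
    (CONFESS, CONFESS): (5, 5),
}


def best_reply_for_a(b_choice):
    """Return the choice that minimizes A's own jail time, given B's choice."""
    return min((SILENT, CONFESS), key=lambda a: YEARS[(a, b_choice)][0])


def total_years(a_choice, b_choice):
    """Combined jail time for both prisoners."""
    return sum(YEARS[(a_choice, b_choice)])


# Whatever B does, A's self-interested best reply is the same.
for b in (SILENT, CONFESS):
    print(f"If B chooses {b}, A's best reply is {best_reply_for_a(b)}")

print("Total years if both confess:", total_years(CONFESS, CONFESS))
print("Total years if both stay silent:", total_years(SILENT, SILENT))
```

Because the table is symmetric, the same check applies to Prisoner B. Confessing is the best reply to either choice by the partner, and yet mutual confession produces 10 years of jail time in total, compared with 4 years if both stay silent.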

The underlying idea of the prisoner's dilemma game was soon applied in the 1960s to the situation of nuclear deterrence. Consider a game with two players, the US and the USSR. The choices are "build more nuclear weapons" or "don't build." Each country will reason as follows: If the other country does build nuclear weapons, then we need to keep up, and so we should build. However, if the other country doesn't build nuclear weapons, then we can improve our geostrategic position by deciding to build, so again we should build. Thus, if both countries pursue this logic of self-interest, a costly arms race results, in which neither side comes out ahead. The challenge is to find a method for the two countries to cooperate in a way where they both decide to make a "not build" choice.

But applications of the prisoner's dilemma game have become very widespread, and many of them have a similar "arms race" interpretation.

Or think about the choice of those in a dispute on whether to hire a lawyer or to use arbitration. Each of the parties may see the choice this way: If the other party hires a lawyer, then I must also hire one, or I risk being underrepresented and losing. If the other party doesn't hire a lawyer, then I should hire one because it gives me a better chance of winning. As a result, both parties hire lawyers in a different sort of "arms race." Orley Ashenfelter, David E. Bloom and Gordon B. Dahl lay out this argument in a 2013 paper, "Lawyers as Agents of the Devil in a Prisoner's Dilemma Game" (Journal of Empirical Legal Studies, 10:3 399-423).

Think about the decision of an athlete as to whether to use performance-enhancing substances. If others use such substances, then I must use them too, or I have no chance of winning. If others do not use such substances, then I can get an advantage by using them. Or think about a firm's decision to advertise. If others advertise, then I must do so or risk losing market share. If others do not advertise, then I should do so to gain market share. The result is the common lament: "Half the money I spend on advertising is wasted, and the trouble is I don't know which half."

However, other versions of the prisoner's dilemma idea are perhaps more comfortably interpreted through the idea of a "free rider" who benefits from the actions of others. (For earlier posts on the history of the "free rider" idea, see here and here.)

For example, consider whether a country should enact costly rules to reduce carbon emissions. One fact about such policies is that a large share of the benefits of actions taken by any country to reduce carbon emissions accrue to other countries--whether those other countries take actions to reduce their own carbon emissions or not. Thus, a country may reason that if it decides to bear the costs of reducing carbon emissions when no other country does so, its policies will have little effect on the overall global problem and so it should decide not to bear such costs. However, if other countries do decide to bear the costs of "carbon-reducing investments," then the problem will largely be addressed and the individual country can decide not to bear such costs.

A similar version of the prisoner's dilemma idea arises for investments in public goods more broadly: that is, if others don't contribute to the cost, I would be foolish to contribute, but if others do contribute to the cost, then my little contribution won't make any difference and I can just be a "free rider" benefiting from the result. The result of this argument is that everyone decides against contributing and the public good doesn't get built. In formal terms, this is an example of a prisoner's dilemma game with many players, each making their own decision.
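The many-player version can be sketched the same way. The numbers below are purely illustrative assumptions of mine, not drawn from any study: ten players each start with one unit, and every unit contributed to the public good creates three units of benefit that are shared equally by all ten players, whether they contributed or not.

```python
N_PLAYERS = 10     # assumed group size (illustrative only)
MULTIPLIER = 3.0   # assumed: each unit contributed creates 3 units of shared benefit


def payoff(contributes, others_contributing):
    """Payoff to one player who starts with 1 unit.

    contributes: True if this player gives the unit to the public good.
    others_contributing: how many of the other N_PLAYERS - 1 players give.
    """
    total_contributed = others_contributing + (1 if contributes else 0)
    shared_benefit = MULTIPLIER * total_contributed / N_PLAYERS
    kept = 0 if contributes else 1
    return kept + shared_benefit


# Free riding pays more no matter how many of the others contribute...
free_riding_always_pays = all(
    payoff(False, k) > payoff(True, k) for k in range(N_PLAYERS)
)
print("Free riding always pays more for the individual:", free_riding_always_pays)

# ...yet everyone is better off if all contribute than if nobody does.
print("Payoff each if everyone contributes:", payoff(True, N_PLAYERS - 1))
print("Payoff each if nobody contributes:", payoff(False, 0))
```

Under these assumed numbers, a contributor gets back only 0.3 of each unit given up, so holding back is always individually better, even though universal contribution would leave every player with three times as much as universal free riding.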

Over the decades, the prisoner's dilemma has been applied to a wide range of other situations. In a recent paper, Graciela Chichilnisky argues that an economy where men tend to specialize in paid market work and women tend to specialize in unpaid family-and-home work can be interpreted as a prisoner's dilemma, and if society could cooperate, it could reach an outcome where both economic and family-based outcomes are improved ("The Widening Gender Gap," Capitalism & Society, 14: 1. 2019). Kuhn reports in his Stanford Encyclopedia of Philosophy overview: "Donninger reports that “more than a thousand articles” about it were published in the sixties and seventies. A Google Scholar search for “prisoner's dilemma” in 2018 returns 49,600 results."

Indeed, one can argue, as Poundstone points out: "Even among the most law-abiding, most transactions are potential prisoner's dilemmas." Poundstone illustrates this theme with a version of the prisoner's dilemma due to cognitive scientist Douglas Hofstadter.

You have stolen the Hope Diamond. You make contact with an underworld figure named Mr. Big, who offers to buy it from you for a suitcase full of cash. You agree to make the exchange in this way: you leave the diamond in a wheat field in North Dakota, while Mr. Big leaves the cash in a wheat field in South Dakota. But after agreeing on this plan, you start thinking. If Mr. Big decides not to leave the cash, then I should definitely not leave the diamond. If Mr. Big does leave the cash, then I should also keep the diamond and re-sell it to someone else. Of course, you recognize that Mr. Big is having the same thoughts about not leaving the money. The result is likely to be that the market transaction will not happen in this way.

The example is intentionally theatrical. But it raises a real problem that applies in many market transactions. How does a buyer enforce delivery of the desired good, with the appropriate quality? How does a seller assure getting paid in full? For many purchases, from getting your roof fixed to paying with a credit card for what is supposed to be "fresh" produce at a roadside stand, these issues come up in one way or another.

How can those who find themselves in a prisoner's dilemma setting get to cooperative outcomes? In the original example of the two prisoners, one mechanism is the "snitches get stitches" philosophy--that is, if you inform on your partner, you will pay a price. In the case of nuclear weapons or climate change, an international agreement using "trust but verify" principles might work. For investments in public goods, many societies use taxation to fund such projects (at least to some extent) so that free-riding on the contributions of others becomes difficult. In the case of market transactions, the reputation of the seller, along with more formal mechanisms like money-back policies and warranties, can offer reassurance to all parties. In some cases, like hiring lawyers or spending money on advertising, no method for assuring cooperation has fully emerged.

Given the power and widespread application of the prisoner's dilemma idea, is it fair to conclude that cooperation beats self-interest? That sweeping conclusion is much too facile. For example, if cooperation in some situations leads to better outcomes than narrow self-interest, shouldn't someone pursuing their self-interest agree to cooperate? And if pursuit of self-interest in some situations leads to better outcomes than cooperation, shouldn't we agree to cooperate by letting self-interest guide our actions in certain areas?

One can even apply a prisoner's dilemma approach to argue that cooperation may be bad for society. Indeed, this conclusion is obvious in the original prisoner's dilemma, where cooperation between the two prisoners means that they are less likely to be punished.

But as another example, consider the case of two profit-seeking oligopoly firms, which can keep profits and prices high if they can avoid competing and instead cooperate with each other. However, each firm reasons that if the other firm starts cutting prices, then it also needs to cut prices to keep market share. And if the other firm doesn't cut prices, then the first firm could gain market share by cutting prices. In this situation, both firms have a self-interested motive to cut prices and compete, but if the two companies cooperate they in effect create a monopoly for exploiting consumers. One way for antitrust policy to work is to make it much harder for big firms to cooperate with each other in keeping prices high, and instead let their self-interested incentive to compete take over.

It's of course easy to think of ways in which pressure for social "cooperation" can be used for undesirable purposes. Oppressive social forces often cooperate with each other and with government quite effectively. Within a given society, war can be viewed as an ultimate act of social cooperation. Government can enact regulations or collect tax revenues both for useful social purposes and also for the benefit of special interests, or for inefficient or corrupt reasons. Perhaps the pragmatic lesson here is that "competition" and "cooperation" need to be judged in context, and in "The Blurry Line Between Competition and Cooperation," I argue that the ideas have considerable overlap. I wrote there:

Instead, a concept of cooperation is actually embedded in the meaning of the word “compete.” According to the Oxford English Dictionary, “compete” derives from Latin, in which “com-” means “together” and “petĕre” has a variety of meanings that include “to fall upon, assail, aim at, make for, try to reach, strive after, sue for, solicit, ask, seek.” Based on this derivation, valid meanings of competition would be “to aim at together,” “to try to reach together,” and “to strive after together.”

Competition can come in many forms. The version of competition that economists typically invoke when discussing markets is not about wolves competing in a pen full of sheep; nor is it competition among weeds to choke a flowerbed. The market-based competition envisioned in economics is disciplined by rules and reputations, and those who break the rules through fraud, theft or other offenses are clearly outside the shared process of market competition.

The prisoner's dilemma game is a lasting contribution to how social scientists think about incentives and the context behind incentives, self-interest, group cooperation, commitment, and more. It's a true dilemma, in the sense that it doesn't have a "solution." As Poundstone writes:

Both Flood and Dresher say they initially hoped that someone at RAND would "resolve" the prisoner's dilemma. ... The theory would address the conflict between individual and collective rationality typified by the prisoner's dilemma. ... The solution never came. Flood and Dresher now believe that the prisoner's dilemma game will never be "solved," and almost all game theorists agree with them. ...

Social problems are never simple. Many political and military debates are so enmeshed in contingencies and uncertainties that it is possible to argue forever over the details. There is a sense that if only these ancillary issues were resolved, the dilemma would be slain and all would agree on the proper course to take. This is not necessarily so. In many cases the central dilemma is genuine and seemingly irresolvable. To the extent that a real social problem poses a prisoner's dilemma, it will be an agonizing choice even when all the side issues are settled. There will be no "right" answer, and reasonable minds will differ.

A version of this article first appeared on Conversable Economist.
