
Artificial intelligence (AI) has the potential to enhance the accessibility of information & communications technology (ICT).

It may be system software: operating systems such as Apple's iOS, Google's Android, Microsoft's Windows Phone, BlackBerry's BlackBerry 10, Samsung's/Linux Foundation's Tizen and Jolla's Sailfish OS, as well as macOS and GNU/Linux. It may also be computational science software, game engines, industrial automation, and software-as-a-service applications.

It may be web browsers such as Internet Explorer, browser-based operating systems such as Chrome OS and Firefox OS (for smartphones, tablet computers and smart TVs), cloud-based software, or specialized classes of operating systems, such as embedded and real-time systems.

Here is a heuristic rule: each complex problem in science and technology is solved by adding a new abstraction level.

ICT, together with computer science (computing, data storage, digital communication, deep neural networks, etc.), is no exception. The rule applies across the Internet stack, from computers to content: the OSI model and the Internet protocol suite, with the Domain Name System.

Internet Protocol Suite

OSI Model

1. Physical layer

2. Data link layer

3. Network layer

4. Transport layer

5. Session layer

6. Presentation layer

7. Application layer
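The layering idea in the OSI list above can be sketched in a few lines: each layer wraps whatever the layer above hands it, and the receiver unwraps in reverse order. This is only a toy illustration. The bracketed text headers and the function names `encapsulate` and `decapsulate` are assumptions made for this sketch; real protocols use binary headers, and the physical layer transmits bits rather than adding a header.

```python
# Layer names follow the OSI list above, ordered from top (7) to bottom (1).
OSI_LAYERS = [
    "application",
    "presentation",
    "session",
    "transport",
    "network",
    "data link",
    "physical",
]


def encapsulate(payload: str) -> str:
    """Wrap the payload in one illustrative header per layer, top layer first."""
    for layer in OSI_LAYERS:
        payload = f"[{layer}]{payload}"
    return payload


def decapsulate(frame: str) -> str:
    """Strip the headers in reverse order, as a receiving stack would."""
    for layer in reversed(OSI_LAYERS):
        header = f"[{layer}]"
        if not frame.startswith(header):
            raise ValueError(f"expected {header} header")
        frame = frame[len(header):]
    return frame


if __name__ == "__main__":
    frame = encapsulate("hello")
    print(frame)
    print(decapsulate(frame))
```

Each layer only needs to understand its own header, which is what lets a new abstraction level be added without rewriting the ones beneath it.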

This is how the World Wide Web Consortium (W3C) tried, and failed, to make Internet data machine-readable: by adding the data web, or Semantic Web, as an extension of the World Wide Web.

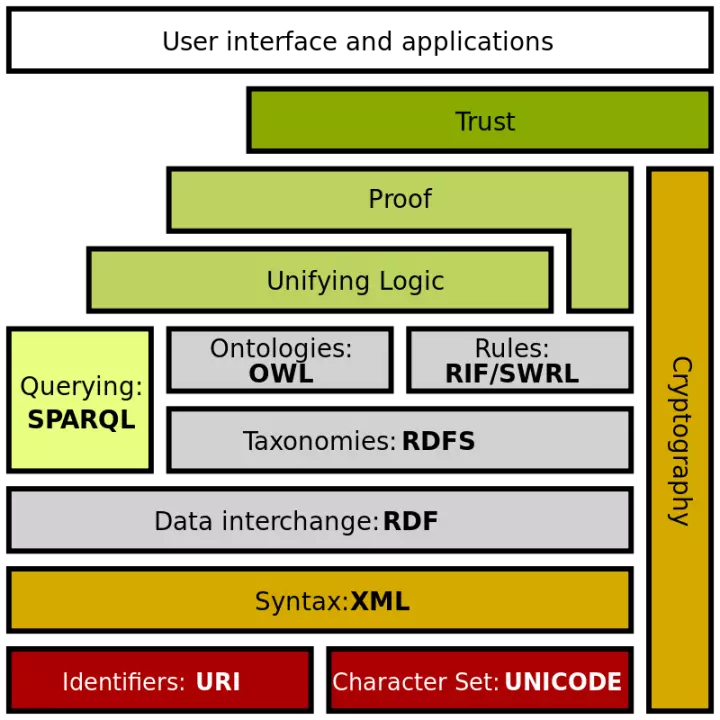

The Semantic Web Stack, also known as the Semantic Web Cake or Semantic Web Layer Cake, illustrates the architecture of the Semantic Web as a hierarchy of languages, where each layer exploits and uses the capabilities of the layers below. The Semantic Web aims at converting the current web, dominated by unstructured and semi-structured documents, into a "web of data".

To enable the encoding of semantics with the data, web data representation and formatting technologies such as the Resource Description Framework (RDF) and the Web Ontology Language (OWL) are used.

W3C tried but failed to introduce a sort of universal dataset code layer on top of the universal character set code layer, Unicode.
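RDF expresses every statement as a subject, predicate, object triple. The sketch below shows that data model in plain Python, without an RDF library. The `ex:` names, the sample data and the `match` helper are hypothetical and exist only for this illustration; `rdf:type` is the standard RDF property that links a resource to its class.

```python
from typing import List, Optional, Tuple

Triple = Tuple[str, str, str]

# Hypothetical example data; the "ex:" names are invented for this sketch.
TRIPLES: List[Triple] = [
    ("ex:Alice", "rdf:type", "ex:Person"),
    ("ex:Alice", "ex:worksFor", "ex:Acme"),
    ("ex:Acme", "rdf:type", "ex:Organization"),
]


def match(
    triples: List[Triple],
    subject: Optional[str] = None,
    predicate: Optional[str] = None,
    obj: Optional[str] = None,
) -> List[Triple]:
    """Return the triples that agree with every non-None field of the pattern."""
    return [
        (s, p, o)
        for (s, p, o) in triples
        if subject in (None, s) and predicate in (None, p) and obj in (None, o)
    ]


if __name__ == "__main__":
    # Which resources have a declared class?
    print(match(TRIPLES, predicate="rdf:type"))
```

Leaving a field as `None` turns it into a wildcard, which is roughly how a basic graph pattern query works over triples.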

Still, the whole idea to apply ontology [describing concepts, relationships between entities, and categories of things] was intuitively right.

Unicode is an information technology (IT) standard for the consistent encoding, representation, and handling of text expressed in most of the world's writing systems. The standard is maintained by the Unicode Consortium, and as of March 2020, there is a total of 143,859 characters, with Unicode 13.0 (these characters consist of 143,696 graphic characters and 163 format characters) covering 154 modern and historic scripts, as well as multiple symbol sets and emoji. The character repertoire of the Unicode Standard is synchronized with ISO/IEC 10646, and both are code-for-code identical.

The Universal Coded Character Set (UCS) is a standard set of characters defined by the International Standard ISO/IEC 10646, Information technology — Universal Coded Character Set (UCS) (plus amendments to that standard), which is the basis of many character encodings, improving as characters from previously unrepresented writing systems are added.
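To make the character-set layer concrete, the sketch below uses Python's standard `unicodedata` module to show the three things the standard ties together for one character: its code point, its official name, and one possible byte encoding (UTF-8). The helper name `describe` is an assumption made for this example.

```python
import unicodedata


def describe(char: str) -> dict:
    """Collect the code point, official name, and UTF-8 bytes of one character."""
    return {
        "code_point": f"U+{ord(char):04X}",
        "name": unicodedata.name(char),
        "utf8": char.encode("utf-8").hex(" "),
    }


if __name__ == "__main__":
    # Escapes are used so the source file itself stays plain ASCII.
    for ch in "A\u00e9\u20ac":
        print(describe(ch))
```

The code point identifies the abstract character, while the byte sequence depends on the encoding chosen; the same separation of identity from representation is what the character-set layer provides to everything built on top of it.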

To integrate AI into computers and system software means to create an abstraction level analogous to Unicode, the Universal Coded Data Set (UCDS), as AI Unidatacode or EIS UCDS.

The UCDS is a universal set of data entities, which is the basis of all intelligent/meaningful data encodings, as machine data types, improving as data units, sets, types and points are added.

The data universe represents the state of the world, environment or domain.

Data refers to the fact that some dynamic state of the world is represented or coded in a form suitable for use or processing.

Data are a set of values of entity variables, such as places, persons or objects.

A data type is formally defined as a class of data with its representation and a set of operators manipulating these representations, or a set of values which a variable can possess and a set of functions that one can apply to these values.

Common data types include:

Almost all programming languages explicitly include the notion of a data type, though they use different terminology. The data type defines the operations that can be performed on the data, the meaning of the data, and the way values of that type can be stored. It provides the set of values from which an expression (e.g. a variable or a function) may take its values.
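The definition above, a set of permitted values plus the operations on them, can be illustrated with a small user-defined type. The `Celsius` class below is a hypothetical example chosen for this sketch: its constructor restricts the set of values, and its methods are the only operations the type offers.

```python
from dataclasses import dataclass

ABSOLUTE_ZERO_C = -273.15


@dataclass(frozen=True)
class Celsius:
    """A hypothetical data type whose values are temperatures at or above absolute zero."""

    degrees: float

    def __post_init__(self) -> None:
        # Restrict the set of values a variable of this type can hold.
        if self.degrees < ABSOLUTE_ZERO_C:
            raise ValueError("temperature below absolute zero")

    def __add__(self, delta: float) -> "Celsius":
        # Operation on the type: shift by a temperature difference.
        return Celsius(self.degrees + delta)

    def to_fahrenheit(self) -> float:
        # Operation on the type: convert the representation to another scale.
        return self.degrees * 9 / 5 + 32


if __name__ == "__main__":
    print(Celsius(20) + 5)
    print(Celsius(100).to_fahrenheit())
```

The stored float is the representation, the range check fixes the value set, and the methods give the values their meaning as temperatures rather than bare numbers.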

Deep Intelligence and Learning = AI [real-world competency and common-sense knowledge and reasoning, domain models, causes, principles, rules and laws, data universe models and types] + UniDataCode [Unicode] + Machine DL [a hierarchical level of artificial neural networks, neurons, synapses, weights, biases, and functions, feature/representation learning, training data sets, unstructured and unlabeled, algorithms and models, model-driven reinforcement learning]

Given only the rules of chess, AlphaZero learned to play the game in 4 hours, while MuZero goes further and builds its own model from first principles.

For a dynamic and unpredictable world, you need the AI-Environment interaction model of real intelligence, where AI acts upon the environment (virtual or physical; digital or natural) to change it. AI then perceives the environment's reactions and uses them to choose a rational course of action.

Every deep AI system must not only have some goals, specific or general-purpose, but also interact efficiently with the world to qualify as Deep Intelligence and Learning.
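The act, perceive, choose cycle described above can be sketched as a simple loop. Everything in this example is an assumption made for illustration: the `Room` environment, its heating and cooling rates, and the rule in `choose_action` stand in for a far richer environment and decision procedure.

```python
from typing import List, Tuple


class Room:
    """Hypothetical environment: a room that reacts to heating and slowly loses heat."""

    def __init__(self, temperature: float) -> None:
        self.temperature = temperature

    def step(self, action: str) -> float:
        # The environment changes in response to the action, then cools a little.
        if action == "heat":
            self.temperature += 1.0
        self.temperature -= 0.25
        return self.temperature  # the percept handed back to the agent


def choose_action(percept: float, target: float) -> str:
    """Pick the action expected to move the percept toward the target."""
    return "heat" if percept < target else "idle"


def run(room: Room, target: float, steps: int) -> List[Tuple[str, float]]:
    """Alternate between acting on the environment and perceiving its reaction."""
    percept = room.temperature
    history = []
    for _ in range(steps):
        action = choose_action(percept, target)
        percept = room.step(action)
        history.append((action, percept))
    return history


if __name__ == "__main__":
    for action, temperature in run(Room(18.0), target=20.0, steps=6):
        print(action, temperature)
```

The agent never sees the room's internals; it only sees the percept that comes back after each action, which is the essence of the interaction model.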
