
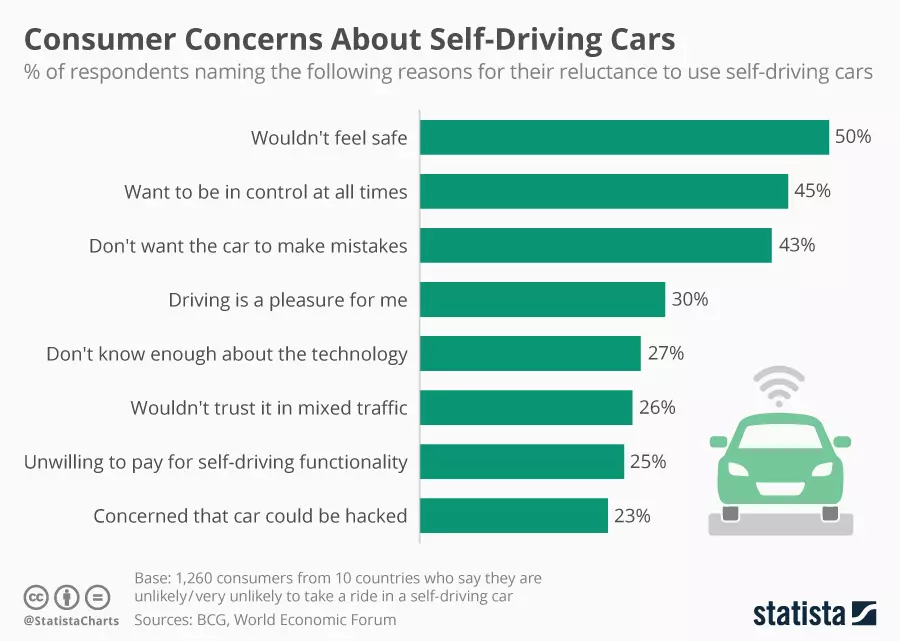

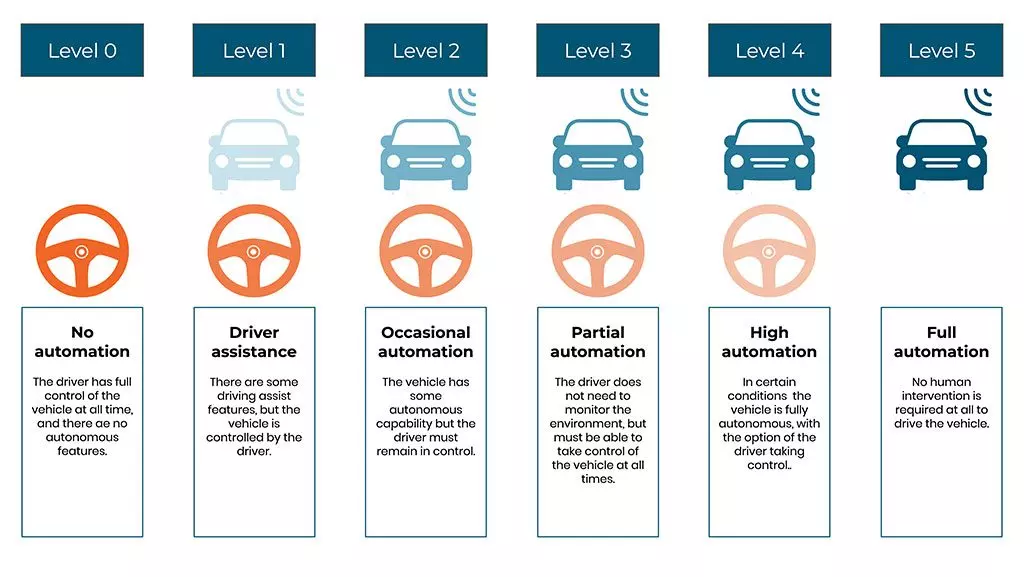

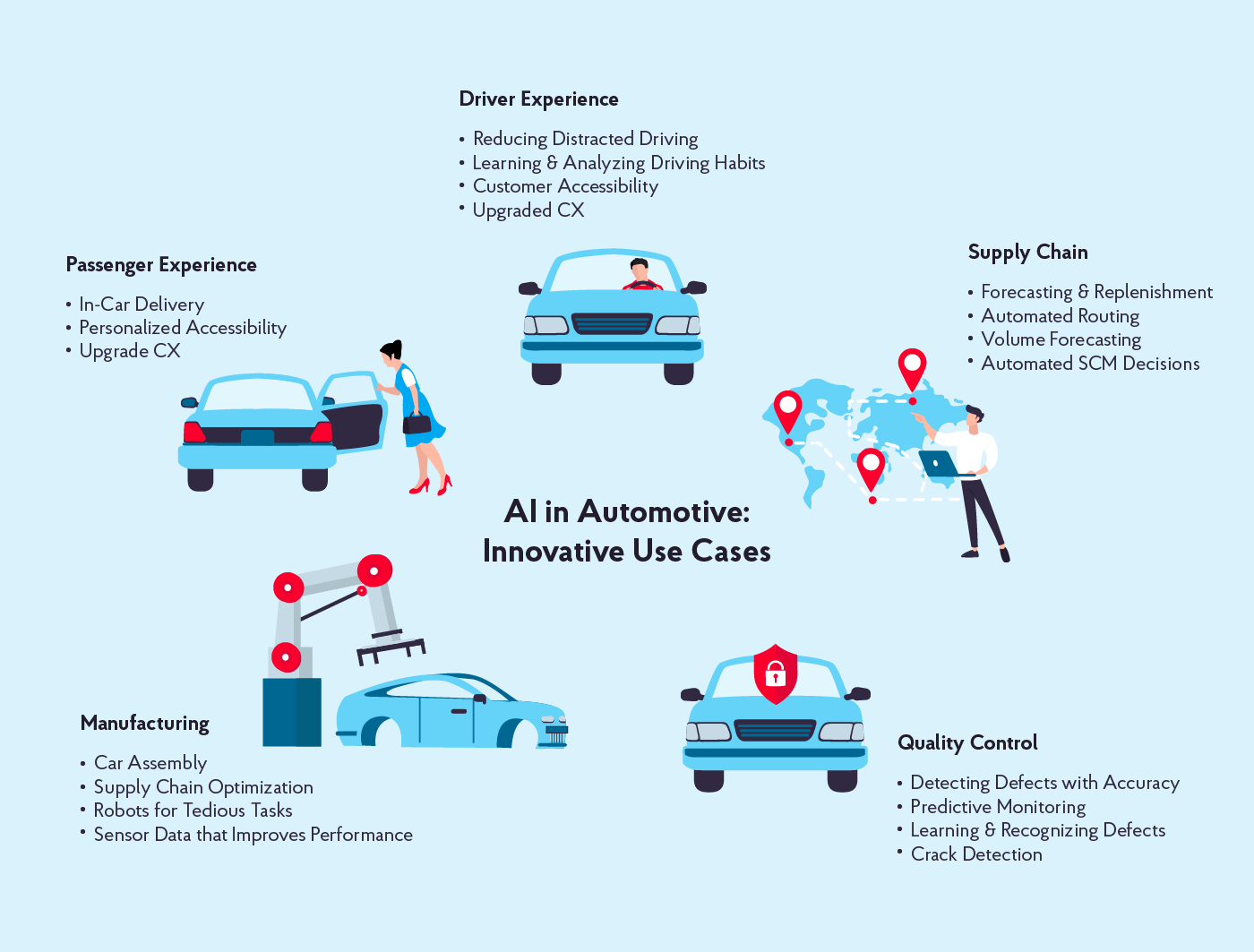

Self-driving cars and robotaxis are some of the most impressive advances in artificial intelligence. Though already operational, they sometimes make the news for errors and crashes. Autonomous vehicles are also subject to regulation, and there are ongoing debates about how best to regulate them. This article suggests that mental health could serve as a standard for autonomous vehicles, steering them toward a brain-like, or neuromorphic, form.

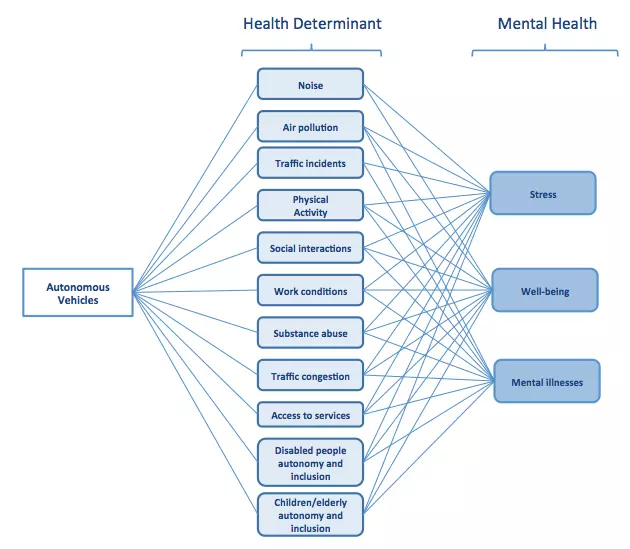

Self-driving vehicles affect important health determinants associated with urban environments, including traffic, social interactions, air pollution, traffic noise, and access to health services.

Many of these health determinants are associated with mental health.

What nerve cells and neurochemicals construct for interacting with the world are thoughts, or something in the form of thought. Seeing a device fall, and the fear, dread, or screaming that follows, involves neurons and brain centers whose activity can be observed with neuroimaging.

However, what they actually build, the equivalent of that experience, which emerges and is then relayed to brain centers, is different from what neuroimaging displays.

Sight is sensory. The input is integrated and relayed to its memory store, which connects to the group associated with loss and fear, before proceeding to the destination where fear is actually felt. This may coincide with the secretion of noradrenaline, followed by a reaction such as screaming.

Described another way, the thought version of that device goes to memory to establish what it means for the device to fall and break. This happens as the store relays very rapidly to different groups, then proceeds to where fear is actually felt, followed by the reaction.

What matters is not just what neurons are doing in those processes, or which areas appear active under fMRI, but the construct that is used for that interaction and then relayed.

This process defines mental health in general. Though neurons are the building blocks, thoughts, or a form of thought, are the construct. It is the thought version of the external device that memory stores and that is used for decision-making.

Artificial intelligence has advanced through partial simulation of neural networks, with input, hidden, and output layers. Deep learning libraries have introduced many refinements, but limitations remain.
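As a rough illustration of the input, hidden, and output layers described above, the sketch below runs a forward pass through a tiny fully connected network in plain Python. The layer sizes, the ReLU and sigmoid activations, and the helper names (`dense`, `init_layer`, `forward`) are illustrative assumptions, not any particular library's API.

```python
import math
import random


def relu(x: float) -> float:
    # Rectified linear unit: passes positive values, zeroes out negatives.
    return max(0.0, x)


def sigmoid(x: float) -> float:
    # Squashes any real number into the open interval (0, 1).
    return 1.0 / (1.0 + math.exp(-x))


def dense(inputs, weights, biases, activation):
    """One fully connected layer: each output is activation(w . x + b)."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def init_layer(n_in, n_out, rng):
    # Hypothetical initialization: small uniform random weights, zero biases.
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def forward(x, hidden_layer, output_layer):
    # Input layer -> hidden layer (ReLU) -> output layer (sigmoid).
    h_weights, h_biases = hidden_layer
    o_weights, o_biases = output_layer
    h = dense(x, h_weights, h_biases, relu)
    return dense(h, o_weights, o_biases, sigmoid)


rng = random.Random(0)
hidden = init_layer(3, 4, rng)   # 3 inputs -> 4 hidden units
output = init_layer(4, 1, rng)   # 4 hidden units -> 1 output
print(forward([0.2, 0.5, 0.1], hidden, output))
```

Training would adjust the weights, for example by backpropagation; that step is omitted here to keep the focus on how data flows through the layers.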

There have been discussions about how poorly these networks simulate biological ones, with spiking neural networks offered as an alternative. But none of these approaches makes the construct of neurons a priority for safer, more ethical, explainable, and interpretable AI.
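For comparison, a spiking neural network replaces continuous activations with neurons that emit discrete spikes over time. The sketch below simulates a single leaky integrate-and-fire neuron, a common simplified spiking model, using Euler integration. The parameter values and the function name `simulate_lif` are assumptions chosen for illustration.

```python
def simulate_lif(currents, dt=1.0, tau=10.0, v_rest=0.0, v_reset=0.0,
                 v_thresh=1.0, resistance=1.0):
    """Simulate a leaky integrate-and-fire neuron.

    Returns (voltages, spike_times), where voltages[t] is the membrane
    potential after step t and spike_times lists the steps that fired.
    """
    v = v_rest
    voltages = []
    spike_times = []
    for t, i_in in enumerate(currents):
        # Euler step of tau * dv/dt = -(v - v_rest) + R * I:
        # the potential leaks toward v_rest while input current charges it.
        v += (dt / tau) * (-(v - v_rest) + resistance * i_in)
        if v >= v_thresh:
            # Threshold crossed: record a spike and reset the potential.
            spike_times.append(t)
            v = v_reset
        voltages.append(v)
    return voltages, spike_times


_, spikes = simulate_lif([2.0] * 20)
print(spikes)
```

With a constant input of 2.0 and the default parameters, the potential crosses threshold every seven steps, so the neuron spikes at steps 6 and 13 within the first 20 steps. An input of 0.5 settles below threshold and never spikes, which is the kind of all-or-nothing timing behavior that distinguishes spiking models from the layered network sketched earlier.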

Self-driving cars need a separate construct for data, one responsible for how they feel fear and other emotions, or for how they perceive and understand the world, beyond pattern recognition.
