Algorithm bias may develop when AI is used to tackle global problems, resulting in unanticipated, wrong, and damaging outcomes.

Artificial intelligence (AI) bias is a problem that is becoming more prevalent as software becomes more integrated into our daily lives.

AI can sometimes exhibit the same prejudices as humans, and in some circumstances it can be even worse. Skewed output from a machine learning algorithm may stem from biases in the training data or from prejudiced assumptions made while the algorithm was being built. Our society's beliefs and standards carry blind spots and built-in expectations that shape our thinking. As a result, algorithmic AI bias is heavily influenced by societal bias.

People are shaped by their upbringing, experiences, and society. They internalize certain beliefs about the world around them. It's the same with AI. It doesn't exist in a vacuum; it's made up of algorithms created and refined by those same people. It tends to "think", or run its algorithms, in the manner it has been taught.

Whether conscious or unconscious, human prejudice that creeps into AI algorithms during their development is the root cause of AI bias. AI solutions adopt human biases and prejudices and then scale them.

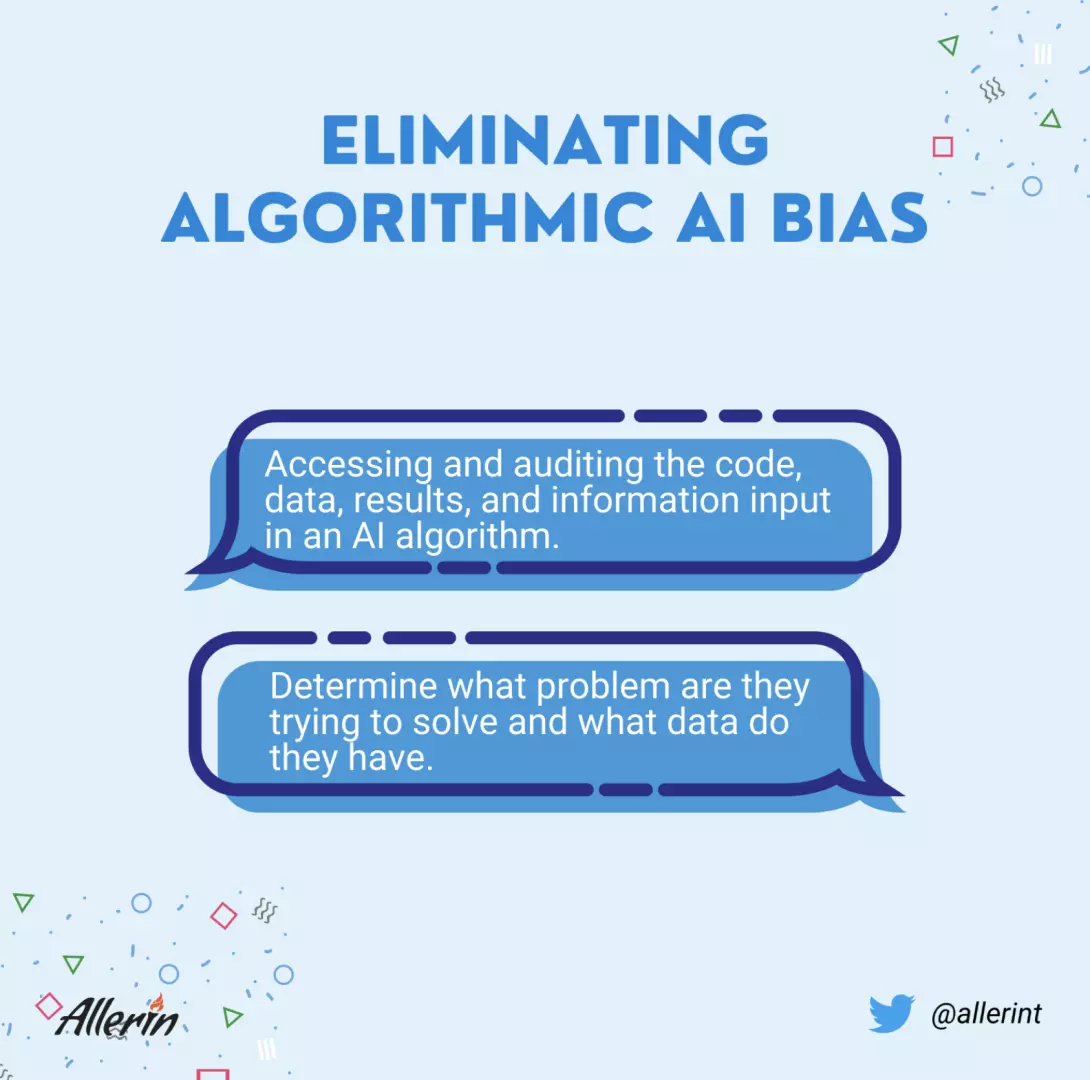

In an audit, all the information and data collected by the AI algorithm are accessed and examined to see how the algorithm has performed, what it has output, and how it computed its results.

In other words, what problem are they trying to solve, and what data do they have? Audits with access to an algorithm’s code can assess whether the algorithm’s training data is biased and create hypothetical scenarios to examine the impact on different populations.
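As a rough illustration of the two audit steps described above, the sketch below first compares outcome rates across groups in a training set and then runs a hypothetical scenario by swapping only the group attribute and checking whether the decision changes. Everything in it is an assumption made for illustration: the field names (`group`, `score`, `hired`), the toy data, the `toy_model` function, and the choice of a min/max rate ratio as the summary number. A real audit would use the audited system's own data and model.

```python
from collections import defaultdict
from typing import Any, Callable, Dict, Iterable, List

Record = Dict[str, Any]


def positive_rate_by_group(
    records: Iterable[Record], group_key: str, label_key: str
) -> Dict[Any, float]:
    """Return the share of positive labels within each group."""
    totals: Dict[Any, int] = defaultdict(int)
    positives: Dict[Any, int] = defaultdict(int)
    for record in records:
        group = record[group_key]
        totals[group] += 1
        if record[label_key]:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates: Dict[Any, float]) -> float:
    """Lowest group rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a positive outcome
    return min(rates.values()) / highest


def counterfactual_flip_rate(
    model: Callable[[Record], bool],
    records: Iterable[Record],
    group_key: str,
    groups: List[Any],
) -> float:
    """Fraction of records whose decision changes when only the group is swapped."""
    records = list(records)
    if not records:
        return 0.0
    changed = 0
    for record in records:
        # Score the same record once per group, changing nothing else.
        decisions = {model({**record, group_key: g}) for g in groups}
        if len(decisions) > 1:
            changed += 1
    return changed / len(records)


# Hypothetical historical hiring data (illustrative only).
training_data = [
    {"group": "A", "score": 7, "hired": True},
    {"group": "A", "score": 5, "hired": True},
    {"group": "A", "score": 3, "hired": False},
    {"group": "A", "score": 8, "hired": True},
    {"group": "B", "score": 7, "hired": True},
    {"group": "B", "score": 5, "hired": False},
    {"group": "B", "score": 3, "hired": False},
    {"group": "B", "score": 8, "hired": False},
]


def toy_model(record: Record) -> bool:
    """Hypothetical model that has learned a stricter threshold for group B."""
    threshold = 5 if record["group"] == "A" else 8
    return record["score"] >= threshold


if __name__ == "__main__":
    rates = positive_rate_by_group(training_data, "group", "hired")
    print("positive rate by group:", rates)
    print("disparate impact ratio:", round(disparate_impact_ratio(rates), 2))
    print(
        "counterfactual flip rate:",
        counterfactual_flip_rate(toy_model, training_data, "group", ["A", "B"]),
    )
```

A ratio well below 1.0 or a non-zero flip rate does not prove discrimination on its own, but either one flags a place where the auditor should look more closely at the data and the model.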

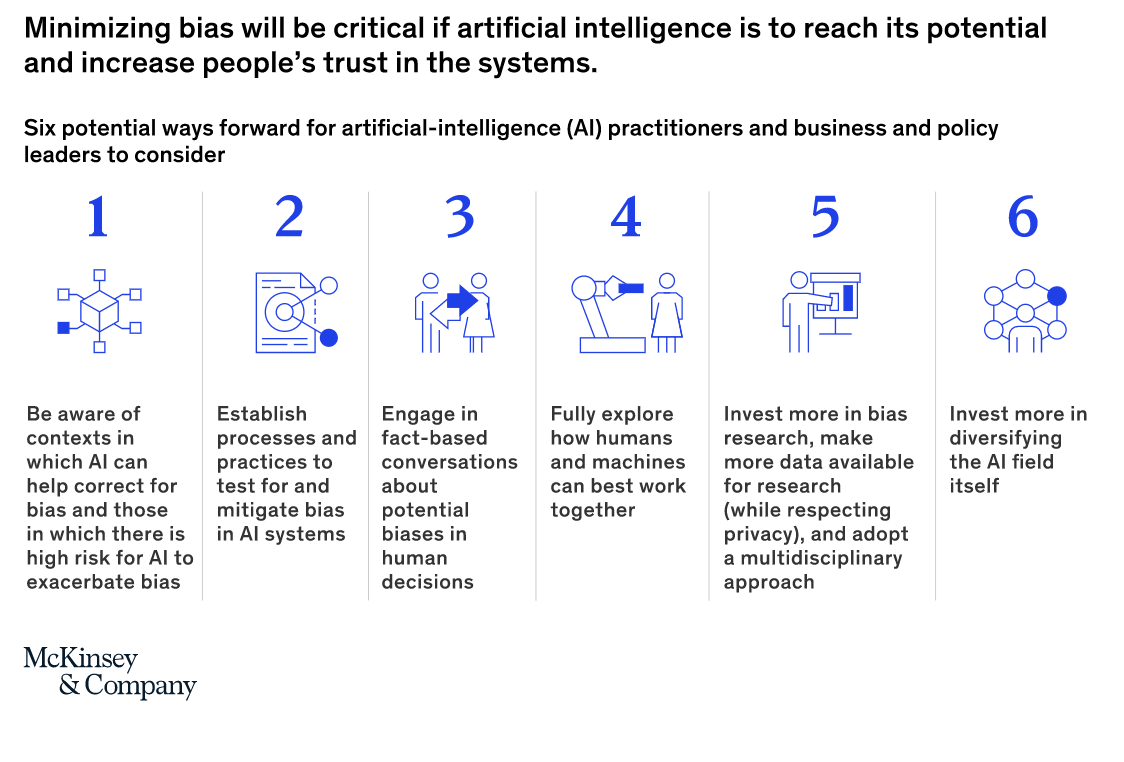

The algorithms and data may appear neutral, yet their output reinforces societal biases. Artificial intelligence (AI) and machine learning have advanced rapidly, producing powerful algorithms that have the potential to enhance people's lives on a massive scale. Algorithms, particularly machine learning algorithms, are increasingly being used to supplement or replace human decision-making in ways that affect people's lives, interests, opportunities, and rights. The ethical impact of AI has been studied extensively in recent years, amid public controversies over lack of transparency, data exploitation, and the perpetuation of systemic racism. One well-known example of AI bias is Twitter's photo cropping algorithm.

An AI system is only as good as the quality of its input data. If you can clean your training dataset of conscious and unconscious assumptions about race, gender, and other ideological notions, you can design an AI system that makes unbiased, data-driven decisions. However, there are innumerable human biases. As a result, neither a perfectly unbiased human mind nor a perfectly unbiased AI system may be attainable.

AI can help us avoid discrimination in hiring, operations, customer service, and wider business and social networks, and it makes excellent commercial sense to do so. Artificial intelligence can help us avoid harmful human bias, both intentional and unintentional. At the same time, it is now evident that, if left unchecked, AI algorithms integrated into digital and social technologies can encode societal prejudices, speed the spread of rumors and disinformation, amplify echo chambers of public opinion, hijack our attention, and even affect our mental well-being. AI bias can be avoided to a certain degree, but only if we teach the system to play fair and continuously challenge its findings.

Naveen is the Founder and CEO of Allerin, a software solutions provider that delivers innovative and agile solutions designed to automate, inspire and impress. He is a seasoned professional with more than 20 years of experience, including extensive work customizing open source products to optimize the cost of large-scale IT deployments. He is currently working on Internet of Things solutions with Big Data Analytics. Naveen completed his programming qualifications at various Indian institutes.
