Comments (3)

Daniel Johnson

They will get replaced...

Leanne Sophie

It's a controversial topic. Can't give my opinion.

Steve Haworth

You are not afraid to make a point. That's what I admire the most.

Every so often as a writer, you come to the realization that the article that you're working on is going to piss a lot of people off, including friends and colleagues.

This is likely going to be one of those articles. I will say upfront that I'm not advocating that we do fire ontologists (for my own self-preservation if nothing else 😁), but I think we do need to seriously ask what exactly complex ontologies brings to the table, and whether it is time to challenge some long-held assumptions.

I've been involved in standards work for a long time. My first book, in fact, was about XML, and it came out just about the same time as the XML specification itself did in 1998. I have, over the years, been involved in a lot of standards, and have seen and advised on others. I have advocated for standards in XML, XSD, SVG, HTML, RDF and a raft of specifications such as NIEM, XBRL, LEX and others. You'd think, with thirty odd years in this space, I'd be Mr. Standards Guy. Yet, I found myself increasingly with the opinion that formalized standards are increasingly going the way of the dodo bird, and for good reason: They don't work well in one of their primary use cases: capturing the evolution of language.

Let me give two specific cases in point: FIBO and Open Annotation. FIBO is a great standard. For a while, FIBO was the next big thing in banking and finance, a semantic specification for financial transactions that would revolutionize the industry. A couple of the people who invented FIBO and shepherded it through the various standards processes are people I correspond with regularly on LinkedIn (or at least likely will until they read this article). Do you want to know the number of banks that currently use FIBO in a production setting? So would I. There may be some, but if there are, it's news to me.

How about Open Annotation? Cool idea. Create a standard for annotating content on the web. I published several articles on Open Annotation over the years, but again, to my knowledge, there aren't a lot of open annotation servers out there. There's a few, but they generally don't talk much with one another, which was, when you get right down to it, one of the main reasons for making it a standard in the first place.

There are a few semantic standards that have been wildly successful. I'd point to Schema.org, as one, but it's become a standard for a surprisingly mundane reason: SEO. Everyone wants to better control their page rankings on Google and Bing and whoever else is wanting to influence search engines. Hundreds of thousands (perhaps even millions) of Javascript programmers are getting their teeth sharpened in the SEO field, because there's money there, and schema.org is a fairly easy to use ontology that doesn't necessarily require a lot of sophistication.

It is, of course, also possible to extend, but in general such extensions will be meaningless to a schema.org parser. That is to say, your organization may actually create schema.org extensions for internal use, but don't expect Google to understand those extensions.

And therein lies one of the major problems with semantics as an interchange mechanism. The moment that you can combine two schemas, you have created a new language that is only partially understandable to a person (or more likely a computer system) unless the recipients also follows the same rules for extensions. You can combine two ontologies readily within a knowledge graph (it's the union of all triples within that graph) but the moment that you introduce a new property between instances of one class in one system instances of another class in the other system, what you have is now a separate ontology, an extension of one that recognizes the existence of the other.

Mind you, this is a feature, not a bug. There are design patterns that you can follow to facilitate making this shim between two distinct ontologies consistent, but the reality is that the ontology that you have created by extending on ontology into another exists because an ontology is very much a domain specific language, and it is rare, if not impossible, for any two specific domains to have more than loosely overlapping concepts, no matter how close they appear on the surface.

Two publishers, for instance, will very seldom be able to use the same ontology without each adapting to their specific requirements. Perhaps the best that can happen in this case is that there is an agreement about a subset that is consistent between the two to allow for interchange, but expecting a standards group to spend years hashing out whether a given object is a book or a publication will almost certainly end up with specifications that will be used only periodically.

There are a number of very smart people, and very smart organizations, that have created complex "upper ontologies" meant to universally describe everything. Not surprisingly, there's usually pretty consistent agreement on concrete things, but abstractions get fuzzy quickly. It's important to remember that these ontologies are only systems for classification. A library may use Library of College designations, or may use Dewey Decimal Systems, and each of these will correlate a physical position in a library with the location of a book. The ordering of the books will change between the two, but the set of books so ordered will not.

The notion of classification as a form of addressing entities ultimately is what classification is intended to do. When you create a directory structure on your laptop, the directories themselves are simply mechanisms for classification. That classification may be very crude - my largest directory is /home/kurtcagle/Downloads for instance, because, despite being an ontologist I am also singularly lazy. but nonetheless when qualified with a unique identifier for my machine and access to that device externally, there is a rough one-to-one correspondance between a classification and an address. In essence, a file system is an ontology, albeit a pretty sloppy, haphazard one.

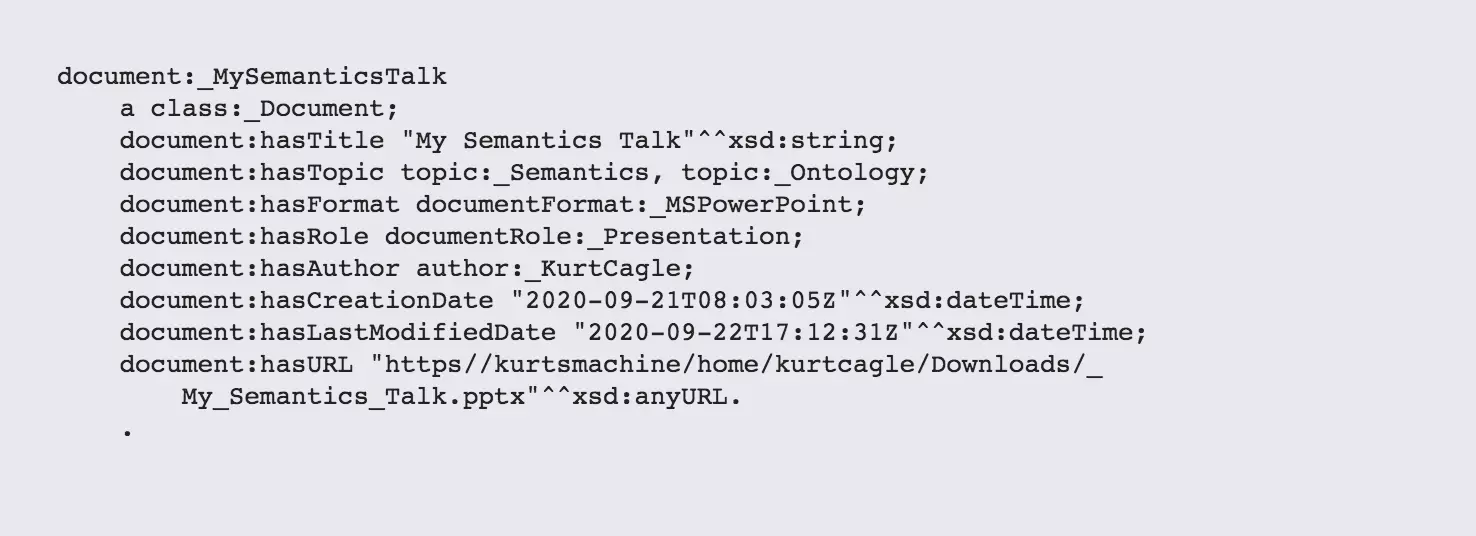

This can of course be turned on its head. There are a number of good reasons for creating file systems that are not strictly hierarchical. If I write a presentation on semantics, as an example, I can tag that document as being about semantics , but I can also indicate that the document is structured as a presentation, and that it has Kurt Cagle as an author:

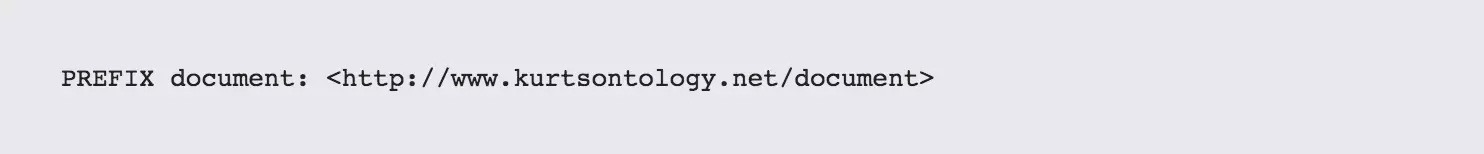

Note that if you have the key (such as document:_MySemanticsTalk) and a resolver for the namespace:

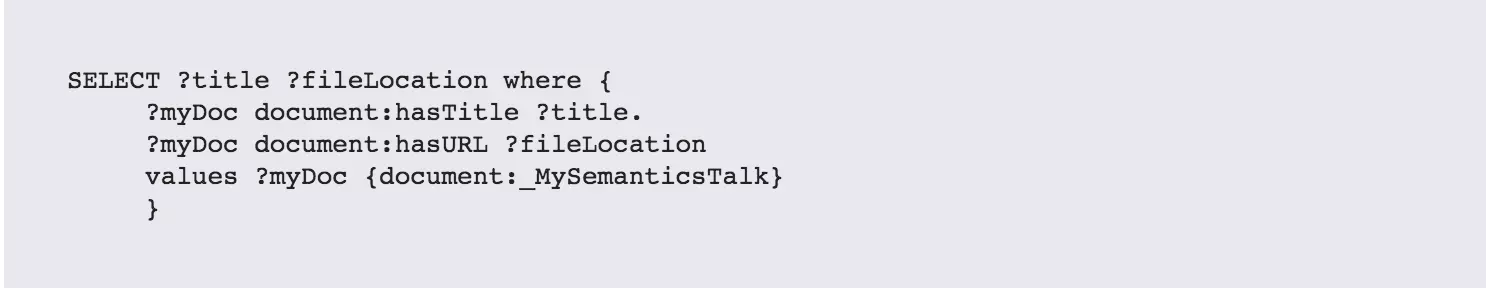

then retrieving the document becomes a trivial operation (and one that's actually divorced from a directory path):

The URI for the talk is not the same as the URL for its location (it could be, but it's surprising how difficult it is to make that happen).

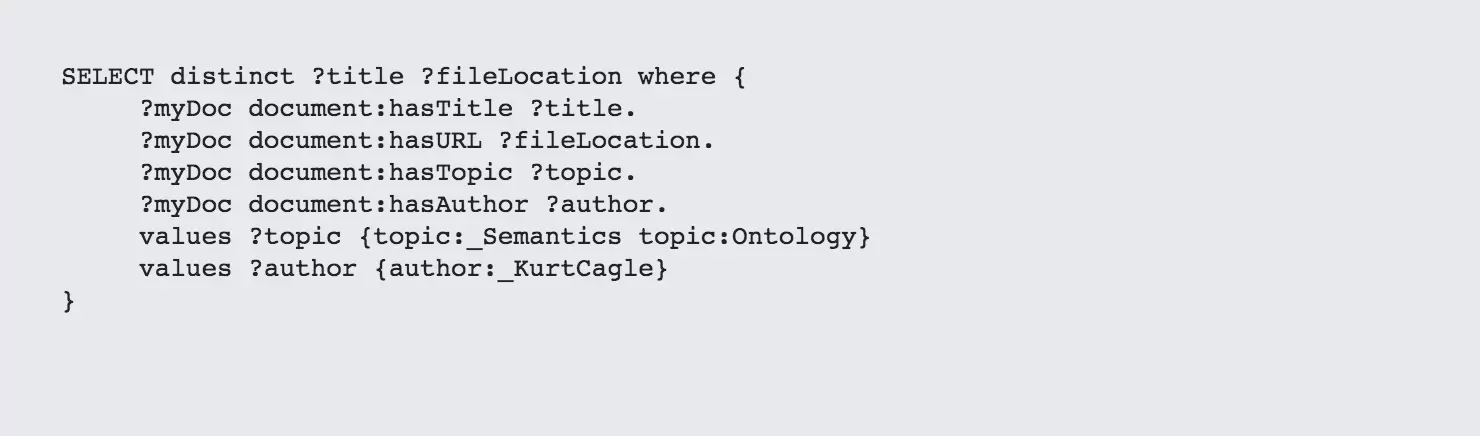

At the same time, I can also infer the location by constraining some possible values:

This above SPARQL query returns (I believe) those publications for which either Semantics or Ontology is a topic and author is Kurt Cagle. You still need to resolve which of the titles are the ones that you're looking for.

If this looks overly complicated, it should be pointed out that this can also be wrapped into a web services URL:

or turned into a GraphQL query, or any of a number of similar queries. What's worth noting here is that the result of such queries are not individual items but sets (though they could be sets that have only one member, which is a useful distinction to keep in mind). It's also a short step from this to:

It's even quite feasible to see everything as simply a live and growing knowledge metabase:

This would add the above knowledge graph (which could be a file system) to a catalog of web endpoints, file systems, and IoT devices that would be queried.

That's ultimately what a knowledge base should do. So why is it not like that today? I think that this comes back down to the issues of attempting to overengineer ontologies to be inference friendly. RDFS+ inferencing can get you a long way, SHACL can get you even farther, in most cases, but we also need to assume that simply because Protege (which was a fine tool for inferential modeling at a time when inferential modeling as the primary way of getting access to triple stores) prescribes a-priori modeling in a certain way that it is in fact the best approach towards modeling, when modeling a-priori is arguably a terribly way to build a knowledge graph that will need to grow as more context is known.

Indeed, I think this is a fundamental point:

Context must always assume that you don't know everything, only that you know enough to talk about the world right now in certain terms. The more is known, the better your context.

So, I think there are still many applications for which a-priori modeling may be appropriate, but if we become too fixated upon looking at the information space in closed-world terms, we'll never really make proper use of knowledge graphs. Knowledge graphs are living, breathing ontologies - they will grow or die as our understanding of the world continues, and because of this, we need to start thinking in more dynamic terms, or the field itself will stagnate before it has even really begun to take off.

They will also shift the focus of our thinking away from the idea that you can know APIs ahead of time, and move us towards a world where APIs reflect the state of the world at the time of query, and that such discovery mechanisms, while more difficult to manually code for, actually work great in systems where the deployment of machine learning patterns and neural networks are already threatening the primacy of fixed APIs in favor of discovery and negotiation.

I will have more to say about this last point in an upcoming post. I also have some VERY exciting news to report, next time on The Cagle Report.

They will get replaced...

It's a controversial topic. Can't give my opinion.

You are not afraid to make a point. That's what I admire the most.

Kurt is the founder and CEO of Semantical, LLC, a consulting company focusing on enterprise data hubs, metadata management, semantics, and NoSQL systems. He has developed large scale information and data governance strategies for Fortune 500 companies in the health care/insurance sector, media and entertainment, publishing, financial services and logistics arenas, as well as for government agencies in the defense and insurance sector (including the Affordable Care Act). Kurt holds a Bachelor of Science in Physics from the University of Illinois at Urbana–Champaign.

Leave your comments

Post comment as a guest