Comments (5)

Geoff Holmes

Very sensible and clear explanation

Roy Tappenden

Fabulous! Clear & concise.

Aditya Gowda

Got really inspired.. Thanks !

Justin Alexander

Amazing read

Sam Wilkinson

Thanks, that was a good refresh.

I grew up as a programmer in the 1980s, and as a consequence, came of age with the debate about object-oriented programming (colloquially known as OOP) raging around me.

In many respects, I suppose I could be considered the very tail end of the procedural generation: working with imported libraries that added functions when I needed them. There was a limited notion of namespaces back then: they made it easier to keep library functions straight and ensure that my print() function didn't step on somebody else's print() function.

Responsive GUIs were also well into the future - heck, to be a programmer in the early 80s meant that you had to understand how to write Zilog Z80 or 6502 machine language code, and having a code editor that actually allowed you to compile code was cutting edge. I worked with BASIC, Pascal, C, and occasionally Lisp and Perl, and discovered early on that regular expressions were your friend. I also developed, during that time, an appreciation for functional programming.

To be honest, I didn't realize at the time that I was learning functional programming, because, for the most part, there was no real need for the distinction. In functional programming, you pull information from a pipe, you do something to it, then you pass the result off to another pipe. Sometimes those pipes were "files". Sometimes they were other processes that did more things to the data that you passed out from your own particular function.

If you did it right, pushing the data into a particular black box would cause the screen to light up with words, or a box, or a little gremlin icon, provided you wrote the data to the appropriate buffer. Sometimes it even caused notes of various durations to play. The key to all of this, however, was that when you passed the same information into the appropriate box, it would save the stream or print it or draw it or play it in exactly the same way. That's what functional programming is.
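
The pipe-in, pipe-out idea can be sketched in a few lines of JavaScript. This is only an illustration: the stage names and the `pipe` helper are hypothetical, not taken from any particular library.

```javascript
// Each stage is a pure function: its output depends only on its input.
const toWords = (text) => text.trim().split(/\s+/);
const toUpper = (words) => words.map((word) => word.toUpperCase());
const joinWithDash = (words) => words.join("-");

// pipe() is a hypothetical helper that feeds each stage's result into the next.
const pipe = (...stages) => (input) =>
  stages.reduce((value, stage) => stage(value), input);

const shout = pipe(toWords, toUpper, joinWithDash);

console.log(shout("hello functional world")); // HELLO-FUNCTIONAL-WORLD
console.log(shout("hello functional world")); // same input, same output
```

Because no stage keeps any state of its own, calling `shout` twice with the same text cannot produce two different answers.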

In the mid-1980s, however, there was a new paradigm making its way into computer programming, and it was what all the cool (read: higher-paid) code kids were doing. This paradigm was object-oriented programming, and it manifested itself in Bjarne Stroustrup's C++ language. I would learn somewhat later that Smalltalk preceded C++ by a few years, and had I known about Smalltalk I probably wouldn't have bashed myself repeatedly on the head trying to figure out why C++ seemed so ... illogical.

Stroustrup's C++ rested on three pillars, ones that most programmers could recite in their sleep: encapsulation, inheritance, and polymorphism. Encapsulation was perhaps the most significant of the three, in that it made the assertion that an object was a dynamic thing that maintained its own internal state, and that what the object exposed as state was not necessarily reflective of what was stored internally. The other two properties, inheritance and polymorphism, rested on the conjecture that more complex interfaces could be created by inheriting from simpler ones, and that different objects could express different but similar functionality through the same "method".
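
A compact way to see the three pillars side by side is the sketch below. It uses JavaScript classes rather than C++, and the `Shape`, `Circle`, and `Square` names are invented purely for illustration.

```javascript
class Shape {
  #name; // encapsulation: private state, invisible from outside the class

  constructor(name) {
    this.#name = name;
  }

  // What the object exposes need not mirror what it stores internally.
  get label() {
    return `shape:${this.#name}`;
  }

  area() {
    return 0;
  }
}

// Inheritance: Circle and Square build on the simpler Shape interface.
class Circle extends Shape {
  #radius;
  constructor(radius) {
    super("circle");
    this.#radius = radius;
  }
  area() {
    return Math.PI * this.#radius ** 2;
  }
}

class Square extends Shape {
  #side;
  constructor(side) {
    super("square");
    this.#side = side;
  }
  area() {
    return this.#side ** 2;
  }
}

// Polymorphism: the same area() call does different work per object.
const shapes = [new Circle(1), new Square(2)];
const areas = shapes.map((shape) => shape.area());
console.log(areas[1]); // 4
```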

The C++ language was basically an object-oriented overlay on the original C functional streams, and arguably it contributed significantly to the evolution of the modern user operating system, as it provided a moderately intuitive metaphor for the construction of windowed applications while creating relevant separations of concerns. The biggest problem with C++ came down to the fact that it was still largely pointer-based, and as such one of the central problems that it had to deal with was keeping track of whether or not an object still existed, a job left to the programmer because the language had no built-in garbage collection.

The introduction of the Java language in 1995 took care of that particular conundrum, in essence completing the transition from functional to object-oriented code by removing most references to pointers to memory blocks. An object in Java was thus considered an abstraction, and the developer no longer had to worry about tracking reference handles or explicitly deleting objects that were no longer needed.

Over time, though, OOP began to run into problems. For starters, inheritance, while good in theory, turned out not to really be the way that people composed objects. Instead, more and more programmers found that the best way to create a new type of class was to compose two existing classes, and that the deeper the inheritance stack, the less likely that the resulting class would actually be all that useful.

You can see that in the notion of a Vehicle class, which can be thought of as a machine capable of progressive movement, steered by a person. A car, a train, and a ship are all vehicles. However, their points of difference (a train is confined to a railway, a ship travels on the water, and a car requires the use of a road) each involve specialized functions that are not found in the other types of classes. The notion of functions that invoke some form of transformation (such as a move() function) is also limited. Ultimately, the utility of that Vehicle class as a programmatic abstraction is very low.
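
One way to picture the compositional alternative is to build each vehicle from small capability bundles instead of a shared base class. The sketch below is a hypothetical illustration; `canRoll`, `canFloat`, and the factory functions are invented names.

```javascript
// Hypothetical capability bundles: each returns only the behaviour it adds.
const canRoll = (name) => ({ drive: () => `${name} drives on a road` });
const canFloat = (name) => ({ sail: () => `${name} sails on water` });

// New kinds of vehicle are composed from the capabilities they actually need.
const makeCar = (name) => ({ name, ...canRoll(name) });
const makeShip = (name) => ({ name, ...canFloat(name) });
const makeAmphibious = (name) => ({ name, ...canRoll(name), ...canFloat(name) });

const duck = makeAmphibious("Duck");
console.log(duck.drive()); // Duck drives on a road
console.log(duck.sail()); // Duck sails on water
console.log("sail" in makeCar("Sedan")); // false
```

A car built this way carries no sailing behaviour at all, which is the opposite of what tends to happen at the bottom of a deep inheritance stack.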

One additional artifact of such inheritance is that a given class ends up carrying around a lot of otherwise unused baggage. Some of this can be abstracted out by compilers, but the very fact that you have to do that abstraction process hints that inheritance is not really all that beneficial. The same thing can be said for polymorphism, which is, in essence, the attempt to create actionable metaphors. In this case, a property called speed may seem obvious for a vehicle, but even here the metadata of that property (e.g., whether the speed is in miles per hour, knots, or a percentage of the speed of light) may vary.

Finally, the most egregious problem that OOP has is that by encapsulating information (in effect hiding it), you end up with situations where the same query against the same object at different times may in fact return different values. In this case, OOP encapsulation opens up the potential for side effects. That term has multiple meanings, so it's worth differentiating here.

If I write a stream of data to a given buffer in memory that represents a screen visualization, then while there is a physical manifestation (a side effect) that takes place, the same manifestation will occur regardless. On the other hand, because the encapsulated state is hidden, there is no way to know whether sending a signal and then awaiting a response will return the same value or not, even via the same interface. The state in such an object is said to be mutable. In a functional system, on the other hand, the state is immutable. The same input will always return the same output.
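
The difference can be made concrete with a small sketch. The `Thermostat` class and the `nudged` function are hypothetical names chosen for illustration.

```javascript
// Mutable, encapsulated state: the answer depends on hidden history.
class Thermostat {
  #target = 20;
  nudge() {
    this.#target += 1;
  }
  reading() {
    return this.#target;
  }
}

const thermostat = new Thermostat();
const firstReading = thermostat.reading();
thermostat.nudge(); // imagine this call happening elsewhere in the program
const secondReading = thermostat.reading();
console.log(firstReading === secondReading); // false

// Immutable alternative: a pure function returns a new value instead.
const nudged = (target) => target + 1;
console.log(nudged(20) === nudged(20)); // true
```

The same `reading()` call returns two different values, and nothing at the call site reveals why.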

Mutable state errors are incredibly difficult to find, and consequently to debug. They may be due to race conditions or similar asynchronous updates, and they also may be emergent - existing only when certain internal conditions align across multiple objects. This is part of the reason that more and more programmers are moving to functional programming paradigms where only immutable transmission of information is performed.

The classic example of this is the sort method of an array. There are a number of algorithms that will sort arrays in place, working on the assumption that it takes up less memory to do the requisite swapping for sorts. Unfortunately, once sorted, the previous ordering is lost, and querying against a position in an array will not return the same value. Immutable arrays, on the other hand, eat the memory cost to create a new array, while keeping the old one unmodified. Because these are distinct objects, if only in sequencing order, the original array is preserved for additional processing.

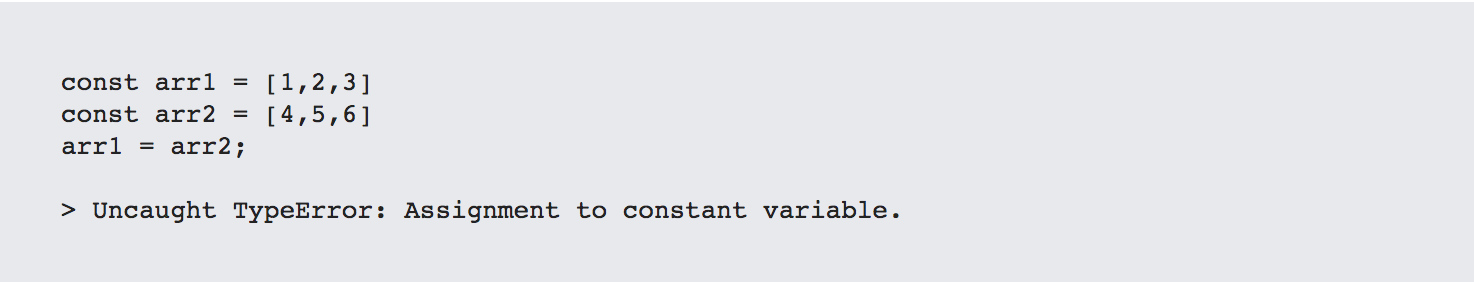
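
A short JavaScript sketch of the two sorting styles described above follows; the array contents are arbitrary sample values.

```javascript
// In-place sort: Array.prototype.sort mutates the array it is called on.
const inPlace = [3, 1, 2];
inPlace.sort((a, b) => a - b);
console.log(inPlace[0]); // 1 (position 0 used to hold 3)

// Copy-then-sort: pay for a second array and keep the original untouched.
const original = [3, 1, 2];
const sortedCopy = [...original].sort((a, b) => a - b);
console.log(original[0]); // 3
console.log(sortedCopy[0]); // 1
```

Runtimes that implement ECMAScript 2023 also provide `Array.prototype.toSorted`, which returns a sorted copy directly.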

Modern JavaScript is actually pretty good at enforcing immutability. The var keyword, belonging to pre-ECMAScript 2015 JavaScript, permits redefinition, but the const keyword introduced in ECMAScript 2015 does not. Thus, the following sequence of expressions will throw an error:
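
A minimal sketch of such a sequence, using a hypothetical variable name, might look like the following. The try/catch is there only so the error can be inspected rather than halting the script.

```javascript
const total = 10;

let thrown = null;
try {
  total = 20; // reassigning a const binding is not allowed
} catch (err) {
  thrown = err;
}

console.log(thrown instanceof TypeError); // true
console.log(total); // 10
```

Note that `const` fixes the binding rather than the contents of whatever it refers to; the properties of an object held in a `const` can still be changed unless the object is also frozen with `Object.freeze`.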

This immutability may take up more memory, but it makes code checking far easier because there are no dependencies upon hidden variables, resulting in far less time spent debugging. This is one reason among many that immutable programming ... functional programming ... is making a comeback when dealing with larger-scale distributed environments.

The same philosophy holds true when discussing the distinction between application-specific and enterprise-specific databases. Object-oriented programming made a basic assumption early on: global state is bad. This was an assumption that was driven partially by necessity - the amount of available RAM for processing in 1985 was a near infinitesimal fraction of what exists today, and the protection of data assets (a form of referential integrity) was paramount.

Increasingly, however, there is a distinction between operational data (that data which is necessary to keep components functional) and global data (that data which describes an application context, such as an enterprise dataset). Global data frequently is stored within a centralized (or at least reasonably federated) data repository, and at any given point in time, only a small portion of the overall data-space is sent to the client. Messaging, in general, is accomplished not by creating messages between components on the client but by updating the global data repository then creating a view of the relevant data that changed.

This is the approach that Redux and similar "global" data repositories take. The presentation (view) reflects the echo coming from the server, and interactions are then sent not at the atomic level but at the concept (or resource) level. In essence, the full "description" of the resource becomes the message sent to the knowledge base, and should that message be acknowledged and validated, then any application which has subscribed to this particular view of the changed knowledge base will update in real time.
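
The general pattern can be sketched with a tiny hand-rolled store. This is written in the spirit of Redux but is not Redux's implementation; the action type, the resource shape, and the in-memory store standing in for a server are all assumptions made for illustration.

```javascript
// A minimal store: one state value, replaced (never edited) on each dispatch.
function createStore(reducer, initialState) {
  let state = initialState;
  const listeners = new Set();
  return {
    getState: () => state,
    subscribe(listener) {
      listeners.add(listener);
      return () => listeners.delete(listener); // call to unsubscribe
    },
    dispatch(action) {
      state = reducer(state, action);
      listeners.forEach((listener) => listener(state));
    },
  };
}

// The reducer is pure: it builds a new state object instead of mutating.
const resourceReducer = (state, action) => {
  switch (action.type) {
    case "resource/replaced": // hypothetical action name
      return { ...state, [action.resource.id]: action.resource };
    default:
      return state;
  }
};

const store = createStore(resourceReducer, {});
const snapshots = [];
store.subscribe((state) => snapshots.push(state)); // a subscribed "view"

// The whole resource description is the message, not an atomic field edit.
store.dispatch({
  type: "resource/replaced",
  resource: { id: "bigco", name: "BigCo", ceo: "Jane Doe" },
});

console.log(store.getState().bigco.ceo); // Jane Doe
console.log(snapshots.length); // 1
```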

One key point that is worth stressing here is that knowledge bases are not necessarily semantic in the traditional (e.g., RDF) way, though many are. Instead, knowledge graphs are differentiated from relational data stores on the basis of a few key points:

While this covers semantic triple stores, it can also cover property graphs, JSON and XML stores, GraphQL servers, and even conventional SQL stores in certain contexts. The most important aspect is that it represents globally contextual data.

Such graph data may or may not be immutable. Mutable graph data typically represents a snapshot of information at a given moment in time. For instance, if an assertion exists that Jane Doe is the CEO of BigCo, within a mutable database, this assertion holds true at a certain moment in time, say roughly the current moment. In an immutable database, on the other hand, there exists a record indicating that Jane Doe had a position as CEO of BigCo over a certain interval of time, but that the time in which that statement is no longer true has not yet come. It is an inference.
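
The contrast can be sketched as a snapshot versus an append-only list of time-bounded assertions. Everything specific here is hypothetical: the dates, the predecessor "John Roe", and the field names are invented for illustration.

```javascript
// Mutable snapshot: only the current answer survives.
const snapshot = { org: "BigCo", ceo: "Jane Doe" };

// Immutable record: each assertion carries the interval over which it holds.
// A null end date means "no end has been recorded yet".
const assertions = Object.freeze([
  Object.freeze({ person: "John Roe", role: "CEO", org: "BigCo", from: "2015-01-01", to: "2020-06-30" }),
  Object.freeze({ person: "Jane Doe", role: "CEO", org: "BigCo", from: "2020-07-01", to: null }),
]);

// ISO 8601 date strings compare correctly as plain strings.
const holdsAt = (assertion, date) =>
  assertion.from <= date && (assertion.to === null || date <= assertion.to);

const ceoAt = (org, date) =>
  assertions
    .filter((a) => a.org === org && a.role === "CEO" && holdsAt(a, date))
    .map((a) => a.person);

console.log(ceoAt("BigCo", "2018-03-01")); // [ 'John Roe' ]
console.log(ceoAt("BigCo", "2024-01-01")); // [ 'Jane Doe' ]
```

With the interval form, asking who was CEO at any past date is a query over unchanged data, whereas the snapshot can only answer for the moment it was last written.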

This is likely to be the next battleground of object-oriented vs. functional programming, even if the definition of OOP is considerably weaker than that defined by people like Alan Kay or Bjarne Stroustrup. My belief is that our notion of object-oriented comes down to whether or not we see data as a snapshot in time, or a much vaster data fabric. It is, ultimately, a matter of temporal context.

Kurt Cagle is the editor of The Cagle Report, and a longtime blogger and writer focused on the field of information and knowledge management.

The Cagle Report is a daily update of what's happening in the Digital Workplace. Kurt lives in Issaquah, Washington with his wife, kid, and cat. For more of the Cagle Report, please subscribe.

Kurt is the founder and CEO of Semantical, LLC, a consulting company focusing on enterprise data hubs, metadata management, semantics, and NoSQL systems. He has developed large scale information and data governance strategies for Fortune 500 companies in the health care/insurance sector, media and entertainment, publishing, financial services and logistics arenas, as well as for government agencies in the defense and insurance sector (including the Affordable Care Act). Kurt holds a Bachelor of Science in Physics from the University of Illinois at Urbana–Champaign.
