
AI refers to the development of computer systems that can perform tasks that typically require human intelligence, such as learning, reasoning, problem-solving, perception, and natural language understanding.

AI is based on the idea of creating intelligent machines that can work and learn like humans. These machines can be trained to recognize patterns, understand speech, interpret data, and make decisions based on that data.

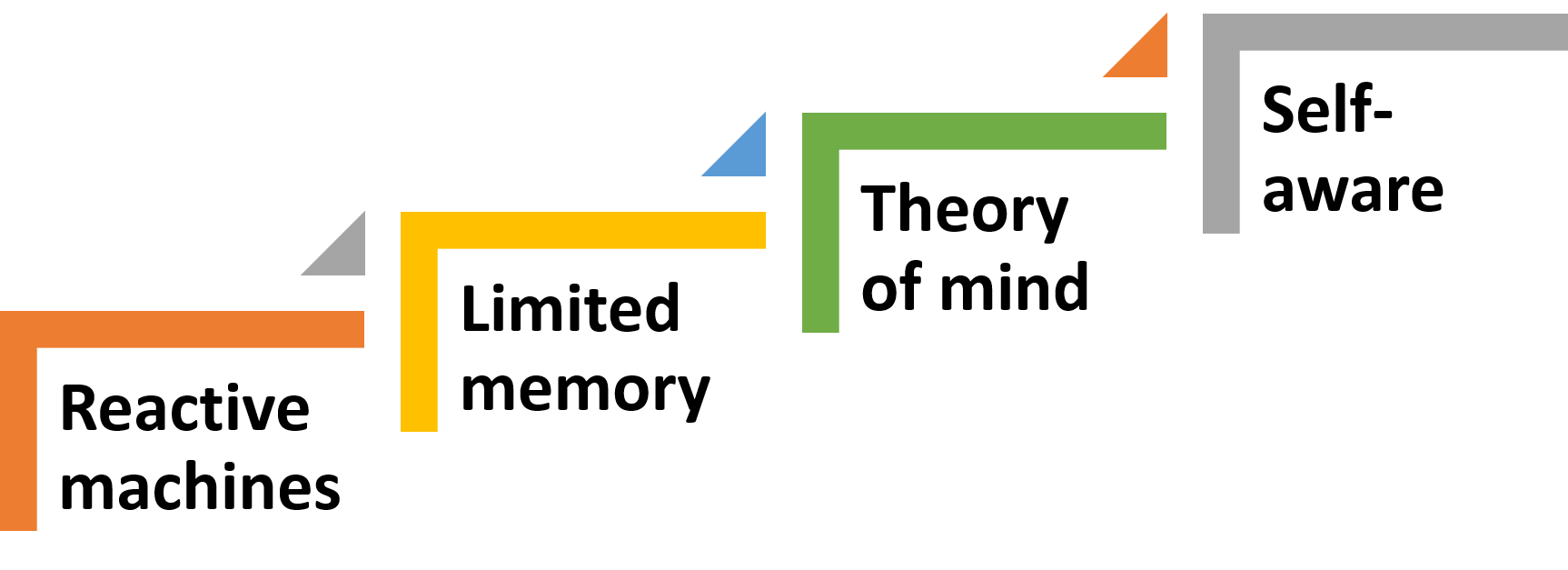

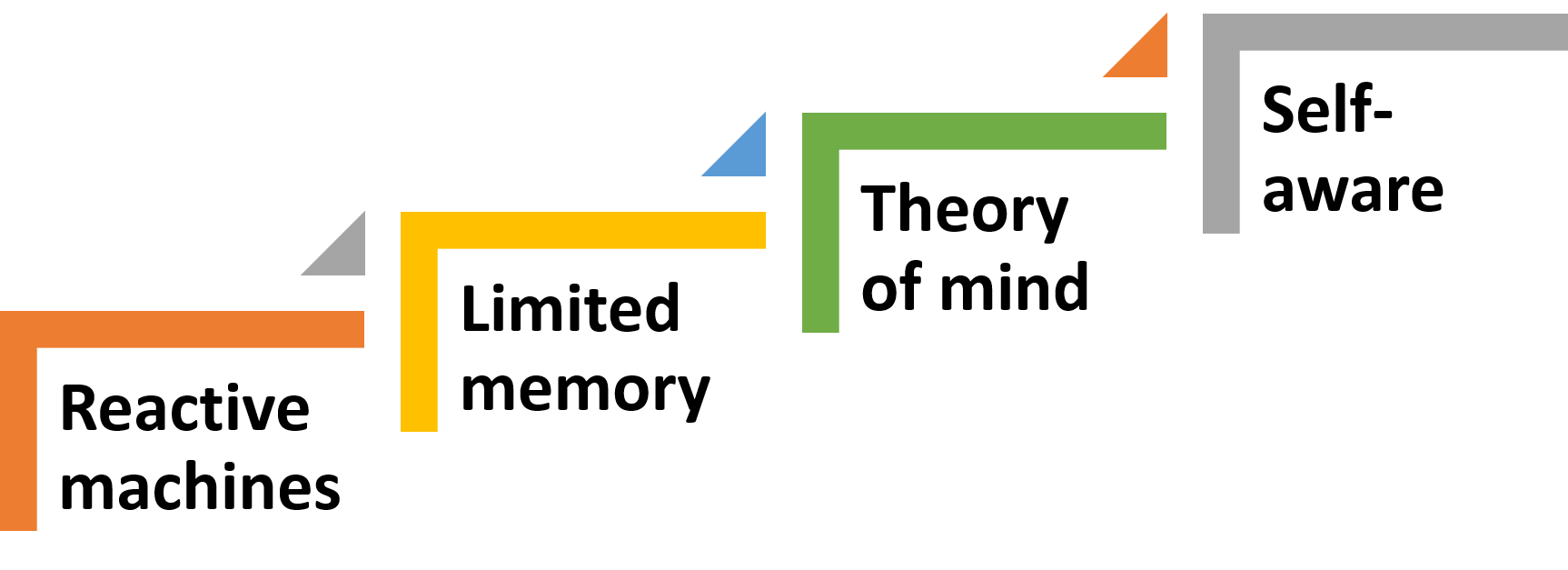

AI can be classified into different categories, such as:

1. Reactive machines: These machines can only react to the current situation based on pre-programmed rules; they have no memory and cannot learn from past experience.

2. Limited memory: These machines can learn from previous data and make decisions based on that data.

3. Theory of mind: These hypothetical machines would understand human emotions and respond accordingly.

4. Self-aware: These hypothetical machines would understand their own existence and modify their behavior accordingly.
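The difference between the first two categories can be illustrated with a toy thermostat. The scenario, the class names, the 20-degree threshold, and the three-reading window are all hypothetical choices made for this sketch, not a model of any real system. The reactive version decides from the current reading alone; the limited-memory version keeps recent readings and decides from their average.

```python
from collections import deque


class ReactiveThermostat:
    """Decides from the current reading only, using a fixed rule."""

    def __init__(self, threshold=20.0):
        self.threshold = threshold

    def act(self, reading):
        return "heat_on" if reading < self.threshold else "heat_off"


class LimitedMemoryThermostat:
    """Remembers the last few readings and decides from their average."""

    def __init__(self, threshold=20.0, window=3):
        self.threshold = threshold
        self.history = deque(maxlen=window)  # older readings drop out automatically

    def act(self, reading):
        self.history.append(reading)
        average = sum(self.history) / len(self.history)
        return "heat_on" if average < self.threshold else "heat_off"


readings = [21.0, 21.5, 19.5, 21.0]
reactive = ReactiveThermostat()
limited = LimitedMemoryThermostat()
print([reactive.act(r) for r in readings])
print([limited.act(r) for r in readings])
```

With these readings, the reactive thermostat switches the heat on for the single 19.5 reading, while the limited-memory thermostat, averaging the last three readings (about 20.7), leaves it off.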

AI has many practical applications, including speech recognition, image recognition, natural language processing, autonomous vehicles, and robotics, to name a few.

Narrow AI, also known as weak AI, is an AI system designed to perform a specific task or set of tasks. These tasks are often well-defined and narrow in scope, such as image recognition, speech recognition, or language translation. Narrow AI systems rely on specific algorithms and techniques to solve problems and make decisions within their domain of expertise. These systems do not possess true intelligence, but rather mimic intelligent behavior within a specific domain.

General AI, also known as strong AI or human-level AI, is a hypothetical AI system that could perform any intellectual task that a human can. It would be able to reason, learn, and solve problems across a variety of domains, and to apply its knowledge to new and unfamiliar situations. General AI is often thought of as the ultimate goal of AI research, but it currently remains a theoretical concept.

Super AI, also known as artificial superintelligence, is an AI system that surpasses human intelligence in all areas. Super AI would be capable of performing any intellectual task with ease, and would have an intelligence level far beyond that of any human being. Super AI is often portrayed in science fiction as a threat to humanity, as it could potentially have its own goals and motivations that could conflict with those of humans. Super AI is currently only a theoretical concept, and the development of such a system is seen as a long-term goal of AI research.

AI systems can also be grouped by the approach they take:

1. Rule-based AI: Rule-based AI, which includes expert systems, relies on a set of predefined rules to make decisions or recommendations. These rules are typically created by human experts in a particular domain and encoded into a computer program. Rule-based AI is useful for tasks that require a lot of domain-specific knowledge, such as medical diagnosis or legal analysis.

2. Supervised Learning: Supervised learning is a type of machine learning that involves training a model on a labeled dataset. This means that the dataset includes both input data and the correct output for each example. The model learns to map input data to output data, and can then make predictions on new, unseen data. Supervised learning is useful for tasks such as image recognition or natural language processing.

3. Unsupervised Learning: Unsupervised learning is a type of machine learning that involves training a model on an unlabeled dataset. This means that the dataset only includes input data, and the model must find patterns or structure in the data on its own. Unsupervised learning is useful for tasks such as clustering or anomaly detection.

4. Reinforcement Learning: Reinforcement learning is a type of machine learning that involves training a model to make decisions based on rewards and punishments. The model learns by receiving feedback in the form of rewards or punishments based on its actions, and adjusts its behavior to maximize its reward. Reinforcement learning is useful for tasks such as game playing or robotics.

5. Deep Learning: Deep learning is a type of machine learning that involves training deep neural networks on large datasets. Deep neural networks are neural networks with multiple layers, allowing them to learn complex patterns and structures in the data. Deep learning is useful for tasks such as image recognition, speech recognition, and natural language processing.

6. Generative AI: Generative AI is a type of AI that is used to generate new content, such as images, videos, or text. It works by using a model that has been trained on a large dataset of examples, and then uses this knowledge to generate new content that is similar to the examples it has been trained on. Generative AI is useful for tasks such as computer graphics, natural language generation, and music composition.
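Rule-based AI can be sketched as forward chaining: keep applying any rule whose conditions are all known facts until no new conclusions appear. To keep the example neutral, this minimal sketch uses a made-up animal-identification knowledge base rather than a medical one; the rules, fact names, and the `forward_chain` function are all hypothetical.

```python
# Hypothetical toy knowledge base: each rule says
# "if all of these facts are known, conclude this new fact".
RULES = [
    ({"has_feathers"}, "is_bird"),
    ({"is_bird", "cannot_fly", "swims"}, "is_penguin"),
    ({"has_fur", "gives_milk"}, "is_mammal"),
]


def forward_chain(facts: set[str], rules: list[tuple[set[str], str]]) -> set[str]:
    """Repeatedly fire any rule whose conditions are satisfied until nothing changes."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


print(sorted(forward_chain({"has_feathers", "cannot_fly", "swims"}, RULES)))
```

Note that the penguin rule only fires after the bird rule has added `is_bird`, which is why the loop repeats until no rule adds anything new.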
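Supervised learning can be illustrated with one of its simplest forms, fitting a straight line to labeled examples by ordinary least squares. This is a minimal sketch chosen for brevity, not how image or language models are trained; the data and the function names `fit_line` and `predict` are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one input feature: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, x):
    slope, intercept = model
    return slope * x + intercept


# Labeled training data: each input x comes with its correct output y.
train_x = [1.0, 2.0, 3.0, 4.0]
train_y = [3.0, 5.0, 7.0, 9.0]
model = fit_line(train_x, train_y)
print(model)                # (2.0, 1.0)
print(predict(model, 5.0))  # 11.0, a prediction for an unseen input
```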
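Clustering, one of the unsupervised tasks mentioned above, can be sketched with k-means on one-dimensional data: the algorithm receives only points, with no labels, and discovers groups by alternating between assigning points to the nearest centroid and moving each centroid to the mean of its points. The data, the hand-picked starting centroids, and the function name are hypothetical; practical implementations usually use smarter initialization such as k-means++.

```python
def kmeans_1d(points, centroids, max_iterations=10):
    """Group unlabeled 1-D points around k centroids (Lloyd's algorithm)."""
    centroids = list(centroids)
    clusters = []
    for _ in range(max_iterations):
        # Assignment step: put each point in the cluster of its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda i: abs(p - centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster.
        new_centroids = [
            sum(c) / len(c) if c else centroids[i] for i, c in enumerate(clusters)
        ]
        if new_centroids == centroids:
            break  # assignments have stabilized
        centroids = new_centroids
    return centroids, clusters


# Unlabeled data: only the points themselves, no correct outputs.
data = [1.0, 1.5, 2.0, 8.0, 8.5, 9.0]
centroids, clusters = kmeans_1d(data, [0.0, 5.0])
print(centroids)  # [1.5, 8.5]
print(clusters)   # [[1.0, 1.5, 2.0], [8.0, 8.5, 9.0]]
```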
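Reinforcement learning can be sketched with tabular Q-learning on a tiny, made-up corridor: the agent starts in cell 0, earns a reward of 1 for reaching cell 4, and learns from that feedback which direction to move. The environment, the parameter values (`alpha`, `gamma`, `epsilon`), and the function names are hypothetical choices for illustration, and this toy only uses rewards, not punishments.

```python
import random

# Hypothetical toy environment: a corridor of 5 cells (0..4). The agent starts
# in cell 0; reaching cell 4 ends the episode with a reward of 1.
N_STATES = 5
GOAL = N_STATES - 1
MOVES = (-1, +1)  # action 0 = move left, action 1 = move right


def step(state, action):
    """Apply an action; walls keep the agent inside the corridor."""
    next_state = min(max(state + MOVES[action], 0), GOAL)
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward, next_state == GOAL


def choose_action(q, state, epsilon, rng):
    """Epsilon-greedy: usually exploit the best-known action, sometimes explore."""
    if rng.random() < epsilon:
        return rng.randrange(len(MOVES))
    best = max(q[state])
    return rng.choice([a for a, value in enumerate(q[state]) if value == best])


def q_learning(episodes=300, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = [[0.0] * len(MOVES) for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap episode length
            action = choose_action(q, state, epsilon, rng)
            next_state, reward, done = step(state, action)
            # Move the estimate toward reward + discounted best future value.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
            if done:
                break
    return q


q = q_learning()
policy = ["right" if q[s][1] > q[s][0] else "left" for s in range(GOAL)]
print(policy)
```

The agent is never told that "right" is correct; it discovers this because moving right leads to the reward sooner, so those actions accumulate higher values.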
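Why multiple layers matter can be shown with XOR (output 1 when exactly one input is 1), a pattern no single linear layer can represent but two stacked layers can. In this minimal sketch the weights are picked by hand purely for illustration; in real deep learning they are learned from large datasets, typically with backpropagation and gradient descent, and the networks are far larger. The function and constant names are hypothetical.

```python
def relu(x):
    """Rectified linear activation: negative values become 0."""
    return max(0.0, x)


def dense(inputs, weights, biases, activation=None):
    """One fully connected layer: each output is a weighted sum plus a bias."""
    outputs = []
    for row, bias in zip(weights, biases):
        z = sum(w * x for w, x in zip(row, inputs)) + bias
        outputs.append(activation(z) if activation else z)
    return outputs


# Hand-picked (not learned) weights for illustration.
HIDDEN_WEIGHTS = [[1.0, 1.0], [1.0, 1.0]]
HIDDEN_BIASES = [0.0, -1.0]
OUTPUT_WEIGHTS = [[1.0, -2.0]]
OUTPUT_BIASES = [0.0]


def xor_network(x1, x2):
    hidden = dense([x1, x2], HIDDEN_WEIGHTS, HIDDEN_BIASES, relu)  # layer 1
    return dense(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES)[0]         # layer 2


for a in (0, 1):
    for b in (0, 1):
        print(a, b, xor_network(a, b))
```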


One of the most exciting applications of generative AI is in the field of computer graphics. By using generative models, it is possible to create realistic images and videos that look like they were captured in the real world. This can be incredibly useful for a wide range of applications, from creating realistic game environments to generating lifelike product images for e-commerce websites.

Another application of generative AI is in the field of natural language processing. By using generative models, it is possible to generate new text that is similar in style and tone to a particular author or genre. This can be useful for a wide range of applications, from generating news articles to creating marketing copy.

One of the key advantages of generative AI is its ability to create new content that is both creative and unique. Unlike traditional computer programs, which are limited to following a fixed set of rules, generative AI is able to learn from examples and generate new content that is similar, but not identical, to what it has seen before. This can be incredibly useful for applications where creativity and originality are important, such as in the arts or in marketing.

However, there are also some potential drawbacks to generative AI. One of the biggest challenges is ensuring that the content generated by these models is not biased or offensive. Because these models are trained on a dataset of examples, they may inadvertently learn biases or stereotypes that are present in the data. This can be especially problematic in applications like natural language processing, where biased language could have real-world consequences.

Another challenge is ensuring that the content generated by these models is of high quality. Because these models are based on statistical patterns in the data, they may occasionally produce outputs that are nonsensical or factually incorrect. This can be especially problematic in applications like chatbots or customer service systems, where errors or inappropriate responses could damage the reputation of the company or organization.

Despite these challenges, however, the potential benefits of generative AI are enormous. By using generative models, it is possible to create new content that is both creative and unique, while also being more efficient and cost-effective than traditional methods. With continued research and development, generative AI could play an increasingly important role in a wide range of applications, from entertainment and marketing to scientific research and engineering.

One of the challenges in creating effective generative AI models is choosing the right architecture and training approach. There are many different types of generative models, each with its own strengths and weaknesses. Some of the most common types of generative models include variational autoencoders, generative adversarial networks, and autoregressive models.

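Variational autoencoders (VAEs) are a type of generative model that uses an encoder-decoder architecture. The encoder compresses each input into a probability distribution over a lower-dimensional latent space, and the decoder learns to reconstruct the input from points sampled in that space. New content is generated by sampling a point from the latent space and passing it through the decoder. This approach is useful for applications where the input data is high-dimensional, such as images or video.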
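For readers who want the underlying math, variational autoencoders are typically trained by maximizing the evidence lower bound (ELBO) below. Here $q_\phi(z \mid x)$ is the encoder, $p_\theta(x \mid z)$ the decoder, and $p(z)$ a standard normal prior over the latent variable $z$. The first term rewards accurate reconstructions; the second keeps the encoder's distributions close to the prior, so that sampling from $p(z)$ yields meaningful latent points.

$$
\mathcal{L}(\theta, \phi; x) = \mathbb{E}_{z \sim q_\phi(z \mid x)}\left[\log p_\theta(x \mid z)\right] - D_{\mathrm{KL}}\left(q_\phi(z \mid x) \,\|\, p(z)\right)
$$

When the encoder outputs a Gaussian with mean $\mu$ and standard deviation $\sigma$ over $d$ latent dimensions (a common but not mandatory choice), the KL term has a closed form, and sampling is made differentiable with the reparameterization trick:

$$
D_{\mathrm{KL}} = \frac{1}{2} \sum_{j=1}^{d} \left(\mu_j^2 + \sigma_j^2 - \log \sigma_j^2 - 1\right), \qquad z = \mu + \sigma \odot \epsilon, \quad \epsilon \sim \mathcal{N}(0, I)
$$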

Generative adversarial networks (GANs) are another popular approach to generative AI. GANs use a pair of neural networks: a generator, which creates new content, and a discriminator, which tries to distinguish between real and generated content. By training the two networks against each other, GANs learn to generate content that is both realistic and novel.
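The adversarial training described above is usually written as a two-player minimax game. Here $D(x)$ is the discriminating network's estimated probability that $x$ is real, and $G(z)$ is the generating network's output for random noise $z$:

$$
\min_G \max_D \; \mathbb{E}_{x \sim p_{\text{data}}}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_z}\left[\log\left(1 - D(G(z))\right)\right]
$$

$D$ tries to maximize this value by scoring real samples high and generated ones low, while $G$ tries to minimize it by producing samples that $D$ scores as real. In practice, $G$ is often trained to maximize $\log D(G(z))$ instead, which gives stronger gradients early in training.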

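Autoregressive models are a type of generative model that generates content one element at a time. At each step, the model predicts a probability distribution over the next element (for example, the next word in a sentence or the next pixel in an image) conditioned on all the elements generated so far, samples from that distribution, and appends the result before moving on. GPT models, including the ones behind ChatGPT, are autoregressive.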
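The generate-one-element-at-a-time loop can be sketched with a character-level bigram model. This is a deliberately simple stand-in: it conditions only on the previous character, whereas neural autoregressive models condition on the entire preceding context. The corpus and the function names are made up for illustration.

```python
import random
from collections import defaultdict


def train_bigram(text):
    """Count how often each character is followed by each other character."""
    counts = defaultdict(lambda: defaultdict(int))
    for prev, nxt in zip(text, text[1:]):
        counts[prev][nxt] += 1
    return counts


def generate(counts, start, length, seed=0):
    """Generate text one character at a time, each sampled given the previous one."""
    rng = random.Random(seed)
    out = [start]
    while len(out) < length:
        followers = counts.get(out[-1])
        if not followers:
            break  # this character was never followed by anything in training
        chars = list(followers)
        weights = [followers[c] for c in chars]  # counts act as unnormalized probabilities
        out.append(rng.choices(chars, weights=weights, k=1)[0])
    return "".join(out)


corpus = "the cat sat on the mat. the cat ran."
model = train_bigram(corpus)
print(generate(model, "t", 30))
```

Every pair of adjacent characters in the output was seen in the training text, yet the output as a whole is usually a new string, which is the "similar but not identical" behavior described earlier.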

Generative AI is a rapidly advancing field that holds enormous potential for many different applications. As the technology continues to develop, we can expect to see some exciting advancements and trends. Here are some possible directions for the field:

1. Improved Natural Language Processing (NLP): Natural language processing is one area where generative AI is already making a big impact, and we can expect this trend to continue. Advancements in NLP will allow for more natural-sounding and contextually appropriate responses from chatbots, virtual assistants, and other AI-powered communication tools.

2. Increased Personalization: As generative AI systems become more sophisticated, they will be able to generate content that is more tailored to individual users. This could mean everything from personalized news articles to custom video game levels generated on the fly.

3. Enhanced Creativity: Generative AI is already being used to generate music, art, and other forms of creative content. As the technology improves, we can expect to see more AI-generated works of art that are indistinguishable from those created by humans.

4. Better Data Synthesis: As datasets become increasingly complex, generative AI will become an even more valuable tool for synthesizing new data. This could be especially important in scientific research, where AI-generated data could help researchers identify patterns and connections that might otherwise go unnoticed.

5. Increased Collaboration: One of the most exciting possibilities for generative AI is its potential to enhance human creativity and collaboration. By providing new and unexpected insights, generative AI could help artists, scientists, and other creatives work together in novel ways to generate new ideas and solve complex problems.

The future of generative AI looks bright, with plenty of opportunities for innovation and growth in the years ahead.

ChatGPT is a specific implementation of Generative AI that is designed to generate text in response to user input in a conversational setting. ChatGPT is based on GPT (Generative Pre-trained Transformer) models, a type of neural network built on the Transformer architecture and pre-trained on massive amounts of text data. This pre-training allows ChatGPT to generate high-quality text that is both fluent and coherent.

In other words, ChatGPT is a specific application of Generative AI that is designed for conversational interactions. Other applications of Generative AI may include language translation, text summarization, or content generation for marketing purposes.

ChatGPT is a powerful tool for natural language processing that can be used in a wide range of applications, from customer service to education to healthcare.

As an AI language model, ChatGPT continues to evolve. One setting that shapes its output is temperature, a sampling parameter that controls how random the generated text is, rather than its quality: values near 0.0 make responses conservative and predictable, while values around 1.0 make them more creative. Temperature is set when using the underlying models through OpenAI's API rather than in the regular chat interface. With a temperature of 0.9, the model can generate more imaginative and unexpected responses, albeit at the cost of potentially introducing errors and inconsistencies.
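A minimal sketch of how temperature is commonly applied when sampling from a language model: the model's raw scores (logits) for candidate next tokens are divided by the temperature before a softmax turns them into probabilities. The logits below are made-up numbers for three hypothetical tokens, and this is an illustration of the general technique, not OpenAI's implementation.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.1]  # hypothetical scores for three candidate tokens
for t in (0.2, 0.9, 1.5):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

Lower temperatures concentrate almost all probability on the top-scoring token; higher ones spread it across the alternatives, which makes less likely (and sometimes wrong) continuations more probable. Implementations typically treat a temperature of 0 as always picking the top token; this sketch simply rejects non-positive values instead.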

In the future, ChatGPT will likely continue to improve its natural language processing capabilities, allowing it to understand and respond to increasingly complex and nuanced queries. It may also become more personalized, utilizing data from users' interactions to tailor responses to individual preferences and needs.



As with any emerging technology, ChatGPT will face challenges and limitations. Some potential issues include:

1. Ethical concerns: There are ethical concerns surrounding the use of AI language models like ChatGPT, particularly with regard to privacy, security, bias, and the potential for misuse.

2. Accuracy and reliability: ChatGPT is only as good as the data it is trained on, and it may not always provide accurate or reliable information. Ensuring that ChatGPT is trained on high-quality data and that its responses are validated and verified will be crucial to its success.

3. User experience: Ensuring that users have a positive and seamless experience interacting with ChatGPT will be crucial to its adoption and success. This may require improvements in natural language processing and user interface design.

The future of ChatGPT is full of potential and promise. With continued development and improvement, ChatGPT has the potential to transform the way we interact with technology and each other, making communication faster, more efficient, and more personalized than ever before.

Ahmed Banafa is an expert in new tech with appearances on ABC, NBC, CBS, and FOX TV and radio stations. He has served as a professor, academic advisor, and coordinator at well-known American universities and colleges. His research has been featured in Forbes, MIT Technology Review, ComputerWorld, and Techonomy. He has published over 100 articles about the Internet of Things, blockchain, artificial intelligence, cloud computing, and big data. His research papers are used in many patents, numerous theses, and conferences. He is also a guest speaker at international technology conferences. He is the recipient of several awards, including the Distinguished Tenured Staff Award, Instructor of the Year, and a Certificate of Honor from the City and County of San Francisco. Ahmed studied cyber security at Harvard University. He is the author of the book Secure and Smart Internet of Things Using Blockchain and AI.
