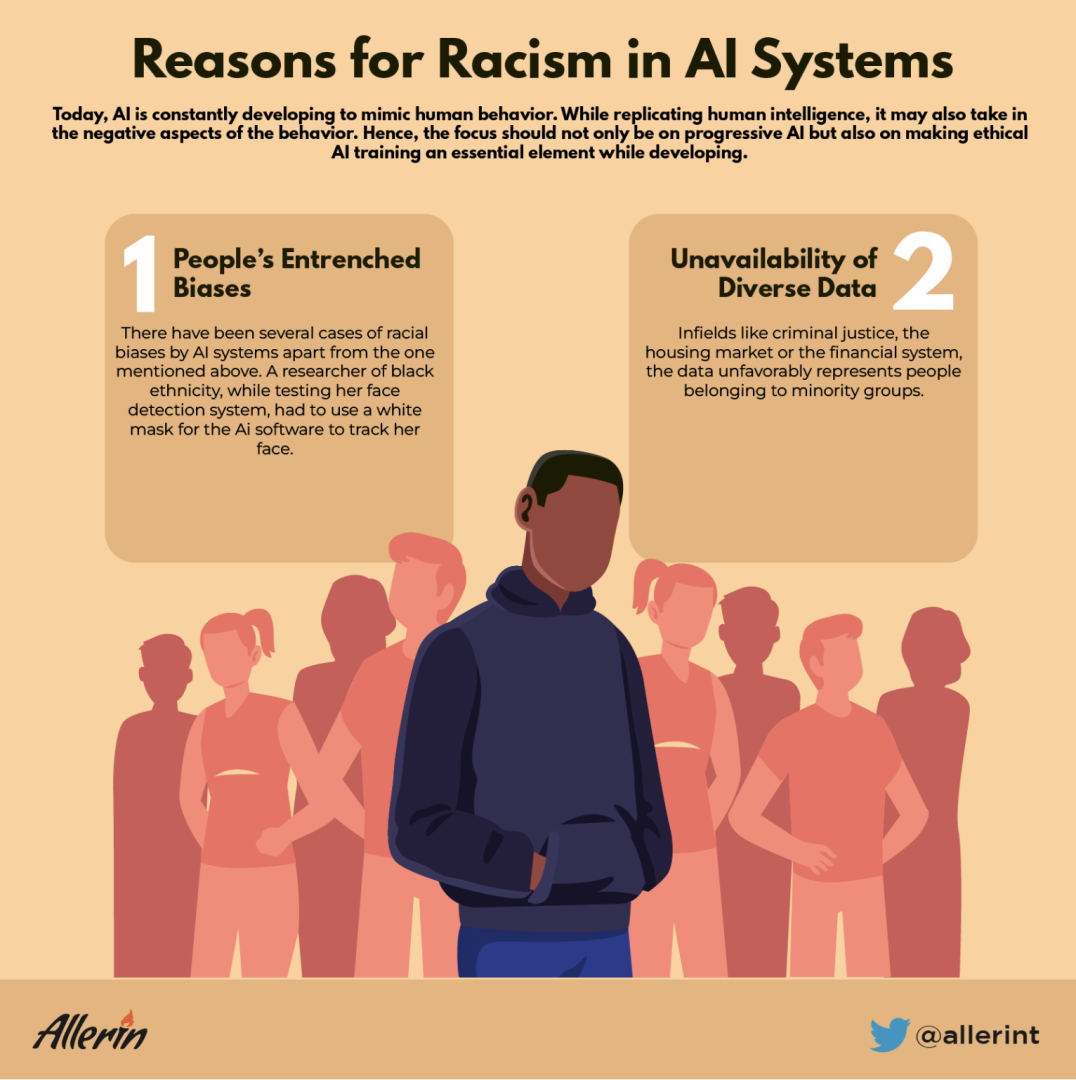

Today, AI is constantly developing to mimic human behavior.

While replicating human intelligence, it may also absorb the negative aspects of that behavior. Hence, the focus should not only be on advancing AI but also on making ethical AI training an essential part of development.

According to the New York Times, FN Meka, the first digital AI-powered rap artist to be signed by a major label, was canceled over its use of "gross stereotypes" of black culture. This massive digital star, which had 10.3 million TikTok followers, was developed using more than a thousand data points gathered from video games and social media. Reportedly, this AI robot, which had 200+ followers on Instagram, offended the black community by using the N-word repeatedly, resulting in an almost immediate termination of the contract.

This is just one recent example of AI picking up racial stereotypes. Such instances could be avoided if more focus were given to ethical AI training. In practice, that means AI developers have to scrutinize every piece of data used for training, which is a nearly impossible task. Without ethical AI training, racism can find its way into AI systems: when the data that goes into them is not properly inspected, biases can be passed on, whether deliberately or not.

There have been several cases of racial bias in AI systems apart from the one mentioned above. A black researcher, while testing her face detection system, had to wear a white mask before the AI software would track her face. In another instance, Google Photos grouped a black person’s photo with pictures of gorillas. These biases don’t necessarily stem from racism but from the fact that the data used to train the AI systems may not have been diverse enough.

Even so, there are cases where racism has stemmed from people’s entrenched biases. For example, in fields like criminal justice, the housing market or the financial system, historical records represent people belonging to minority groups unfavorably. These records are then fed to AI applications during their training. The resulting AI applications acquire these prejudices, leading to discrimination.
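One way to make this kind of inspection concrete is a simple audit of the training data before it is used. The sketch below is only an illustration, not a method described in the reporting above: the function name `audit_groups`, the field names `group` and `approved`, and the toy records are all hypothetical. It reports how much of the data each group makes up and how often each group receives the favorable label.

```python
from collections import Counter, defaultdict


def audit_groups(records, group_key="group", label_key="approved"):
    """Return each group's share of the data and its rate of favorable labels.

    `group_key` and `label_key` are hypothetical field names chosen for
    this illustration.
    """
    counts = Counter(r[group_key] for r in records)
    positives = defaultdict(int)
    for r in records:
        if r[label_key]:
            positives[r[group_key]] += 1
    total = len(records)
    return {
        g: {"share": n / total, "positive_rate": positives[g] / n}
        for g, n in counts.items()
    }


# Made-up toy records, for illustration only.
records = (
    [{"group": "A", "approved": True}] * 6
    + [{"group": "A", "approved": False}] * 2
    + [{"group": "B", "approved": True}] * 1
    + [{"group": "B", "approved": False}] * 1
)

report = audit_groups(records)
for group, stats in sorted(report.items()):
    print(
        group,
        f"share={stats['share']:.2f}",
        f"positive_rate={stats['positive_rate']:.2f}",
    )
```

A large gap in either number does not prove discrimination on its own, but it flags data that deserves a closer look before a system is trained on it.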

Governments across the world are investing millions of dollars in AI systems and other technological advancements, aiming to incorporate them into daily life and create an easier and more accepting environment. AI tools, now used in almost every industry, have the potential to eliminate prejudice and create a new, judgment-free world, or to do the exact opposite and reinforce the stereotypes and biases that people have fought against for generations.

The concern is not that AI systems and robots will replace humans but that those developing AI systems are unaware of the biases they pass on. The most crucial components of this entire operation are the people developing this new-age technology and the data that goes into it. Ethical AI systems have the potential to succeed if built with awareness. Otherwise, many AI applications might just end up being another technology that benefits a select few at the expense of thousands.

Naveen is the Founder and CEO of Allerin, a software solutions provider that delivers innovative and agile solutions that automate, inspire and impress. He is a seasoned professional with more than 20 years of experience, including extensive experience in customizing open source products for cost optimization of large-scale IT deployments. He is currently working on Internet of Things solutions with Big Data Analytics. Naveen completed his programming qualifications at various Indian institutes.
