
Artificial intelligence is impacting every single aspect of our future, but it has a fundamental flaw that needs to be addressed.

The fundamental flaw of artificial intelligence is that it requires a skilled workforce. Apple is currently leading the artificial intelligence race, having acquired 29 AI startups since 2010.

Success in creating effective AI could be the biggest event in the history of our civilization. Or the worst. We just don't know. So we cannot know if we will be infinitely helped by AI, or ignored by it and side-lined, or conceivably destroyed by it.

Stephen Hawking

Source: Reuters

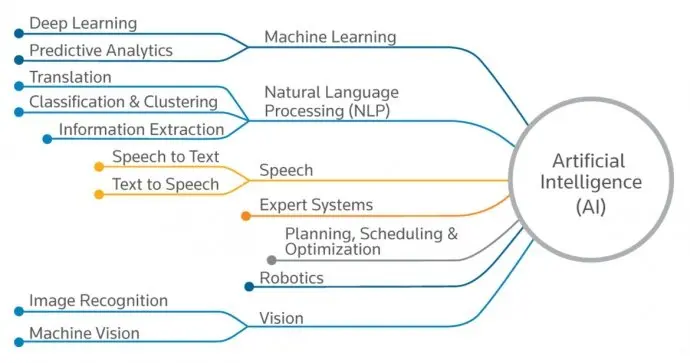

Artificial intelligence is reduced to the following definitions:

1: a branch of computer science dealing with the simulation of intelligent behavior in computers; the capability of a machine to imitate intelligent human behavior;

2: an area of computer science that deals with giving machines the ability to seem like they have human intelligence;

3: the ability of a digital computer or computer-controlled robot to perform tasks commonly associated with intelligent beings; systems endowed with the intellectual processes characteristic of humans, such as the ability to reason, discover meaning, generalize, or learn from past experience;

4: a system that perceives its environment and takes actions that maximize its chance of achieving its goals;

5: machines that mimic cognitive functions that humans associate with the human mind, such as learning and problem solving.

Source: Deloitte

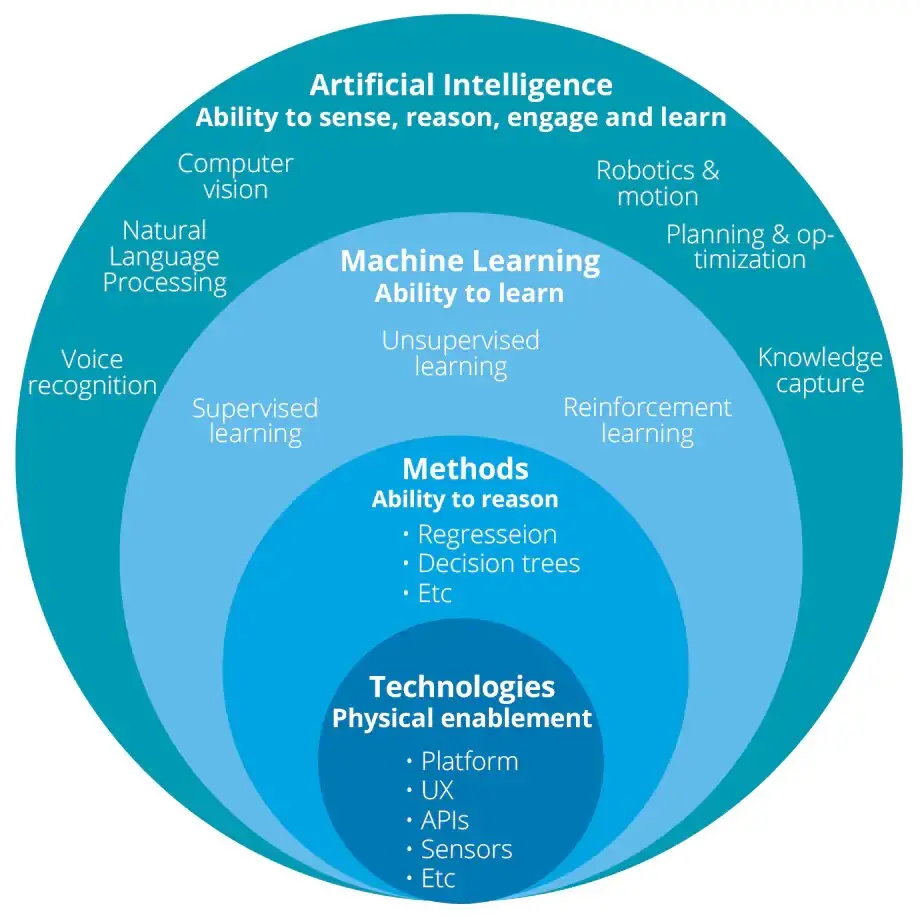

The purpose of artificial intelligence is to enable computers and machines to perform intellectual tasks such as problem solving, decision making, perception, and understanding human communication.

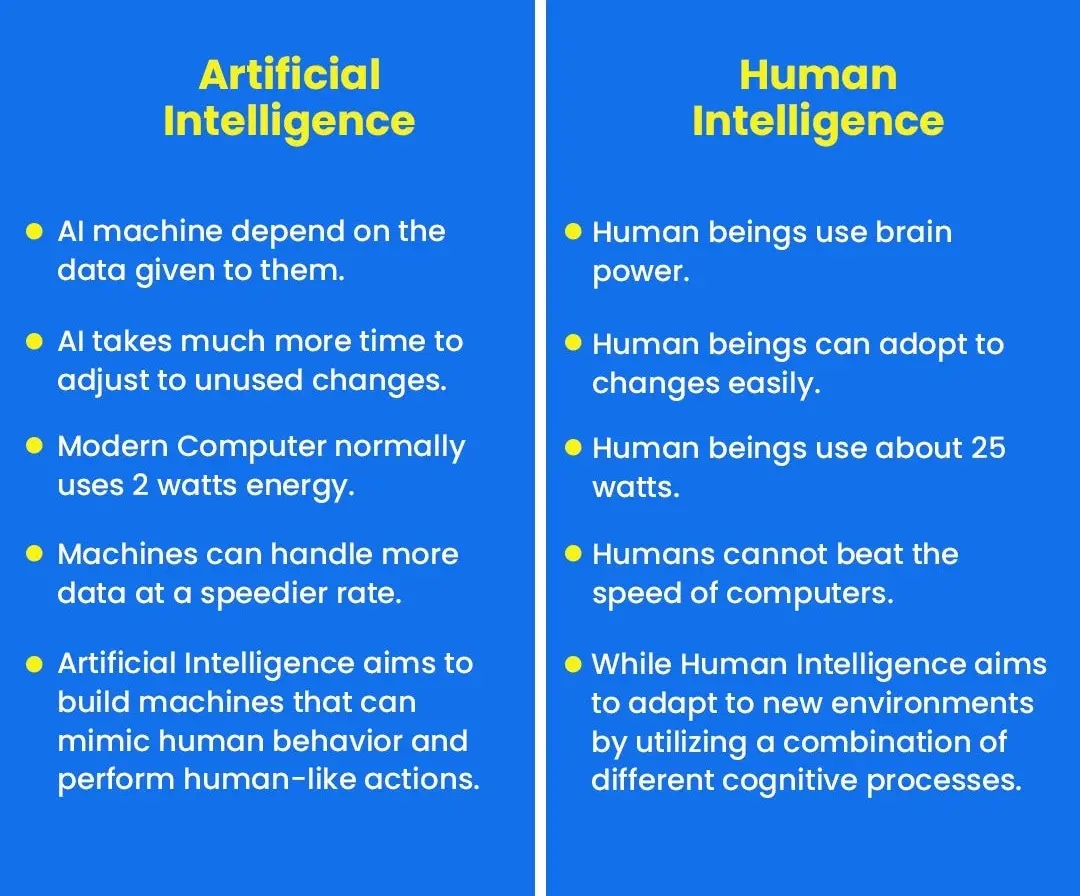

In fact, today's AI is not copying human brains, mind, intelligence, cognition, or behavior. It is all about advanced hardware, software and dataware, information processing technology, big data collection, and big computing power. As was rightly noted at the Financial Times Future Forum “The Impact of Artificial Intelligence on Business and Society”: “Machines will outperform us not by copying us but by harnessing the combination of colossal quantities of data, massive processing power and remarkable algorithms.”

They are advanced data-processing systems: weak or narrow AI applications, neural networks, machine learning, deep learning, multiple linear regression, RFM modeling, cognitive computing, predictive intelligence/analytics, language models, or knowledge graphs. Examples include cognitive APIs (face, speech, text, etc.), the Microsoft Azure AI platform, web search, self-driving transportation, language models such as GPT-3/4/5 or BERT, and knowledge graphs such as Microsoft's KG, Google's KG, or Diffbot, which trains its knowledge graph on the entire internet, encoding entities like people, places, and objects into nodes connected to other entities via edges.

Source: DZone
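To make the idea of encoding entities as nodes connected via edges concrete, here is a minimal sketch of a toy knowledge graph. The class name `KnowledgeGraph`, its methods, and the sample triples are illustrative assumptions; this is not how Diffbot, Google, or Microsoft actually store their graphs.

```python
from collections import defaultdict


class KnowledgeGraph:
    """Toy knowledge graph: entities are nodes, labeled relations are directed edges."""

    def __init__(self):
        # Maps a subject entity to a list of (relation, object) edges.
        self._edges = defaultdict(list)

    def add(self, subject, relation, obj):
        """Store one (subject, relation, object) triple as a labeled edge."""
        self._edges[subject].append((relation, obj))

    def objects(self, subject, relation):
        """Return every entity reachable from `subject` via `relation`."""
        return [o for r, o in self._edges.get(subject, []) if r == relation]


# Hypothetical sample data: people, places, and objects become nodes.
kg = KnowledgeGraph()
kg.add("Ada Lovelace", "born_in", "London")
kg.add("Ada Lovelace", "occupation", "mathematician")
kg.add("London", "capital_of", "United Kingdom")

birthplaces = kg.objects("Ada Lovelace", "born_in")
print(birthplaces)  # ['London']
```

A lookup like this only retrieves edges that were explicitly stored, which fits the point that such systems are data-processing tools rather than copies of human cognition.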

Today's "AI is meaningless" and "often just a fancy name for a computer program": in practice it amounts to software patches, like bug fixes, applied to legacy software or big databases to improve their functionality, security, usability, or performance.

Such machines are not yet self-aware and they cannot understand context, especially in language. Operationally, too, they are limited by the historical data from which they learn, and restricted to functioning within set parameters.

Today’s artificial intelligence (AI) is limited. It still has a long way to go.

Artificial intelligence can be duped by scenarios it has never seen before.

With AI playing an increasingly major role in modern software and services, each major tech firm is battling to develop robust machine-learning technology for use in-house and to sell to the public via cloud services.

However, most of the tech companies are still struggling to unlock the real power of artificial intelligence.

Today's artificial intelligence is at best narrow. Narrow artificial intelligence is what we see all around us in computers today -- intelligent systems that have been taught or have learned how to carry out specific tasks without being explicitly programmed how to do so.

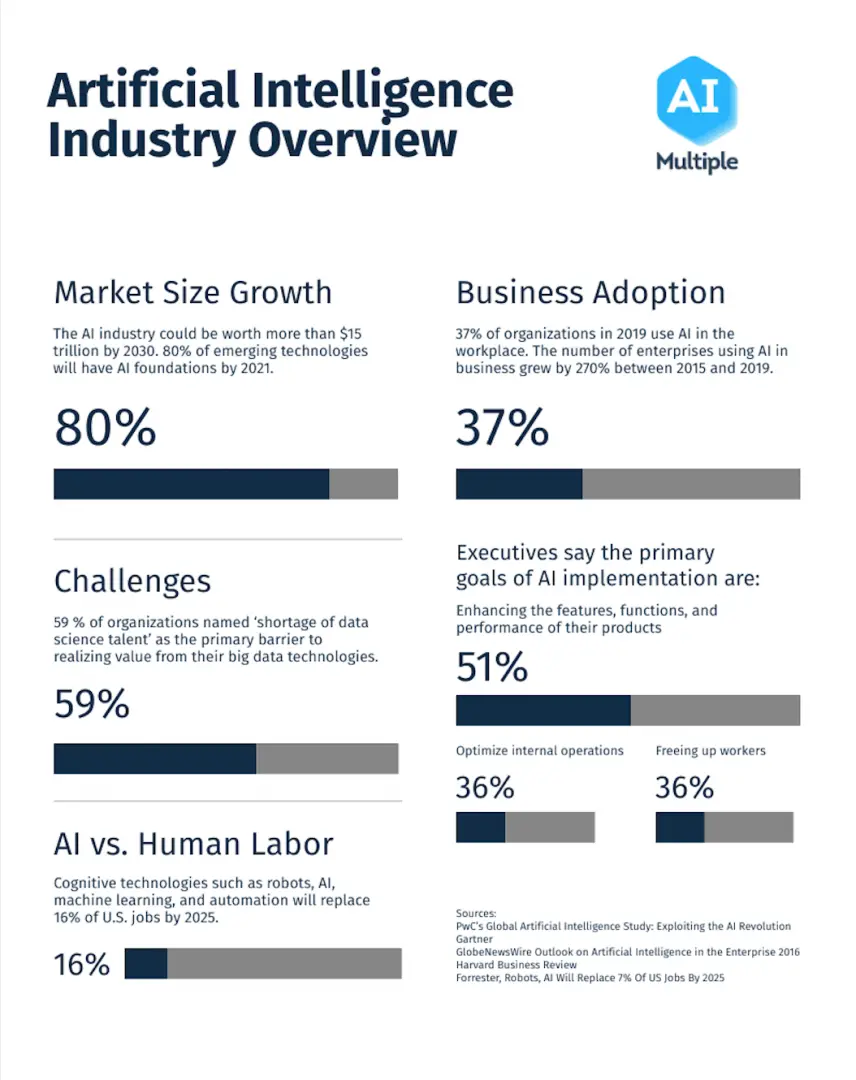

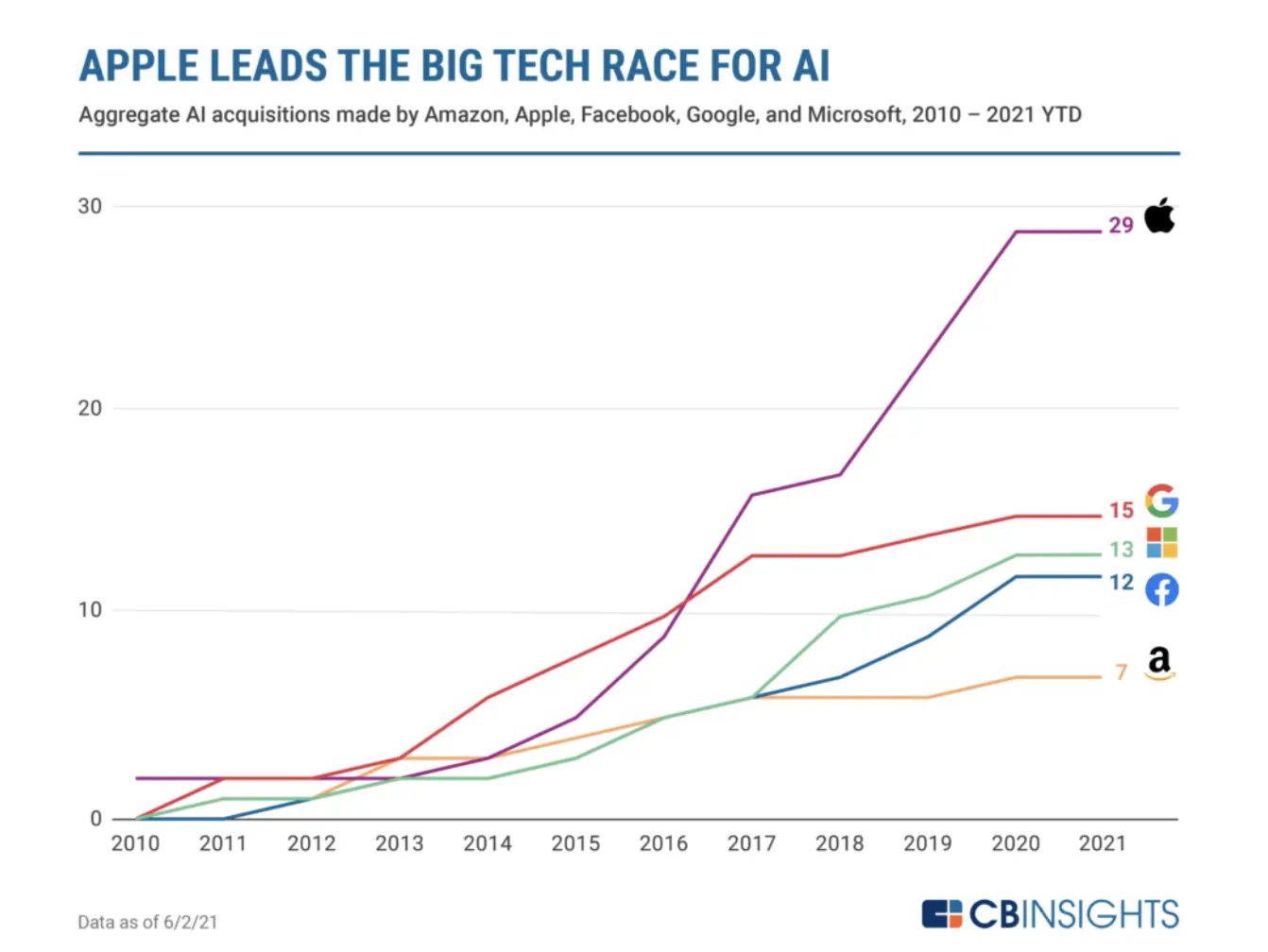

According to CB Insights, artificial intelligence companies are a prime acquisition target for companies looking to leverage AI tech without building it from scratch. In the race for AI, this is who's leading the charge.

The usual suspects are leading the race for AI: tech giants like Facebook, Amazon, Microsoft, Google, and Apple (FAMGA) have all been aggressively acquiring AI startups for the last decade.

Among FAMGA, Apple leads the way. With 29 total AI acquisitions since 2010, the company has made nearly twice as many acquisitions as second-place Google (the frontrunner from 2012 to 2016), with 15 acquisitions.

Apple and Google are followed by Microsoft with 13 acquisitions, Facebook with 12, and Amazon with 7.

Source: CB Insights

Apple’s AI acquisition spree, which has helped it overtake Google in recent years, has been essential to the development of new iPhone features. For example, FaceID, the technology that allows users to unlock their iPhones by looking at them, stems from Apple’s M&A moves in chips and computer vision, including the acquisition of AI company RealFace.

In fact, many of FAMGA’s prominent products and services — such as Apple’s Siri or Google’s contributions to healthcare through DeepMind — came out of acquisitions of AI companies.

Other top acquirers include major tech players like Intel, Salesforce, Twitter, and IBM.

Source: Analytics Steps

Artificial Intelligence with robotics is poised to change our world from top to bottom, promising to help solve some of the world’s most pressing problems, from healthcare to economics to global crisis predictions and timely responses.

But, as a Deloitte report says, around 94% of enterprises face potential problems while adopting, integrating, and implementing AI technologies.

This article is not about the AI problems, such as the lack of technical know-how, data acquisition and storage, transfer learning, expensive workforce, ethical or legal challenges, big data addiction, computation speed, black box, narrow specialization, myths & expectations and risks, cognitive biases, or price factor. It is not our subject to discuss why small and mid-sized organizations struggle to adopt costly AI technologies, while big firms like Facebook, Apple, Microsoft, Google, Amazon, IBM allocate a separate budget for acquiring AI startups.

Instead, we focus on AI itself as the biggest issue, with its three fundamental problems that call for fundamental solutions in terms of Real Human-Machine Intelligence, as outlined below.

First, there is the AI philosophy, or rather the lack of any philosophy: today's AI relies blindly on observations and empirical data or statistics, with its processes, algorithms, and inductive inferences needing a large volume of big data as the “fuel” to train models for the special tasks of classification and prediction in very specific cases.

Second, today's AI is not a scientific AI that agrees with the rules, principles, and method of science. It fails to deal with reality, with its causality and mentality, by strictly following a scientific method of inquiry that depends on the reciprocal interaction of generalizations (hypotheses, laws, theories, and models) and observable or experimental data. Most ML models tuned and tweaked to perform best in the lab fail to work in real-world settings across a wide range of AI applications, from image recognition to natural language processing (NLP) to disease prediction, because of data shift, under-specification, or other causes. The process used to build most ML models today cannot tell which models will work in the real world and which ones won’t.
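The data-shift failure mentioned above can be sketched with a toy example. The one-dimensional threshold classifier, the sample readings, and the constant offset of 1.5 standing in for a changed real-world environment are all assumptions chosen for illustration; real data shift is usually subtler.

```python
from statistics import mean


def fit_threshold(xs, ys):
    """Place the decision threshold midway between the two class means."""
    mean0 = mean(x for x, y in zip(xs, ys) if y == 0)
    mean1 = mean(x for x, y in zip(xs, ys) if y == 1)
    return (mean0 + mean1) / 2


def accuracy(threshold, xs, ys):
    """Fraction of samples where 'x above threshold' matches the label."""
    correct = sum((x > threshold) == bool(y) for x, y in zip(xs, ys))
    return correct / len(xs)


# "Lab" data: class 0 clusters around 0, class 1 clusters around 2.
lab_x = [-0.5, 0.0, 0.5, 1.5, 2.0, 2.5]
lab_y = [0, 0, 0, 1, 1, 1]
threshold = fit_threshold(lab_x, lab_y)

# "Field" data: same labels, but every reading is shifted by +1.5
# (a hypothetical sensor offset the model never saw in training).
field_x = [x + 1.5 for x in lab_x]
field_y = lab_y

lab_acc = accuracy(threshold, lab_x, lab_y)
field_acc = accuracy(threshold, field_x, field_y)
print(f"lab accuracy:   {lab_acc:.2f}")    # 1.00
print(f"field accuracy: {field_acc:.2f}")  # 0.67
```

Nothing in the lab evaluation warns that the threshold will misfire once the inputs drift, which is the sense in which the build process cannot tell which models will hold up in the real world.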

Third, there is the extreme anthropomorphism of today's AI/ML/DL: "attributing distinctively human-like feelings, mental states, and behavioral characteristics to inanimate objects, animals, religious figures, the environment, and technological artifacts (from computational artifacts to robots)". Anthropomorphism permeates AI R & TD & D & D, shaping the very language of computer scientists, designers, and programmers, in terms such as "machine learning", which is not human-like learning, "neural networks", which are not biological neural networks, or "artificial intelligence", which is not human-like intelligence. This entails a whole gamut of humanitarian issues, such as AI ethics and morality, responsibility, and trust.

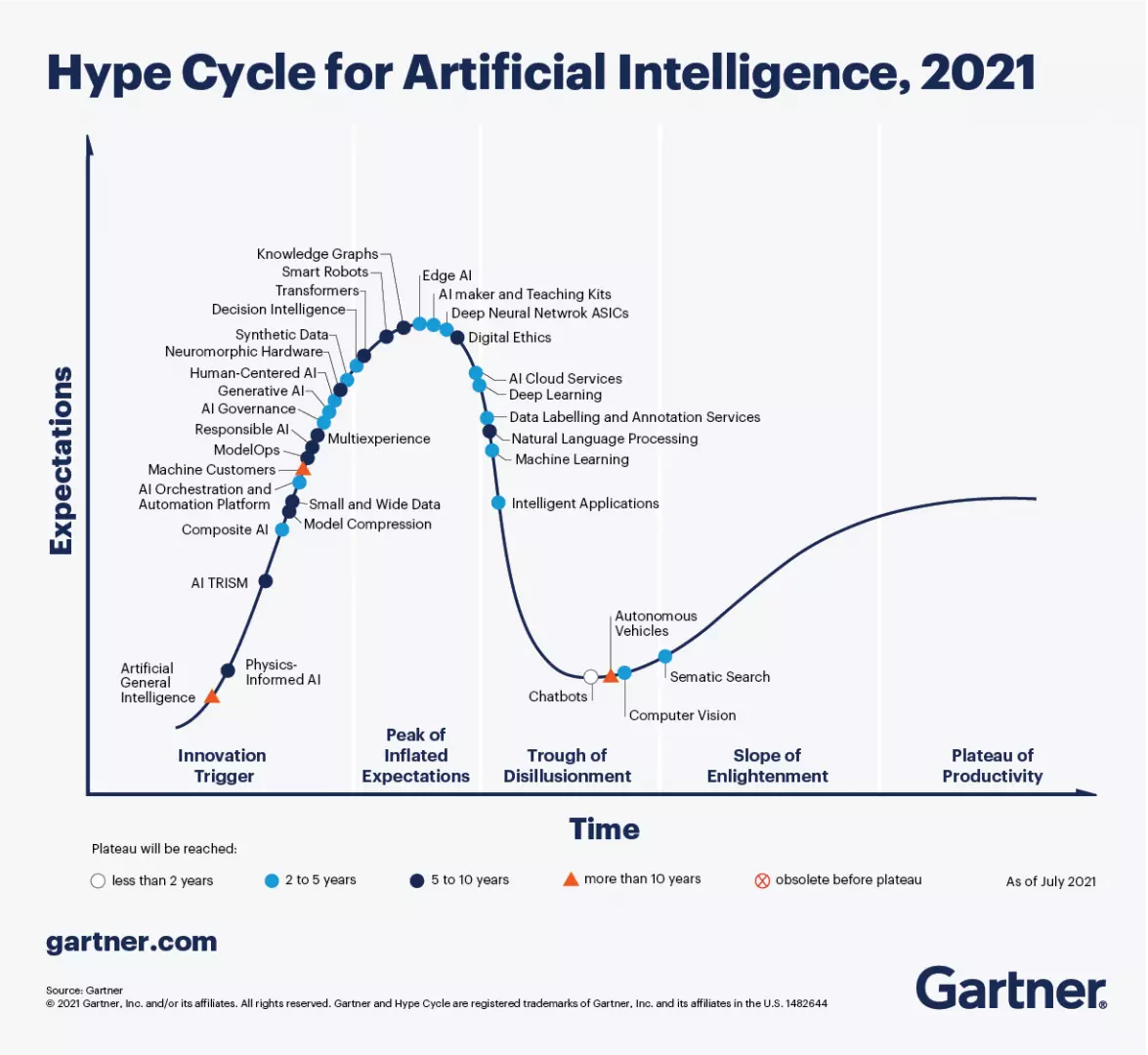

As a result, its trends are chaotic, sporadic and unsystematic, as the Gartner Hype Cycle for Artificial Intelligence 2021 demonstrates.

Source: Gartner

In consequence, there is no common definition of AI, and everyone sees AI in their own way, mostly marked by an extreme anthropomorphism that replaces real machine intelligence (RMI) with artificial human intelligence (AHI).

Source: Econolytics

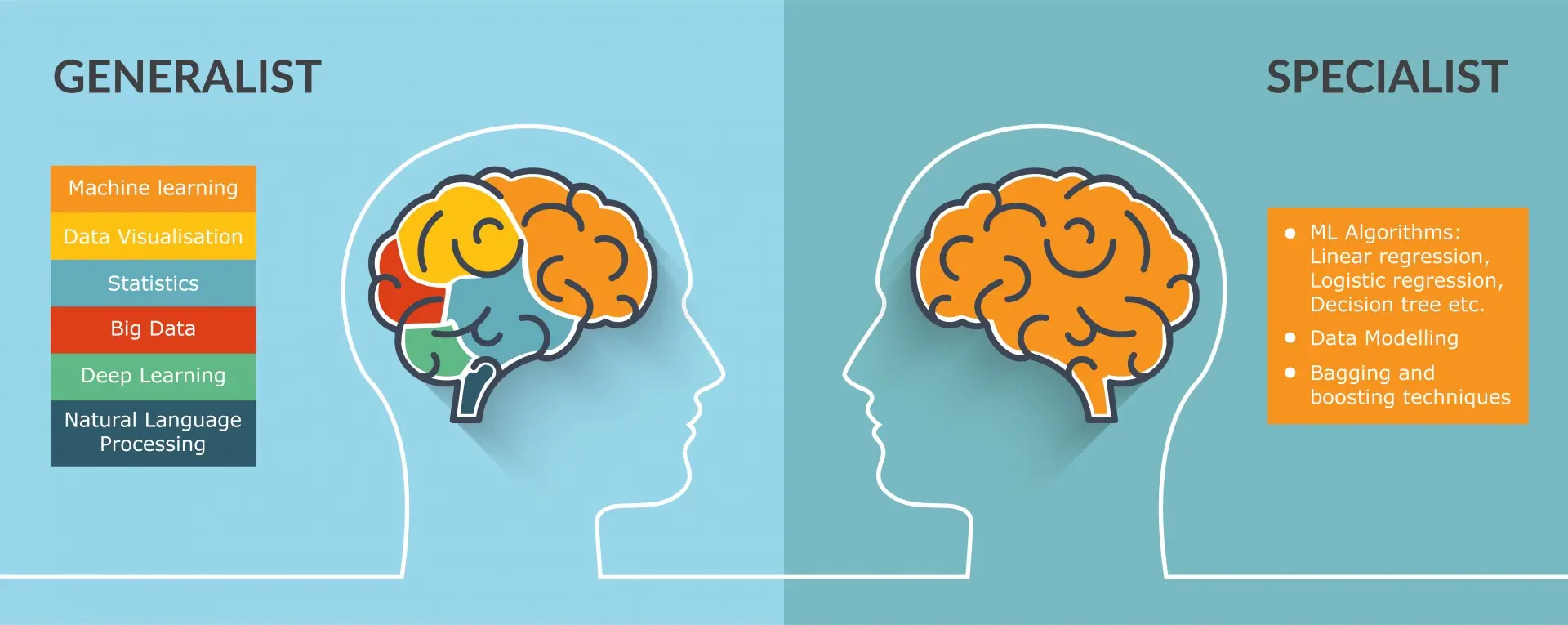

Generally, there are two groups of ML/AI researchers: AI specialists and ML generalists.

Most AI folks (99.999…%) are narrow specialists involved with different aspects of Artificial Human Intelligence (AHI), where AI is about programming human brains/mind/intelligence/behavior in computing machines or robots.

Artificial Human Intelligence (AHI) is sometimes defined as “the ability of a machine to perform cognitive functions we associate with human minds, such as perceiving, reasoning, learning, interacting with the environment, problem solving, and even exercising creativity”.

The EC High-Level Expert Group on artificial intelligence has formulated its own specific behaviorist definition.

“Artificial intelligence (AI) refers to systems that display intelligent behaviour by analysing their environment and taking actions – with some degree of autonomy – to achieve specific goals.”

“Artificial intelligence (AI) refers to systems designed by humans that, given a complex goal, act in the physical or digital world by perceiving their environment, interpreting the collected structured or unstructured data, reasoning on the knowledge derived from this data and deciding the best action(s) to take (according to predefined parameters) to achieve the given goal. AI systems can also be designed to learn to adapt their behaviour by analysing how the environment is affected by their previous actions.”

In all, the AHI is fragmented as in:

Very few MI/AI researchers (the generalists), 0.0001%, know that Real MI is about programming reality models and causal algorithms in computing machines or robots.

The first group lives on the anthropomorphic idea of AHI of ML, DL and NNs, dubbed narrow, weak, strong or general, superhuman or superintelligent AI, or simply Fake AI. Its machine learning models are built on the principle of statistical induction: inferring patterns from specific observations, doing statistical generalization from observations, or acquiring knowledge from experience.

“This inductive approach is useful for building tools for specific tasks on well-defined inputs; analyzing satellite imagery, recommending movies, and detecting cancerous cells, for example. But induction is incapable of the general-purpose knowledge creation exemplified by the human mind. Humans develop general theories about the world, often about things of which we’ve had no direct experience.

Whereas induction implies that you can only know what you observe, many of our best ideas don’t come from experience. Indeed, if they did, we could never solve novel problems, or create novel things. Instead, we explain the inside of stars, bacteria, and electric fields; we create computers, build cities, and change nature — feats of human creativity and explanation, not mere statistical correlation and prediction”.

The second group advances a true and real AI, which programs general theories about the world instead of cognitive functions and human actions. It is dubbed real-world AI, Transdisciplinary AI, or simply Trans-AI.

To summarize the hardest-ever problem: the philosophical and scientific definitions of AI are of two polar types, subjective, human-dependent, and anthropomorphic vs. objective, scientific, and reality-related.

So, we have a critical distinction, AHI vs. Real AI, and should choose and follow the true way.

Today’s narrow AI advances are due to the computing brute force: the rise of big data combined with the emergence of powerful graphics processing units (GPUs) for complex computations and the re-emergence of a decades-old AI computation model—the compute-hungry machine deep learning. Its proponents are now looking for a new equation for future AI innovation, that includes the advent of small data, more efficient deep learning models, deep reasoning, new AI hardware, such as neuromorphic chips or quantum computers, and progress toward unsupervised self-learning and transfer learning.

Ultimately, researchers hope to create future AI systems that do more than mimic human thought patterns like reasoning and perception—they see it performing an entirely new type of thinking. While this might not happen in the very next wave of AI innovation, it’s in the sights of AI thought leaders.

Considering the existential value of AI Science and Technology, we must be absolutely honest and perfectly fair here.

Today’s AI is hardly a real and true AI if all it does is automate statistical generalization from observations, with data pattern matching, statistical correlations, and interpolations (predictions), as AI4EU is promoting.

“Today’s AI is narrow. Applying trained models to new challenges requires an immense amount of new data training, and time. We need AI that combines different forms of knowledge, unpacks causal relationships, and learns new things on its own”.

Such a defective AI can only compute what it observes in the training data it is fed, for very special tasks on well-defined inputs: blind text translation, analyzing satellite imagery, recommending movies, or detecting cancerous cells, for example. By its very design, it is incapable of general-purpose knowledge creation, which is where the beauty of intelligence lies.

Their machine learning models are built on the principle of induction: inferring patterns from specific observations or acquiring knowledge from experience, focused on “big-data” — the more observations, the better the model. They have to feed their statistical algorithm millions of labelled pictures of cats, or millions of games of chess to reach the best prediction accuracy.
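The inductive recipe described above can be illustrated with a toy sketch: a model that generalizes only from what it observed. The quadratic `true_law`, the five observations, and the straight-line model are assumptions picked for simplicity; the point is only that a purely statistical fit carries no theory of the law behind the data.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def true_law(x):
    """Hypothetical underlying law that the model never gets to 'know'."""
    return x ** 2


# The model only ever sees observations in the range 0..4.
observed_x = [0, 1, 2, 3, 4]
observed_y = [true_law(x) for x in observed_x]
slope, intercept = fit_line(observed_x, observed_y)


def predict(x):
    return slope * x + intercept


# Errors stay small inside the observed range but grow far outside it.
worst_seen_error = max(abs(predict(x) - true_law(x)) for x in observed_x)
unseen_error = abs(predict(10) - true_law(10))
print(worst_seen_error)  # 2.0
print(unseen_error)      # 62.0
```

Adding more observations from the same range would tighten the fit there, but it would not reveal the quadratic law needed to predict well at `x = 10`.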

As the article, The False Philosophy Plaguing AI, wisely noted:

“In fact, most of science involves the search for theories which explain the observed by the unobserved. We explain apples falling with gravitational fields, mountains with continental drift, disease transmission with germs. Meanwhile, current AI systems are constrained by what they observe, entirely unable to theorize about the unknown”.

Again, no big data can lead you to a general principle, law, theory, or fundamental knowledge. That is the damnation of induction, be it mathematical or logical or experimental.

Due to the lack of a deep conceptual foundation, today’s AI is closely associated with its supposed logical consequences: “AI will automate entirety and remove people out of work”, “AI is totally a science-fiction based technology”, or “Robots will command the world”. It is misrepresented as the top five myths about Artificial Intelligence:

That means we need the true, real and scientific AI, not AHI, as the Real-World Machine Intelligence and Learning, or the Trans-AI, simulating and modeling reality, physical, mental, or virtual, with its causality and mentality, as reflected in the real superintelligence (RSI).

Last but not least, this transdisciplinary technology is what S. Hawking called effective and human-friendly AI, and what Google’s co-founder Larry Page is dreaming about: “AI would be the ultimate version of Google. The ultimate search engine would understand everything on the web. It would understand exactly what you wanted, and it would give you the right thing.”

Our approach to artificial intelligence is fundamentally wrong because we are not training and developing a skilled workforce capable of handling AI. We’ve thought about AI the wrong way by focusing on algorithms instead of finding solutions to make AI better and unbiased.

Artificial intelligence has to be optimized based on human preferences so that it solves real problems. Apple is currently leading the race, but it's a very competitive battle. American and Chinese tech companies are ahead of European tech companies when it comes to artificial intelligence.

A lot of work will need to be done to avoid the negative consequences of artificial intelligence, especially with the advent of artificial superintelligence. The sooner we begin regulating artificial intelligence, the better equipped we will be to mitigate and manage the dark side of artificial intelligence.

Transdisciplinary artificial intelligence, as a responsible global man-machine intelligence, has every potential to help solve several problems related to AI and consequently improve the lives of billions.
