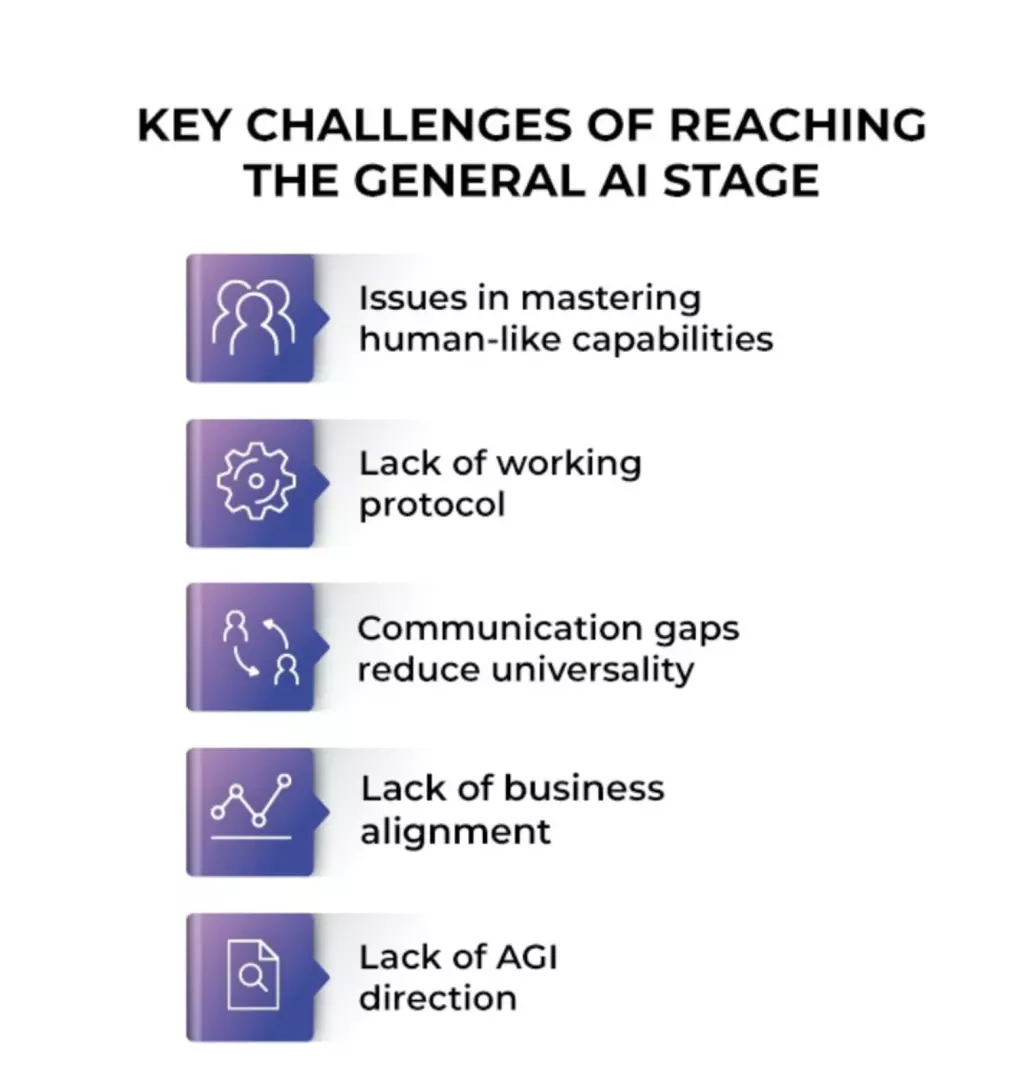

As of today, we are still far away from artificial general intelligence.

AGI remains speculative, as no system has yet demonstrated it. However, there are some positive signs: artificial intelligence already plays a crucial role in the military, healthcare, cybersecurity, automation and self-driving cars.

Today’s artificial intelligence models are narrow. They typically fail if applied outside the domain for which they were trained.
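The point about narrow models can be made concrete with a toy sketch. It is an illustration only, not taken from any real AI system: the `target` function, the straight-line model and the input ranges are all assumptions chosen for simplicity. A line is fitted to data from a narrow input range and then evaluated both inside and far outside that range.

```python
# Toy illustration (hypothetical, not from the article): a model fitted on a
# narrow input range looks accurate there and breaks down outside it.

def target(x):
    """Assumed 'true' relationship that the model tries to learn."""
    return x * x

def fit_line(xs, ys):
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    return slope, mean_y - slope * mean_x

def mean_abs_error(model, xs):
    """Average absolute gap between the fitted line and the target."""
    slope, intercept = model
    return sum(abs(slope * x + intercept - target(x)) for x in xs) / len(xs)

# Training data covers only the interval 0.0 .. 1.0.
train_xs = [i / 10 for i in range(11)]
model = fit_line(train_xs, [target(x) for x in train_xs])

in_domain = [i / 10 + 0.05 for i in range(10)]   # unseen points inside 0 .. 1
out_of_domain = [3 + i / 10 for i in range(11)]  # points in 3 .. 4, never seen

in_error = mean_abs_error(model, in_domain)
out_error = mean_abs_error(model, out_of_domain)
print(f"in-domain error: {in_error:.3f}")
print(f"out-of-domain error: {out_error:.3f}")
```

The fitted line tracks the target closely on inputs that resemble the training data, while the error grows by well over an order of magnitude once the inputs leave that range. Real models are far more complex, but the failure pattern is similar in kind.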

Artificial general intelligence (AGI) is still a distant prospect; however, businesses can already benefit from improved machine reasoning.

Recent advances in artificial intelligence (AI) are improving many aspects of our lives.

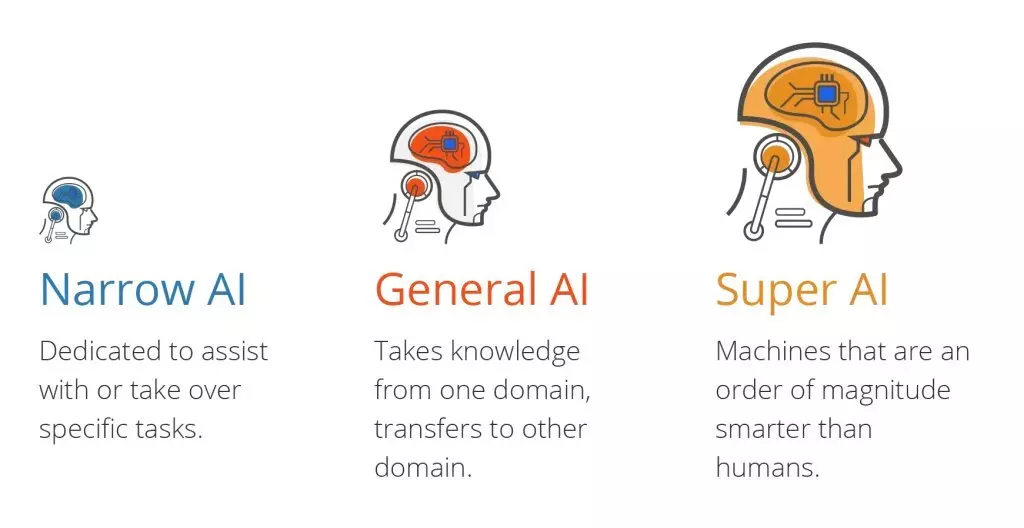

There are three types of artificial intelligence:

Artificial narrow intelligence (ANI), which has a narrow range of abilities.

Artificial general intelligence (AGI), which is on par with human capabilities.

Artificial superintelligence (ASI), which is more capable than a human.

An Artificial General Intelligence (AGI) would be a machine capable of understanding the world as well as any human, and with the same capacity to learn how to carry out a huge range of tasks.

AGI doesn't exist yet, but has featured in science-fiction stories for more than a century, and has been popularized in modern times by films such as 2001: A Space Odyssey.

“When I read about AI, 80% of the time it’s just flat-out wrong information.”

Professor Stuart Russell of the University of California, Berkeley, co-author of the textbook Artificial Intelligence: A Modern Approach.

In reality, it is more like “When I hear or read about AI, 99.9999% of the time it’s just flat wrong information”. This misunderstanding of what AI really is has largely enabled the emergence of fake AI: a subjective, non-scientific notion of human-like, human-level AI.

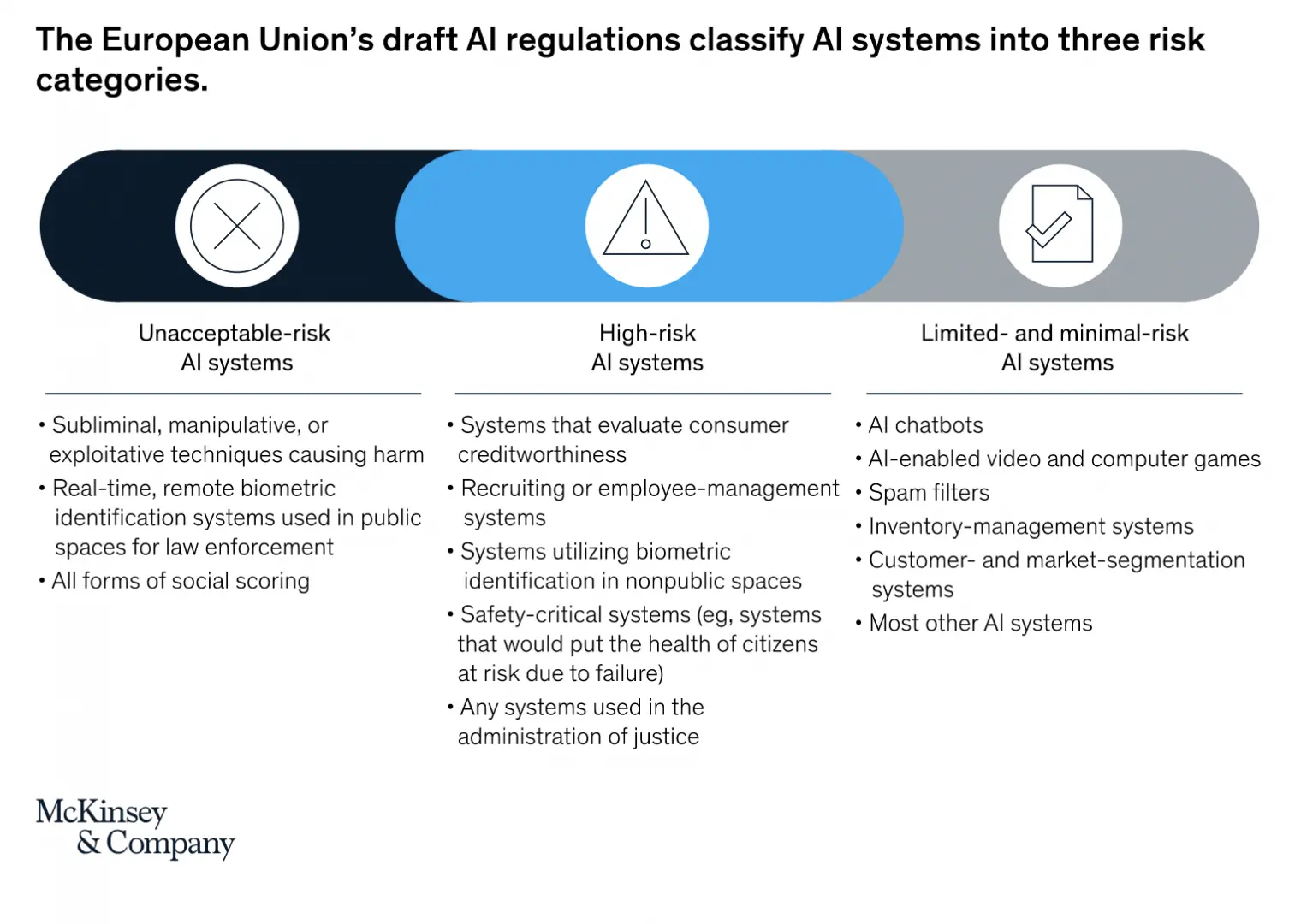

No legislation currently exists that is specifically designed to regulate artificial general intelligence. Current AI systems fall under existing regulations, including data protection, consumer protection and market competition laws. Bills have also been passed to regulate certain specific AI systems.

According to McKinsey, regulators sort AI systems into three risk categories: unacceptable-risk AI systems, high-risk AI systems, and limited- and minimal-risk AI systems.

There are two broad approaches to AGI: a human-centric model based on human intelligence, and a transdisciplinary model that combines reality, causality, data, mentality and computation. Transdisciplinarity transcends traditional boundaries to integrate the philosophical, mathematical, natural, cognitive, social, engineering and computer sciences in a man-machine intelligence context.

A transdisciplinary thinking skill set and mindset include observing, patterning, abstracting, embodied thinking, modelling, play and synthesizing, as well as comprehension, application, analysis, synthesis, dialectical thought, and metacognition.

The most wicked problems identified in the United Nations' 17 Sustainable Development Goals, from ending poverty to tackling climate change, are so complex and formally unsolvable that they must be approached collaboratively, through multiple lenses and with attention to all their intersections and relationships. In short, they call for developing a transdisciplinary man-machine hyperintelligence.

Such a transdisciplinary approach can provide an integrated, systematic, comprehensive intelligence framework for defining, analysing and understanding the scientific and nonscientific (social, economic, political, environmental, and institutional) factors involved in reaching artificial general intelligence in the future.

A machine cannot be taught what is fair unless the engineers designing the AI system have a precise conception of what fairness is. Deviant behavior in AGI will need to be addressed. To build AI systems that robustly behave well, we first need to decide what 'good behavior' means in each application domain.

AGI has a dark side: it could empower surveillance and the control of populations, entrench power in the hands of a small group of organizations, underpin fearsome weapons, and remove the need for governments to look after an obsolete populace.

While AGI may not be imminent, substantial advances may be possible in the coming years.

Artificial general intelligence (AGI) has the potential to improve upon itself at an exponential rate, rapidly evolving to the point where its intelligence operates at a level beyond human comprehension.

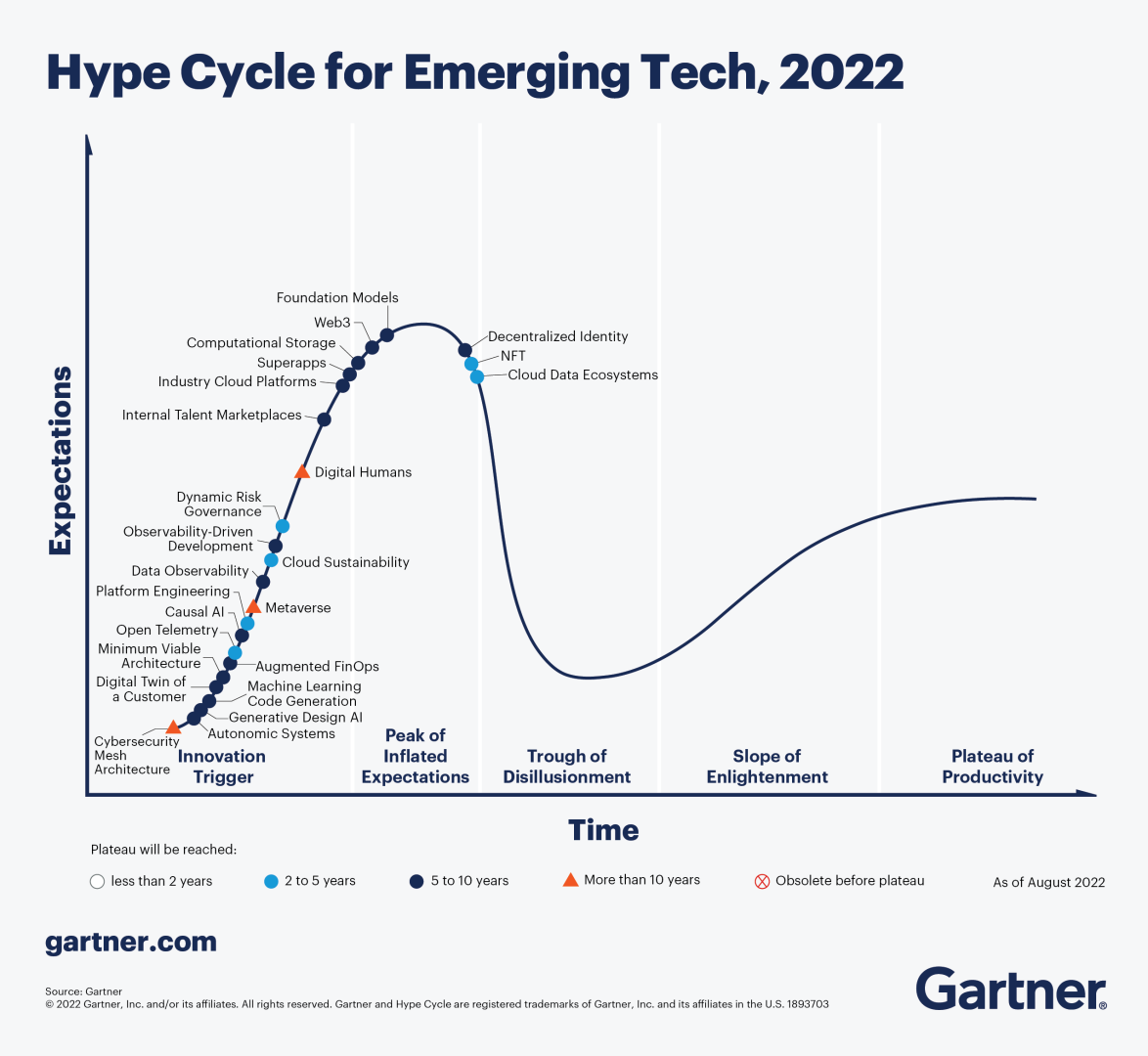

According to Gartner, several technologies, such as machine learning, are at an early stage, while others, including artificial general intelligence (AGI), are still embryonic, and great uncertainty exists about how they will evolve.

While machines may exhibit stellar performance on a certain task, performance may degrade dramatically if the task or patterns are slightly modified. AI systems are still far away from developing human-like sensory-perception capabilities.
