
The development of counterfeit AI is a real concern because it is often created by humans with their own implicit biases and limited perspectives.

Counterfeit AI (CAI), which imitates human intelligence, behavior, and tasks, is a serious economic threat to industries, the workforce, and the economy as a whole.

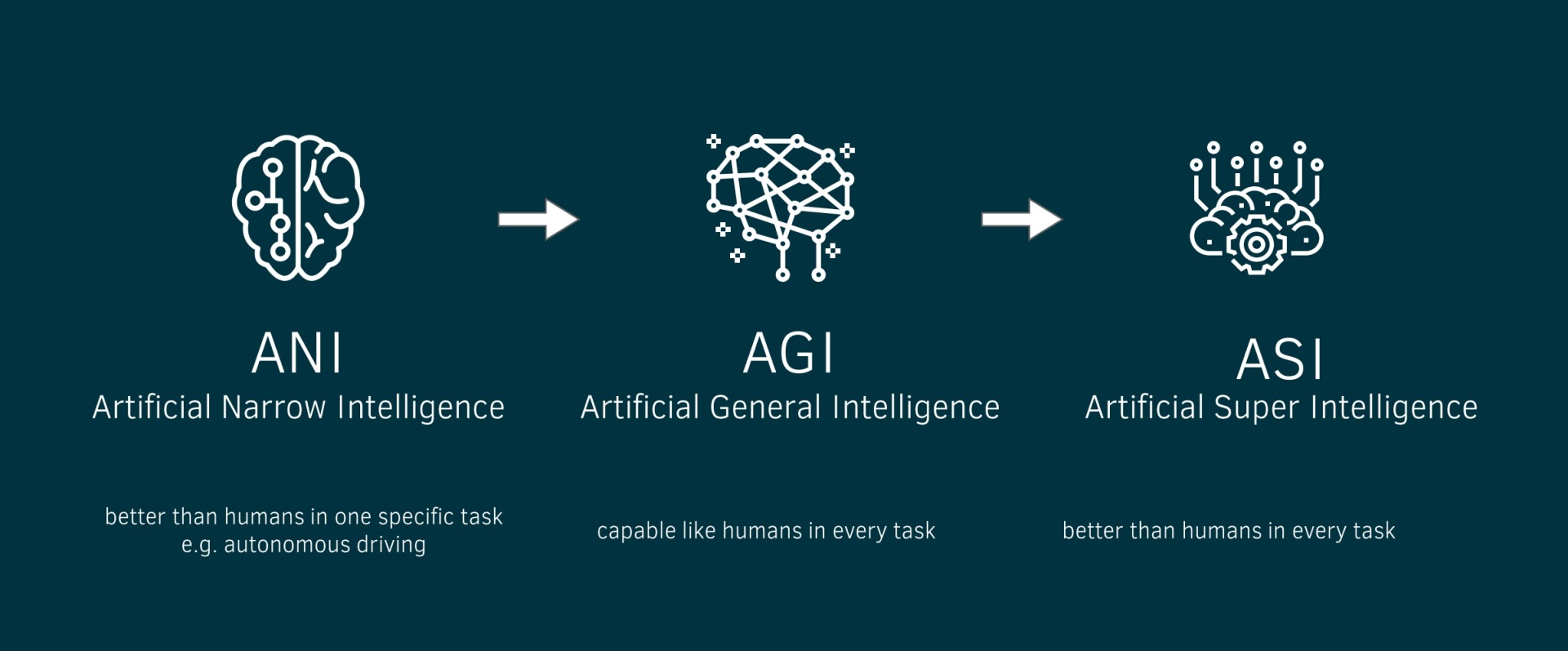

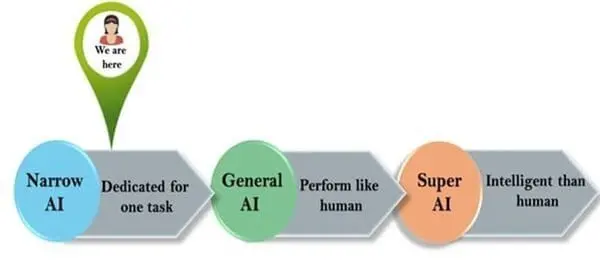

Artificial intelligence (AI) is commonly classified into three main types:

- Artificial narrow intelligence (ANI), which has a narrow range of abilities.
- Artificial general intelligence (AGI), which is on par with human capabilities.
- Artificial superintelligence (ASI), which is more capable than a human.

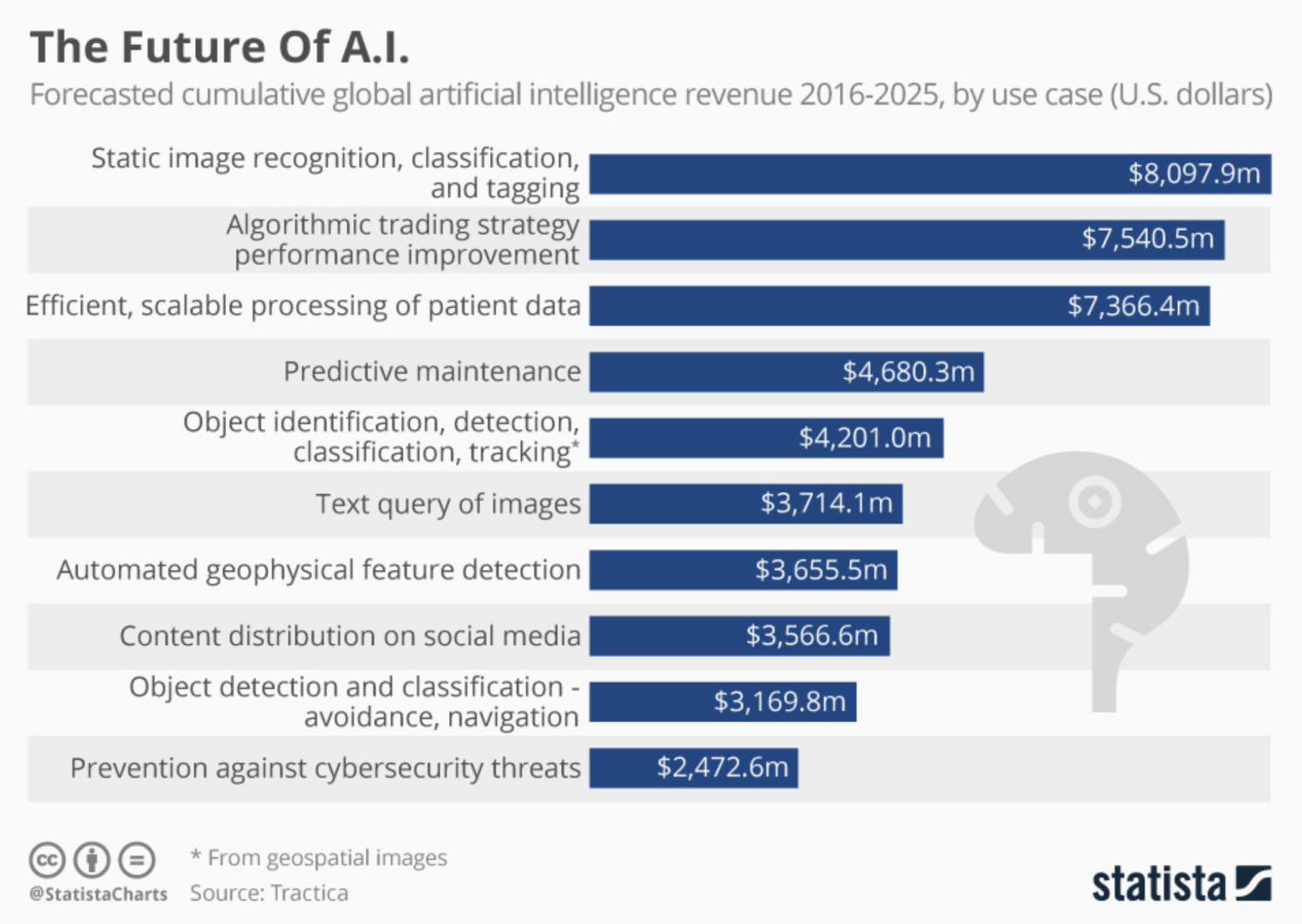

One of the primary concerns about large language model (LLM) systems is their potential impact on the job market. Because the content they generate is so impressive, these systems are now being described as having "human-competitive intelligence," which could lead to workers being replaced by LLM systems in a wide range of professions, including art, writing, programming, and finance.

A recent study by OpenAI, OpenResearch, and the University of Pennsylvania explored this issue by comparing GPT-4's capabilities with job requirements. The study found that roughly 20% of the U.S. workforce may have at least 50% of their tasks affected by LLMs such as GPT-4, with higher-income jobs facing greater exposure.

Counterfeit AI refers to the imitation of the human brain, intelligence, or behavior. It is a fraudulent imitation that can deceive people into believing that the fake is of equal or greater value than the real thing. This is particularly concerning for industries that rely on AI, such as healthcare and finance, where the use of counterfeit AI could have disastrous consequences for individuals and for the economy as a whole.

To combat the threat of counterfeit AI, genuine AI-based detection systems are needed. Such systems would allow the general public to identify counterfeit machine learning (ML) applications, neural network (NN) products, and deep learning (DL) services. The goal is to stop any counterfeit AI that could lead to the theft or destruction of authentic human intelligence, behavior, and tasks.

There have already been calls for a temporary pause in AI research. However, a pause is not enough to address counterfeit AI, because it does nothing about the counterfeit AI that already exists. What is needed is a real and lasting way to detect counterfeit AI and prevent it from being used in society.

Not all AI is bad. In fact, a good AI with the right language model structures in place can be incredibly useful to society: it can improve healthcare outcomes, detect fraud, and automate manual tasks. Biased AI, however, can have serious consequences, which is why real AI-based detection systems are needed to identify counterfeit AI and prevent it from being used.

GPT-4 has already shown strong ability in the legal field: according to OpenAI, it passed a simulated bar exam with a score around the top 10% of test takers. PricewaterhouseCoopers (PwC) intends to give its employees a legal chatbot powered by OpenAI technology.

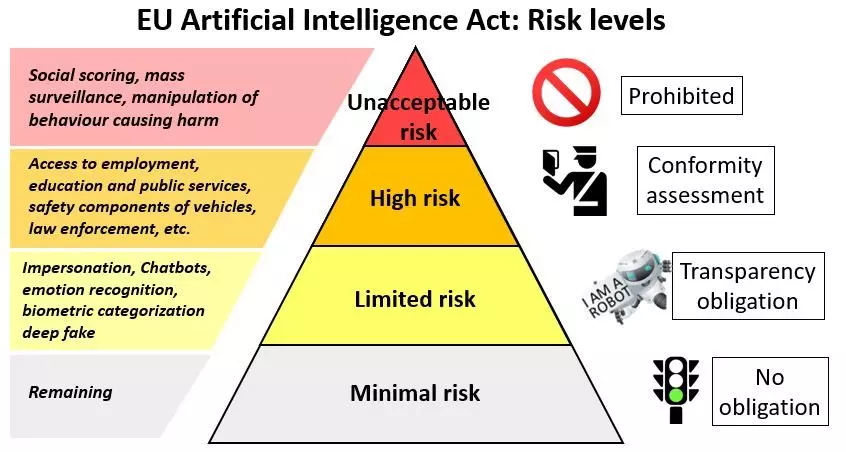

Counterfeit AI poses a serious threat to society, and action is needed to prevent its use. Real AI-based detection systems are required to identify counterfeit machine learning applications, neural network products, and deep learning services. Regulating artificial intelligence can be very useful, but it is equally important to ensure that the right language model structures are in place to prevent biased AI created by humans. The stakes are high, and it's time to take the threat of counterfeit AI seriously.

Trustworthy AI should respect all applicable laws and regulations and meet a set of key requirements; specific assessment lists are intended to help verify that each requirement is met:

- Human agency and oversight: AI systems should enable equitable societies by supporting human agency and fundamental rights, and should not decrease, limit, or misguide human autonomy.
- Robustness and safety: Trustworthy AI requires algorithms to be secure, reliable, and robust enough to deal with errors or inconsistencies during all life cycle phases of AI systems.
- Privacy and data governance: Citizens should have full control over their own data, and data concerning them should not be used to harm or discriminate against them.
- Transparency: The traceability of AI systems should be ensured.
- Diversity, non-discrimination and fairness: AI systems should consider the whole range of human abilities, skills, and requirements, and ensure accessibility.
- Societal and environmental well-being: AI systems should be used to drive positive social change and to enhance sustainability and ecological responsibility.
- Accountability: Mechanisms should be put in place to ensure responsibility and accountability for AI systems and their outcomes.
