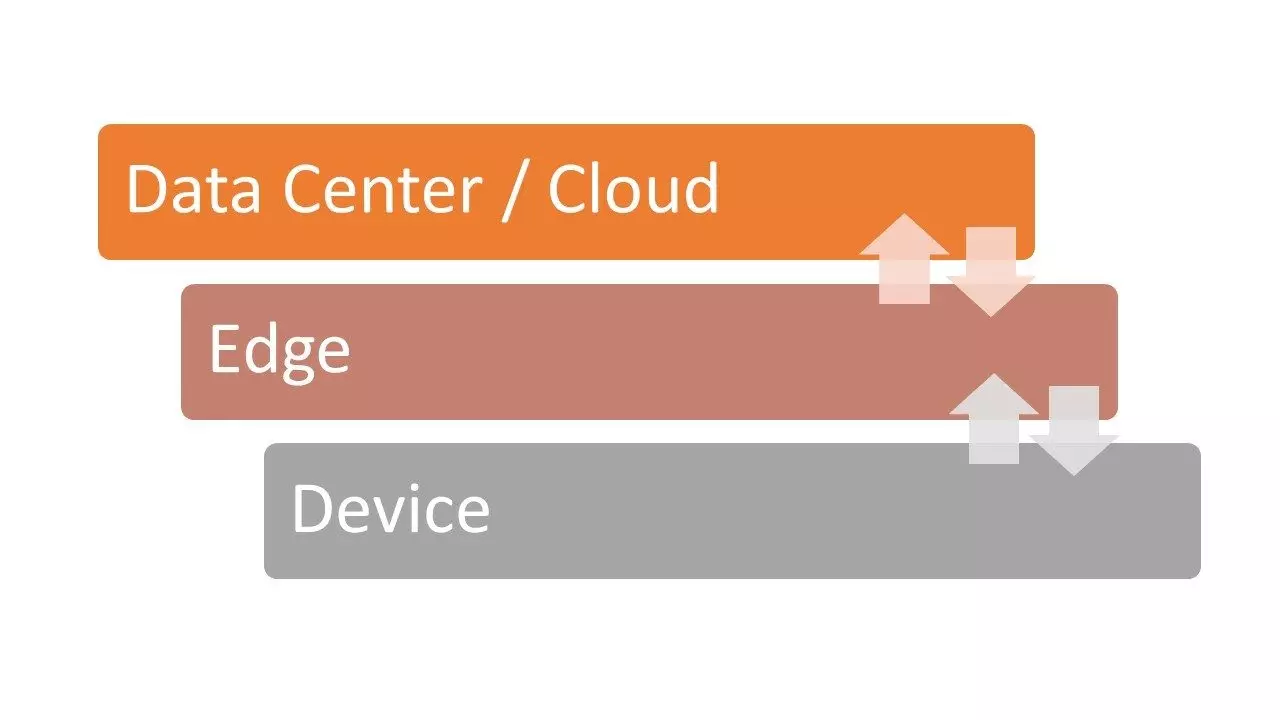

Edge computing is a model in which data, processing and applications are concentrated in devices at the network edge rather than existing almost entirely in the cloud.

Edge computing is a paradigm that extends cloud computing and services to the edge of the network. Similar to the cloud, the edge provides data, compute, storage, and application services to end-users.

Edge computing reduces service latency and improves QoS (Quality of Service), resulting in a better user experience. It supports emerging metaverse applications that demand real-time or predictable latency (industrial automation, transportation, networks of sensors and actuators). The Edge computing paradigm is also well positioned for real-time big data and real-time analytics: it supports densely distributed data collection points, adding a fourth axis, distribution, to the often-mentioned big data dimensions (volume, variety, and velocity).

Unlike traditional data centers, Edge devices are geographically distributed over heterogeneous platforms, spanning multiple management domains. That means data can be processed locally in smart devices rather than being sent to the cloud for processing.

Edge Computing Services Cover

Advantages of Edge computing

Benefits of Edge Computing

Real-Life Example

A traffic light system in a major city is equipped with smart sensors. It is the day after the local team won a championship game and it’s the morning of the day of the big parade. A surge of traffic into the city is expected as revelers come to celebrate their team’s win. As the traffic builds, data is collected from individual traffic lights. The application developed by the city to adjust light patterns and timing is running on each edge device. The app automatically makes adjustments to light patterns in real time, at the edge, working around traffic impediments as they arise and diminish. Traffic delays are kept to a minimum, and fans spend less time in their cars and have more time to enjoy their big day.
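The local decision in this scenario can be pictured with a minimal sketch. It assumes each traffic light can count the vehicles waiting at its approach; the function name, the parameters, and the timing values are all hypothetical and are not taken from any real traffic-control system.

```python
def green_seconds(queued_vehicles, min_green=10, max_green=60, seconds_per_vehicle=2):
    """Pick a green-phase length from the locally measured queue.

    All names and default values are illustrative assumptions.
    The result is clamped so a phase is never shorter than min_green
    or longer than max_green.
    """
    requested = queued_vehicles * seconds_per_vehicle
    return max(min_green, min(max_green, requested))


# The decision uses only data available on the device, so no round trip
# to the cloud is needed before the light changes.
print(green_seconds(15))
```

Because the calculation depends only on the local sensor reading, the light can keep adapting even when its link to the cloud is slow or unavailable.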

After the parade is over, all the data collected from the traffic light system would be sent up to the cloud and analyzed, supporting predictive analysis and allowing the city to adjust and improve its traffic application’s response to future traffic anomalies. There is little value in sending a live steady stream of everyday traffic sensor data to the cloud for storage and analysis. The civic engineers have a good handle on normal traffic patterns. The relevant data is sensor information that diverges from the norm, such as the data from parade day.
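One way an edge device might decide which readings are worth sending to the cloud is to compare them against a baseline of normal traffic. The sketch below is only an illustration under assumed conditions: the function name, the sample values, and the threshold of three standard deviations are hypothetical choices, not the city's actual method.

```python
from statistics import mean, stdev


def select_anomalies(readings, baseline, threshold=3.0):
    """Return the readings that diverge from the baseline.

    A reading is kept when it lies more than `threshold` sample standard
    deviations from the baseline mean. The threshold is an assumed value.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: anything different is an anomaly.
        return [r for r in readings if r != mu]
    return [r for r in readings if abs(r - mu) / sigma > threshold]


# Hypothetical vehicles-per-minute counts for a normal morning and for parade day.
normal_morning = [40, 42, 38, 41, 39]
parade_day = [41, 39, 95, 40, 120]
to_upload = select_anomalies(parade_day, normal_morning)
```

Only the values in `to_upload` would travel to the cloud; the everyday readings stay on the device.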

Future of Edge Computing

As more services, data and applications are pushed to the end-user, technologists will need to find ways to optimize the delivery process. This means bringing information closer to the end-user, reducing latency and being prepared for the Metaverse and its applications in Web 3.0. More users rely on mobile devices to conduct business and manage their personal lives. Rich content and lots of data points are pushing cloud computing platforms, literally, to the Edge, where the user’s requirements continue to grow.

With the increase in data and cloud services utilization, Edge computing will play a key role in helping reduce latency and improving the user experience. We are now truly distributing the data plane and pushing advanced services to the Edge. By doing so, administrators are able to bring rich content to the user faster, more efficiently, and – very importantly – more economically. This, ultimately, will mean better data access, improved corporate analytics capabilities, and an overall improvement in the end-user computing experience.

Moving the intelligent processing of data to the edge raises the stakes for maintaining the availability of these smart gateways and their communication path to the cloud. When the internet of things (IoT) provides methods that allow people to manage their daily lives, from locking their homes to checking their schedules to cooking their meals, gateway downtime in the Edge computing world becomes a critical issue. Resilience and failover solutions that safeguard those processes will become even more essential. Generally speaking, we are moving away from the current strained, centralized system that defines the Internet infrastructure and toward a localized, distributed model.
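A common resilience pattern for a gateway with an unreliable cloud link is store-and-forward: keep messages locally while the link is down and deliver them in order once it returns. The sketch below is a simplified illustration, not a production design. The class name, the capacity, and the `send` callback (assumed to return `True` on successful delivery) are hypothetical.

```python
from collections import deque


class StoreAndForwardBuffer:
    """Hold outbound messages on the gateway until the cloud link accepts them."""

    def __init__(self, capacity=1000):
        # When the buffer is full, the oldest message is dropped (assumed policy).
        self._pending = deque(maxlen=capacity)

    def enqueue(self, message):
        self._pending.append(message)

    def flush(self, send):
        """Deliver messages oldest-first; stop at the first failure.

        `send` is a caller-supplied function that returns True on success.
        Returns the number of messages delivered.
        """
        delivered = 0
        while self._pending:
            if not send(self._pending[0]):
                break
            self._pending.popleft()
            delivered += 1
        return delivered

    def __len__(self):
        return len(self._pending)
```

A message is removed only after `send` reports success, so a failed upload leaves the queue intact for the next attempt. Dropping the oldest message when the buffer is full is one possible policy; a gateway handling critical events might instead persist messages to disk.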

Ahmed Banafa is an expert in new tech with appearances on ABC, NBC, CBS, FOX TV and radio stations. He served as a professor, academic advisor and coordinator at well-known American universities and colleges. His research has been featured in Forbes, MIT Technology Review, ComputerWorld and Techonomy. He has published over 100 articles about the internet of things, blockchain, artificial intelligence, cloud computing and big data. His research papers are used in many patents, numerous theses and conferences. He is also a guest speaker at international technology conferences. He is the recipient of several awards, including the Distinguished Tenured Staff Award, Instructor of the Year and a Certificate of Honor from the City and County of San Francisco. Ahmed studied cyber security at Harvard University. He is the author of the book Secure and Smart Internet of Things Using Blockchain and AI.
