The sheer potential of facial recognition technology in various fields is almost unimaginable.

However, certain errors that commonly creep into its functionality and a few ethical considerations need to be addressed before its most elaborate applications can be realized.

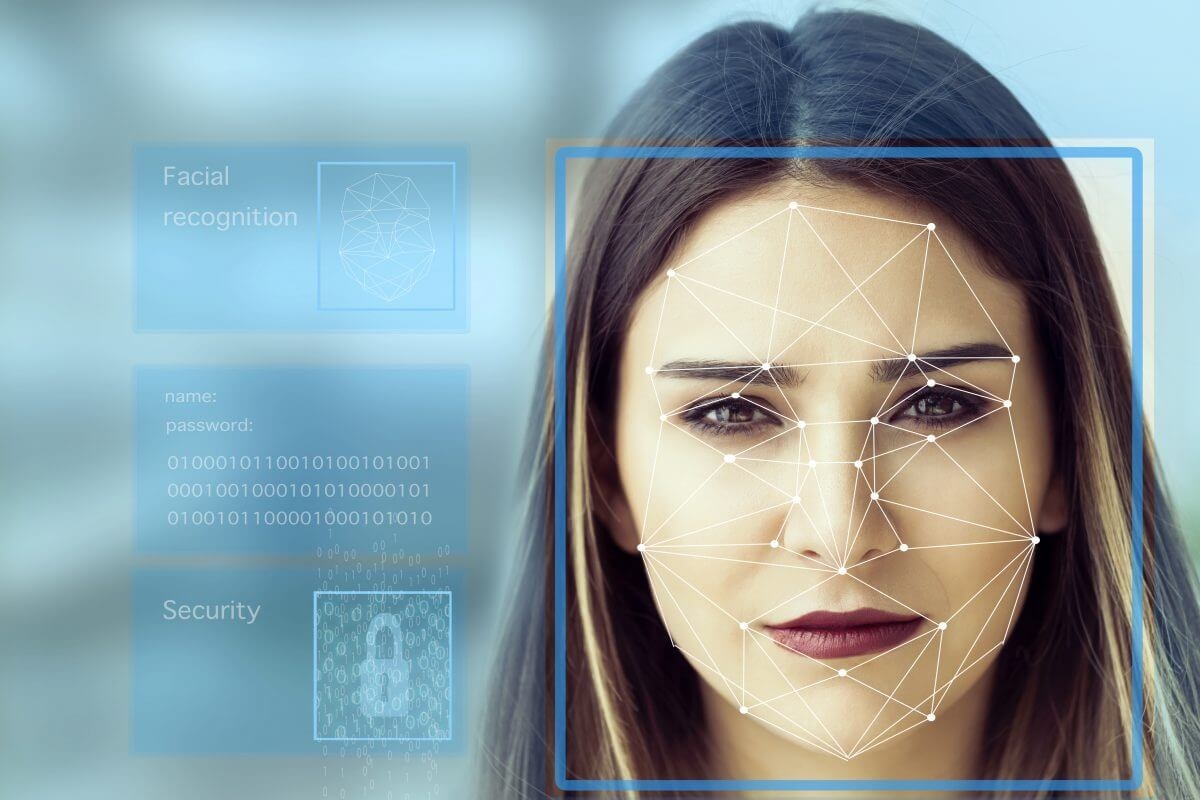

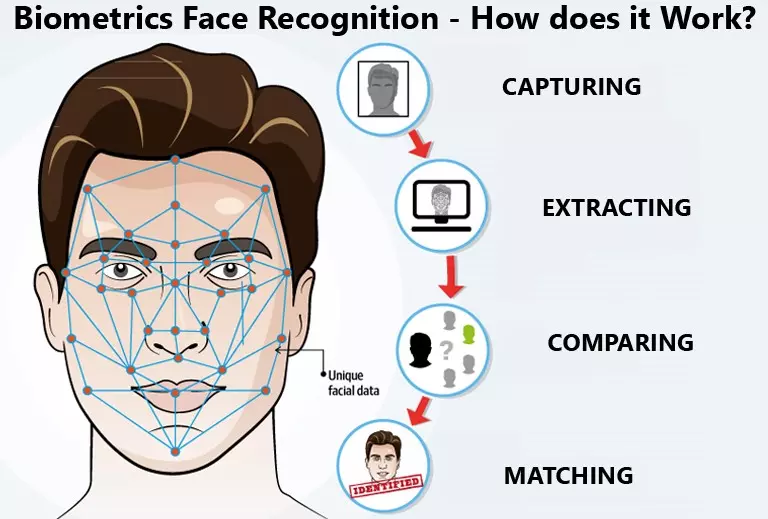

An accurate facial recognition system uses biometrics to map facial features from a photograph or video. It compares the information with a database of known faces to find a match. Facial recognition can help verify a person's identity, but it also raises privacy issues.

A few decades ago, few could have predicted that facial recognition would become a near-indispensable part of our lives. From unlocking your smartphone to making a digital transaction for an online (or offline) purchase, the technology is well and truly ingrained in daily life today. An application of AI’s computer vision and machine learning components, facial recognition systems work in the following way: trained algorithms measure the distinctive details of a person’s face, such as the number of pixels between their eyes or the curvature of their lips, and combine them into a mathematical representation of the face within the system. This representation is then compared with a wide array of faces stored in the system database. If the algorithms detect that it mathematically matches a face present in the database, the system ‘recognizes’ it and carries out the user’s task.
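As a rough illustration of the comparison step described above, here is a minimal Python sketch. It assumes each face has already been reduced to a numeric feature vector by some trained model (not shown). The function names, the made-up four-number vectors and the 0.9 threshold are illustrative assumptions, not details of any particular product.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an all-zero vector carries no information to compare
    return dot / (norm_a * norm_b)

def match_face(probe, database, threshold=0.9):
    """Return (name, score) for the best match, or (None, score) if below threshold."""
    best_name, best_score = None, -1.0
    for name, stored in database.items():
        score = cosine_similarity(probe, stored)
        if score > best_score:
            best_name, best_score = name, score
    if best_score >= threshold:
        return best_name, best_score
    return None, best_score

# Hypothetical enrolled faces (invented vectors, for illustration only)
database = {
    "alice": [0.9, 0.1, 0.3, 0.5],
    "bob": [0.2, 0.8, 0.6, 0.1],
}
probe = [0.88, 0.12, 0.31, 0.48]   # a new capture that resembles "alice"
name, score = match_face(probe, database)

stranger = [0.0, 0.0, 1.0, 0.0]    # resembles nobody in the database
unknown_name, unknown_score = match_face(stranger, database)
```

The threshold is the key design choice in a sketch like this: set it lower and more genuine users are recognized, but so are more look-alikes; set it higher and the reverse happens.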

Apart from executing this entire exercise in a fraction of a second, today’s facial recognition systems can do their job competently even with poor lighting, low image resolution, and awkward viewing angles.

Like other AI-powered technologies, facial recognition systems need to follow a few ethical principles while being used for various purposes. These principles include:

Firstly, a facial recognition system must be developed so that it prevents, or at least minimizes, bias against any person or group based on race, gender, facial features, deformities or other attributes. It is well documented that facial recognition systems cannot be 100% fair in their operations. Companies that build these systems therefore generally spend hundreds of hours identifying and reducing the bias found in them.

Reputable organizations such as Microsoft generally employ qualified experts from as many ethnic communities as possible. During the research, development, testing, and design phases of their facial recognition systems, this diversity helps them create massive datasets to train the AI data models. While the huge datasets reduce bias, the diversity is symbolic too: selecting individuals from all over the world reflects the diversity found in the real world.

Organizations must go the extra mile to remove bias from facial recognition systems. To achieve this, the datasets used for machine learning and labeling must be diversified. Above all, a fair facial recognition system delivers higher-quality output, because it works consistently in any part of the world without an element of bias.
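One simple way to check whether a training dataset is diversified is to measure how much of it each group accounts for. The Python sketch below is only an illustration under stated assumptions: the group labels, the sample counts and the 15% minimum share are invented, and a real audit would need carefully defined categories and far more than a head count.

```python
from collections import Counter

def group_shares(labels):
    """Fraction of the dataset that each demographic group accounts for."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {group: count / total for group, count in counts.items()}

def underrepresented(labels, min_share=0.15):
    """Groups whose share falls below min_share, sorted for stable output."""
    shares = group_shares(labels)
    return sorted(group for group, share in shares.items() if share < min_share)

# Hypothetical per-image group labels for a 100-image training set
labels = ["group_a"] * 70 + ["group_b"] * 20 + ["group_c"] * 10
flagged = underrepresented(labels)   # groups that need more training images
```

A check like this only shows where data is thin. Whether the trained system then performs equally well for every group still has to be measured separately.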

To ensure fairness in a facial recognition system, developers can also involve end customers during the beta testing phase. Testing the competence of such a system in a real-world scenario will only enhance the quality of its functionality.

Organizations that incorporate facial recognition systems in their workplaces and cybersecurity systems need to have all the details about where the machine learning information is stored. Such organizations need to understand the limitations and capabilities of the technology before implementing it in their daily operations. The company which provides AI-based technology must be completely transparent with their clients regarding these details. Additionally, the service provider must also ensure that their facial recognition system can be used by customers from any location based on their convenience. Any updates in the system must be made only after receiving valid approval from the client.

As specified earlier, facial recognition systems are deployed in several sectors. Organizations that manufacture such systems must be accountable for them, especially where the technology could directly affect a person or group (for example, in law enforcement or surveillance). Accountability here means building in safeguards against physical or health-related harm, financial embezzlement or other problems the system may cause. To bring an element of control into the process, organizations put a qualified individual in charge of the system to make measured and logical decisions. In addition, organizations that use facial recognition systems in their daily operations must resolve customer grievances related to the technology promptly.
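The idea of keeping a qualified person in control can be sketched as a routing rule: the system acts on its own only when it is very sure, and passes everything else to a human. The Python below is a hypothetical illustration; the function name, the two cut-off scores and the `high_impact` flag are assumptions made for this example.

```python
def route_decision(score, high_impact=False, accept_at=0.95, reject_below=0.60):
    """Decide who handles a match with the given similarity score (0.0 to 1.0).

    high_impact marks uses such as law enforcement, where a possible match
    is never acted on automatically and always goes to a reviewer.
    """
    if score < reject_below:
        return "auto_reject"      # clearly not a match
    if high_impact:
        return "human_review"     # any possible match needs a person to decide
    if score >= accept_at:
        return "auto_accept"      # confident match in a low-stakes setting
    return "human_review"         # uncertain middle band

phone_unlock = route_decision(0.97)                    # low stakes, confident
police_lookup = route_decision(0.97, high_impact=True) # same score, high stakes
borderline = route_decision(0.70)
```

Logging every routed decision together with the reviewer's final call would also give the organization a record to draw on when resolving customer grievances.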

Under normal circumstances, a facial recognition system must not be used to snoop on individuals or groups without their consent. Certain bodies, such as the European Union (EU), have a standardized set of laws (the GDPR) that prevent unauthorized organizations from monitoring individuals within their jurisdiction. Organizations possessing such systems must comply with all applicable data protection and privacy laws.

Unless authorized by a national government or other decisive governing body for purposes related to national security or other high-profile situations, an organization cannot use a facial recognition system to monitor any person or group. In short, the technology must never be used to violate anyone's human rights and freedoms.

Despite being programmed to follow these regulations without any exception, facial recognition systems can cause problems due to errors in their operations. Some of the main problems related to the technology are:

As specified earlier, facial recognition systems are built into digital payment applications so that users can verify transactions with their face. Customers opt for this because of the sheer convenience it offers. However, using the technology for payments also makes criminal activities such as facial identity theft and debit card fraud possible. One error that can occur is identical twins using such systems to make unauthorized payments from each other's bank accounts. Worryingly, near-identical faces can enable financial embezzlement despite the security protocols present in facial recognition systems.

Facial recognition systems are used to identify criminals out in the open before capturing them. While the technology is undeniably useful as a concept in law enforcement, there are some glaring issues in how it works, and there are a few ways in which criminals can abuse it. Bias is one such weakness: a biased system gives law enforcement officers inaccurate results because, on occasion, it cannot distinguish between men of color. Generally, such systems are trained on datasets dominated by images of white men. As a result, they are error-prone when identifying people of other ethnicities.
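This kind of bias can be made visible by counting, group by group, how often the system wrongly declares two different people to be the same person. The Python sketch below assumes a set of labeled test comparisons already exists; the record layout, the group names and the numbers are invented for illustration and do not come from any real evaluation.

```python
from collections import defaultdict

def false_match_rate_by_group(results):
    """Share of different-person comparisons wrongly reported as a match, per group.

    Each record is (group, same_person, predicted_match).
    """
    impostor_trials = defaultdict(int)
    false_matches = defaultdict(int)
    for group, same_person, predicted_match in results:
        if not same_person:                 # only different-person pairs count here
            impostor_trials[group] += 1
            if predicted_match:
                false_matches[group] += 1
    return {group: false_matches[group] / trials
            for group, trials in impostor_trials.items()}

# Hypothetical test outcomes
results = [
    ("group_a", False, False), ("group_a", False, False),
    ("group_a", False, False), ("group_a", False, True),
    ("group_b", False, True), ("group_b", False, True),
    ("group_b", False, False), ("group_b", False, False),
    ("group_a", True, True), ("group_b", True, True),   # genuine pairs, ignored
]
rates = false_match_rate_by_group(results)
```

In this made-up data the system confuses different people in group_b twice as often as in group_a, which is the pattern that leads to the wrong person being flagged.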

There have been several instances in which organizations or public bodies have been accused of unlawful surveillance of civilians with advanced facial recognition systems. The video data collected by continuously monitoring individuals can be used for several devious purposes. One of the biggest complaints about facial recognition systems is the generalized output they provide. For instance, if an individual is suspected of having committed a felony, their picture is taken and run against the pictures of several criminals to check whether they have a criminal record. However, because the data is pooled, the facial recognition database keeps the individual's picture alongside those of seasoned felons. So, firstly, the individual's privacy is invaded despite their relative innocence. Secondly, the person may be seen in a bad light despite being, by all accounts, innocent.

As we can see, the main issues and errors related to facial recognition technology stem from a lack of technological maturity, a lack of diversity in datasets, and inefficient handling of the system by organizations. In my opinion, AI has almost unlimited scope for application to real-world requirements. The risks around facial recognition typically arise when the technology keeps working in one fixed way even though real-world requirements differ.

It can be expected that, with further technological advancements in the future, the problems related to the technology will be ironed out. The problems related to bias in AI's algorithms will eventually be eliminated. However, for the technology to work perfectly without any ethical breaches, organizations will have to maintain a strict level of governance over such systems. With a greater degree of governance, the facial recognition system's errors can be resolved in the future. As a result, improvements in the research, development, and design of such systems must be carried out to achieve positive solutions.

Naveen is the Founder and CEO of Allerin, a software solutions provider that delivers innovative and agile solutions to automate, inspire and impress. He is a seasoned professional with more than 20 years of experience, including extensive experience in customizing open source products for cost optimization of large-scale IT deployments. He is currently working on Internet of Things solutions with Big Data Analytics. Naveen completed his programming qualifications in various Indian institutes.
