The idea of a super-intelligent machine taking control of humans sounds like the far-fetched plot of a science-fiction novel. But experts predict that super-intelligent artificial intelligence (AI) will play a key role in future societies, and some are worried that it could be humanity’s greatest mistake.

Artificial intelligence is developing at a very fast pace. Even so, today's AI has not exceeded expectations: it is considered relatively ‘weak’ and has not lived up to the hype. Indeed, current machines are only able to perform ‘narrow’ tasks, such as facial recognition or internet searches. However, the next generation of machines, super-intelligent AI, is expected to be far more powerful than the human brain. Although super AI is not threatening humanity yet, many of us will be affected by its actions and decisions.

‘Strong’ Artificial Intelligence?

The main aim of research and development in AI, however, is the creation of what is known as Strong AI. This type of AI would not be limited to certain jobs, and would outperform humans in practically every task it can be applied to. A survey of experts in the AI field suggests that there is a 50% chance of strong AI being created by 2050, and a 90% chance by 2075. It is this form of AI that would be the basis of super-intelligence in the future.

The potentially more apocalyptic implications of such intelligence are most prominently described by Nick Bostrom, a Swedish philosopher based at the University of Oxford. Bostrom hypothesises a Darwinian scenario: he believes that if humans create a machine superior to us and allow it to learn through the internet, there is no reason why it would not act to secure its dominance over the human race, changing the landscape of the biological world. Bostrom uses the example of humans and gorillas to demonstrate the one-sided nature of the relationship, and how an inferior intelligence always depends on a superior one for survival.

In addition to the warnings of Elon Musk and a number of renowned scientists, the Future of Life Institute has set out the two main ways in which super-intelligence could be dangerous for humanity. The first is if it is programmed to ‘do something devastating’, as an autonomous weapon would be, in which case we should expect mass casualties. The institute goes on to describe the catastrophic implications of an AI arms race, including how weapons would be designed to be extremely hard to turn off so that enemies could not thwart them, meaning that the situation could escalate out of human control.

The other dangerous situation is if AI is programmed to perform something beneficial, but develops a destructive method of carrying it out. This could happen whenever the AI machine’s objectives are not perfectly aligned with those of humans. For example, if a car were programmed to drive across a city to a certain location without being given perfect knowledge of traffic systems, it would cause utter havoc. In scenarios involving automated weapons, this could have even more severe consequences.

An Existential Threat?

Clearly, super-intelligence, if not implemented safely, could have some rather disastrous repercussions for humanity. But is it an existential threat? Many people aren’t convinced.

One main argument is simply that humans aren’t foolish enough to build a machine more intelligent than us without having sufficient safeguards in place, particularly if the AI had even a tiny chance of destroying humanity. In line with this argument is the view that if someone were able to create a potentially deadly AI that was out of human control, then humans could simply create another AI to destroy the first ‘evil’ one. Essentially, the situation would not be as instantly apocalyptic as some may believe.

Furthermore, some people challenge Bostrom's belief that super-intelligent AI would naturally seek to dominate humans as the superior species. They argue that intelligence does not correlate with a desire for power, and that the will to dominate others is a trait found in a select few humans that has no reason to be shared by AI.

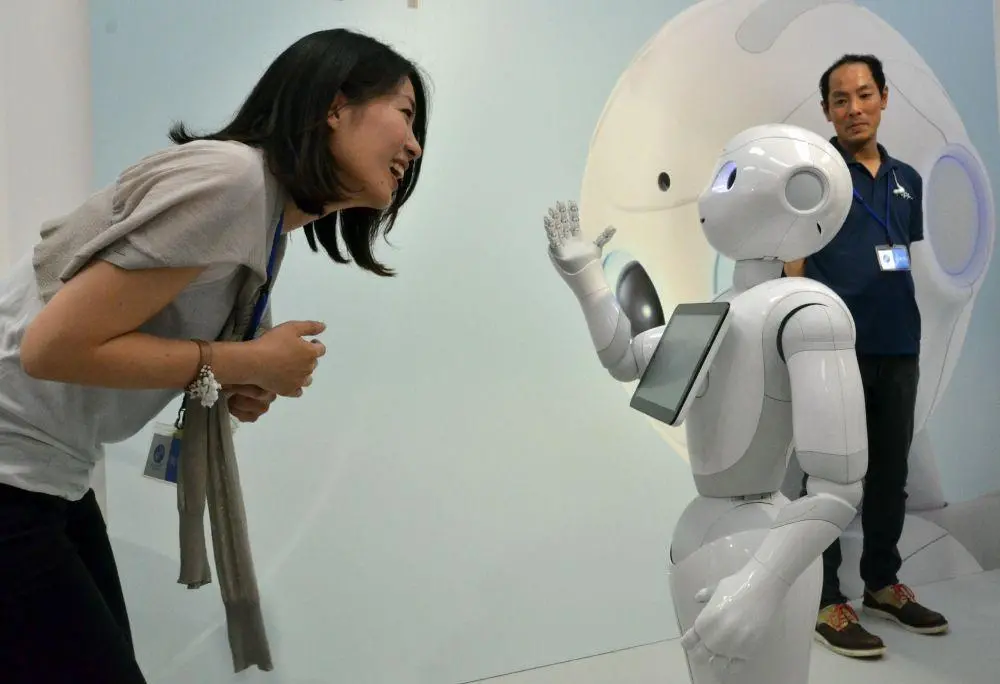

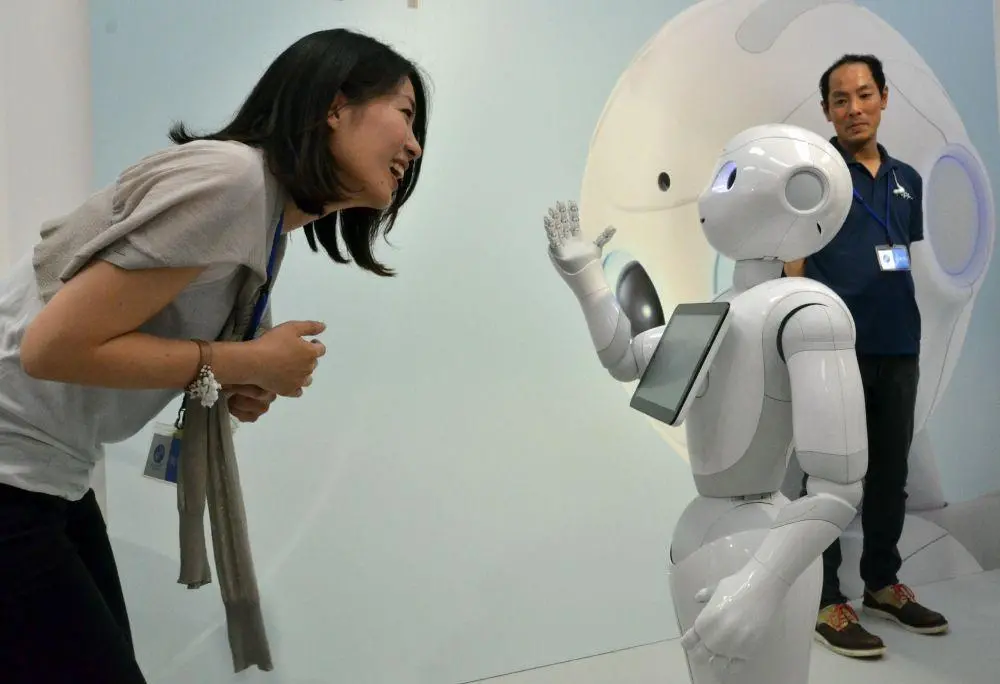

There is also a third position worth mentioning, which takes a negative view of the development of AI in general. Japan is already using robots to take care of elderly people, and some believe that this is dehumanising. Will human interaction be replaced by interaction with technology? AI is also being used in industry, replacing human workers, and a similar question is being raised: will machines take our jobs? AI is still raising a lot of eyebrows.

Conclusion

To conclude, the threat that super-intelligence poses to humanity is in many ways a matter of perspective and interpretation – but it’s certainly not an issue to be taken lightly. To quote the great Stephen Hawking:

The rise of powerful AI will be either the best, or the worst thing, ever to happen to humanity.
