By 2025, artificial intelligence (AI) will significantly improve our daily lives by handling some of today's complex tasks with great efficiency.

The leading AI researcher, Geoff Hinton, stated that it is very hard to predict what advances AI will bring beyond five years, noting that exponential progress makes the uncertainty too great.

This article will therefore consider both the opportunities and the challenges that we will face along the way across different sectors of the economy. It is not intended to be exhaustive.

Artificial Intelligence

AI is the field concerned with developing computing systems capable of performing tasks that humans are very good at, for example recognising objects, recognising and making sense of speech, and making decisions in a constrained environment. Some of the classical approaches to AI include (non-exhaustive list) search algorithms such as Breadth-First Search, Depth-First Search, Iterative Deepening Search and the A* algorithm, and the field of logic, including Predicate Calculus and Propositional Calculus. Local search approaches were also developed, for example Simulated Annealing, Hill Climbing (see also Greedy), Beam Search and Genetic Algorithms (see below).
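As a minimal sketch of the first of these classical techniques, Breadth-First Search can be written in a few lines of Python. The toy graph `TOY_GRAPH` and the function name `breadth_first_search` are illustrative assumptions and are not taken from any particular library.

```python
from collections import deque


def breadth_first_search(graph, start, goal):
    """Return a path with the fewest edges from start to goal, or None."""
    frontier = deque([start])      # nodes waiting to be expanded, oldest first
    parents = {start: None}        # remembers how each node was first reached
    while frontier:
        node = frontier.popleft()
        if node == goal:
            # Walk back through the parents to rebuild the path.
            path = []
            while node is not None:
                path.append(node)
                node = parents[node]
            return path[::-1]
        for neighbour in graph.get(node, []):
            if neighbour not in parents:
                parents[neighbour] = node
                frontier.append(neighbour)
    return None


# Hypothetical directed graph used only for illustration.
TOY_GRAPH = {
    "A": ["B", "C"],
    "B": ["D"],
    "C": ["D", "E"],
    "D": ["F"],
    "E": ["F"],
    "F": [],
}

print(breadth_first_search(TOY_GRAPH, "A", "F"))  # ['A', 'B', 'D', 'F']
```

Because the frontier is a first-in, first-out queue, nodes are expanded in order of their distance from the start, which is why the first path found uses the fewest edges.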

Machine Learning

Machine Learning is defined as the field of AI that applies statistical methods to enable computer systems to learn from data towards an end goal. The term was introduced by Arthur Samuel in 1959. A non-exhaustive list of techniques includes Linear Regression, Logistic Regression, K-Means, k-Nearest Neighbour (kNN), Naive Bayes, Support Vector Machine (SVM), Decision Trees, Random Forests, XGBoost, Light Gradient Boosting Machine (LightGBM) and CatBoost.
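To make "learning from data" concrete, the following is a minimal sketch of one of the listed techniques, k-Nearest Neighbour, using only the Python standard library. The data points, labels and the function name `knn_predict` are hypothetical and chosen purely for illustration; production work would normally rely on an established library.

```python
import math
from collections import Counter


def knn_predict(train_points, train_labels, query, k=3):
    """Classify query by majority vote among its k nearest training points."""
    # Pair every training point's Euclidean distance to the query with its label.
    distances = sorted(
        (math.dist(point, query), label)
        for point, label in zip(train_points, train_labels)
    )
    nearest_labels = [label for _, label in distances[:k]]
    return Counter(nearest_labels).most_common(1)[0][0]


# Hypothetical two-dimensional training data forming two clusters.
POINTS = [(1.0, 1.0), (1.2, 0.8), (0.9, 1.1), (5.0, 5.0), (5.2, 4.9), (4.8, 5.1)]
LABELS = ["small", "small", "small", "large", "large", "large"]

print(knn_predict(POINTS, LABELS, (1.1, 1.0)))  # small
print(knn_predict(POINTS, LABELS, (5.1, 5.0)))  # large
```

There is no explicit training step here: the "model" is simply the stored data, and the prediction is driven entirely by which labelled examples lie closest to the query.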

Deep Learning

Deep Learning refers to the field of Neural Networks with several hidden layers. Such a neural network is often referred to as a deep neural network. Neural Networks are biologically inspired networks that extract abstract features from the data in a hierarchical fashion. Key techniques that will play roles in the next decade include Generative Adversarial Networks (GANs), Recurrent Neural Networks (RNNs, used for time series and NLP, although see Transformers for NLP) including Long Short-Term Memory networks (LSTMs), Transformers with Self-Attention (NLP and possibly time series) and possibly Capsule Networks (an area of ongoing research). Deep Reinforcement Learning will be considered in greater detail in a future part of this series.
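The notion of "several hidden layers" can be sketched as a forward pass through a tiny network in plain Python. The weights in `LAYERS` are hand-picked assumptions rather than learned values, chosen so that the arithmetic is easy to follow: the two hidden layers together compute the absolute difference of the two inputs, and the output layer rescales it.

```python
def relu(values):
    """Rectified linear unit applied element by element."""
    return [max(0.0, v) for v in values]


def dense(inputs, weights, biases):
    """Fully connected layer: one row of weights per output unit."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]


def forward(inputs, layers):
    """Pass inputs through every layer, applying ReLU to hidden layers only."""
    activations = inputs
    for index, (weights, biases) in enumerate(layers):
        activations = dense(activations, weights, biases)
        if index < len(layers) - 1:
            activations = relu(activations)
    return activations


# Hypothetical hand-picked weights (not trained).
LAYERS = [
    ([[1.0, -1.0], [-1.0, 1.0]], [0.0, 0.0]),  # hidden layer 1: x1 - x2 and x2 - x1
    ([[1.0, 1.0]], [0.0]),                      # hidden layer 2: sums to |x1 - x2|
    ([[2.0]], [1.0]),                           # output layer: 2 * |x1 - x2| + 1
]

print(forward([3.0, 1.0], LAYERS))  # [5.0]
print(forward([1.0, 4.0], LAYERS))  # [7.0]
```

In a real deep network the weights are learned from data rather than chosen by hand, but the layer-by-layer structure, in which each layer builds on the features produced by the previous one, is the same.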

The field of Evolutionary Genetic Algorithms and Neuroevolution will also be considered in more detail in a future part of this series. The role of Federated Learning and Differential Privacy will also be considered in a future article.

For the purpose of this article I will consider AI to cover Machine Learning and Deep Learning.

Narrow AI: the field of AI where the machine is designed to perform a single task and the machine gets very good at performing that particular task. However, once the machine is trained, it does not generalise to unseen domains. This is the form of AI that we have today, for example Google Translate.

Artificial General Intelligence (AGI): a form of AI that can accomplish any intellectual task that a human being can do. It is often described as more conscious, making decisions in a way similar to humans. AGI remains an aspiration at this moment in time, with various forecasts for its arrival. It may arrive within the next 20 or so years, but it faces challenges relating to hardware, the energy consumption required by today’s powerful machines, and the need to solve catastrophic forgetting (catastrophic memory loss), which affects even the most advanced Deep Learning algorithms of today.

Super Intelligence: a form of intelligence that exceeds the performance of humans in all domains (as defined by Nick Bostrom). This refers to aspects like general wisdom, problem solving and creativity.

For more details on the types of AI and Machine Learning see the article in KDnuggets "An Introduction to AI".

McKinsey produced a detailed and helpful publication entitled "Notes from the AI frontier: Applications and value of Deep Learning" observing that "We collated and analyzed more than 400 use cases across 19 industries and nine business functions. They provided insight into the areas within specific sectors where Deep Neural Networks can potentially create the most value, the incremental lift that these neural networks can generate compared with traditional analytics (Exhibit 2), and the voracious data requirements—in terms of volume, variety, and velocity—that must be met for this potential to be realised." McKinsey also made clear that their library of use cases, while extensive, was not exhaustive, and may result in an overstatement or understatement of the potential for particular sectors with McKinsey continuing to refine and add to it.

Whilst the study from McKinsey provides a comprehensive and helpful overview, I believe that the impact from Deep Learning will be greater than McKinsey forecast. Techniques such as Convolutional Neural Networks (CNNs) will have a major impact in sectors such as Healthcare with medical imaging, Insurance with automation, Retail with visual search and checkout-free stores such as Amazon Go, and Banking with KYC for identity verification, to give just a few examples. Furthermore, some of the techniques for successfully training Deep Neural Networks with smaller data sets are anticipated to have made it into production over the course of the next decade, in turn allowing Deep Learning to scale further across the economy. A short recap of some of these novel techniques is provided below.

I believe that over the period 2019 to 2029 it is worth revisiting the comment by Andrew Ng who stated:

"We need a Goldilocks Rule for AI:"

"Too optimistic: Deep learning gives us a clear path to AGI!"

"Too pessimistic: DL has limitations, thus here's the AI winter!"

"Just right: DL can’t do everything, but will improve countless lives & create massive economic growth."

Jason Brownlee, in "Deep Learning & Artificial Neural Networks", referenced the work by Andrew Ng and stated that "as we construct larger neural networks and train them with more and more data, their performance continues to increase. This is generally different to other machine learning techniques that reach a plateau in performance."

Source for image above: Andrew Ng

As noted, a great deal of research is underway to allow Deep Learning to also successfully train and scale with smaller data sets.

An earlier article, "Smarter AI & Deep Learning", considered the potential to simplify and improve the training of Deep Neural Networks. It considered the work of Jonathan Frankle and Michael Carbin of MIT CSAIL, who published "The Lottery Ticket Hypothesis: Finding Sparse, Trainable Neural Networks", with an insightful summary provided by Adam Conner-Simons in "Smarter training of neural networks".

The article noted that the MIT CSAIL project showed that neural nets contain "subnetworks" 10x smaller that can learn just as well, and often faster.

These days, nearly all AI-based products in our lives rely on “Deep Neural Networks” that automatically learn to process labeled data.

"For most organizations and individuals, though, Deep Learning is tough to break into. To learn well, neural networks normally have to be quite large and need massive datasets. This training process usually requires multiple days of training and expensive graphics processing units (GPUs) - and sometimes even custom-designed hardware."

But what if they don’t actually have to be all that big after all?

In a new paper, researchers from MIT’s Computer Science and Artificial Intelligence Lab (CSAIL) have shown that neural networks contain subnetworks that are up to 10 times smaller, yet capable of being trained to make equally accurate predictions - and sometimes can learn to do so even faster than the originals.
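The idea of a smaller subnetwork can be illustrated with a deliberately simplified sketch. It assumes one-shot magnitude pruning on a flat list of hypothetical weights: the largest-magnitude trained weights are kept, and the surviving connections are then reset to their initial values. The published work trains and prunes real networks, which this toy example does not attempt; the function names and all numbers are illustrative assumptions.

```python
def magnitude_mask(trained_weights, keep_fraction):
    """Return a 0/1 mask that keeps the largest-magnitude trained weights."""
    keep_count = max(1, round(len(trained_weights) * keep_fraction))
    ranked = sorted(
        range(len(trained_weights)),
        key=lambda i: abs(trained_weights[i]),
        reverse=True,
    )
    kept = set(ranked[:keep_count])
    return [1 if i in kept else 0 for i in range(len(trained_weights))]


def reset_to_initial(initial_weights, mask):
    """Keep the initial value where the mask is 1 and zero out the rest."""
    return [w if keep else 0.0 for w, keep in zip(initial_weights, mask)]


# Hypothetical weights before and after training (illustrative only).
INITIAL = [0.1, -0.2, 0.3, -0.4, 0.5, -0.6, 0.7, -0.8, 0.9, -1.0]
TRAINED = [0.9, -0.05, 0.02, -1.3, 0.4, 0.01, -0.7, 0.03, 0.2, -0.1]

mask = magnitude_mask(TRAINED, keep_fraction=0.3)
subnetwork = reset_to_initial(INITIAL, mask)

print(mask)        # [1, 0, 0, 1, 0, 0, 1, 0, 0, 0]
print(subnetwork)  # [0.1, 0.0, 0.0, -0.4, 0.0, 0.0, 0.7, 0.0, 0.0, 0.0]
```

The sparse `subnetwork` is what would then be retrained; the claim reported above is that such a subnetwork can reach accuracy comparable to the full network.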

An article in MIT Technology Review by Will Knight reported that "Two rival AI approaches combine to let machines learn about the world like a child". The article related to a paper entitled "The Neuro-Symbolic Concept Learner: Interpreting Scenes, Words, and Sentences from Natural Supervision", a joint paper between MIT CSAIL, MIT Brain and Cognitive Sciences, the MIT-IBM Watson AI Lab and Google DeepMind.

Will Knight in the Technology Review observed that:

"More practically, it could also unlock new applications of AI because the new technology requires far less training data. Robot systems, for example, could finally learn on the fly, rather than spend significant time training for each unique environment they’re in."

“This is really exciting because it’s going to get us past this dependency on huge amounts of labeled data,” says David Cox, the scientist who leads the MIT-IBM Watson AI lab.

It may be that Capsule Networks will also have emerged into production.

Furthermore, this will be a period in which Deep Reinforcement Learning will have a major impact in areas such as robotics and other autonomous systems. For example, Seth Adler authored "A Quick Guide to Reinforcement Learning" and provided an example of the impact in manufacturing, where at the Japanese manufacturer Fanuc "a robot uses Deep Reinforcement Learning to pick a device from one box and putting it in a container. Whether it succeeds or fails, it memorises the object and gains knowledge and train’s itself to do this job with great speed and precision." Such techniques will become common across manufacturing in the next decade, and both GANs and Deep Reinforcement Learning will be more frequently applied across the transportation (autonomous cars) and pharmaceutical (drug discovery) sectors.

Data Science and Machine Learning functions will report directly to the CEO

I attended a talk by Quantum Black (@quantumblack), a McKinsey company, during CogX in London. It was pointed out that the role of the head of Machine Learning / Data Science in companies was evolving beyond statistics and coding to one in which the head of Data Science is responsible for making business-related judgements, and that over the course of the 2020s the AI and Data Science functions would come under the direct ownership of the CEO of the organisation.

A KPMG report forecast that business spending on Intelligent Augmentation covering AI and robotic process automation (RPA) technologies would increase from $12.4bn in 2018 to $232bn in 2025.
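To put that forecast in perspective, the implied compound annual growth rate can be worked out from the two figures quoted. The sketch below assumes seven compounding years between 2018 and 2025 and uses a hypothetical helper named `compound_annual_growth_rate`; the growth rate itself is derived here and is not a figure stated in the report.

```python
def compound_annual_growth_rate(start_value, end_value, years):
    """Constant yearly growth rate that turns start_value into end_value."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted above: $12.4bn in 2018 rising to $232bn in 2025.
rate = compound_annual_growth_rate(12.4, 232.0, years=2025 - 2018)
print(f"Implied growth of roughly {rate:.0%} per year")
```

Under these assumptions the forecast corresponds to spending growing by roughly half each year over the period.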

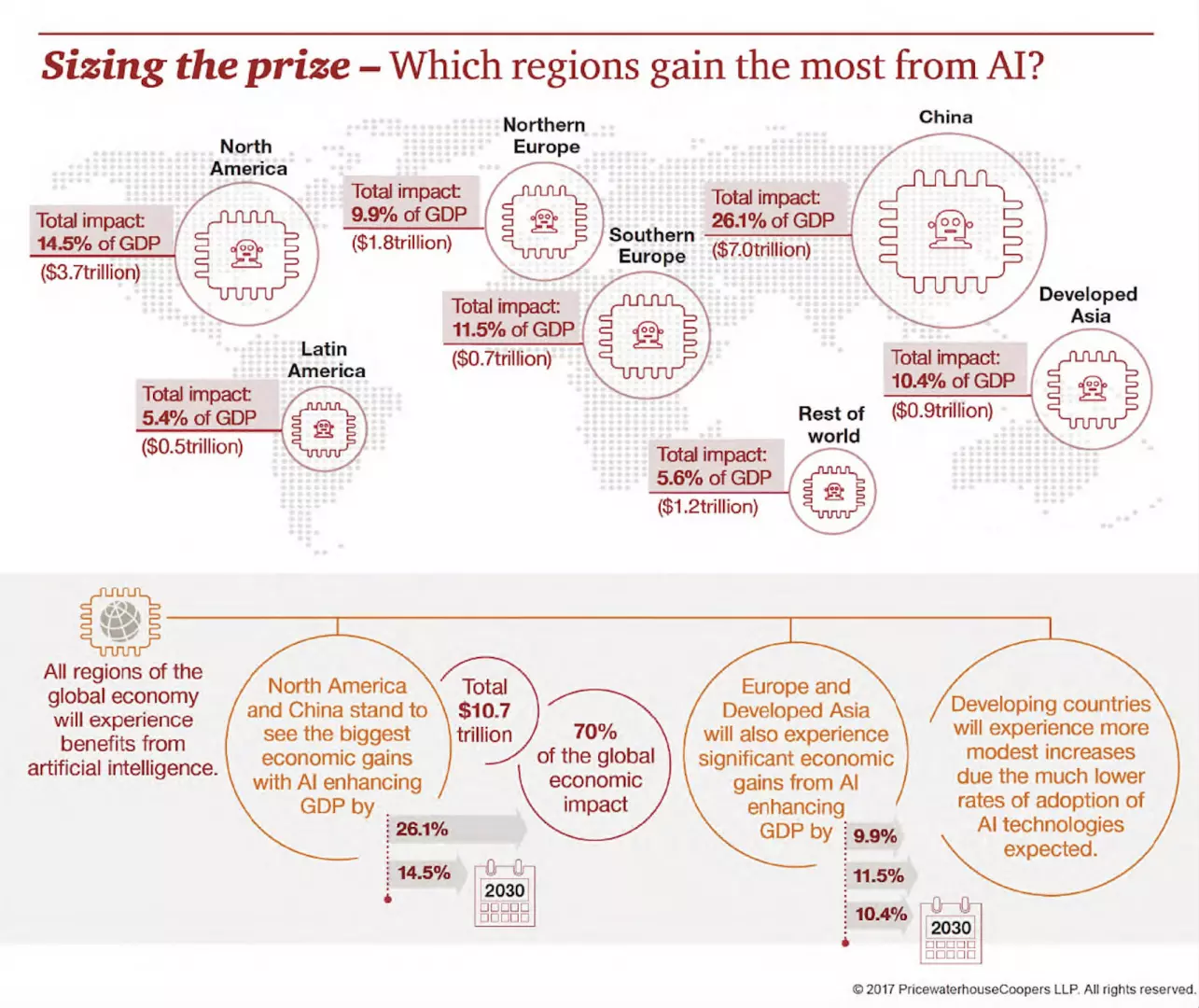

AI will drive economic growth across the global economy in the period to 2030.

PWC forecast that the potential contribution to the global economy by 2030 from AI will amount to $15.7tr, with up to 26% boost in GDP for local economies from AI by 2030.

A major advantage of processing AI workloads at the edge is that the latency is substantially reduced relative to waiting for a response to a query from a remote cloud based server. As a result the cameras, robots and computers of the future will be able to make improved and better informed judgements rather than constantly querying a remote cloud server and waiting before making a decision. For example an autonomous car will need to make real-time decisions on whether to turn left or right rather than wait for a server to respond with the decision. Moreover, drones using computer vision will have enhanced reliability using AI on the device to adjust their own flight paths.
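The practical effect of latency can be illustrated with simple arithmetic: how far does a vehicle travel while it waits for a decision? The speed and the two latency values below are illustrative assumptions rather than measurements, and `distance_travelled_m` is a hypothetical helper.

```python
def distance_travelled_m(speed_kmh, latency_ms):
    """Metres covered at a constant speed while waiting latency_ms milliseconds."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s * latency_ms / 1000


SPEED_KMH = 108          # assumed motorway speed, equal to 30 m/s
CLOUD_LATENCY_MS = 100   # assumed round trip to a remote cloud server
EDGE_LATENCY_MS = 10     # assumed on-device inference time

print(distance_travelled_m(SPEED_KMH, CLOUD_LATENCY_MS))  # about 3 metres
print(distance_travelled_m(SPEED_KMH, EDGE_LATENCY_MS))   # about 0.3 metres
```

With these assumed values the car covers about ten times more road before it can react when it depends on the remote server, which is the intuition behind processing safety-critical decisions on the device.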

The growth in edge computing as sensors spread across smart cities was noted by Jason Compton in an article entitled "Edge AI And Its Paradigm-Changing Effects" where he observed that "on-device AI can improve first-responder notification time by using sensors embedded in city infrastructure, such as street lights, to assess background noise and determine if an emergency situation exists. AI can also enable traffic cameras to identify vehicles instantly through optical recognition of license plates as well as through pattern and colour matching."

This will save valuable time for first responders in understanding the situation before they arrive at the scene. Furthermore, adoption of AI on the edge will enable immediate identification of interruptions to the business process in a manufacturing facility, in turn allowing recommendations to be made to those in the factory about what caused the issue (for example failure of a component) and how best to respond to the incident in order to minimise the damage and restore normal operations as quickly as possible.

In this period Deep Reinforcement Learning will be frequently deployed into everyday activities around us. For example, Zhu et al., in "Deep Reinforcement Learning for Unmanned Aerial Vehicle-Assisted Vehicular Networks", proposed that unmanned aerial vehicles (UAVs) be deployed to complement the 5G communication infrastructure in future smart cities. Hot spots easily appear at road intersections, where effective communication among vehicles is challenging. UAVs may serve as relays with the advantages of low price, easy deployment, line-of-sight links, and flexible mobility.

Source for Figure above: Zhu et al. "Deep Reinforcement Learning for Unmanned Aerial Vehicle-Assisted Vehicular Networks"

For those seeking a recap on what 5G is and how it will change the world it is recommended to view the video below entitled "What is 5G? & How 5G Will Change the World!".

Sarah Wray observed in an article entitled "5G could drive trillions in media and entertainment by 2028" that 5G will continue to roll out and exert a greater influence over this period. It is forecast that media and entertainment ‘experiences’ enabled by 5G will generate up to $1.3 trillion in revenue by 2028, according to a new report commissioned by Intel and carried out by Ovum.

The report suggests that 2025 will represent a ‘tipping point’ for 5G in entertainment and media. By that time, the report forecasts that around 57 per cent of wireless revenue globally will be driven by the capabilities of 5G networks and devices. By 2028, Intel and Ovum expect that number to rise to 80 per cent.

It is anticipated that there will be widespread adoption of holographic technology for business, entertainment and personal communications with friends and family. Perhaps business meetings will be conducted via holographic calls thereby reducing the need for business travel in the future.

Ben Yu observed in an article entitled "The convergence of 5G and AI: A venture capitalist’s view" that 5G is not just “4G but better.” It taps new spectrum that will drive innovative business opportunities and use cases. For example, in the 28 GHz and 39 GHz bands, a.k.a. the “millimeter wave band,” reams of new bandwidth could transform the communications carrier landscape as we know it while further improving the end-user experience of mobility.

"If AI and 5G had a baby, its name would be AV. Autonomous vehicles are essentially data centers on wheels. If you look at them closely, you’ll notice they are loaded with multiple 4G LTE modems, because, with brains in the device, they require intelligence at the edge. That requires the rich and rapid movement of data that 5G is uniquely positioned to offer."

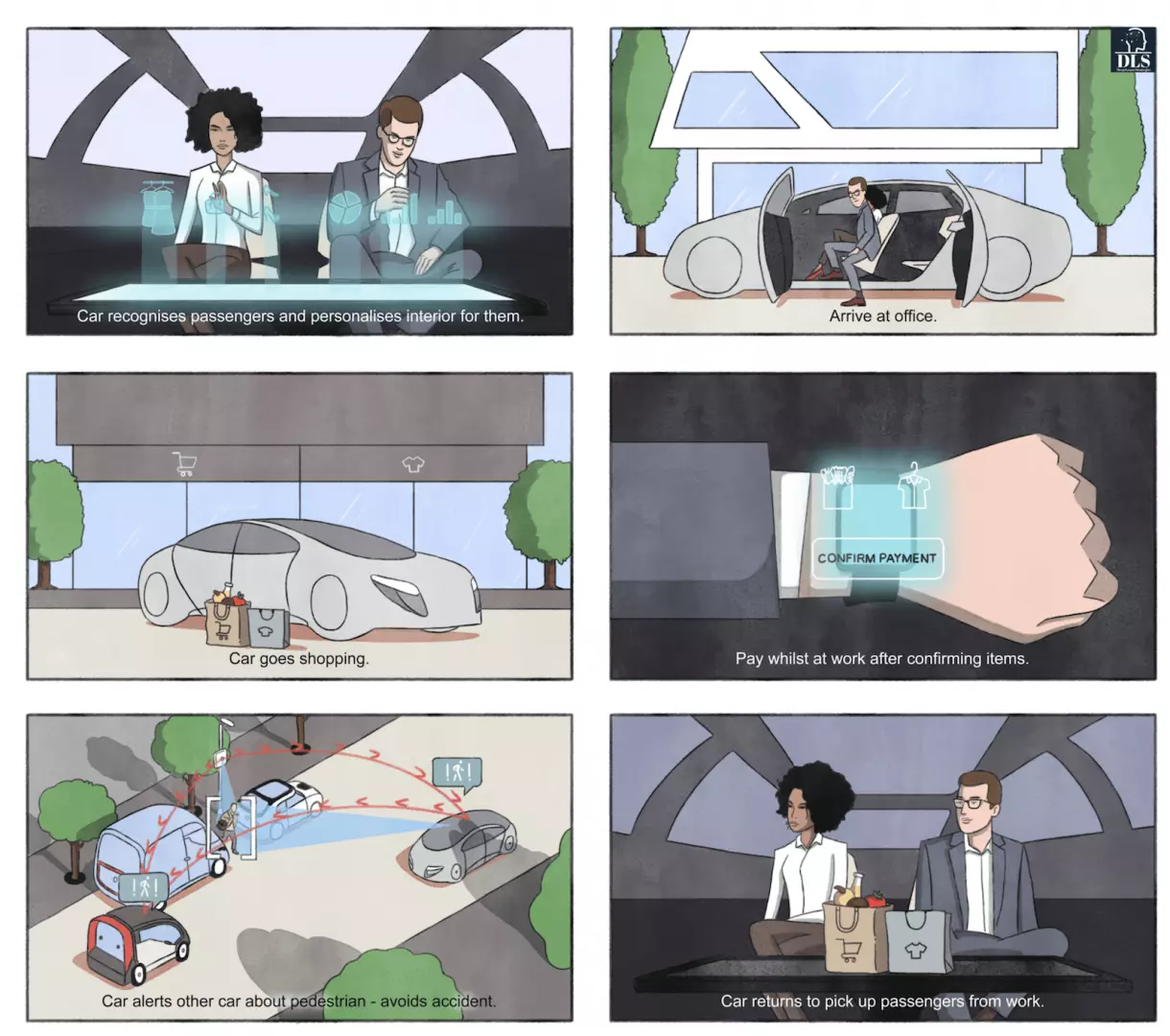

DLS view of Autonomous Journey with 5G linking Retail & Fintech (payments)

Autonomous driving and ride-sharing will combine to fundamentally change the dynamic of private-car ownership, according to Credit Suisse. Furthermore, Credit Suisse forecast the global car-sharing and ride-sharing market to expand from $17bn in 2015 to $81bn in 2030.

The car manufacturing firms that succeed in this environment will be those that have adjusted towards ride sharing fleets for commuters as the younger generation move away from car ownership and more towards pooling and sharing. In addition there will be a growing demand for autonomous vehicles that can be shared by the increasingly ageing population (thereby giving them greater mobility) and autonomous delivery vehicles (for example imagine a clothes showroom on wheels and food delivery).

Image above: Autonomous Vehicle Sales to Surpass 33 Million Annually in 2040, Enabling New Autonomous Mobility in More Than 26 Percent of New Car Sales, IHS Markit.

Fintech influencer Jim Marous (@JimMarous) authored an article in the Financial Brand entitled "The Invisible Bank of the Future". Fintech influencer Spiros Margaris (@SpirosMargaris) has explained that it is the banks that work out how to successfully apply AI to customer solutions that will thrive in the future.

The video below provides an example of what the future of banking with AI in the 2020s may look like with a frictionless and interactive experience for the customer.

6G

6G is forecast by some analysts to arrive in the 2030s. It will offer even faster speeds, even greater capacity and even lower latency.

Steve McCaskill in "Get ready for 6G mobile networks: 1Tbps speeds, microsecond latency and AI optimisation" observed that "Early 6G networks will be largely based on 5G infrastructure, an acknowledgement that each generation ‘borrows’ elements from the previous one, and so will benefit from the increased number of radios and de-centralised network architecture that will take place with 5G."

"In terms of speed, 6G networks will allow for 1Tbps by making use of sub-1THZ spectrum and will focus on connecting the trillions of objects, rather than the billions of mobile devices. Latency will be improved through the use of AI to determine the best way to transmit data from the device to the base station and through the network. It is also predicted that organisations outside the mobile industry will play a much greater role in standardisation, meaning it can be tailored to their needs."

A number of market analysts predict that in the 2030s we will move towards the autonomous flying car.

Morgan Stanley predicts flying cars will be a $1.5 to $3 trillion business in 20 years, meaning the race is on to develop a fleet of ridesharing autonomous air taxis. Boeing's prototype took its first flight earlier this year.

One example is Next Future Transportation who claim to have developed artificial intelligence for collision avoidance in the air and plan to sell their ASKA hybrid vehicle in 2025.

Whether one refers to them as cars or drones, it is submitted that AI combined with edge computing will result in an ongoing revolution in transportation over the next two decades.

Source for image above: TransportUp.com, NASA holds Urban Air Mobility Industry Day in Seattle

The arrival and scaling of autonomous vehicles and 5G technology in general with an intelligent IoT will result in enormous changes across society and the manner in which we live our daily lives. The scale of change is too vast to deal with in any one article and hence there will be a series of articles considering areas such as Healthcare, smart cities, financial services, climate change, ethics and retail.

Furthermore, we will also make significant advancements with our exploration of space in particular our immediate solar system with AI playing a key role.

Yan Fisher authored an article entitled "How open source and AI can take us to the Moon, Mars, and beyond" observing how well the Spaceborne Computer performed. Furthermore, Oliver Peckham in "The Spaceborne Computer Returns to Earth, and HPE Eyes an AI-Protected Spaceborne 2" observed that "Spaceborne 2 will also seek to make the software hardening abilities of the supercomputer more intelligent using machine learning and AI".

We will increasingly use AI to gain a greater understanding of our solar system. For example, DisruptiveAsia quoted Carl Marchetto, vice president of New Ventures at Lockheed Martin Space, as stating that “AI can revolutionize how we use information from space, both in orbit and on deep space missions, including crewed missions to Mars and beyond.” The importance of AI on the edge for space exploration was made clear by @joe_landon, Vice President, Advanced Programs Development at Lockheed Martin, during a panel on AI at the WEF in Davos where we both spoke. It was clear that AI on the edge is an essential component for future space missions and for using robotic equipment to obtain a greater understanding of the planets around us.

Furthermore, in "AI Will Prepare Robots for the Unknown", NASA quotes Chien, a senior research scientist on autonomous space systems, with the following observation about humans working alongside AI: "The goal is for AI to be more like a smart assistant collaborating with the scientist and less like programming assembly code...It allows scientists to focus on the 'thinking' things -- analysing and interpreting data -- while robotic explorers search out features of interest."

AI will continue to enable the likes of NASA to make discoveries in deep space, such as exoplanets around other stars.

Marc Prosser and Jovan David Rebolledo in "AI Is Kicking Space Exploration Into Hyperdrive—Here’s How" suggest that AI will play a key role in the future by Terraforming Our Future Home with the observation that "Further into the future, moonshots like terraforming Mars await. Without AI, these kinds of projects to adapt other planets to Earth-like conditions would be impossible."

A study from Oxford and Yale University researchers forecast the years it will take for AI to take over particular tasks. A story in BusinessInsider covered the paper and the DLS team took inspiration from this to create our own version shown above.

"Lead investigator Katja Grace and her colleagues found the tasks most likely to get automated within the next 10 years were routine, mechanical tasks. Language translation could outpace human performance by 2024, responses indicated, and robots may be able to write better high-school-level essays than humans in 2026."

"More complex and creative tasks, like writing books and performing high-level math, will take longer. Ultimately, the researchers found AI could automate all human tasks by the year 2051 and all human jobs by 2136. "

Such projections cause anxiety about the future of humanity and what work we will do once AI becomes stronger. I believe that, rather than obsessing about AGI at this moment in time, we should focus on the greater and very real threats that we have to face today and within our lifetimes.

A question some ask is why we continue with AI rather than simply banning it. This is tantamount to trying to stop the tide and, as King Canute demonstrated, stopping the tide cannot be done even at the command of a King at a time when Kings were supposed to be all-powerful. Today we live in a digital era with an ever-growing deluge of big data. People want their mobile phones and social media, and hence Machine Learning technology is needed to make sense of the data.

Dean Takahashi in "Newzoo: Smartphone users will top 3 billion in 2018, hit 3.8 billion by 2021" observed that 39 percent of the global population were using a smartphone at the end of 2018 and that 48 percent would use one by 2021.

Source for image above: Newzoo

The amount of big data being generated in an Internet Minute

Source for the image above: VisualCapitalist What Happens in an Internet Minute in 2019?

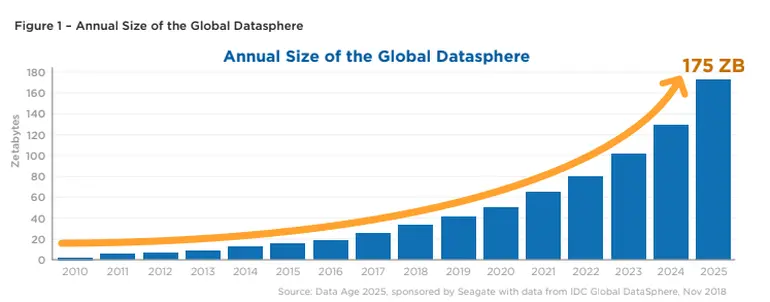

Stephanie Condon in an article entitled "By 2025, nearly 30 percent of data generated will be real-time, IDC says" explains that this is a doubling from the 15 percent from 2017 and states that "All told, of the 150 billion devices that will be connected across the globe in 2025, most will be creating real-time data, IDC says. The global datasphere is expected to grow from 23 Zettabytes (ZB) in 2017 to 175 ZB by 2025. One zettabyte is equivalent to a trillion gigabytes."
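The scale of these figures is easier to grasp with a quick unit check. The sketch below assumes decimal (SI) units, in which a zettabyte is 10^21 bytes and a gigabyte is 10^9 bytes; the helper name `zettabytes_to_gigabytes` is hypothetical, and the growth multiple is derived from the two quoted figures rather than stated by IDC.

```python
BYTES_PER_GB = 10 ** 9    # decimal gigabyte (assumed SI definition)
BYTES_PER_ZB = 10 ** 21   # decimal zettabyte (assumed SI definition)


def zettabytes_to_gigabytes(zettabytes):
    """Convert a whole number of zettabytes to gigabytes."""
    return zettabytes * BYTES_PER_ZB // BYTES_PER_GB


# One zettabyte expressed in gigabytes: a trillion, matching the quote.
print(zettabytes_to_gigabytes(1))  # 1000000000000

# Growth multiple implied by 23 ZB in 2017 rising to 175 ZB in 2025.
growth_multiple = 175 / 23
print(round(growth_multiple, 1))  # 7.6
```

In other words, the quoted forecast implies that the global datasphere grows to between seven and eight times its 2017 size within eight years.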

Source for Images above: IDC

The volume and velocity of data are too great for humans to analyse. Machine Learning algorithms enable us to make use of this tidal wave of data and detect patterns that we would otherwise miss.

An increase in the generation of real-time data as IoT sensors spread all around us will further the need for both Machine Learning to make sense of the data and also for AI on the edge.

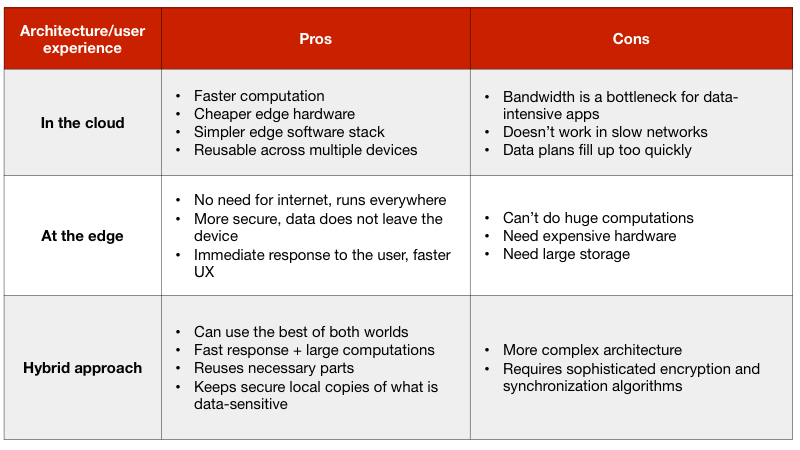

It is suggested that the hybrid cloud / edge model will continue to grow in popularity. The hybrid approach entails training in the cloud and inferencing on the edge. Claudio Camacho explains in "Machine learning means a hybrid future for computing and storage" that there are three different ways of building the architecture for consumer-grade AI: in the cloud, at the edge, or a hybrid approach. The table below summarises the pros and cons of these architectures.

Source for the image above: Claudio Camacho, "Machine learning means a hybrid future for computing and storage"

"With the advancements of current technology, the prevailing model will be one where cloud and edge will work more tightly together. This means that the cloud provides a baseline of computation and generalized models, whereas edge devices use local data sets and models to personalize and optimize results for a faster and greater user experience."

Claudio Camacho explained that the future will entail Machine Learning not only running on mobile phones but also across all IoT devices including connected cars, with AR and VR leveraging ongoing advancements in Machine Learning with the result that the new use cases will affect the "exponential need for local storage and computational speed."

Furthermore, I strongly believe that AI technology provides a means for us to make a fundamental shift to a cleaner and more sustainable basis for economic growth. Using AI to fight climate change will be dealt with in a future part of this series; however, it needs to be stressed that continuing with business as usual (BAU) will result in massive damage to our future.

Furthermore, continuing with BAU means producing vast amounts of plastics, with a forecast that by 2050 there will be more plastic in the ocean than fish.

Moreover, the World Health Organisation explains that the demographics in the world are changing:

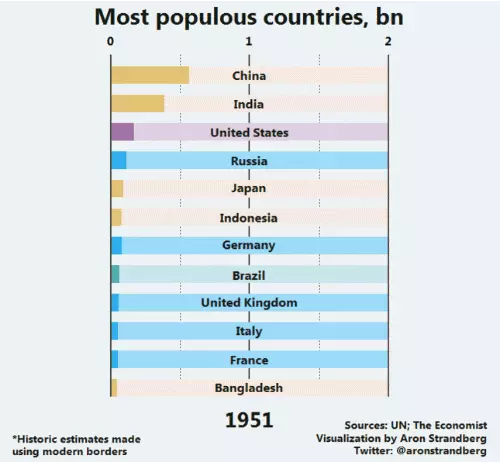

The World Economic Forum (WEF) explains data from the United Nations that sets out how the population across the world has increased from approximately 2.5 billion to around 7.5 billion today and is set to rise to around 9.7 billion by 2050. The WEF also explains how the populations across countries are set to change.

Sources for Images below: Aron Strandberg

At the same time, global Healthcare costs are increasing rapidly, as set out by Deloitte in the infographic below from the "2019 Global Health Care Outlook".

AI has the ability to help with the fight against climate change and the move towards cleaner manufacturing and fewer plastics in our oceans. AI will also be fundamental to reducing costs and improving patient outcomes in Healthcare.

As Forbes reports "The total public and private sector investment in Healthcare AI is stunning: All told, it is expected to reach $6.6 billion by 2021, according to some estimates. Even more staggering, Accenture predicts that the top AI applications may result in annual savings of $150 billion by 2026."

An example of how AI will be used to plan and project for the future in the fight against climate change was provided by Schmidt et al., who published "Visualizing the Consequences of Climate Change Using Cycle-Consistent Adversarial Networks". The project aims to generate personalised and accurate visualisations of the outcomes resulting from climate change using Cycle-Consistent Adversarial Networks (CycleGANs), allowing people to enhance their decision making with regard to the future of the climate whilst maintaining scientific credibility.

Source for image above: Schmidt et al., "Visualizing the Consequences of Climate Change Using Cycle-Consistent Adversarial Networks"

The growth in renewable energy such as wind farms has resulted in challenges in predicting the generation of power due to the uncertainty and variability in meteorological conditions. Zhang et al., in "Typical wind power scenario generation for multiple wind farms using conditional improved Wasserstein generative adversarial network", applied a conditional improved Wasserstein GAN to enhance the forecasting of power generation from wind power relative to current methods. This is another example of how AI will be used for positive use cases.

The pathway towards AGI will be complex and many barriers will have to be overcome, as noted by Yoshua Bengio. Other leaders in the field of AI research also believe that attaining AGI is complex. Kyle Wiggers, in "Geoffrey Hinton and Demis Hassabis: AGI is nowhere close to being a reality", quoted Demis Hassabis, founder of DeepMind, as stating at NeurIPS 2018 in relation to attaining AGI: "There’s still much further to go...Real-world 3D environments and the real world itself is much more tricky to figure out."

Furthermore, attaining AGI is unlikely to happen in isolation without our understanding more about the human brain. Dr Anna Becker, PhD in AI and CEO of Endotech.io, explained that "Obtaining a greater understanding of the human brain is important for us to develop stronger forms of AI". Building upon this theme, it is worth noting that Surya Ganguli authored an article entitled "The intertwined quest for understanding biological intelligence and creating Artificial Intelligence", observing that AI for neuroscience and neuroscience for AI amount to a virtuous scientific spiral and stating that "Recent exciting developments in the interaction between neuroscience and AI involve the development of Deep (including Recurrent) Neural Network models as models for different brain regions of animals performing tasks. This approach has achieved success for example in the ventral visual stream, auditory cortex, prefrontal cortex, motor cortex, and retina. In many of these cases, when a Deep or Recurrent Network is trained to solve a task, its internal representations look strikingly similar to the internal neural activity patterns measured in an animal trained to solve the same task."

Surya Ganguli also outlined the work by Stanford University and the human-centred AI initiative with the intention of "...generating new AI systems inspired by human intelligence."

It is highly probable that in the longer term we will be augmenting ourselves with AI. For example, Valeriani et al., in "Brain–Computer Interfaces for Human Augmentation", state that "In the future, it is very likely that many tasks will be performed by AI, but it is also extremely likely that in many other complex tasks there will be a tight integration between humans and AI devices. To achieve the latter, Marc Cavazza proposes to use a BCI to keep the human in the loop, using his/her brain signals to influence the internal heuristic searches performed by the AI devices: the main computations are still performed by AI, with the human, however, being able to supervise the task." This issue will be considered in part 3.

Emily Durham, in "Researchers Develop First Mind-controlled Robotic Arm Without Brain Implants", reported that "A team of researchers from Carnegie Mellon University, in collaboration with the University of Minnesota, has made a breakthrough that could benefit paralyzed patients and those with movement disorders."

"Using a noninvasive Brain-Computer Interface (BCI), scientists have developed the first successful mind-controlled robotic arm exhibiting the ability to continuously track and follow a computer cursor."

The video below provides a visual demonstration.

On 17 July 2019, with a great deal of media coverage, Elon Musk gave more details on his neural implant. Tanya Lewis noted in an article entitled "Elon Musk’s Secretive Brain Tech Company Debuts a Sophisticated Neural Implant" that "With typical panache, Musk talked about putting this technology into a human brain by as early as next year."

"The work is the product of Neuralink, a company Musk founded in 2016 to develop a high-bandwidth, implantable brain-computer interface (BCI). He says the initial goal is to enable people with quadriplegia to control a computer or smartphone using just their thoughts. But Musk’s vision is much more ambitious than that: he seeks to enable humans to “merge” with AI, giving people superhuman intelligence—an objective that is much more hype than an actual plan for new technology development."

Neuralink surgical robot. Credit: Neuralink

Perhaps of greater relevance to our immediate to medium term future in the 2020s is the need to ensure that people are prepared and ready to take advantage of the revolution that is on its way. The 2020s will witness every sector of the economy being disrupted, and we will find that many new jobs and even sectors will be created that do not exist today. We will need a revolution in the education system across all levels from the young to the older and more experienced workers to enable them to take advantage of the arrival of the new economy and rapid changes that are likely to arrive with areas such as AR, VR and the continued growth of Data Science.

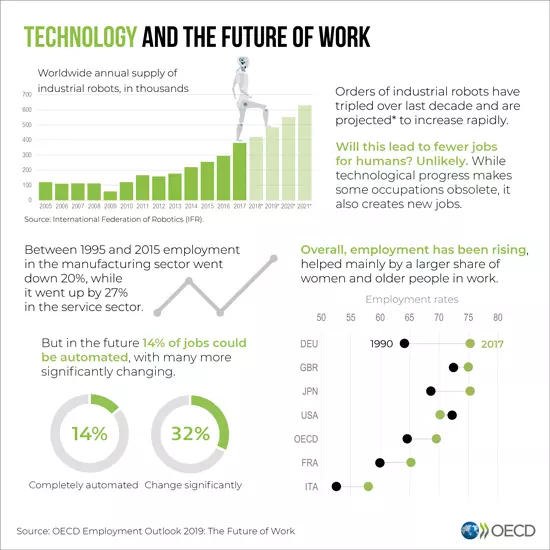

A report by PWC "Will robots really steal our jobs?" noted that "Potential automation rates vary widely by occupation – machine operators and assemblers could face a risk of over 60% by the 2030s, while professionals, senior officials and senior managers may face only around a 10% risk of automation. These variations stem from the different kinds of tasks performed in different occupations and their varied educational requirements."

The report by PWC noted that education was important for ensuring employment in the future.

Source for image above: PWC, "Will robots really steal our jobs?"

PWC also noted the implications for social policy whereby "The most obvious implication of our analysis is the need for increased investment in education and skills to help people adapt to technological change throughout their careers. While increased training in digital skills and science technology engineering and mathematics (STEM) subjects is one important element in this, it will also require retraining of, for example, truck drivers to take jobs in services sectors where demand is high but automation is less easy due to the importance of social skills and the human touch."

My personal view is that we need to consider ways in which we can make STEM more accessible and interesting for a much wider section of the population (across all demographics too). The future will be one of continuous learning in order to remain up to date with the latest technological developments and so as to not become obsolete in terms of skills.

The OECD published "Data on the future of work" and the following infographics are taken from the report, with the stress being on training and learning new skills:

We should expect robotics and automation to play a significant part in our daily lives in the period beyond 2025. In particular, the fields of robotics and AI will be heavily interlinked, with intelligent robots all around us running Deep Learning on the edge, in particular GANs and Deep Reinforcement Learning.

Ambassador for AI Tech North

Picture: Sherin Mathew and Imtiaz Adam AI Tech North conference in Leeds

AI will impact and transform each and every country across all regions and not just the major hubs such as California, Boston, New York, Toronto and London for example.

I have been appointed as an ambassador for AI Tech North and it was a pleasure to present the keynote on "AI and Strategy" and also the "Deep Learning and everything you need to know about it" session. This initiative is intended to enable the Midlands and the North of the UK to understand the potential of AI technology and how best to prepare for maximising the adoption of AI. I believe that similar initiatives will be important across other regions of Europe, the USA and indeed around the world so that we can all gain from the revolutionary changes that will arrive over the course of the next decade and beyond.

MIT Article reproduced with permission, original by Rachel Gordon MIT CSAIL

Teaching AI to connect senses like vision and touch

In Canadian author Margaret Atwood’s book The Blind Assassin, she says that “touch comes before sight, before speech. It’s the first language and the last, and it always tells the truth.”

While our sense of touch gives us a channel to feel the physical world, our eyes help us immediately understand the full picture of these tactile signals.

Image above credit: MIT CSAIL

Given tactile data, MIT CSAIL system can learn to see (and vice versa), suggesting future where robots can more easily grasp and recognize objects

Robots that have been programmed to see or feel can’t use these signals quite as interchangeably. To better bridge this sensory gap, researchers from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) have come up with a predictive AI that can learn to see by touching, and learn to feel by seeing.

The team’s system can create realistic tactile signals from visual inputs, and predict which object and what part is being touched directly from those tactile inputs. They used a KUKA robot arm with a special tactile sensor called GelSight, designed by another group at MIT.

Using a simple web camera, the team recorded nearly 200 objects, such as tools, household products, fabrics, and more, being touched more than 12,000 times. Breaking those 12,000 video clips down into static frames, the team compiled “VisGel”, a dataset of more than 3 million visual/tactile-paired images.

Image Above Credit: MIT CSAIL

“By looking at the scene, our model can imagine the feeling of touching a flat surface or a sharp edge”, says Yunzhu Li, CSAIL PhD student and lead author on a new paper about the system. “By blindly touching around, our model can predict the interaction with the environment purely from tactile feelings. Bringing these two senses together could empower the robot and reduce the data we might need for tasks involving manipulating and grasping objects.”

Recent work to equip robots with more human-like physical senses, such as MIT’s 2016 project using deep learning to visually indicate sounds, or a model that predicts objects’ responses to physical forces, both use large datasets that aren’t available for understanding interactions between vision and touch.

The team’s technique gets around this by using the VisGel dataset, and something called generative adversarial networks (GANs).

GANs use visual or tactile images to generate images in the other modality. They work by using a “generator” and a “discriminator” that compete with each other, where the generator aims to create real-looking images to fool the discriminator. Every time the discriminator “catches” the generator, it has to expose the internal reasoning for the decision, which allows the generator to repeatedly improve itself.
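The generator/discriminator contest described above can be illustrated with a deliberately tiny sketch. It is not the CSAIL system, which works on images: here the "data" are single numbers, the generator and discriminator are one-line models, and every name and setting (`generator`, `discriminator`, `train_step`, `train`, the learning rate, the real-data mean of 4.0) is an assumption made for illustration. The feedback the generator receives from being "caught" is read here as the gradient flowing back through the discriminator.

```python
import numpy as np

def sigmoid(t):
    return 1.0 / (1.0 + np.exp(-t))

def generator(z, a, b):
    # Hypothetical linear generator: turns noise z into fake samples.
    return a * z + b

def discriminator(x, w, c):
    # Hypothetical logistic discriminator: probability that x is real.
    return sigmoid(w * x + c)

def discriminator_loss(d_real, d_fake):
    # Cross-entropy: low when real samples score high and fakes score low.
    return -np.mean(np.log(d_real)) - np.mean(np.log(1.0 - d_fake))

def generator_loss(d_fake):
    # Non-saturating loss: low when the fakes are scored as real.
    return -np.mean(np.log(d_fake))

def train_step(real, z, params, lr=0.05):
    a, b, w, c = params
    fake = generator(z, a, b)

    # Discriminator update: gradient descent on discriminator_loss.
    d_real = discriminator(real, w, c)
    d_fake = discriminator(fake, w, c)
    grad_w = np.mean((d_real - 1.0) * real) + np.mean(d_fake * fake)
    grad_c = np.mean(d_real - 1.0) + np.mean(d_fake)
    w, c = w - lr * grad_w, c - lr * grad_c

    # Generator update: gradient descent on generator_loss, with the
    # gradient passed back through the freshly updated discriminator.
    d_fake = discriminator(fake, w, c)
    grad_fake = -(1.0 - d_fake) * w
    a = a - lr * np.mean(grad_fake * z)
    b = b - lr * np.mean(grad_fake)
    return a, b, w, c

def train(steps, seed=0, batch=256):
    rng = np.random.default_rng(seed)
    params = (1.0, 0.0, 0.0, 0.0)  # a, b, w, c
    for _ in range(steps):
        real = rng.normal(4.0, 1.0, size=batch)  # assumed "real" data
        z = rng.normal(0.0, 1.0, size=batch)     # generator noise
        params = train_step(real, z, params)
    return params
```

Calling `train(1)` runs a single round of the contest; running more steps should nudge the generator's offset `b` towards the real data, although a toy like this offers no convergence guarantee.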

Humans can infer how an object feels just by seeing it.

To better give machines this power, the system first had to locate the position of the touch, and then deduce information about the shape and feel of the region.

The reference images - without any robot-object interaction - helped the system encode details about the objects and the environment. Then, when the robot arm was operating, the model could simply compare the current frame with its reference image, and easily identify the location and scale of the touch.
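As a rough illustration of the comparison step only, and not the CSAIL model itself (which is a learned network), contrasting the current frame with a reference image can be sketched with plain frame differencing in NumPy. The function name `locate_change` and the threshold value are hypothetical choices for this sketch.

```python
import numpy as np

def locate_change(reference, current, threshold=0.2):
    """Return the bounding box (top, bottom, left, right) of the pixels
    that differ from the reference by more than `threshold`, or None if
    nothing changed. Row and column indices are inclusive."""
    diff = np.abs(current.astype(float) - reference.astype(float))
    mask = diff > threshold
    if not mask.any():
        return None
    rows = np.flatnonzero(mask.any(axis=1))  # rows containing a change
    cols = np.flatnonzero(mask.any(axis=0))  # columns containing a change
    return int(rows[0]), int(rows[-1]), int(cols[0]), int(cols[-1])

# Synthetic example: an empty scene, then a bright patch standing in
# for the robot arm touching the object.
reference = np.zeros((8, 8))
current = reference.copy()
current[2:5, 3:6] = 1.0
box = locate_change(reference, current)
```

The size of the returned box gives a crude notion of the scale of the change, and its position gives the location.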

This might look something like feeding the system an image of a computer mouse, and then “seeing” the area where the model predicts the object should be touched for pickup, which could vastly help machines plan safer and more efficient actions.

For touch to vision, the aim was for the model to produce a visual image based on tactile data. The model analyzed a tactile image, and then figured out the shape and material of the contact position. It then looked back to the reference image to “hallucinate” the interaction.

For example, if during testing the model was fed tactile data on a shoe, it could produce an image of where that shoe was most likely to be touched.

This type of ability could be helpful for accomplishing tasks in cases where there’s no visual data, like when a light is off, or, if a person is blindly reaching into a box or unknown area.

The current dataset only has examples of interactions in a controlled environment. The team hopes to improve this by collecting data in more unstructured areas, or by using a new MIT-designed tactile glove, to better increase the size and diversity of the dataset.

There are still details that can be tricky to infer from switching modes, like telling the color of an object by just touching it, or telling how soft a sofa is without actually pressing on it. The researchers say this could be improved by creating more robust models for uncertainty, to expand the distribution of possible outcomes.

In the future, this type of model could help with a more harmonious relationship between vision and robotics, especially for object recognition, grasping, better scene understanding, and helping with seamless human-robot integration in an assistive or manufacturing setting.

“This is the first method that can convincingly translate between visual and touch signals”, says Andrew Owens, a postdoctoral researcher at the University of California at Berkeley. “Methods like this have the potential to be very useful for robotics, where you need to answer questions like ‘is this object hard or soft?’, or ‘if I lift this mug by its handle, how good will my grip be?’ This is a very challenging problem, since the signals are so different, and this model has demonstrated great capability.”

Li wrote the paper alongside MIT professors Russ Tedrake and Antonio Torralba, and MIT postdoc Jun-Yan Zhu. It will be presented next week at The Conference on Computer Vision and Pattern Recognition in Long Beach, CA.

The section above was reproduced with permission from MIT CSAIL.

Advancing robotics technology with AI will result in significant improvements for manufacturing and healthcare, with gains across the economy, and enabling collaboration between humans and robots can yield productivity gains.

A Knowledge@Wharton article entitled "Humans Plus Robots: Why the Two Are Better Than Either One Alone" interviewed James Wilson of Accenture Research who stated that "We find in our research that companies that focus on human and machine collaboration create outcomes that are two to more than six times better than those that focus on machine or human alone. For instance, BMW has found that robot/human teams were about 85% more productive than the old assembly line process, where you had industrial robots over on one side of the factory and people working on an old automated assembly line. When they got rid of that set up and started bringing people and collaborative robots to work together, they really started to see those big productivity improvements that just weren’t possible through the old way of thinking about automation."

An example in relation to robotics in healthcare is provided by Emilia Marius, who noted in "6 Ways AI and Robotics Are Improving Healthcare" that "There is much work in a hospital, and not only doctors can use a helping hand. Nurses and hospital personnel can benefit from the help of robots such as the Moxi robot by Diligent Robotics. This robot takes care of restocking, bringing items and cleaning so that nurses can spend more time with patients and offer a human touch while leaving the grinding to the machine."

The scale of change anticipated over the next 20 to 30 years will require massive investment in infrastructure, education and training. It will also require fundamental changes in the way that we think.

Significant sums will need to be spent on transforming our cities and buildings, with the net benefit that we will become more energy efficient and that predictive analytics will mean fewer accidents (things going wrong in buildings or people getting injured).

We stand today at a period of unprecedented transition and many of our senior economists, accountants, lawyers, heads of education, regulators and indeed leaders of countries don't really understand the new wave of technology, let alone the impact that AI will make over the next 10 to 20 years.

Infrastructure investment decisions (factories, power plants) have long-term implications that involve generating a rate of return over a 15 to 25 year period (and often much longer horizons for transportation and property projects). Some of these investments are enabled by pension funds (in effect our money) and many of these investments will be impacted by the massive changes that we are on the verge of. There is a real need for investment managers, business leaders and politicians to start understanding the sheer scale of change that will emerge in the not too distant future.

Currently it has been mostly the social media giants that have understood the value and power of data. This will have to change across other sectors of the economy. The likes of Facebook, Google, Amazon, etc understood that investment in data and data driven decision making is the foundation for future revenue streams. Many in the finance and other sectors view data infrastructure and technology as a cost centre. Yet at the same time many of these firms want AI to magically generate huge revenues for them.

The companies that survive and thrive in this period are the ones that today realise that investment in data is not just a cost but also the means to generate future revenue.

In my opinion the true danger with AI and a dystopian future over the next 5 to 10 years relates not to AGI with Skynet and Terminator machines but rather to the control of information and the potential for misuse of algorithms on social media.

The social media giants have access to much of the data and have developed global platforms where national elections and referendum campaigns are increasingly fought. It is the place where companies, whether a global multinational or a young startup, need to turn in order to engage with their customers. Yet this sector is controlled by a few companies such as Facebook. So much of our information is obtained via social media. For example, Amanda Zantal-Wiener authored "68% of Americans Still Get Their News on Social Media, Even If They Don't Trust It", citing a study from the Pew Research Center.

AI has the potential to provide many benefits on social media. Machine Learning algorithms can help identify who might engage with particular content, but the question must also be asked: what happens when the algorithms go wrong? An algorithm could fail because of a problem that the developers have missed, or it could go wrong by deliberate design. Either way there needs to be a means of recourse.

A question that one must ask is whether we are sleepwalking into a world where our voices can be silenced and the information that we see and engage with is controlled by algorithms that may contain inherent flaws in their design.

It is understandable that the social media giants have to balance varying objectives from the different stakeholders with whom they interact. On the one hand, the investors in the platforms will want to see continued positive financial returns, which in turn means making use of the data the platforms possess. On the other hand, there is pressure from regulators and governments to prevent misuse, such as fake news campaigns that can impact national elections or referendums, and to prevent the use of social media by terrorist organisations and others promoting illegal and harmful acts.

There is considerable discussion in 2019 about the role of ethics in the development of AI algorithms and about ensuring that we avoid bias, including personal, subconscious bias that Data Scientists may not be aware of and may allow to permeate the data sets that models are trained upon.

Examples where AI algorithms have not worked as intended and raised questions about ethics include the following:

In a data-driven world AI algorithms will have a disproportionate impact, and there is a real danger that, at a time when our politicians and regulators have not yet caught up with the technology, real harm can be done to businesses (in particular to SMEs / startups) and individuals. In order to help rebuild trust, transparency and fairness, a number of influencers have grouped together to announce the creation of PositiveAI.

I wish to thank Jean Baptiste (@jblefevre60), Xavier Gomez (@Xbond49), Mark Lynd and Pierre Pinna (@pierrepinna) for agreeing to co-found a PositiveAI initiative. Our objective is to work with influencers and all of those amongst our audiences across social media to ensure the following objectives:

In summary, we are at a unique crossroads in human history, at a time when the ongoing digital revolution is taking us into a data-driven world where Machine Learning techniques will continue to gain momentum as data continues to grow at a rapid pace. We are entering a world of inter-connected devices where AI will play a key role in machine-to-machine and machine-to-human communications. In order to maximise the potential economic benefits and to bring positive developments for society, we need to ensure that an education and training revolution is put into place and that our politicians start to understand the potential of AI technology along with the changes needed across the landscape (infrastructure and skills) to leverage the technology.

Imtiaz Adam is a Hybrid Strategist and Data Scientist. He is focussed on the latest developments in artificial intelligence and machine learning techniques with a particular focus on deep learning. Imtiaz holds an MSc in Computer Science with research in AI (Distinction) University of London, MBA (Distinction), Sloan in Strategy Fellow London Business School, MSc Finance with Quantitative Econometric Modelling (Distinction) at Cass Business School. He is the Founder of Deep Learn Strategies Limited, and served as Director & Global Head of a business he founded at Morgan Stanley in Climate Finance & ESG Strategic Advisory. He has strong expertise in enterprise sales & marketing, data science, and corporate & business strategy.
