Artificial intelligence (AI) is set to change how the world works. Although it's not perfect, it is a game changer.

© Towards Data Science

AI is the main engine of the digital revolution. The COVID-19 crisis has accelerated the need for intelligent human-machine digital platforms that foster new knowledge, competences, and workforce skills, including advanced cognitive, scientific, technological, engineering, social, and emotional skills.

In the era of AI and robotics, there is high demand for scientific knowledge, digital competence, and high-technology training across a range of exponential technologies, such as artificial intelligence, machine learning and robotics, data science and big data, cloud and edge computing, the Internet of Things, 5G, cybersecurity, and digital reality.

The combined value of digital transformation across industries, to both society and industry, could be greater than $100 trillion over the next 10 years. The “combinatorial” effects of artificial intelligence (AI), machine learning (ML), deep learning (DL), and robotics together with mobile, cloud, sensors, and analytics, among others, are accelerating progress exponentially, but the full potential will not be achieved without collaboration between humans and machines.

Artificial intelligence and machine learning (ML) form the building blocks of next-generation technology. Their capabilities, such as computer vision, natural language processing, and advanced analytics, enable schools and businesses to create insightful data-driven solutions and contribute to the advancement of the global economy.

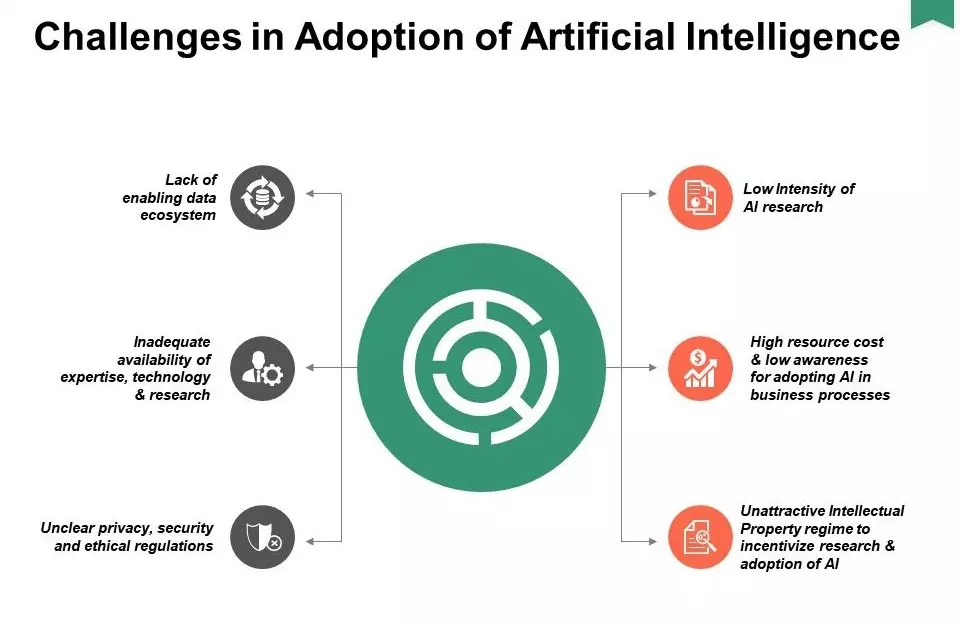

AI algorithms can produce biased results when the developers who write them carry their own biases into the design. Because the decision-making processes running in the background are not transparent, real users cannot be sure that the results are fair.

Most companies love data and like to keep it. The privacy of citizens is constantly put at risk when companies collect consumer data without prior permission, and AI makes this easy. Facial recognition algorithms are widely used across the world to support different applications and products. Some of these products collect and sell huge amounts of customer data without consent.

Artificial intelligence involves complex programming that is hard to explain to non-specialists. Moreover, the algorithms behind most AI-based products or applications are kept secret to avoid security breaches and similar threats. As a result, there is little transparency about the internal algorithms of AI products, which makes it difficult for customers to trust them.

When an AI system or product does something unethical, it’s challenging to assign blame or accountability. Earlier governance functions had to deal with static processes, but AI and data processes are iterative. Thus we need a governance process that can similarly adapt and change.

Much of artificial intelligence depends on tech companies training their models with labeled data. Data annotation (labeling) requires a large human workforce, and tech giants such as Google and Facebook hire large numbers of people who spend hours labeling data. The irony is that tech companies are trying to build smarter systems, yet those systems require substantial manual labor.

Current AI-based applications require not only labeled data but also massive amounts of it. The biggest players in AI, such as Amazon, Google, and Facebook, lead because they have access to so much data. Not all companies have access to data at that scale.

There are many aspects to data quality, including consistency, integrity, accuracy, and completeness. Modern systems need to be aware of the quality of the data they take in and put out. They must instantly identify potential issues and avoid exposing dirty, inaccurate, or incomplete data to connected production components or clients. This implies that, even if a sudden problem produces poor-quality data entries, the system can handle the quality issue and proactively notify the right users. Depending on how critical the issues are, it might deny serving data to its clients, or serve the data while raising an alert or flagging the potential issues.
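
The gating behavior described above can be sketched in Python. This is a minimal illustration under stated assumptions, not a reference implementation: the field names (`id`, `amount`, `currency`), the severity levels, and the `check_record` and `serve` helpers are all hypothetical and do not come from any particular library.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Callable, Optional


class Severity(IntEnum):
    OK = 0
    WARNING = 1   # serve the data, but flag it
    CRITICAL = 2  # refuse to serve the data


@dataclass
class QualityReport:
    severity: Severity = Severity.OK
    issues: list = field(default_factory=list)

    def add(self, severity: Severity, message: str) -> None:
        """Record an issue and keep the highest severity seen so far."""
        self.issues.append(message)
        self.severity = max(self.severity, severity)


def check_record(record: dict) -> QualityReport:
    """Run illustrative completeness and accuracy checks (assumed rules)."""
    report = QualityReport()
    # Completeness: required fields must be present.
    for name in ("id", "amount"):
        if record.get(name) is None:
            report.add(Severity.CRITICAL, f"missing required field: {name}")
    # Accuracy: an amount, when present, must be a non-negative number.
    amount = record.get("amount")
    if amount is not None and (not isinstance(amount, (int, float)) or amount < 0):
        report.add(Severity.CRITICAL, "amount must be a non-negative number")
    # Completeness (soft): a missing optional field only warrants a flag.
    if record.get("currency") is None:
        report.add(Severity.WARNING, "missing optional field: currency")
    return report


def serve(record: dict, notify: Callable[[str], None] = print) -> Optional[dict]:
    """Return the record, a flagged copy, or None if it must be withheld."""
    report = check_record(record)
    if report.severity is Severity.OK:
        return record
    # Proactively notify the responsible users about any issue found.
    notify(f"record {record.get('id')!r}: {'; '.join(report.issues)}")
    if report.severity is Severity.CRITICAL:
        return None  # deny serving dirty data to clients
    return {**record, "quality_flags": list(report.issues)}
```

With these assumed rules, a record that lacks only `currency` would be served with a `quality_flags` entry attached, while a record with a negative `amount` would be withheld and reported through `notify`. In a real system the checks, thresholds, and notification channel would depend on the data and the clients involved.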

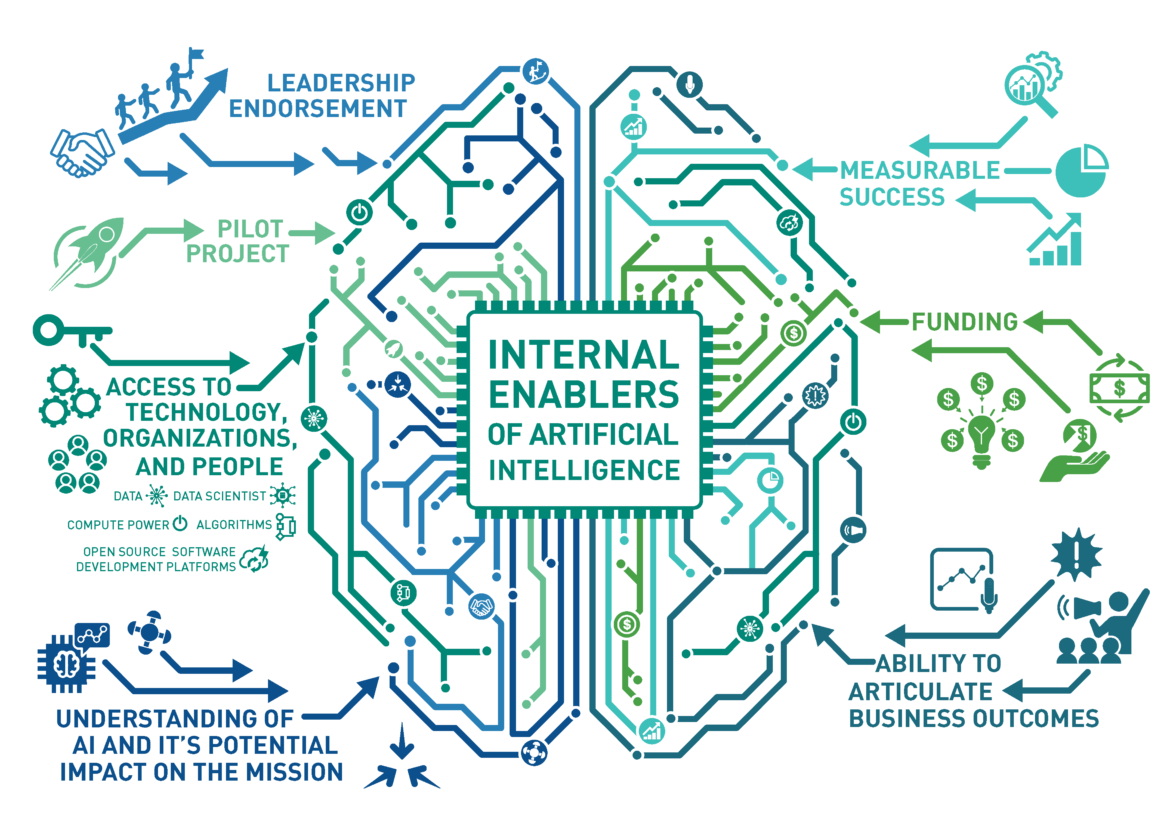

Reengineering: There is a great opportunity to redesign business processes and tasks around AI.

Organization and culture: AI is the child of big data and analytics, and is likely to face the same organization and culture issues as its parents.

Transparency: Companies should focus on increasing AI transparency by tackling poor-quality and unlabeled data.

Investment: One key way to address the challenges of artificial intelligence is to invest in research and development. Few companies demand ROI analysis both before and after implementation.

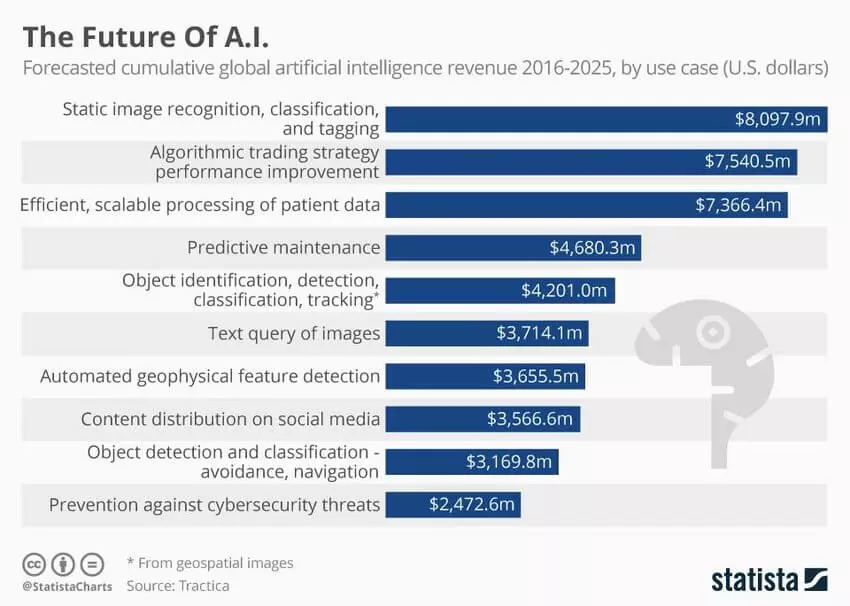

© Statista

To ensure appropriate use of artificial intelligence, companies need to embrace techniques that help them achieve fairness, security, and explainability. Responsible implementation of AI must reflect the ethics and values of an organization, thereby building trust among its customers, employees, and other stakeholders.

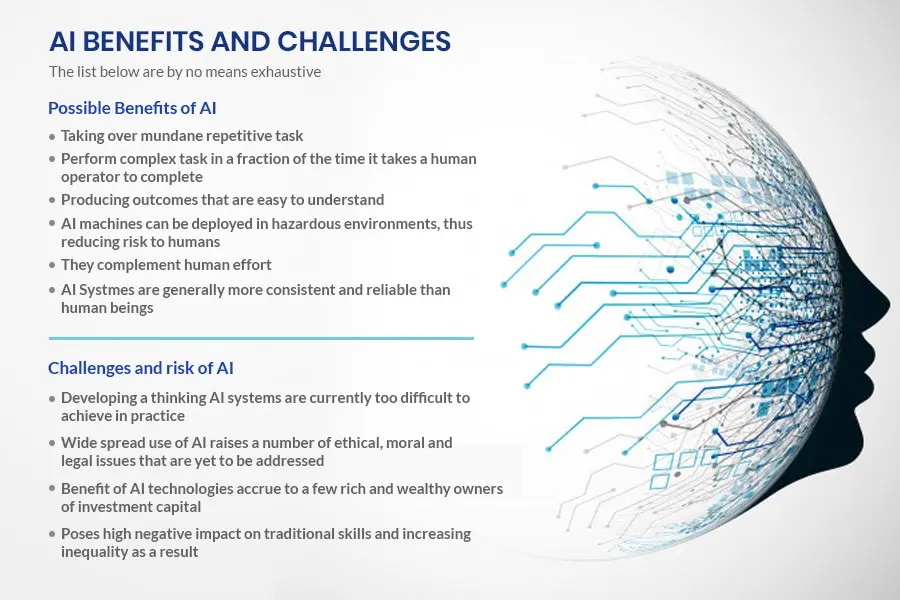

There is no doubt that the benefits and challenges of advanced technologies like AI are endless, but in seeking these new opportunities, we risk compromising the privacy and integrity of our society and its members. No matter what you may have heard, AI isn't going to solve all your problems. If you want to get the most out of AI, it's important to have the right expectations to avoid setting your team up for disappointment.
