Conflict is an inevitable part of human interaction, and it can be caused by a variety of factors, including differences in opinions, values, and interests.

Conflict can also shape artificial intelligence (AI) and the way it is developed.

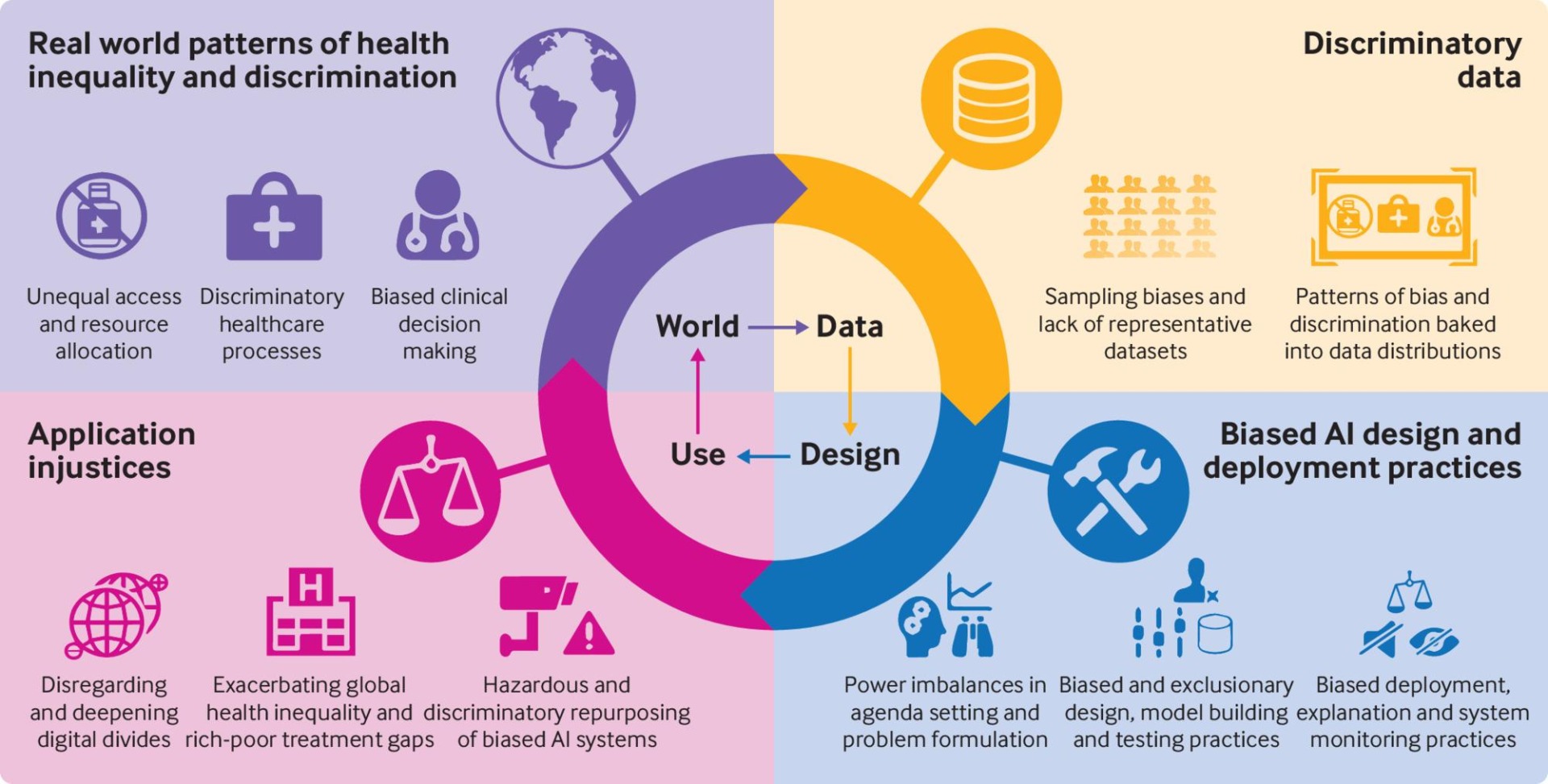

One example is facial recognition technology, which has been criticized for being biased against individuals from certain racial and ethnic backgrounds. This bias is thought to stem from a lack of diversity in the teams developing the technology and from biases in the data used to train the systems.

Another example is AI systems for hiring and recruitment, which have been criticized for perpetuating biases against certain groups, such as women and individuals from certain cultural backgrounds. Here too, the bias is thought to stem from the biases of the people developing the systems and of the data used to train them.

In this article, we will explore the neuroscience of conflict and its impact on AI, including the ways in which conflict can affect the development of AI systems, the potential risks of biased AI, and strategies for managing conflict in the AI industry.

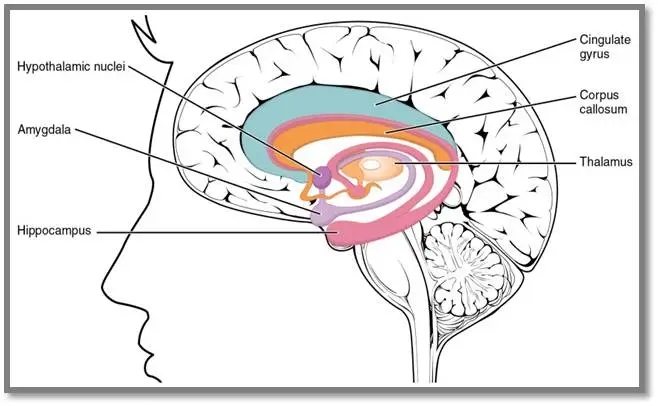

Conflict has been studied extensively by neuroscientists, who have identified several brain regions that are activated during conflict. One of these is the anterior cingulate cortex (ACC), which is associated with detecting and processing conflict. When the ACC is activated, it is thought to signal other regions of the brain, such as the prefrontal cortex, which is involved in decision-making, and the amygdala, which is involved in emotional processing.

Another brain region involved in conflict is the insula, which is associated with processing emotions and bodily sensations. The insula is activated during conflict, and this activation is thought to contribute to the emotional response.

Conflict can have a significant impact on the development of AI systems. One risk is biased AI. When conflicts arise, people often fall back on stereotypes and biases to make decisions, and these biases can be reflected in the systems they build.

For example, if a team developing an AI system is composed entirely of men, the system may be biased against women, because blind spots in how the team selects data and tests the system are more likely to go unnoticed. Similarly, if a team is composed entirely of individuals from one cultural background, the system may be biased against individuals from other cultures.
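One way to make a claim like "the system may be biased against women" checkable is to compare how often the system selects people from each group. The following is a minimal Python sketch, not a complete fairness audit: the function names, the group labels, and the decision data are all hypothetical and made up for illustration, and the ratio of selection rates is only one of several possible ways to summarize a disparity.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of selected candidates per group.

    `decisions` is an iterable of (group, was_selected) pairs.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def selection_rate_ratio(rates):
    """Return the lowest group rate divided by the highest.

    A value of 1.0 means all groups are selected at the same rate;
    values closer to 0 indicate a larger gap between groups.
    """
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so the rates are equal
    return min(rates.values()) / highest


# Hypothetical, made-up outputs of a screening model (not real data).
decisions = (
    [("men", True)] * 6 + [("men", False)] * 4
    + [("women", True)] * 3 + [("women", False)] * 7
)

rates = selection_rates(decisions)
ratio = selection_rate_ratio(rates)
print(rates)
print(ratio)
```

With these made-up numbers, men are selected at a rate of 0.6 and women at a rate of 0.3, so the ratio is 0.5. A low ratio does not explain where a gap comes from, but it gives a team a concrete number to discuss instead of an impression.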

Conflict can also cause delays and mistakes in the development process. When conflicts arise, team members may focus less on the task at hand and more on the conflict itself, which can slow development and introduce errors into the AI system.

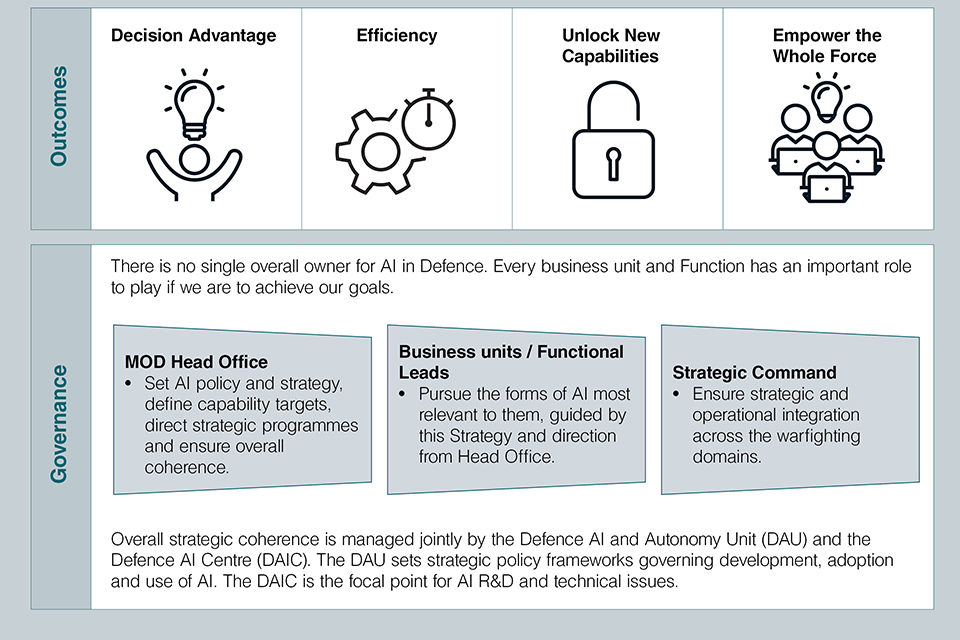

To manage conflict in the AI industry, it is important to establish clear communication channels and to ensure that team members are trained to recognize and manage conflicts effectively. One effective strategy is to establish clear guidelines for the development process, including the roles and responsibilities of team members and the decision-making process.

Another strategy is to ensure that team members are diverse and represent a range of perspectives and backgrounds. This can help to reduce the potential for bias in AI systems and can also lead to more innovative solutions.

Finally, it is important to establish a culture of respect and collaboration within the AI industry. In such a culture, team members are more likely to work together effectively and to resolve conflicts constructively.

Conflict is an inevitable part of human interaction, and it can have a significant impact on the development of AI systems. By understanding the neuroscience of conflict and its impact on AI, we can develop strategies for managing conflict effectively and for reducing the potential for bias in AI systems.

To manage conflict in the AI industry, it is important to establish clear guidelines for the development process, promote diversity within development teams, and foster a culture of respect and collaboration. These strategies can help to ensure that AI systems are developed in a responsible and ethical manner, and that they neither discriminate against certain groups nor perpetuate harmful stereotypes.

Overall, the neuroscience of conflict and its impact on AI is a complex topic that requires careful consideration and attention. By understanding the potential risks of conflict in the development of AI systems, we can take steps to mitigate these risks and ensure that AI is developed in a responsible and ethical manner. This will require ongoing research, collaboration, and a commitment to promoting diversity and inclusion within the AI industry.
