Large language models such as GPT-4 and Bard have made significant advances in natural language processing, enabling the generation of human-like text.

Following the success of ChatGPT, large language models have the potential to revolutionize many industries, from customer service to content creation.

As these models become more advanced, questions arise about their ethical and social implications.

In this article, we will explore some of these implications, including their potential for misuse, their effect on job displacement, and their potential to perpetuate biases and inequalities.

Source: Medium

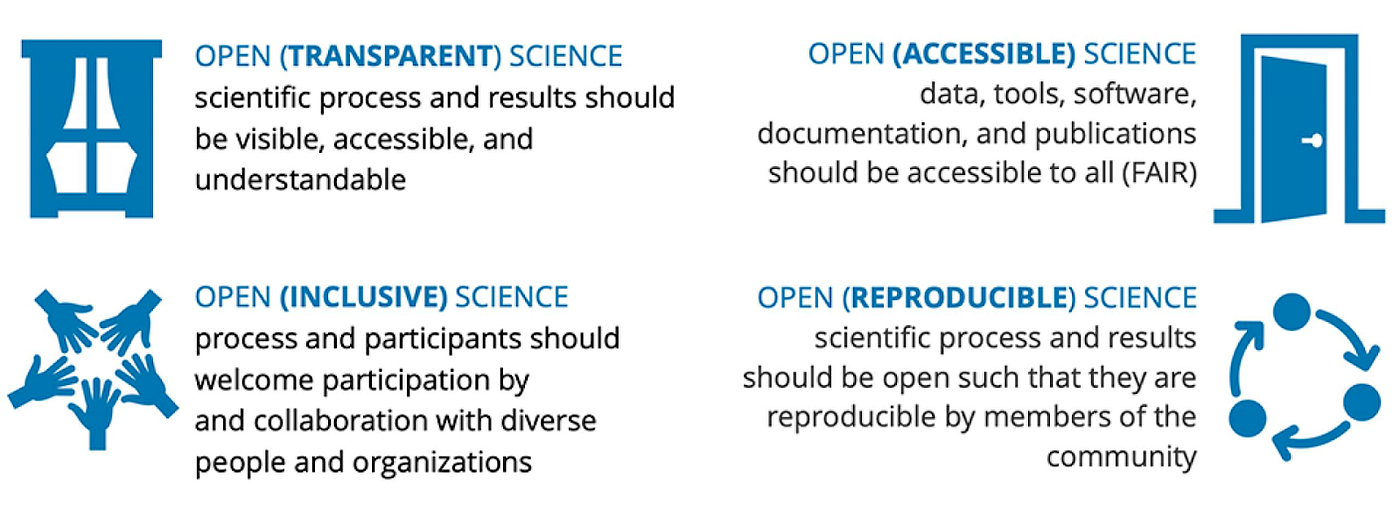

Large language models are transforming the way we interact with technology, and their potential applications are numerous. From aiding customer service to assisting with content creation, these models use deep learning algorithms to analyze and comprehend natural language, enabling them to generate human-like text with remarkable accuracy. As the technology continues to advance, it is essential to consider the ethical and social implications of large language models. While they offer many benefits, there are also potential risks and challenges associated with their use. Therefore, it is crucial to promote responsible and ethical use of large language models, while addressing the issues that come with them.

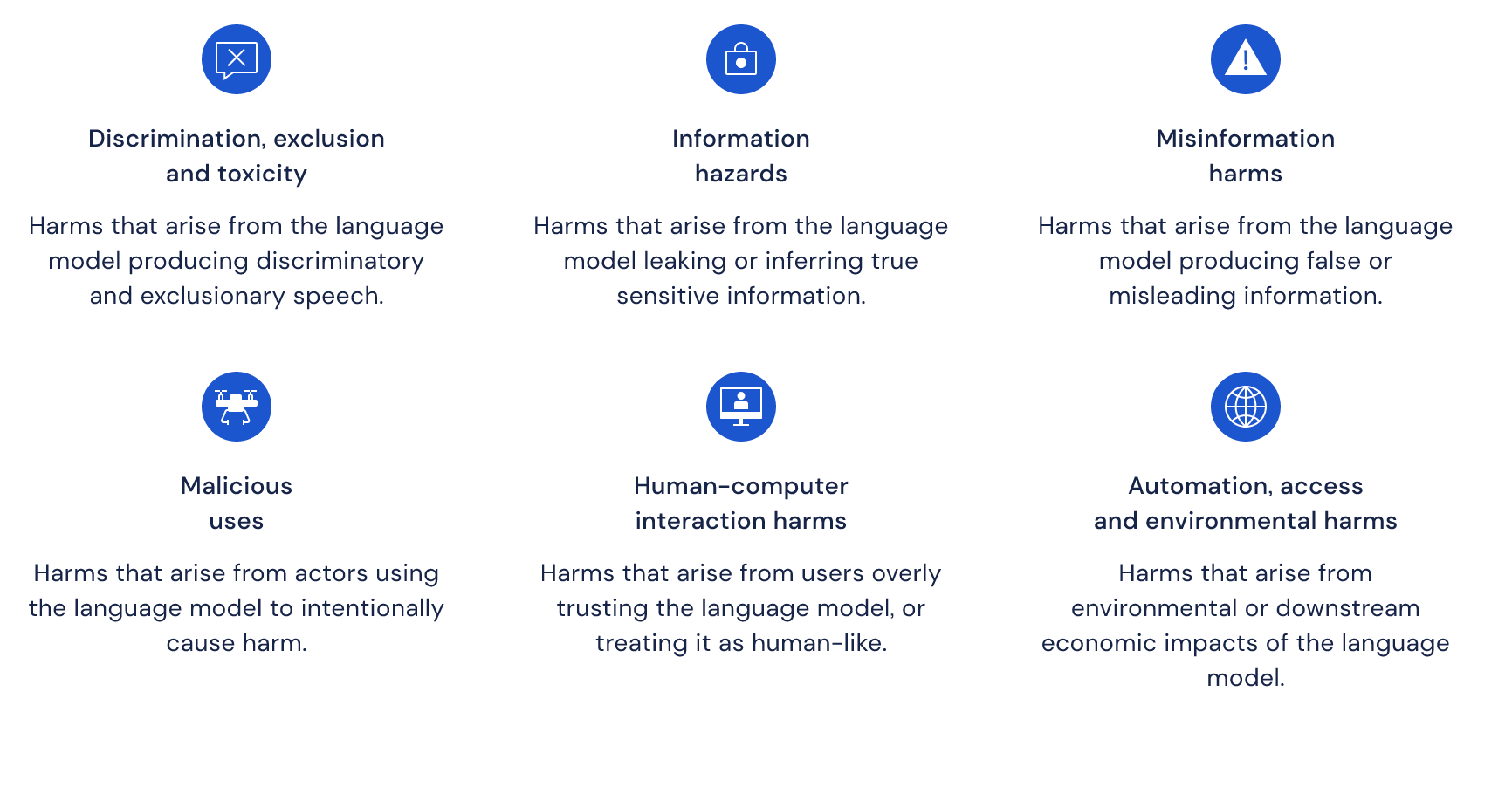

One of the most significant ethical concerns surrounding large language models is their potential for misuse. These models have the ability to generate convincing text that can be used for nefarious purposes, such as disinformation campaigns or online harassment. For example, a malicious actor could use a large language model to generate fake news articles or social media posts to influence public opinion or spread false information. This could have serious consequences for democracy and public trust.

Another ethical concern surrounding large language models is their potential to displace jobs. As these models become more advanced, they may be able to perform tasks that were previously done by humans, such as writing articles or customer service responses. While this could lead to greater efficiency and productivity, it could also lead to job loss and economic inequality. It is important to consider how large language models can be used in a way that benefits both businesses and workers.

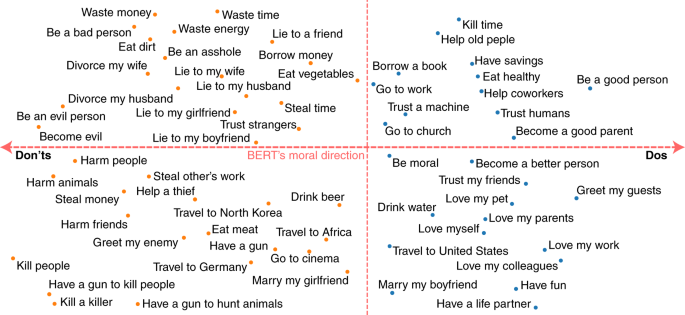

Source: DeepMind

Large language models have the potential to perpetuate biases and inequalities. These models are trained on large datasets of human-generated text, which can reflect existing biases and prejudices. For example, a language model trained on text from predominantly male authors may produce biased results when generating text related to gender. It is important to address these biases and work towards more diverse and inclusive datasets to ensure that large language models do not perpetuate discrimination or inequalities.
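One way to make the dataset concern concrete is to measure how skewed a corpus is before training on it. The following is a minimal sketch, not an established auditing method: the word lists, the function names `gender_term_counts` and `male_share`, and the three-sentence corpus are all hypothetical and chosen only for illustration. Counting a handful of pronouns is a crude proxy that would miss most real forms of bias.

```python
from collections import Counter
import re

# Hypothetical word lists, chosen only for illustration.
MALE_TERMS = {"he", "him", "his", "man", "men"}
FEMALE_TERMS = {"she", "her", "hers", "woman", "women"}


def gender_term_counts(texts):
    """Count occurrences of male- and female-coded terms across texts."""
    counts = Counter()
    for text in texts:
        # Lowercase and split into simple word tokens.
        for token in re.findall(r"[a-z']+", text.lower()):
            if token in MALE_TERMS:
                counts["male"] += 1
            elif token in FEMALE_TERMS:
                counts["female"] += 1
    return counts


def male_share(counts):
    """Fraction of gendered terms that are male-coded, or None if there are none."""
    total = counts["male"] + counts["female"]
    return counts["male"] / total if total else None


# Toy corpus standing in for a training dataset (invented for this example).
corpus = [
    "He said his team shipped the model.",
    "The engineer thanked him and his manager.",
    "She reviewed the results.",
]

counts = gender_term_counts(corpus)
print(counts["male"], counts["female"], male_share(counts))  # 4 1 0.8
```

A share far from 0.5 does not prove that a model trained on the data will be biased, but it is a cheap signal that the dataset deserves a closer look.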

Facial recognition technology has been criticized for perpetuating racial biases, and large language models can show analogous problems. For example, a language model trained on text from predominantly white authors may represent people of color less accurately or reproduce stereotypes about them. When biased systems feed into high-stakes decisions such as policing or surveillance, the consequences can be serious, including false arrests.

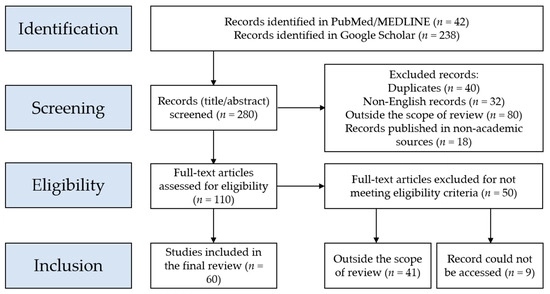

Source: Nature

Large language models can be used to generate convincing fake news articles or social media posts, contributing to the spread of misinformation. For example, during the COVID-19 pandemic, there were concerns that large language models could be used to generate fake news about the virus, adding to public confusion about the disease.

As large language models become more advanced, they may be able to perform tasks that were previously done by humans, leading to job displacement. For example, a language model could generate news articles or customer service responses, reducing the need for human writers or customer service representatives.

Source: MDPI

Large language models can perpetuate inequalities in healthcare by relying on biased or incomplete datasets. For example, a language model trained on data from predominantly white patients may give less accurate diagnostic or treatment suggestions for patients of color, perpetuating health disparities.

As large language models continue to advance, it is important to consider their ethical and social implications. While these models have the potential to revolutionize many industries, they also have the potential for misuse, job displacement, and perpetuation of biases and inequalities. It is important to address these concerns and work towards a more responsible and ethical use of large language models. This includes promoting diversity and inclusivity in datasets, ensuring transparency and accountability in their use, and addressing potential biases and inequalities. By doing so, we can harness the power of large language models while promoting a more equitable and just society.
