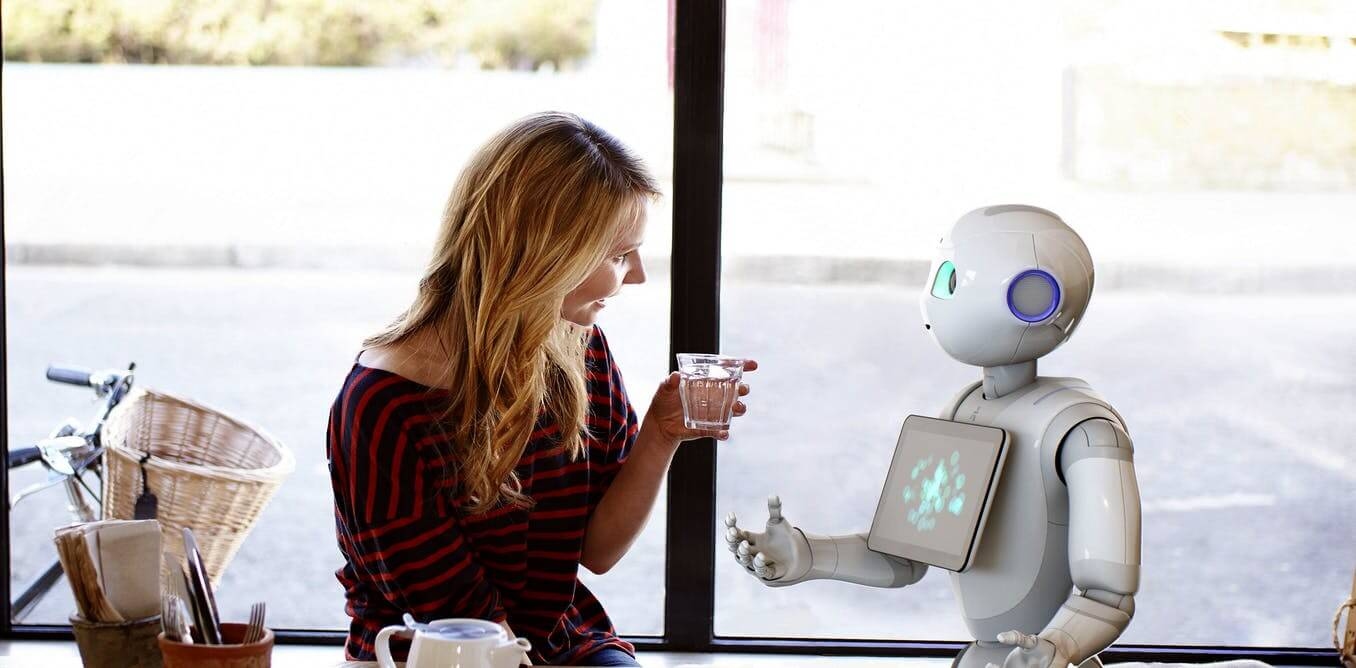

Artificial intelligence (AI) machine learning is fueling the current commercial boom in automation, and robots are becoming increasingly sophisticated.

In a step toward endowing robots with human-like behavior, researchers at Columbia University showed how AI machine learning can predict a robot’s future actions by observation alone, and published their results earlier this month in the Nature Research journal Scientific Reports.

Empathy, the ability to understand and relate to another person’s feelings or experiences vicariously, is a competency that is decidedly within the biological realm. Or is it? Can a machine be endowed with some semblance of this capability?

This ability to mentalize, known as theory of mind, involves inferring what others are feeling and thinking. Jean Piaget (1896-1980) was a Swiss psychologist and epistemologist known for his contributions to understanding the cognitive development of children. According to Piaget, the thought processes of children are mostly egocentric until the age of four, when their executive functioning starts to improve and theory of mind emerges.

The phrase “theory of mind” was put forth by University of Pennsylvania researchers David Premack and Guy Woodruff when they published their study on primate intelligence in The Behavioral and Brain Sciences in 1978.

“An individual has a theory of mind if he imputes mental states to himself and others,” wrote Premack and Woodruff. “A system of inferences of this kind is properly viewed as a theory because such states are not directly observable, and the system can be used to make predictions about the behavior of others.”

Columbia University professor Hod Lipson led the study with contributions from researchers Carl Vondrick and Boyuan Chen. The team sought to test their hypothesis that a robot can model the behavior of another via observation only. What sets their research apart from other AI machine behavior modeling studies is that their design does not depend on prior input assumptions or symbolic information.

The researchers created an actor robot that carries out pre-programmed behaviors and an AI observer that makes image predictions using a deep learning architecture.

“Our goal is to see if the observer can predict the long-term outcome of the actor’s behavior, rather than simply the next frame in a video sequence, as do many frame-to-frame predictors,” the researchers wrote.

The deep neural network has an encoder network where a batch normalization layer and a ReLU non-linear activation function follow each convolutional layer. The decoder network is multi-scale, designed to transform rough feature representations to a higher resolution. The network is optimized using a Mean Square Error loss. Overall, there are 609,984 neurons, 570,000 parameters, and 12 fully convolutional layers in the image prediction network.
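To make the building blocks named above concrete, here is a minimal pure-Python sketch of one encoder step (convolution, then batch normalization, then ReLU) and of a mean squared error loss between a predicted and a target image. It is an illustration only, not the researchers’ code: the function names, the tiny 3x3 images, and the 2x2 kernel are all assumptions, the batch normalization omits the learned scale and shift used in practice, and the multi-scale decoder is not covered.

```python
import math

def conv2d_single(image, kernel):
    """Valid 2D cross-correlation of one single-channel image with one kernel."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

def batch_norm(feature_maps, eps=1e-5):
    """Normalize a batch of single-channel maps to zero mean and unit variance.

    Simplification: no learned scale or shift parameters.
    """
    values = [v for fmap in feature_maps for row in fmap for v in row]
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    scale = 1.0 / math.sqrt(var + eps)
    return [[[(v - mean) * scale for v in row] for row in fmap]
            for fmap in feature_maps]

def relu(feature_maps):
    """Clamp negative activations to zero."""
    return [[[max(0.0, v) for v in row] for row in fmap]
            for fmap in feature_maps]

def encoder_block(batch, kernel):
    """Convolution followed by batch normalization and ReLU, as in the encoder."""
    conv = [conv2d_single(image, kernel) for image in batch]
    return relu(batch_norm(conv))

def mse_loss(predicted, target):
    """Mean squared error over all pixels of two equally sized images."""
    diffs = [(p - t) ** 2
             for prow, trow in zip(predicted, target)
             for p, t in zip(prow, trow)]
    return sum(diffs) / len(diffs)

# Hypothetical toy data: a batch of two 3x3 "camera images" and one 2x2 kernel.
batch = [
    [[0.0, 1.0, 0.0], [1.0, 1.0, 1.0], [0.0, 1.0, 0.0]],
    [[1.0, 0.0, 1.0], [0.0, 0.0, 0.0], [1.0, 0.0, 1.0]],
]
kernel = [[1.0, 0.0], [0.0, 1.0]]
features = encoder_block(batch, kernel)

# Loss between a hypothetical predicted image and the image actually observed.
loss = mse_loss([[0.0, 1.0], [1.0, 0.0]], [[0.0, 0.5], [1.0, 0.0]])
```

In a real network the kernel weights would be adjusted during training so that this loss between the predicted image and the true outcome image shrinks.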

The AI observer “sees” raw images from an overhead camera that records the actor robot in a pen. The observer receives no accompanying information with the raw images, such as motor commands, segmentation, trajectory coordinates, or data labels. Its output is a single image of what it predicts will result from the actor robot’s actions; in other words, a visual representation of its observations.

The AI observer was shown only a limited number of images. According to the researchers, the AI observer’s task was similar to predicting how a movie will end based solely on viewing the opening scene.

“Our observer AI achieves a 98.5% success rate on average across all four types of behaviors of the actor robot,” reported the researchers.

“The ability of a machine to predict actions and plans of other agents without explicit symbolic reasoning, language, or background knowledge, may shed light on the origins of social intelligence, as well as pave a path towards more socially adept machines.”

Copyright © 2021 Cami Rosso All rights reserved.

A version of this article first appeared on Psychology Today.
