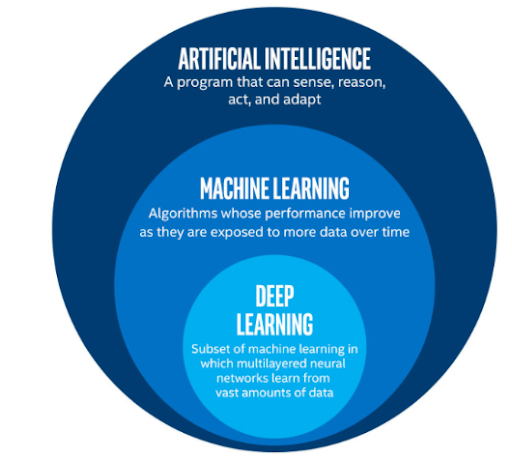

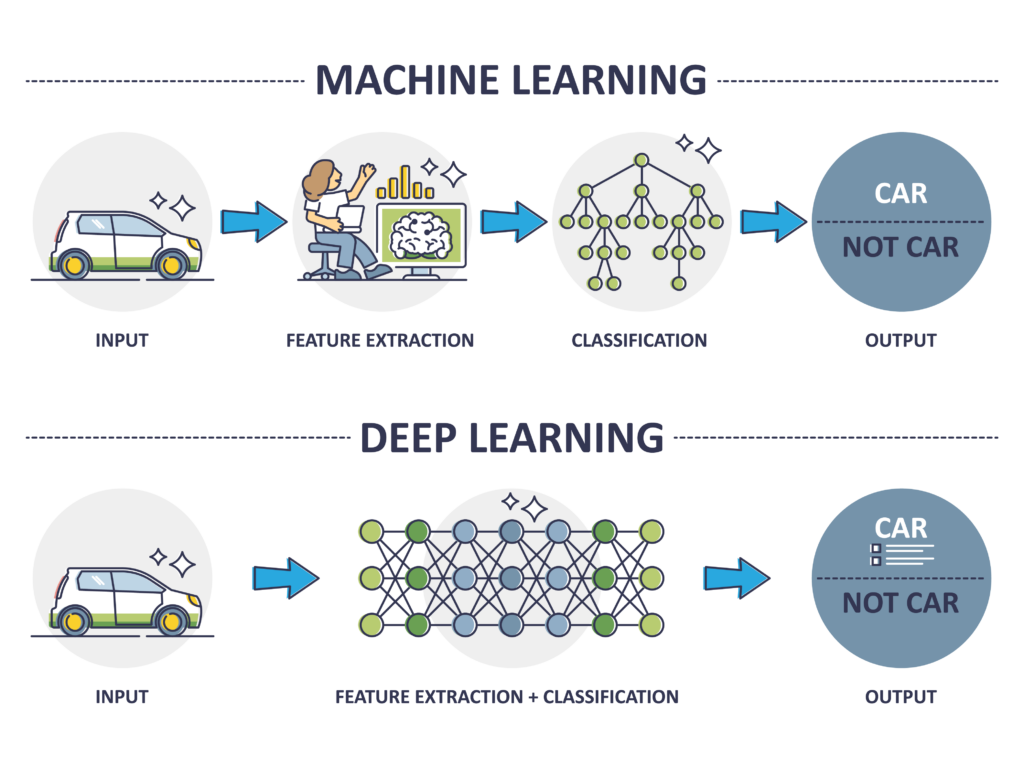

Deep learning is a type of machine learning that trains artificial neural networks with many layers on large datasets.

Deep learning algorithms learn by example: rather than following hand-written rules, they identify patterns and features in the data and make decisions based on them. Deep learning is used in a variety of applications, such as image and speech recognition, natural language processing, and game playing. It has led to significant advances in many fields and is a key part of the current development of artificial intelligence.
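To make "learning by example" concrete, here is a minimal sketch in plain Python. It is not a deep network: it trains a single linear neuron, the basic unit that deep networks stack into layers, using gradient descent. The function name `train_neuron`, the toy data, and the learning rate are illustrative assumptions and do not come from any particular library.

```python
def train_neuron(examples, lr=0.05, epochs=2000):
    """Fit y ~ w * x + b to (x, y) examples with full-batch gradient descent."""
    w, b = 0.0, 0.0
    n = len(examples)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in examples) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in examples) / n
        # Move each parameter a small step against its gradient.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Toy examples generated from the rule y = 2x + 1 (made up for illustration).
examples = [(x, 2 * x + 1) for x in range(5)]
w, b = train_neuron(examples)
print(round(w, 3), round(b, 3))
```

The neuron is never told the rule behind the data. It only sees input and output pairs, and the learned values should end up close to w = 2 and b = 1.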

Deep learning has been successful in a wide range of applications, including:

Image and video analysis: Deep learning has been used to develop object recognition systems, facial recognition systems, and image and video captioning systems.

Natural language processing: Deep learning has been used to develop language translation systems, text summarization systems, and chatbots.

Speech recognition: Deep learning has been used to develop speech recognition systems that can transcribe spoken words into written text.

Medical diagnosis: Deep learning has been used to develop systems that can diagnose diseases from medical images and predict patient outcomes.

Fraud detection: Deep learning has been used to identify fraudulent transactions in financial systems.

Robotics: Deep learning has been used to develop control systems for robots that can learn to perform tasks through trial and error.

Game playing: Deep learning has been used to develop game-playing AI that can beat human opponents at complex games like chess and Go.

These are just a few examples of the many applications of deep learning.

There are several trends in deep learning that are currently making a big impact in the field:

Self-supervised learning: This is a type of learning where the model learns from large amounts of unlabeled data by solving tasks derived from the data itself, such as predicting a masked word in a sentence. The resulting model can then be fine-tuned for a specific task using only a small amount of labeled data.

Generative models: These are models that are able to generate new data that is similar to the training data. This has applications in a variety of fields, such as generating images, text, and even music.

Explainable AI: There is a growing need for AI systems to be able to explain their decision-making process. This is especially important in fields such as healthcare and finance, where the consequences of incorrect decisions can be significant.

Transfer learning: This is the ability of a model to learn from one task and apply that knowledge to a related task. This can significantly reduce the amount of labeled data and computational resources needed to train a model for a new task.

Edge computing: There is a growing trend towards deploying deep learning models on devices at the edge of the network, such as smartphones and IoT devices. This allows for real-time decision-making and reduces the need to transmit large amounts of data to the cloud for processing.
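The transfer learning idea above can be sketched in a few lines of plain Python. This is a simplified illustration under stated assumptions: the "pretrained" weights in `PRETRAINED_W` and `PRETRAINED_B` are made-up constants standing in for a layer learned on an earlier task, and `extract_features`, `train_head`, `predict`, and the toy target task are hypothetical names chosen for this example. Only a small new output layer (the "head") is trained, while the pretrained layer stays frozen.

```python
import math

# Hypothetical "pretrained" layer. In practice these weights would come from
# training on a large source task; here they are made-up constants.
PRETRAINED_W = [[1.0, -1.0], [-1.0, 1.0], [0.5, 0.5]]
PRETRAINED_B = [0.0, 0.0, -0.5]

def extract_features(x):
    """Frozen feature extractor: ReLU(W x + b). It is never updated."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x)) + b)
            for row, b in zip(PRETRAINED_W, PRETRAINED_B)]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(x, head_w, head_b):
    """Probability of class 1 from the new head on top of frozen features."""
    f = extract_features(x)
    return sigmoid(sum(w * fi for w, fi in zip(head_w, f)) + head_b)

def train_head(data, lr=0.5, epochs=200):
    """Train only the new logistic head with stochastic gradient descent."""
    head_w, head_b = [0.0, 0.0, 0.0], 0.0
    losses = []
    for _ in range(epochs):
        total = 0.0
        for x, y in data:
            f = extract_features(x)
            p = sigmoid(sum(w * fi for w, fi in zip(head_w, f)) + head_b)
            total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
            grad = p - y  # gradient of the log loss w.r.t. the head's logit
            head_w = [w - lr * grad * fi for w, fi in zip(head_w, f)]
            head_b -= lr * grad
        losses.append(total / len(data))  # running average loss for the epoch
    return head_w, head_b, losses

# Toy target task with only four labeled examples: "do the inputs differ?"
target_data = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 0)]
head_w, head_b, losses = train_head(target_data)
print([round(predict(x, head_w, head_b), 2) for x, _ in target_data])
```

Because the frozen layer already provides useful features, the new task only has to fit four head parameters, which is why transfer learning can get by with little labeled data and compute.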

There are several ways in which deep learning can be improved:

Increase the amount and quality of training data: Deep learning algorithms rely on large amounts of high-quality training data in order to learn effectively. Increasing the amount and quality of training data can lead to improved performance.

Develop better optimization algorithms: The training process for deep learning models is computationally intensive and can be time-consuming. Developing better optimization algorithms that can train deep learning models more efficiently could greatly improve the speed and effectiveness of these models.

Improve generalization: Deep learning models can sometimes overfit to the training data, leading to poor performance on unseen data. Improving the ability of these models to generalize to new data could greatly improve their performance.

Increase interpretability: Deep learning models can sometimes be difficult to interpret, which can be a problem in fields such as healthcare and finance where the consequences of incorrect decisions can be significant. Developing techniques to improve the interpretability of these models could make them more widely adopted in these fields.

Use hybrid approaches: Combining deep learning with other machine learning techniques, such as decision trees and support vector machines, can lead to improved performance and increased interpretability.
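One widely used and simple way to act on the "improve generalization" point is early stopping: monitor the loss on held-out validation data and stop training once it stops improving. The sketch below assumes the per-epoch validation losses are already available as a list. The function name `best_epoch_with_early_stopping`, the `patience` parameter, and the numbers are illustrative and not taken from a specific framework.

```python
def best_epoch_with_early_stopping(val_losses, patience=2):
    """Return (best_epoch, best_loss), stopping after `patience` bad epochs."""
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            # Validation loss improved: remember this epoch and reset the counter.
            best_loss = loss
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break  # stop training; later epochs are never looked at
    return best_epoch, best_loss

# Made-up validation losses: they improve, then rise as the model overfits.
val_losses = [0.9, 0.7, 0.6, 0.65, 0.7, 0.5]
print(best_epoch_with_early_stopping(val_losses, patience=2))
```

With a patience of 2, training stops after two epochs without improvement, so the final value in the list is never reached. A larger patience trades extra training time for a lower risk of stopping too early.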

There are a few limitations to deep learning that are worth considering:

Data requirements: Supervised deep learning typically requires a large amount of labeled data to train on, which can be expensive and time-consuming to obtain.

Lack of interpretability: Deep learning models can be difficult to interpret, as they often consist of many layers of non-linear transformations. This lack of interpretability can make it hard to understand why a model is making certain predictions.

Overfitting: Deep learning models have a tendency to overfit to the training data, which means that they may perform poorly on unseen data.

Computational resources: Training deep learning models can be computationally intensive and may require specialized hardware such as graphics processing units (GPUs).

Lack of domain knowledge: Deep learning algorithms do not have an inherent understanding of the domain they are being applied to and rely on the data to learn patterns. This can be a limitation if the data is not representative of the problem at hand.

Despite these limitations, deep learning has achieved significant success in many applications and is an active area of research and development.

Several more specific research directions could address these limitations:

Better optimization algorithms: Training deep learning models can be difficult, as the models often have a large number of parameters and highly non-convex loss functions. Developing optimization algorithms that train these models more efficiently and reliably could improve their performance.

More effective regularization: Regularization is a technique used to prevent overfitting in deep learning models. Developing more effective regularization methods could help to improve the generalization performance of these models.

Better handling of unbalanced datasets: Deep learning models can perform poorly on unbalanced datasets, where there are significantly more examples of one class than another. Developing techniques to better handle unbalanced datasets could improve the performance of deep learning models on these types of datasets.

More interpretable models: As mentioned earlier, deep learning models can be difficult to interpret, which can make it hard to understand why a model is making certain predictions. Developing techniques to create more interpretable models could help to improve the transparency and accountability of these models.

Integration with domain knowledge: Incorporating domain knowledge into deep learning models could improve their performance on specific tasks. For example, a deep learning model for medical diagnosis could be improved by incorporating medical knowledge about the relationships between different diseases and symptoms.

Overall, there is still much room for improvement in deep learning, and researchers are actively working on developing new techniques to address these and other challenges.
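As an illustration of the point about unbalanced datasets, one common and simple technique is to weight each class inversely to its frequency, so that mistakes on the rare class cost more during training. The sketch below is a minimal version in plain Python. The function names `balanced_class_weights` and `weighted_log_loss`, the toy labels, and the hypothetical model outputs are assumptions made for this example.

```python
import math
from collections import Counter

def balanced_class_weights(labels):
    """Inverse-frequency weights: n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}

def weighted_log_loss(labels, true_class_probs, weights):
    """Cross-entropy where each example counts in proportion to its class weight.

    true_class_probs[i] is the probability the model gave to the correct class.
    """
    total_weight = sum(weights[y] for y in labels)
    weighted_sum = sum(weights[y] * -math.log(p)
                       for y, p in zip(labels, true_class_probs))
    return weighted_sum / total_weight

# Made-up unbalanced dataset: nine negatives, one positive.
labels = [0] * 9 + [1]
weights = balanced_class_weights(labels)

# A hypothetical model that always predicts class 0 with probability 0.9.
true_class_probs = [0.9] * 9 + [0.1]
plain = weighted_log_loss(labels, true_class_probs, {0: 1.0, 1: 1.0})
weighted = weighted_log_loss(labels, true_class_probs, weights)
print(weights, round(plain, 3), round(weighted, 3))
```

A model that simply favors the majority class looks acceptable under the plain loss but is penalized much more heavily under the weighted loss, which pushes training to pay attention to the rare class.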

There are several exciting developments in deep learning that are likely to shape the future of the field:

Increased use of unstructured data: Unlike traditional machine learning methods, which work best on structured, tabular data, deep learning can learn directly from unstructured data such as images and text. There is a growing trend towards applying it to further kinds of data, such as video, audio, and graphs. This will allow for a wide range of new applications, such as improved speech recognition and video analytics.

Improved interpretability and transparency: There is a growing need for deep learning models to be more interpretable and transparent, especially in fields such as healthcare and finance where the consequences of incorrect decisions can be significant. Researchers are working on developing new techniques to improve the interpretability of deep learning models.

Adoption of federated learning: Federated learning is a technique where a shared model is trained across multiple devices, each of which keeps its data locally and sends only model updates to be combined. Because the data never has to be centralized, this has the potential to greatly increase the amount of data available for training, and could lead to significant improvements in the performance of deep learning models.

Development of hybrid approaches: There is a trend towards combining deep learning with other machine learning techniques, such as decision trees and support vector machines. This can lead to improved performance and increased interpretability.

Increased use of deep learning in industry: Deep learning is already being used in a wide range of industries, and this trend is likely to continue as more and more companies adopt this technology. This will lead to the development of new applications and the creation of new jobs in the field.
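The federated learning idea described above can be sketched as repeated rounds of local training followed by a weighted average of the resulting model parameters, which is the aggregation step used in federated averaging. This is a toy sketch under stated assumptions: the model is a single weight `w` in y = w * x, and `local_update`, `federated_round`, the client data, and the hyperparameters are hypothetical names and values chosen for illustration.

```python
def local_update(w, data, lr=0.01, steps=5):
    """Run a few gradient descent steps on one client's private data."""
    for _ in range(steps):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w

def federated_round(global_w, clients):
    """One round: clients train locally, the server averages their weights."""
    # Only the updated weight and the sample count leave each client.
    updates = [(local_update(global_w, data), len(data)) for data in clients]
    total = sum(n for _, n in updates)
    # Clients with more data get proportionally more influence.
    return sum(w * n for w, n in updates) / total

# Made-up private datasets on three devices, all following y = 3x.
clients = [
    [(1, 3), (2, 6)],
    [(3, 9)],
    [(4, 12), (5, 15), (6, 18)],
]

global_w = 0.0
for _ in range(20):
    global_w = federated_round(global_w, clients)
print(round(global_w, 4))
```

The server never sees any (x, y) pair, yet the shared weight should still approach 3, the value consistent with every client's data.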
