Artificial Intelligence (AI) has become ubiquitous by revolutionizing numerous industries.

We are rapidly entering an era of AI everywhere, in which end users are increasingly accustomed to mass personalisation at scale. Businesses that fail to provide it risk losing customers to rivals that use AI to create customised services and user experiences.

The 5th Gen Intel Xeon Scalable Processor was launched on 14 December 2023, during an era of rapid advancement in the AI sector. That advancement is driven in particular by business and public interest in Generative AI models, which have amassed many users among both the general public and the business community. #IntelAmbassador

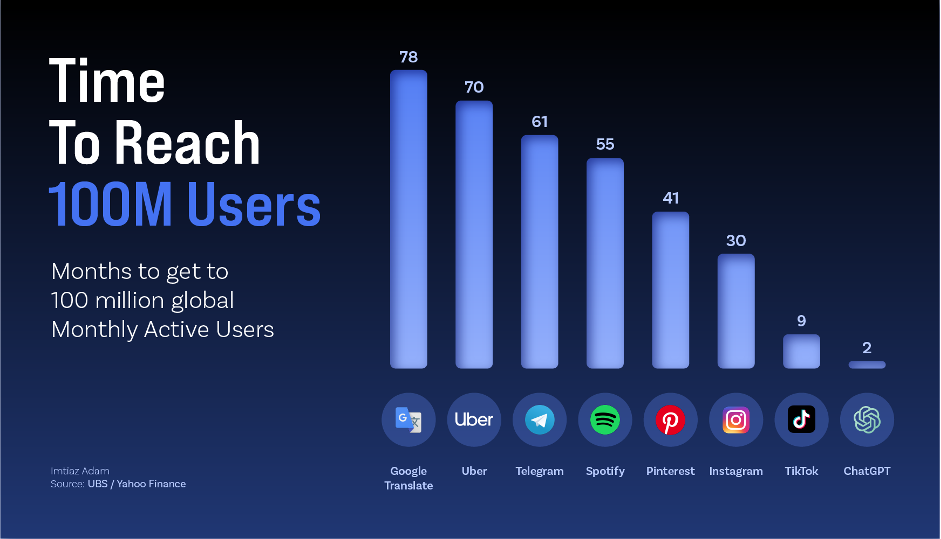

Source: UBS / Yahoo Finance

The chart above shows that ChatGPT from OpenAI took the world by storm, amassing 100m users in a mere 2 months. Threads from Meta (Instagram) attained 100m users even faster; nevertheless, the chart illustrates how rapidly Generative AI is being adopted by users.

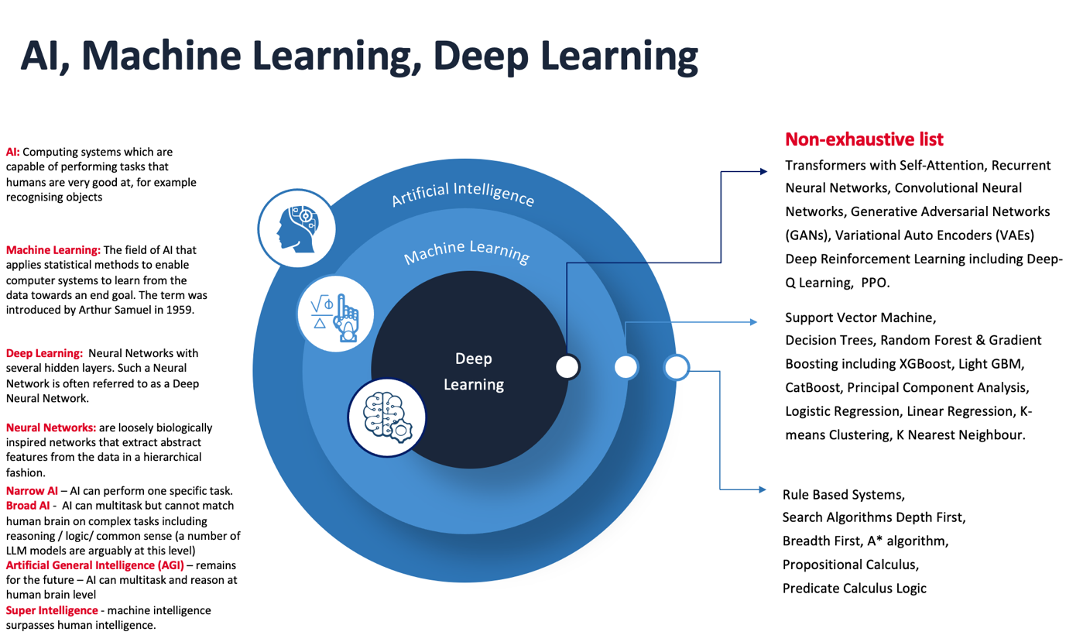

Taking a step back and reminding ourselves of the journey and some of the key types of AI are set out below:

Source: Imtiaz Adam

Much of the current excitement in AI is being driven by Generative AI, in particular those models applying Transformers with Self-Attention (often combined with Deep Reinforcement Learning). This has allowed end users and businesses to create content, and has allowed businesses to develop better state-of-the-art virtual agents, including chatbots. However, such models are also computationally expensive, resulting in high energy consumption and hence a meaningful carbon footprint.

If we are able to find ways to scale Generative AI efficiently, then there are tangible economic benefits. For example, McKinsey estimates that applying Generative AI to customer care functions may increase productivity by a value ranging from 30% to 45% of existing function costs, and that in R&D it may enhance productivity by 10% to 15% of overall costs. The same McKinsey report also estimates that Generative AI may raise the productivity of the marketing function by a value between 5% and 15% of total marketing spending.

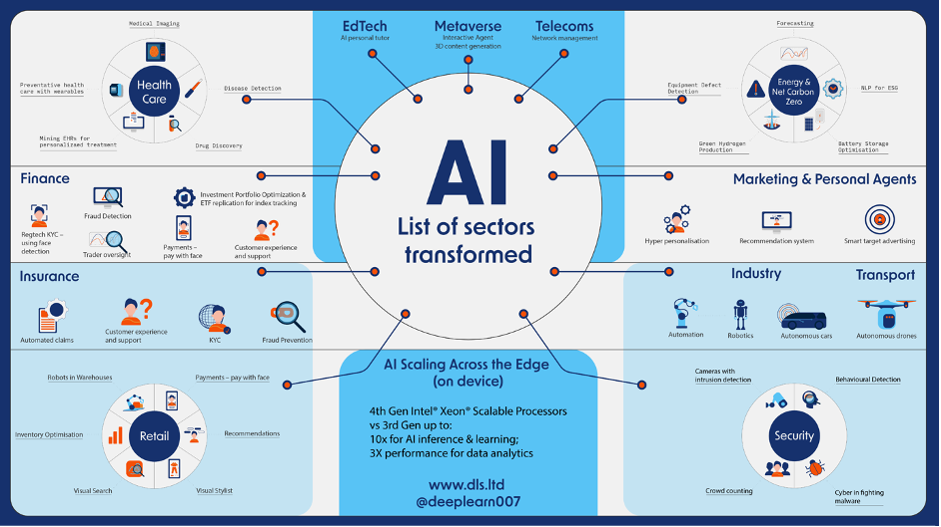

Generative AI can assist companies with sentiment analysis, document analysis and summarization, and text-to-image creation. Examples of how AI may transform different sectors of the economy are set out below.

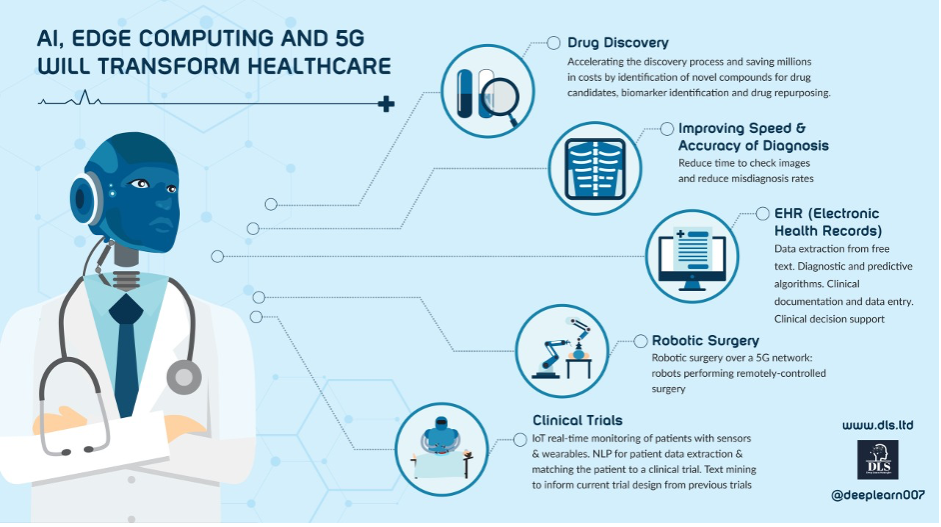

Healthcare: medical imaging, remote monitoring, natural language processing to analyse electronic health records (EHRs), de novo drug discovery and the delivery of personalised medicine;

Education: personal tutors to provide customised educational support to meet the needs of the individual student;

Marketing: Generative AI for personalised content creation, tailored content targeted towards those more likely to have an interest in the products or services and personalised offers and recommendations;

Transportation: navigation for autonomous vehicles, vehicle health checks and monitoring;

Construction: Generative AI for design and digital twins;

Security: Intruder detection, predictive analytics, crowd control warnings;

Cyber Security: Malware threat detection and protection;

Manufacturing: Predictive analytics including for unplanned outage detection, automated defective parts analysis;

Financial Services & Investment: Fintech solutions for automated credit analysis, equity research, ESG classification, portfolio construction, risk management, factor investing, insurance automated claims management, underwriting risk assessment and pricing;

Customer Relationship Management (CRM) and customer experience (CX): chatbots for customer engagement;

Energy: Drones with computer vision to check for defects in solar panels and wind turbine blades, weather forecasting, renewable energy production forecasting, energy demand forecasting, battery storage optimisation, smart grids;

Smart Cities: urban traffic planning, smart and intelligent buildings to optimise energy consumption;

Retail: personalised recommendations, inventory management, product demand forecasting, supply chain optimisation;

Accounting: Fine-tuned LLMs may read and analyse specific documents and spreadsheets, and assist with invoicing documents.

Legal sector: natural language for research assistance, case management, invoice management, contract drafting.

For a recap, see How AI is Transforming the Sectors of the Economy, and see AI Everywhere for how Intel 5th Gen Xeon Scalable Processors are advancing this further.

A substantial amount of latency would ruin the customer experience (CX), and high computational requirements may simply result in Generative AI models proving too costly to adopt at scale. Latency is the time between a client device (for example a mobile phone, tablet, laptop or any other internet-connected device facing the user) sending a request and receiving the response from a server (often a remote cloud-based server where the data and analytics reside). High latency can result in a poor and unsatisfactory user experience, or even in potentially dangerous situations where the client needs the response for key decision making.
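As a rough illustration of the latency definition above, the sketch below times round trips as seen from the client side and reports the median and an approximate 95th percentile. The `fake_server_call` function is a hypothetical stand-in for a real network request and is not taken from the article or from any Intel API.

```python
import statistics
import time
from typing import Callable, Dict, List


def fake_server_call(payload: str) -> str:
    """Hypothetical stand-in for a remote request; sleeps to mimic server work."""
    time.sleep(0.005)
    return payload.upper()


def measure_latency_ms(call: Callable[[str], str], payload: str, runs: int = 20) -> List[float]:
    """Return the client-observed round-trip time of each call, in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call(payload)
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def summarise(samples: List[float]) -> Dict[str, float]:
    """Median and approximate 95th percentile of the samples."""
    ordered = sorted(samples)
    p95_index = min(len(ordered) - 1, int(0.95 * len(ordered)))
    return {"median_ms": statistics.median(ordered), "p95_ms": ordered[p95_index]}


if __name__ == "__main__":
    print(summarise(measure_latency_ms(fake_server_call, "hello")))
```

Reporting a tail percentile alongside the median is a common choice here, because a small share of slow responses can dominate how users perceive a service.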

The latest generation of Intel Xeon Scalable Processors can address this issue and help businesses and the public at large adopt Generative AI models powered by LLMs on a more efficient basis.

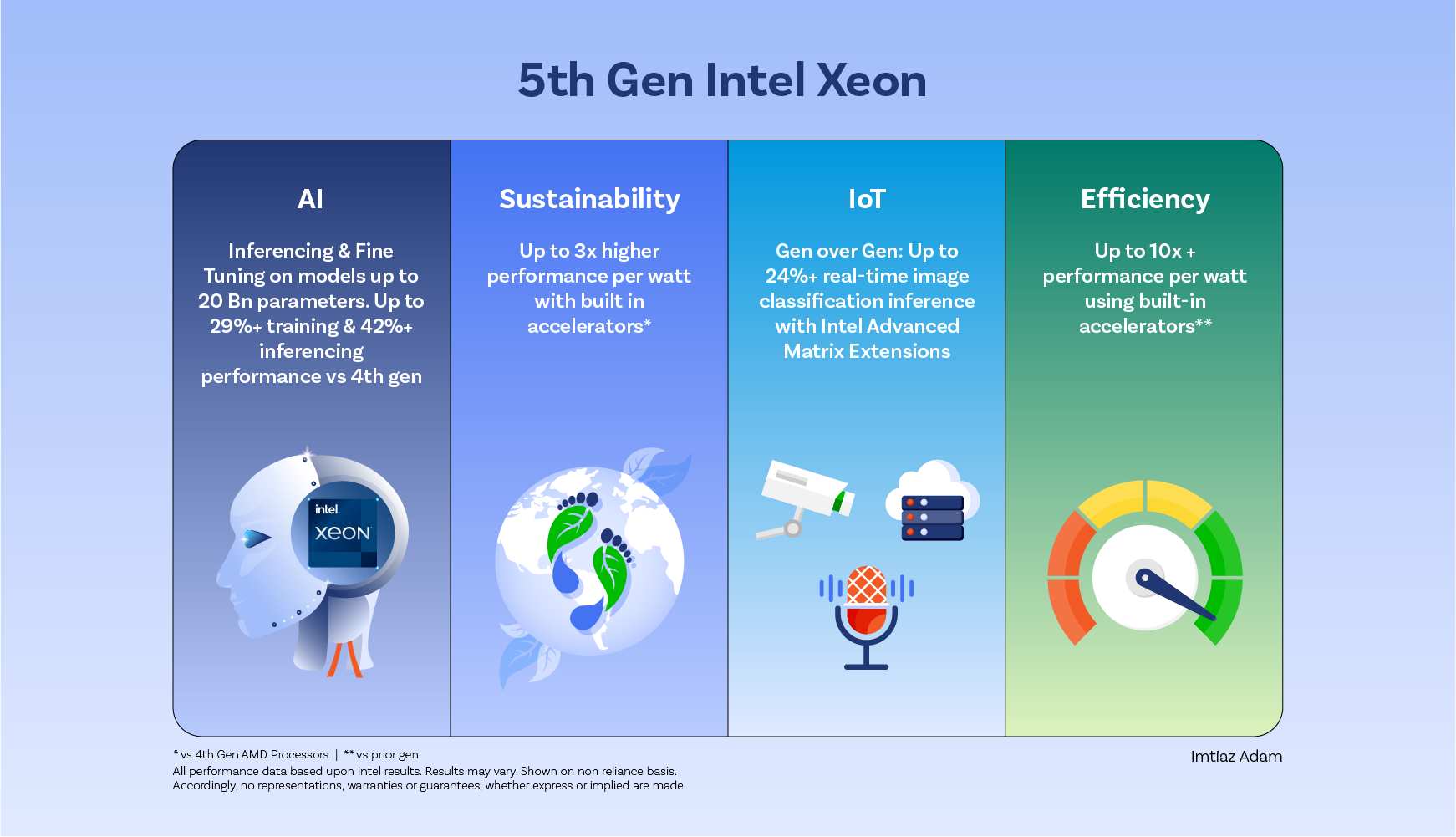

Examples from Intel in relation to the 5th Gen Xeon Scalable Processors and AI Everywhere are provided below:

With Intel Advanced Matrix Extensions (Intel AMX), 5th Gen Xeon Scalable processors make generative AI more accessible on the CPU, allowing the user to do more before they need to access an accelerator.

With AI acceleration in every core, 5th Gen Intel® Xeon® processors are ready to handle demanding AI workloads—including inference and fine tuning on models up to 20 billion parameters —before the need to add discrete accelerators.

This supports service-level agreements (SLAs) for a real-time user experience, with token latency below 100 ms on LLMs under 20 billion parameters.

The specific performance enhancements entail: Up to 13% average first token speedup and up to 22% average second token speedup on GPT-J vs 4th Gen Intel Xeon processors.

Up to 2.3x average first token speedup and up to 64% average second token speedup on GPT-J relative to 3rd Gen Intel Xeon processors.

Up to 12% speeding up for first token latency and

Up to 2.1x speedup for first token latency and up to 48% speedup for second token latency on LLaMA-2 13B vs. 3rd Gen Intel Xeon processors.
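To make the first token and second token figures above more concrete, the sketch below shows one way a client could measure per-token latency from a streaming model and compare it with a 100 ms budget. The `fake_token_stream` generator and its delays are hypothetical placeholders rather than measurements, and the article does not describe how Intel benchmarked these numbers, so this is only an illustration of the idea.

```python
import time
from typing import Dict, Iterable, Iterator, List


def fake_token_stream(n_tokens: int, first_delay_s: float = 0.03,
                      next_delay_s: float = 0.01) -> Iterator[str]:
    """Hypothetical streaming LLM: a slower first token, faster later tokens."""
    for i in range(n_tokens):
        time.sleep(first_delay_s if i == 0 else next_delay_s)
        yield f"tok{i}"


def token_latencies_ms(stream: Iterable[str]) -> List[float]:
    """Time between successive tokens as seen by the consumer, in milliseconds."""
    latencies = []
    last = time.perf_counter()
    for _ in stream:
        now = time.perf_counter()
        latencies.append((now - last) * 1000.0)
        last = now
    return latencies


def check_budget(latencies_ms: List[float], budget_ms: float = 100.0) -> Dict[str, object]:
    """Split first-token from later-token latency; expects at least one sample."""
    first, rest = latencies_ms[0], latencies_ms[1:]
    mean_next = sum(rest) / len(rest) if rest else 0.0
    return {
        "first_token_ms": first,
        "mean_next_token_ms": mean_next,
        "within_budget": max(latencies_ms) < budget_ms,
    }


if __name__ == "__main__":
    print(check_budget(token_latencies_ms(fake_token_stream(5))))
```

The first token is timed separately because it typically includes processing the whole prompt, whereas each later token only extends the existing output.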

5th Gen Intel Xeon Scalable Processors deliver fast, personalized product or content recommendations that don’t slow down the user experience with a Deep Learning-based recommender system that accounts for real-time user behavior signals and context features, such as time and location. 5th Gen Intel® Xeon® Scalable processors feature Intel® Advanced Matrix Extensions (Intel® AMX), a built-in accelerator that speeds up Deep Learning inference and accelerates small model training on the CPU. Performance improvements include:

Up to 2.34x higher batched inference performance on DLRM (INT8) vs. 4th Gen AMD EPYC.

Up to 24% higher batched inference performance on DLRM vs. 4th Gen Intel Xeon processors.

Smoother experiences with faster responses

Enable more responsive smart assistants, chatbots, predictive text, language translation, and more with a performance leap in natural language processing (NLP) inference. 5th Gen Intel® Xeon® Scalable Processors deliver:

Up to 9.9x higher real-time inference performance on BERT-Large vs. 3rd Gen Intel Xeon processors with FP32.

Up to 7x higher real-time inference performance on DistilBERT vs. 3rd Gen Intel Xeon processors with FP32.

With Intel® oneAPI Deep Neural Network Library (oneDNN) software optimizations already integrated into the mainstream distributions of TensorFlow and PyTorch, developers can more easily access the benefits of built-in AI acceleration. Intel® Software Development Tools give developers the freedom to migrate code across different hardware architectures and vendors with comparable performance, increasing productivity and future-readiness without costly and time-consuming challenges.
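Because these optimizations ship inside mainstream PyTorch, no Intel-specific code is needed to reach them. One common pattern is to run inference under bfloat16 autocast on the CPU, as in the minimal sketch below. The toy model is hypothetical, the function returns `None` if PyTorch is not installed, and whether Intel AMX is actually used depends on the hardware and the PyTorch build, which this code does not verify.

```python
import importlib.util


def run_bf16_cpu_inference():
    """Run a toy model under CPU bfloat16 autocast.

    Returns (dtype name, output shape), or None when PyTorch is unavailable.
    """
    if importlib.util.find_spec("torch") is None:
        return None  # PyTorch not installed; nothing to demonstrate
    import torch

    # Hypothetical toy model, used only to exercise linear layers.
    model = torch.nn.Sequential(
        torch.nn.Linear(64, 32),
        torch.nn.ReLU(),
        torch.nn.Linear(32, 8),
    ).eval()
    batch = torch.randn(4, 64)

    # autocast lets eligible ops (such as linear layers) run in bfloat16 on the CPU.
    with torch.inference_mode(), torch.autocast(device_type="cpu", dtype=torch.bfloat16):
        output = model(batch)
    return str(output.dtype), tuple(output.shape)


if __name__ == "__main__":
    print(run_bf16_cpu_inference())
```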

For more intensive AI needs, add purpose-built Intel® Gaudi® AI accelerators to expand your CPU-based foundation.

Up to 1.19x (BF16) and 1.23x (INT8) vs. 4th Gen and up to 9.9x (BF16) and 9.2x (INT8) vs. 3rd Gen Intel® Xeon® processors. See A19 at intel.com/processorclaims: 5th Gen Intel Xeon Scalable processors. Results may vary.

Up to 1.41x (BF16) and 1.35x (INT8) vs. 4th Gen and up to 7x (BF16) and 2.9x (INT8) vs. 3rd Gen Intel® Xeon® processors. See A24 at intel.com/processorclaims: 5th Gen Intel Xeon Scalable processors. Results may vary.

Up to 1.24x (BF16) and 1.24x (INT8) vs. 4th Gen and up to 8.7x (BF16) and 5.5x (INT8) vs. 3rd Gen Intel® Xeon® processors. See A20 at intel.com/processorclaims: 5th Gen Intel Xeon Scalable processors. Results may vary.

The 5th Generation Intel Xeon Scalable Processor enables high speed Machine Learning on a CPU.

Classical Machine Learning plays a crucial role in high-performance computing (HPC) and AI applications, from life sciences to finance to academic research. With large memory, fast cores, and Intel® Advanced Vector Extensions 512 (Intel® AVX-512), 5th Gen Intel® Xeon® Scalable processors deliver excellent Machine Learning training and inference performance.

With the Intel® AI software portfolio, developers can accelerate end-to-end Machine Learning and data science pipelines. These tools include optimized frameworks, a model repository, Intel® Extension for Scikit-learn and Intel® Optimization for XGBoost for machine learning, accelerated data analytics through the Intel® Distribution of Modin, optimized core Python libraries, and samples for end-to-end workloads.
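As an example of one of these tools, Intel® Extension for Scikit-learn is enabled by calling `patch_sklearn()` from the `sklearnex` package before importing scikit-learn estimators. The sketch below assumes nothing is installed: it falls back to stock scikit-learn when `sklearnex` is missing and returns `None` when scikit-learn itself is missing. The six-point dataset is a hypothetical toy input, not a benchmark.

```python
import importlib.util


def enable_intel_sklearn() -> bool:
    """Patch scikit-learn with Intel's accelerated estimators if available."""
    if importlib.util.find_spec("sklearnex") is None:
        return False  # fall back to stock scikit-learn
    from sklearnex import patch_sklearn

    patch_sklearn()  # should run before estimators are imported from sklearn
    return True


def fit_demo_kmeans():
    """Cluster a tiny toy dataset; returns sorted cluster sizes, or None."""
    if importlib.util.find_spec("sklearn") is None:
        return None
    enable_intel_sklearn()
    from sklearn.cluster import KMeans

    # Two well-separated groups of three points each (hypothetical data).
    points = [[0.0, 0.0], [0.1, 0.2], [0.2, 0.1],
              [5.0, 5.0], [5.1, 4.9], [4.9, 5.2]]
    labels = KMeans(n_clusters=2, n_init=10, random_state=0).fit_predict(points)
    return sorted(int((labels == k).sum()) for k in (0, 1))


if __name__ == "__main__":
    print(fit_demo_kmeans())
```

The rest of the scikit-learn code stays unchanged after patching, which is the main appeal of this approach for existing pipelines.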

Furthermore, Intel claims that the 5th Gen Xeon Scalable Processor covers a wider range of the AI pipeline than NVIDIA GPUs, whereby the user may:

Navigate the entire AI pipeline with Intel® Xeon® processors that excel at a wider range of AI tasks than NVIDIA GPUs, from data preprocessing to inference.

Train small and medium deep learning models on a CPU in just minutes. With Intel® Advanced Matrix Extensions (Intel® AMX), the user receives a built-in matrix multiplication engine that offers discrete accelerator performance without the added hardware and complexity of a GPU.
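Before relying on these features, it can help to confirm that a given machine actually exposes them. The sketch below is a Linux-only check that reads the CPU flags from `/proc/cpuinfo` and looks for the AMX and AVX-512 flag names the kernel reports there. It assumes the x86 `flags` line format; on other systems every feature simply reports `False`.

```python
from pathlib import Path
from typing import Dict, Set

# Flag names as they appear on Linux kernels that report these features.
FEATURES = ("amx_tile", "amx_bf16", "amx_int8", "avx512f")


def parse_cpu_flags(cpuinfo_text: str) -> Set[str]:
    """Collect the flags from the first 'flags' line of /proc/cpuinfo content."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            return set(value.split())
    return set()


def feature_report(flags: Set[str]) -> Dict[str, bool]:
    """Map each feature of interest to whether the CPU reports it."""
    return {name: name in flags for name in FEATURES}


def local_feature_report(path: str = "/proc/cpuinfo") -> Dict[str, bool]:
    """Report for this machine; all False when the file is absent."""
    cpuinfo = Path(path)
    text = cpuinfo.read_text() if cpuinfo.exists() else ""
    return feature_report(parse_cpu_flags(text))


if __name__ == "__main__":
    print(local_feature_report())
```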

It is also noted that the majority of data center AI inference deployments today run on Intel® Xeon® processors, indicating the level of trust.

Intel also claims that with large memory, fast cores, and Intel® Advanced Vector Extensions 512 (Intel® AVX-512), Intel Xeon processors deliver better machine learning training and inference performance than NVIDIA GPUs.

It is also anticipated that the edge (in particular the Internet of Things, IoT) will continue to grow and scale during 2024, and hence hardware resources that allow for AI decision making on the device will be key. The next article in this series will take a closer look at the edge and the potential of AI and the IoT to assist in sustainability and the fight against climate change. For now, it may be noted that the Intel 5th Gen Xeon Scalable Processor may yield up to 24% higher real-time image classification inference performance with Intel Advanced Matrix Extensions (Intel AMX).

In summary, the 5th Gen Intel Xeon Scalable Processor gives businesses and end users the potential to access and scale Generative AI, and AI models in general, efficiently in terms of computational and energy costs (and hence carbon footprint), with significant performance increases relative to the prior generation of Intel Xeon Processors.

NB all claims on performance are taken from the following source and results may vary:

5th Gen Intel Xeon Processors #5thGenIntelXeon

The data contained in this article is provided on a non-reliance basis, and no representations, warranties or guarantees, whether express or implied, are provided in relation to the content in this article.

Imtiaz Adam is a Hybrid Strategist and Data Scientist. He is focussed on the latest developments in artificial intelligence and machine learning techniques, with a particular focus on deep learning. Imtiaz holds an MSc in Computer Science with research in AI (Distinction) from the University of London, an MBA (Distinction), a Sloan Fellowship in Strategy from London Business School, and an MSc in Finance with Quantitative Econometric Modelling (Distinction) from Cass Business School. He is the Founder of Deep Learn Strategies Limited, and served as Director & Global Head of a business he founded at Morgan Stanley in Climate Finance & ESG Strategic Advisory. He has strong expertise in enterprise sales & marketing, data science, and corporate & business strategy.
