
Under the right circumstances, data can help organizations build a competitive advantage.

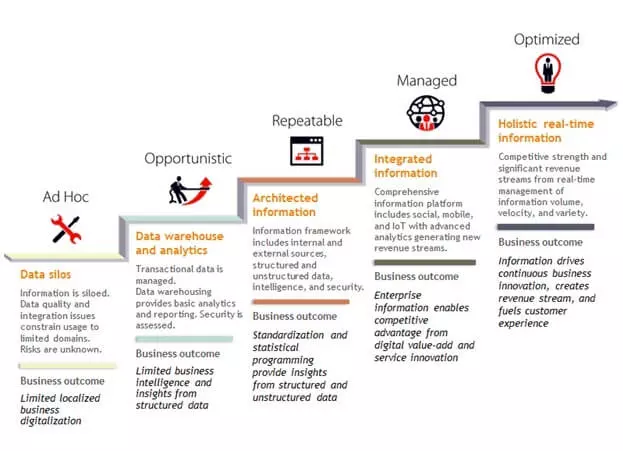

Data enables companies to visualize relationships between what is happening in different locations, departments, and systems. How large is the increase in data volumes? Can data be compared to oil; in other words, is data really “the oil of business”? Unlike oil, which is a finite resource, data is constantly being created and is growing exponentially. Innovative companies are using technologies such as artificial intelligence (AI) to turn data into insights.

Data allows organizations to effectively determine the cause of problems. It also allows organizations to measure the effectiveness of a given strategy.

According to IDC (International Data Corporation), the amount of data generated worldwide each year is expected to reach 175 zettabytes (ZB) by 2025, driven by multi-connectivity and the spread of sensors. This increase can mainly be attributed to the development of the Internet of Things (IoT). To give an idea of scale, according to ifpro.fr, this volume of data, stored across various media and interconnected devices, would fill more than 40,000 billion DVDs. With the growth of the IoT, the average connected person is expected to interact with connected devices about 4,800 times a day, or roughly once every 18 seconds. Hence, data is not the oil of business, for a simple reason: since the end of the 1980s, oil consumption has grown faster than new discoveries. In contrast, the “consumption” of data (i.e. its use by data tools) still lags far behind the terabytes of data being produced, which hold almost unlimited potential today.
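The orders of magnitude above are easy to sanity-check. The sketch below is a back-of-the-envelope conversion, assuming single-layer DVDs of 4.7 GB; the constants and helper names are illustrative and are not taken from IDC or ifpro.fr. It shows that one decimal zettabyte fills roughly 213 billion such DVDs, while 175 ZB fills roughly 37,000 billion (about 44,000 billion if zettabytes are read as binary units), and that 4,800 interactions a day does work out to one every 18 seconds.

```python
# Back-of-the-envelope check of the data-volume figures.
# Assumption: one single-layer DVD holds 4.7 GB (decimal gigabytes).
DVD_BYTES = 4.7e9
ZETTABYTE = 10**21   # 1 ZB in SI (decimal) units
ZEBIBYTE = 2**70     # 1 ZiB, the binary interpretation of "zettabyte"
SECONDS_PER_DAY = 24 * 60 * 60


def dvd_equivalent(n_bytes: float) -> float:
    """Number of 4.7 GB DVDs needed to hold n_bytes."""
    return n_bytes / DVD_BYTES


def seconds_between_interactions(interactions_per_day: int) -> float:
    """Average gap, in seconds, if interactions are spread evenly over a day."""
    return SECONDS_PER_DAY / interactions_per_day


if __name__ == "__main__":
    print(f"1 ZB          ~ {dvd_equivalent(ZETTABYTE) / 1e9:,.0f} billion DVDs")
    print(f"175 ZB (SI)   ~ {dvd_equivalent(175 * ZETTABYTE) / 1e9:,.0f} billion DVDs")
    print(f"175 ZiB (bin) ~ {dvd_equivalent(175 * ZEBIBYTE) / 1e9:,.0f} billion DVDs")
    print(f"4,800 interactions/day -> one every {seconds_between_interactions(4800):.0f} s")
```

The DVD comparison is very sensitive to the assumed disc capacity and to whether "zettabyte" is read in decimal or binary units, so such figures are best treated as illustrations of scale rather than precise measurements.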

So how can a company turn these terabytes of data into a competitive advantage?

The first principle is that data must serve a target use case: a functionality that meets a real need in the market or within organizations. In other words, a company must know the value of its data. Mobileye, a provider of advanced driver-assistance systems, has developed anti-collision and lane-departure warning systems for cars. Its customers are car manufacturers who test the system themselves. It is precisely these ex-post tests that generate valuable data for improving the system upstream, leading to reliability levels of 99.9%.

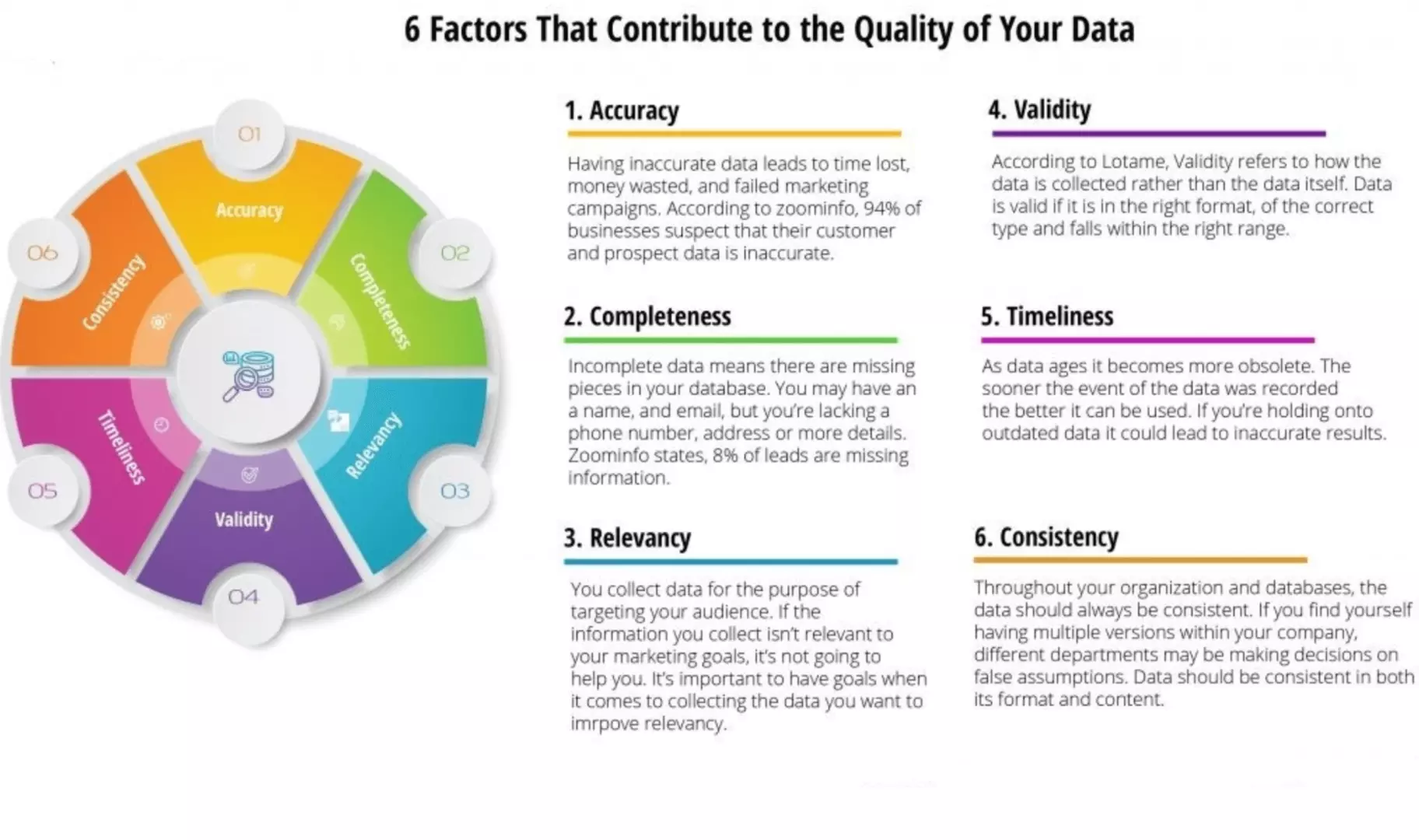

The second principle is that it is not always necessary to accumulate huge amounts of data: quality matters more than quantity. Beyond a certain point, the marginal value of additional data decreases. The optimal quantity of data therefore needs to be reassessed continually and set at a level where additional data still adds value. Zynga's early success with “Farmville” stemmed precisely from the fact that the data available to it did not become obsolete from one week to the next. Unfortunately, Zynga's success story had a bad ending. After launching a few other successful games, such as “Farmville 2” and “Cityville”, the company lost more than half of its user base. It was then overtaken by Supercell (Clash of Clans) and Epic Games (Fortnite). After peaking at $10.4 billion in 2012, Zynga's valuation more than halved over the following six years. The data used as input to Zynga's games is a good example of data that eventually became obsolete.
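One way to formalize this principle, offered as an illustrative assumption rather than as the author's own model: let $V(n)$ be the value a company derives from $n$ records of data and $c$ the (assumed constant) cost of acquiring and maintaining one more record. Diminishing returns mean $V'(n) > 0$ and $V''(n) < 0$. Collecting more data pays off only while the marginal value is at least the marginal cost, so the optimal quantity $n^*$ satisfies:

$$
V'(n^*) = c, \qquad V''(n) < 0 .
$$

For example, with the hypothetical value function $V(n) = a \ln(1 + n)$, where $a > c$:

$$
V'(n) = \frac{a}{1 + n} = c \quad\Longrightarrow\quad n^* = \frac{a}{c} - 1 .
$$

In this toy model, data that loses relevance quickly corresponds to a falling $a$, which lowers $n^*$; this is one reason the optimal quantity has to be re-evaluated as conditions change.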

The third principle is that data must be exclusive to the company and impossible to replicate. Adaviv, a Boston-area startup, has developed a crop management system that monitors individual plants continuously. It uses AI-based visual analysis and biometrics to track plant indicators that are invisible to the naked eye. Adaviv's system can detect early warning signs of diseases or nutrient deficiencies that would ultimately reduce yields. The input data it generates cannot be imitated and is therefore exclusive to the company. As the number of Adaviv users grew, the tool could gather more data variations and produce more reliable information for its customers. The starting point is data. Another example is the streaming radio service Pandora. Pandora's “Music Genome Project” (MGP) is a patented system that classifies songs on the basis of 450 attributes and analyzes millions of tracks to define cost-based radio strategies. Pandora's tailor-made music selections are hard to replicate because the data behind them is exclusive to the company. Such data enables the development of intelligent systems like the MGP. And the more the data itself evolves, the greater the ability to generate knowledge with the appropriate tools.

The fourth principle is improving the service for users by exploiting both the quality of the data and the speed at which the resulting knowledge can be integrated into products. In practice, both amount to the same thing. In the US, Spotify and Apple Music have supplanted Pandora, but Spotify's success extends to the entire world. It results from the gradual exploitation of a rich database, which allowed Spotify to offer playlists with an attractive network effect, letting users search for and listen to other users' radio stations. Here, rapid learning cycles make it difficult for competitors to catch up, especially when products receive multiple upgrades over the course of a typical customer contract.

As these examples show, a first round of data improvement takes place before the data is used by an innovative tool. Thanks to machine learning and deep learning, AI can enable a second round of improvement. By interpreting ex-post results, AI confers clear advantages in the design of future projects, in the continuous improvement of the ex-ante data exploitation process, and in the improvement of the initial products or services. Beyond these managerial points, we should not forget the human aspects, which apply to both technology and data. One of them is the art of persuasion: faced with the rise of data analysis, companies have hired the best data scientists they could find, yet most of the time they have still not been able to get the full value out of their data science initiatives. This is because, to create a valuable project, a team must ask the right questions, process the relevant data, obtain results, and finally understand and communicate what these results mean for the business. It is very rare for one person to master both data analysis and its interpretation. Most data scientists are trained in the former, but not the latter.

Pascal de Lima (PhD in economics, Sciences Po) is Chief Economist of CGI Business Consulting and a guest speaker at several schools and conferences. He is a French economist and knowledge manager (KM). He applies his knowledge of economics to the field of KM to solve management consulting challenges. He lectured in economics at Sciences Po in Paris and has also taught economics in several of France's top universities (HEC, ESSEC, Sup de Co, engineering schools and PREPA...) for a total of 18 years. As an essayist, he has written more than 200 op-eds for major media outlets in France, 10 books and 5 referenced academic articles. He regularly gives lectures at international economics conferences. He specializes in economic foresight. His work is centered on monitoring and prospective thinking, with a primary focus on assessing the economic, social and environmental impact of innovations. After 14 years in management consulting for the financial and banking sector (Ernst & Young, Cap Gemini, Chief Economist & KM at Arthur D. Little and Altran), he founded Economic Cell in 2013, an idea laboratory and consultancy whose purpose is to study market evolutions in light of the economic transformations brought about by innovation. In 2017, he joined Harwell Management as Chief Economist, and in 2020 he became a teacher at Aivancity. He holds a PhD in Economics from the Paris Institute of Political Studies (Sciences Po), a Master's in Industrial Economics from Panthéon-Sorbonne Paris 1 and a Master's in Financial Engineering from a top French business school.
