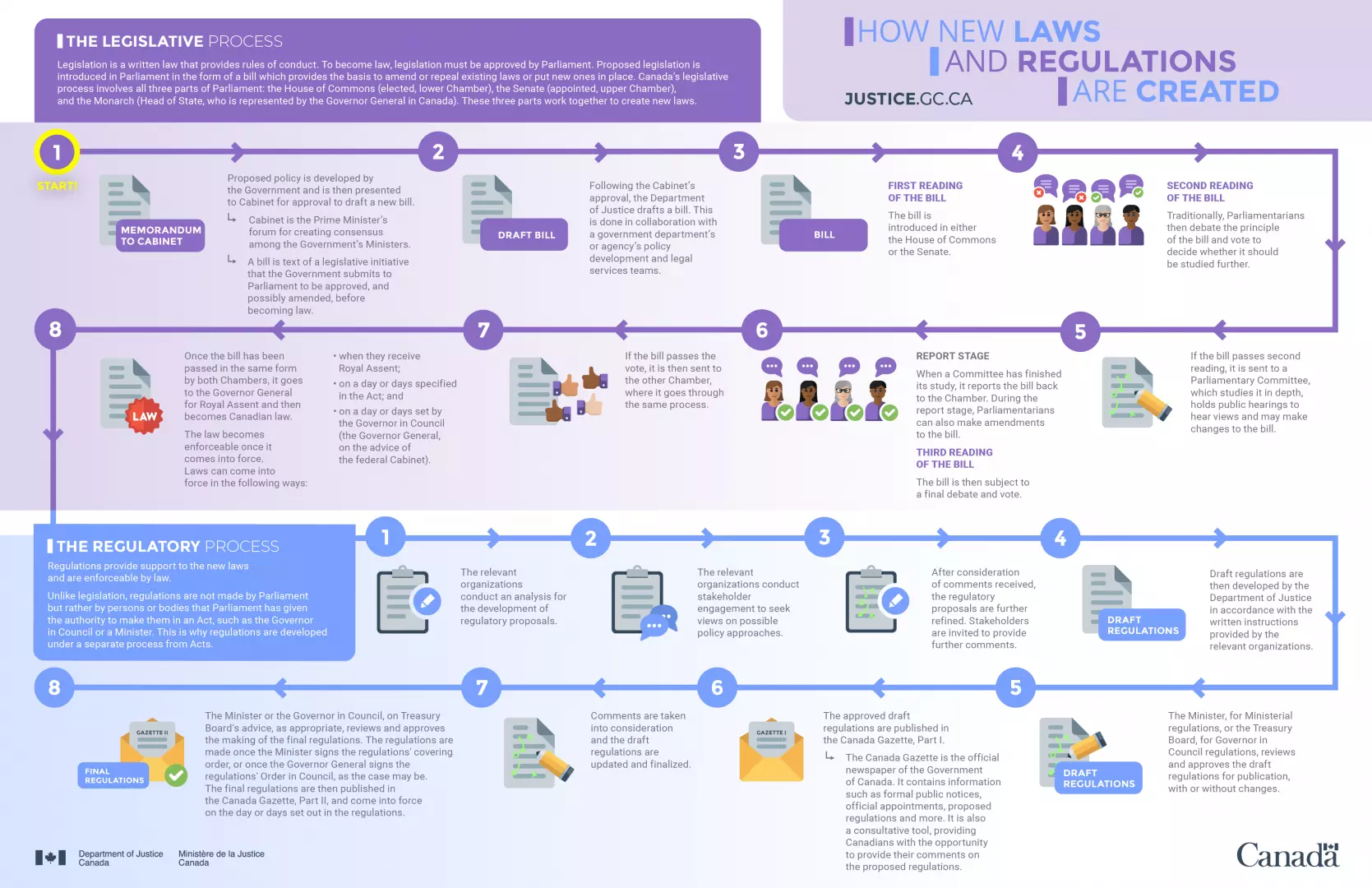

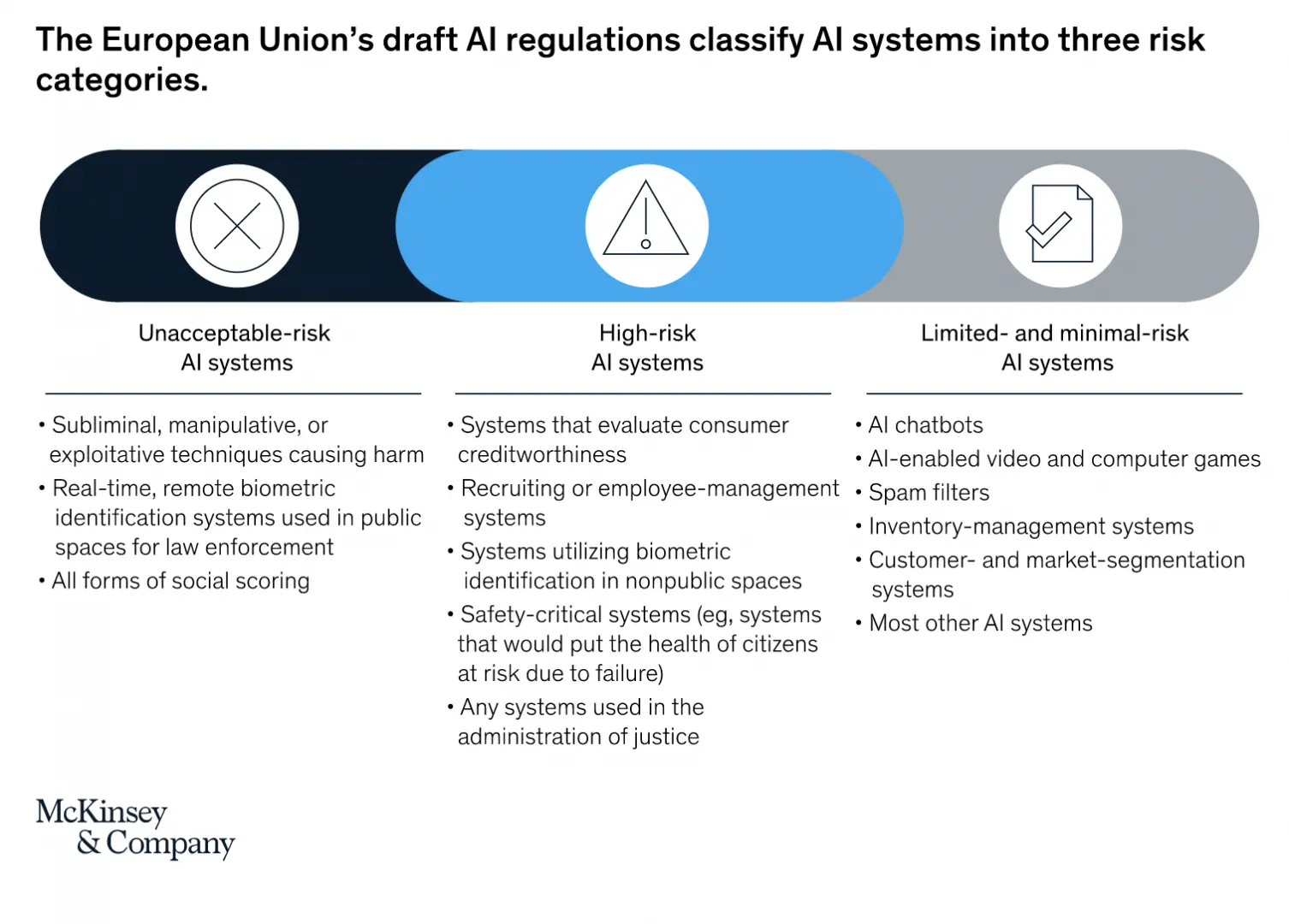

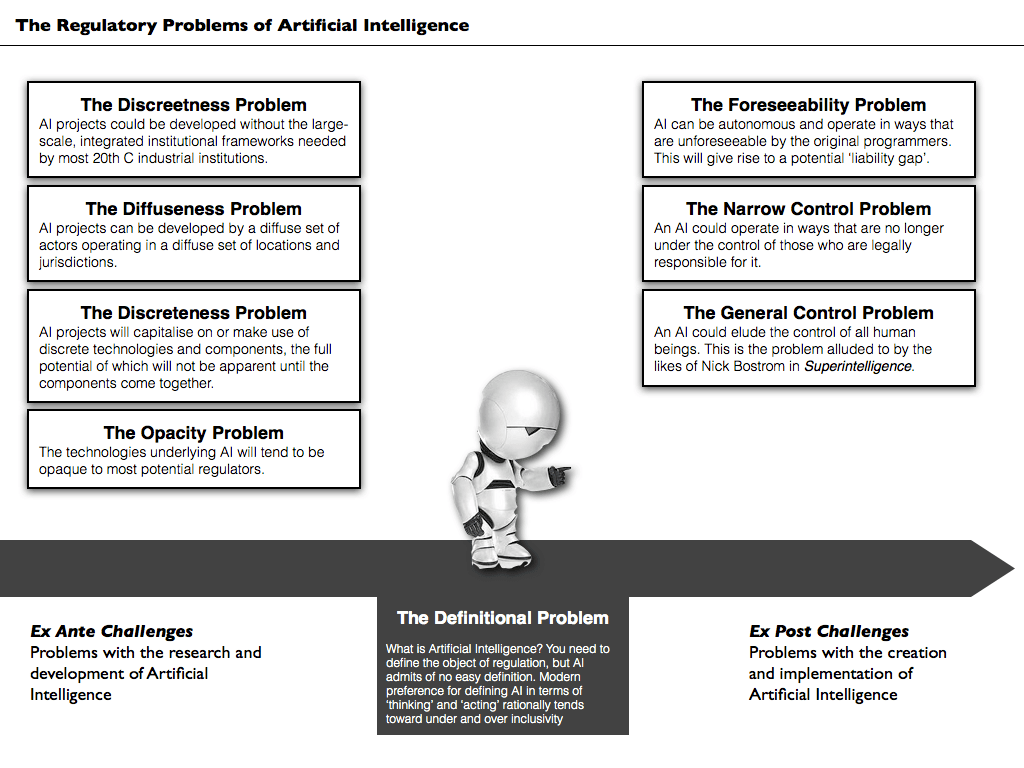

Current artificial intelligence (AI) systems are regulated by existing laws on data protection, consumer protection and market competition, rather than by AI-specific rules.

It is critical for governments, leaders, and decision makers to develop a firm understanding of the fundamental differences between artificial intelligence, machine learning, and deep learning.

Artificial intelligence (AI) refers to computing systems designed to perform tasks usually reserved for human intelligence, using logic, if-then rules, and decision trees. AI recognizes patterns in vast amounts of quality data, providing insights, predicting outcomes, and making complex decisions.
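To make the "if-then rules" idea concrete, here is a minimal sketch of a hand-written rule system in Python. The function name, the inputs and every threshold are hypothetical and chosen only for illustration; they are not taken from any real product.

```python
def triage_loan_application(income: float, debt: float, missed_payments: int) -> str:
    """Apply fixed, hand-written if-then rules (all thresholds are hypothetical)."""
    # Rule 1: too many missed payments leads to rejection.
    if missed_payments > 2:
        return "reject"
    # Rule 2: debt above half of income is sent to a human reviewer.
    if debt > 0.5 * income:
        return "manual review"
    # Default: no rule fired, so the application is approved.
    return "approve"


decision = triage_loan_application(income=40_000, debt=10_000, missed_payments=0)
print(decision)  # prints "approve"
```

Every decision here can be traced back to a rule a person wrote, which is the main difference from systems that learn their behaviour from data.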

Machine learning (ML) is a subset of AI that uses advanced statistical techniques to enable computing systems to improve at tasks with experience. Voice assistants like Amazon’s Alexa and Apple’s Siri improve every year thanks to constant use by consumers, coupled with the machine learning that takes place in the background.
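As a rough illustration of "improving with experience", the sketch below learns a cut-off value from labelled examples instead of having it written by hand. The data is made up, the "sensor reading" framing is an assumption for the example, and the midpoint-between-class-means rule is a deliberately simple stand-in for real statistical techniques.

```python
from statistics import mean


def fit_threshold(examples):
    """Learn a cut-off from (value, label) pairs: the midpoint between the class means."""
    positives = [value for value, label in examples if label]
    negatives = [value for value, label in examples if not label]
    return (mean(positives) + mean(negatives)) / 2


def accuracy(threshold, examples):
    """Share of examples where 'value > threshold' matches the label."""
    correct = sum((value > threshold) == label for value, label in examples)
    return correct / len(examples)


# Made-up toy data: (sensor reading, is_faulty)
held_out = [(2.0, False), (4.0, False), (5.5, True), (8.0, True)]
little_experience = [(1.0, False), (6.0, True)]
more_experience = little_experience + [(4.5, False), (3.0, False), (7.0, True), (8.0, True)]

acc_small = accuracy(fit_threshold(little_experience), held_out)
acc_large = accuracy(fit_threshold(more_experience), held_out)
print(acc_small, acc_large)  # prints "0.75 1.0"
```

On this toy data, the same code makes fewer mistakes once it has seen more examples, without anyone editing the rule.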

Deep learning (DL) is a subset of machine learning that uses advanced algorithms to enable an AI system to train itself to perform tasks by exposing multilayered neural networks to vast amounts of data. It then uses what it learns to recognize new patterns contained in the data. Learning can be supervised, unsupervised, and/or reinforcement-based; Google’s DeepMind, for example, used reinforcement learning to train AlphaGo to beat humans at the game of Go.

Artificial intelligence (AI) is stepping up in more concrete ways in blockchain, education, the internet of things, quantum computing, the arms race and vaccine development.

During the Covid-19 pandemic, AI became increasingly pivotal to breakthroughs in everything from drug discovery to mission-critical infrastructure like electricity grids.

AI-first approaches have taken biology by storm with faster simulations of humans’ cellular machinery (proteins and RNA). This has the potential to transform drug discovery and healthcare.

Transformers have emerged as a general-purpose architecture for machine learning, beating the state of the art in many domains including natural language processing (NLP), computer vision, and even protein structure prediction.

AI is now an actual arms race rather than a figurative one.

Organizations must learn from the mistakes made with the internet, and prepare for a safer AI.

Artificial intelligence is the field of developing computing systems capable of performing tasks that humans are very good at, such as recognising objects, recognising and making sense of speech, and making decisions in a constrained environment.

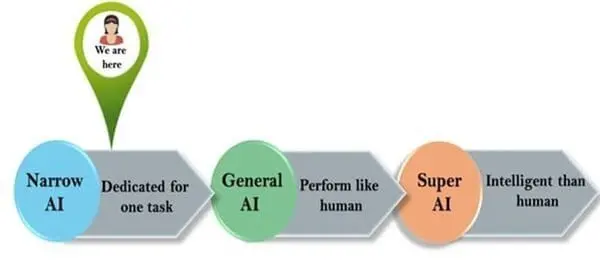

There are 3 stages of artificial intelligence:

1. Artificial Narrow Intelligence (ANI), which has a limited range of capabilities. Examples include AlphaGo, IBM's Watson, virtual assistants like Siri, disease mapping and prediction tools, self-driving cars, and machine learning models such as recommendation systems and deep learning translation.

2. Artificial General Intelligence (AGI), which has attributes that are on par with human capabilities. This level hasn't been achieved yet.

3. Artificial Super Intelligence (ASI), which has skills that surpass humans and can make them obsolete. This level hasn't been achieved yet.

We need to regulate artificial intelligence for two reasons.

First, governments and companies use AI to make decisions that can have a significant impact on our lives. For example, algorithms that calculate school performance can have a devastating effect on the people they score.

Second, whenever someone takes a decision that affects us, they have to be accountable to us. Human rights law sets out minimum standards of treatment that everyone can expect. It gives everyone the right to a remedy where those standards are not met and they suffer harm.

As of today, there is neither an international artificial intelligence law nor specific legislation designed to regulate its use. However, progress has been made: bills have been passed to regulate certain specific AI systems and frameworks.

Artificial intelligence has changed rapidly over the last few decades. It has made our lives much easier and saved us valuable time for other tasks.

AI must be regulated to protect the positive progress of the technology. Legislators across the globe have to this day failed to design laws that specifically regulate the use of artificial intelligence. This allows profit-oriented companies to develop systems that may cause harm to individuals and to the broader society.

National and local governments have been adopting strategies and working on new laws for a number of years, but no comprehensive AI legislation has been passed yet.

China, for example, developed a strategy in 2017 to become the world’s leader in AI by 2030. In the US, the White House issued ten principles for the regulation of AI. They include the promotion of “reliable, robust and trustworthy AI applications”, public participation and scientific integrity. International bodies that give advice to governments, such as the OECD or the World Economic Forum, have developed ethical guidelines.

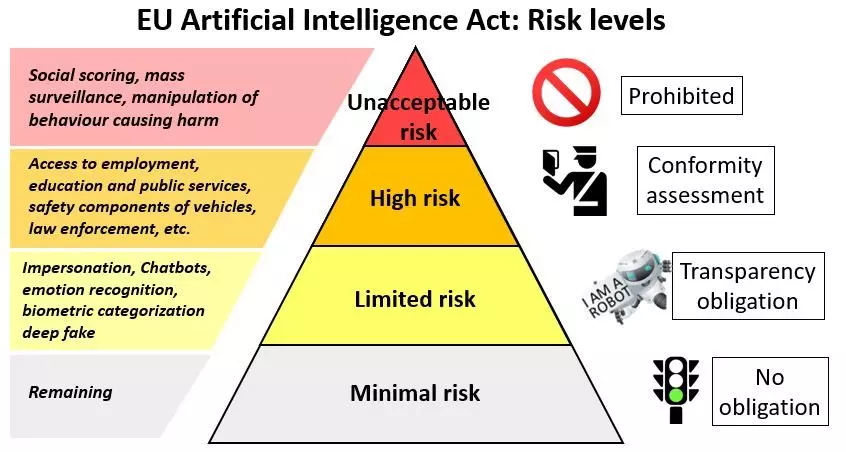

The Council of Europe created a committee dedicated to helping develop a legal framework on AI. The most ambitious proposal yet comes from the EU. On 21 April 2021, the European Commission put forward a proposal for a new AI Act.

Police forces across the EU deploy facial recognition technologies and predictive policing systems. These systems are inevitably biased and thus perpetuate discrimination and inequality.

Crime prediction and recidivism risk assessment are a second AI application fraught with legal problems. A ProPublica investigation into an algorithm-based criminal risk assessment tool found that the formula was more likely to flag black defendants as future criminals, labelling them at twice the rate of white defendants, while white defendants were mislabelled as low-risk more often than black defendants. We need to think about the way we are mass-producing decisions and processing people, particularly low-income and low-status individuals, through automation, and about the consequences for society.
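One way to check for the kind of disparity described above is to compare false positive rates across groups, that is, how often people who did not reoffend were nonetheless flagged as high risk. The sketch below is an assumption about how such an audit could look, not the method ProPublica used, and the records are invented toy data rather than real case data.

```python
from collections import defaultdict


def false_positive_rates(records):
    """records: iterable of (group, flagged_high_risk, reoffended) tuples.

    Returns, per group, the share of people who did NOT reoffend
    but were still flagged as high risk.
    """
    flagged = defaultdict(int)
    total = defaultdict(int)
    for group, flagged_high_risk, reoffended in records:
        if not reoffended:  # only non-reoffenders can be false positives
            total[group] += 1
            if flagged_high_risk:
                flagged[group] += 1
    return {group: flagged[group] / total[group] for group in total}


# Invented toy records, NOT real data: (group, flagged_high_risk, reoffended)
toy_records = [
    ("A", True, False), ("A", True, False), ("A", False, False),
    ("A", False, False), ("A", True, True),
    ("B", True, False), ("B", False, False), ("B", False, False),
    ("B", False, False), ("B", False, True),
]

rates = false_positive_rates(toy_records)
print(rates)  # prints "{'A': 0.5, 'B': 0.25}"
```

In this made-up example, group A is wrongly flagged at twice the rate of group B, which is the pattern an auditor would want to surface and investigate.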

An effective, rights-protecting AI regulation must, at a minimum, contain the following safeguards. First, artificial intelligence regulation must prohibit use cases that violate fundamental rights, such as biometric mass surveillance or predictive policing systems. The prohibition should not contain exceptions that allow corporations or public authorities to use them “under certain conditions”.

Second, there must be clear rules setting out exactly what organizations have to make public about their products and services. Companies must provide a detailed description of the AI system itself. This includes information on the data it uses, the development process, the system’s purpose and where and by whom it is used. It is also key that individuals exposed to AI are informed about it, for example in the case of hiring algorithms. Systems that can have a significant impact on people’s lives should face extra scrutiny and feature in a publicly accessible database. This would make it easier for researchers and journalists to make sure companies and governments are protecting our freedoms properly.
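As a sketch of what an entry in such a public database might record, the Python snippet below models the disclosures listed above as a simple data structure. The class name, field names and example values are all hypothetical; no existing regulation or registry prescribes this format.

```python
from dataclasses import dataclass, fields


@dataclass
class AISystemDisclosure:
    """Hypothetical registry entry covering the disclosures named in the text."""
    system_name: str
    data_used: str = ""            # what data the system uses
    development_process: str = ""  # how the system was built
    purpose: str = ""              # what the system is for
    deployed_where: str = ""       # where it is used
    operated_by: str = ""          # by whom it is used
    high_impact: bool = False      # significant impact on people's lives?


def missing_disclosures(entry: AISystemDisclosure) -> list[str]:
    """Return the names of text fields that were left empty."""
    return [f.name for f in fields(entry) if getattr(entry, f.name) == ""]


# Hypothetical example entry for a hiring algorithm
entry = AISystemDisclosure(
    system_name="cv-screening-tool",
    purpose="rank job applicants",
    operated_by="ExampleCorp HR",
    high_impact=True,
)
gaps = missing_disclosures(entry)
print(gaps)  # prints "['data_used', 'development_process', 'deployed_where']"
```

A regulator or journalist could run a check like this across a whole registry to spot high-impact systems with incomplete disclosures.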

Third, individuals and organisations protecting consumers need to be able to hold governments and corporations responsible when there are problems. Existing rules on accountability must be adapted to recognise that decisions are made by an algorithm and not by the user. This could mean putting the company that developed the algorithm under an obligation to check the data with which algorithms are trained and the decisions algorithms make so they can correct problems.

Fourth, new regulations must make sure that there is a regulator that can hold companies and the authorities accountable and check that they are following the rules properly. This watchdog should be independent and have the resources and powers it needs to do its job.

Finally, AI regulation should also contain safeguards to protect the most vulnerable. It should set up a system that allows people who have been harmed by AI systems to make a complaint and get compensation. Workers should have the right to take action against invasive AI systems used by their employer without fear of retaliation.

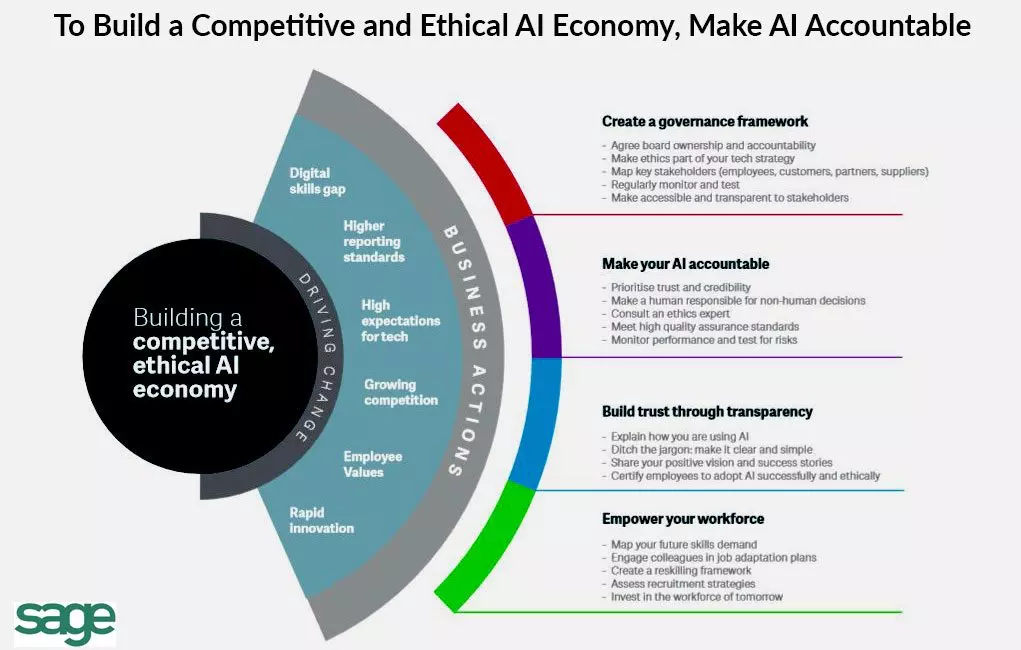

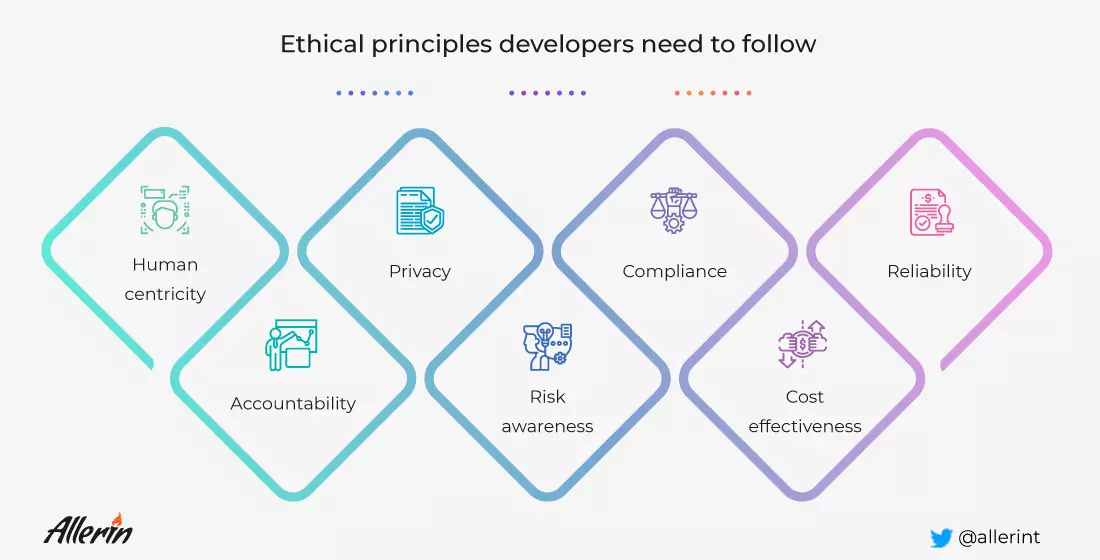

Trustworthy artificial intelligence should respect all applicable laws and regulations, as well as a series of key requirements. Specific assessment lists aim to help verify that each of these requirements is applied:

Human agency and oversight: AI systems should enable equitable societies by supporting human agency and fundamental rights, and not decrease, limit or misguide human autonomy.

Robustness and safety: Trustworthy AI requires algorithms to be secure, reliable and robust enough to deal with errors or inconsistencies during all life cycle phases of AI systems.

Privacy and data governance: Citizens should have full control over their own data, and data concerning them should not be used to harm or discriminate against them.

Transparency: The traceability of AI systems should be ensured.

Diversity, non-discrimination and fairness: AI systems should consider the whole range of human abilities, skills and requirements, and ensure accessibility.

Societal and environmental well-being: AI systems should be used to enhance positive social change and enhance sustainability and ecological responsibility.

Accountability: Mechanisms should be put in place to ensure responsibility and accountability for AI systems and their outcomes.
