
Many advances in artificial intelligence (AI) are fascinating, but some recent use cases are downright creepy.

AI is increasingly being used to make important decisions. Major tech companies are the primary drivers of AI advances, and their algorithms affect billions of people. Unfortunately, these companies face virtually no accountability.

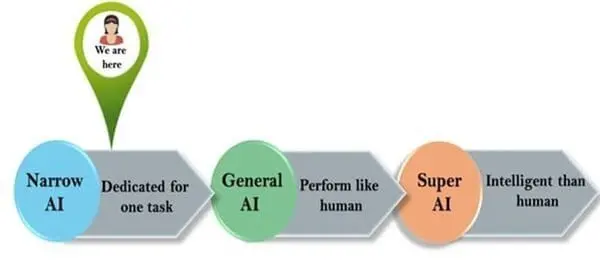

Source: Great Learning

YouTube (owned by Google) is helping to radicalize people toward white supremacy. Google allowed advertisers to target people who searched racist phrases like “black people ruin neighborhoods,” and Facebook allowed advertisers to target groups like “jew haters.” Amazon’s facial recognition technology falsely matched 28 members of Congress with criminal mugshots, yet it is already in use by police departments. The newsfeed, timeline, and recommendation algorithms of all the major platforms tend to reward incendiary content by prioritizing it for users.

While it can be easy to focus on regulations that are misguided or ineffective, we often take for granted safety standards and regulations that have largely worked well.

Here are 7 terrifying artificial intelligence use cases:

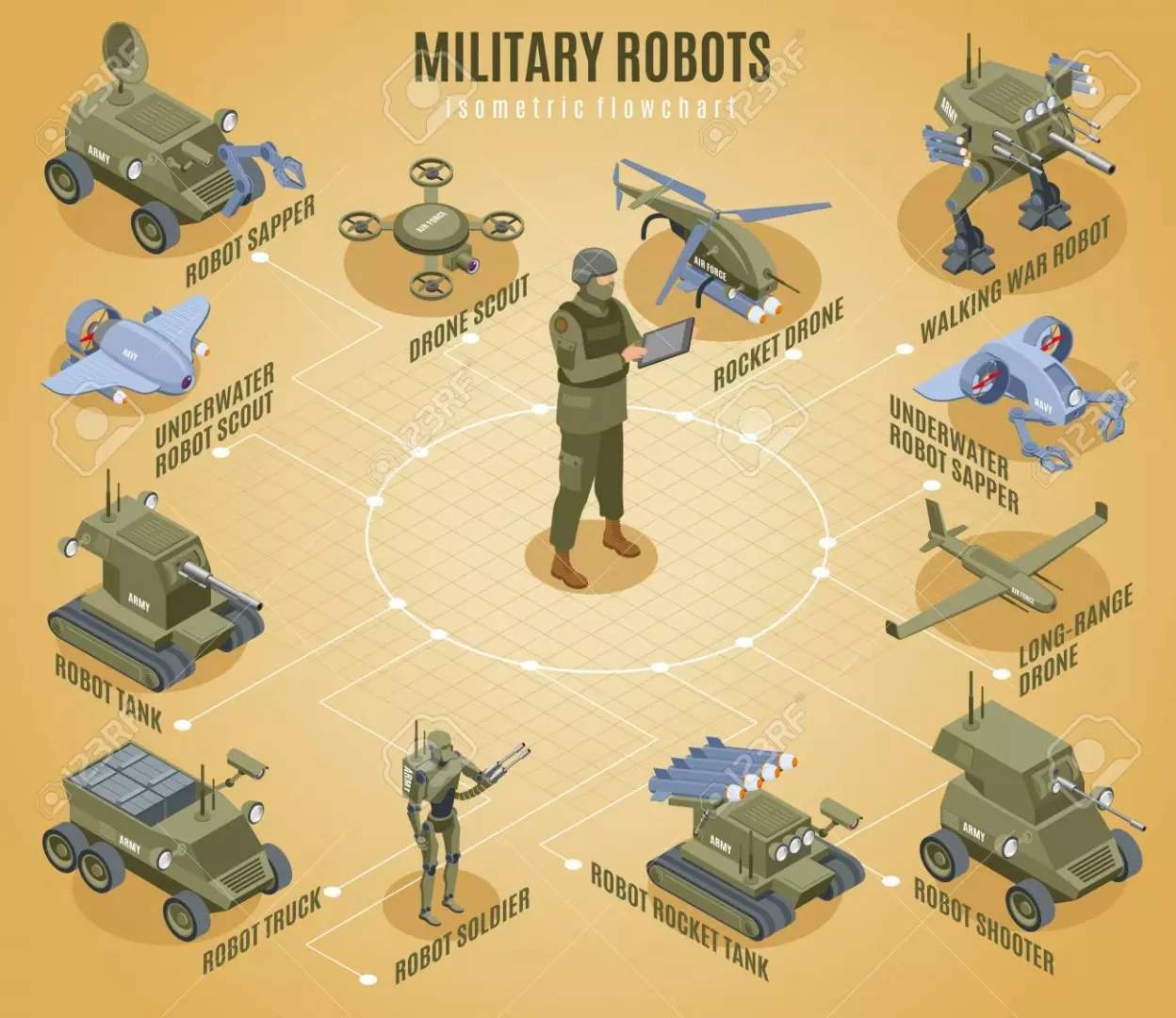

Source: Army.mil

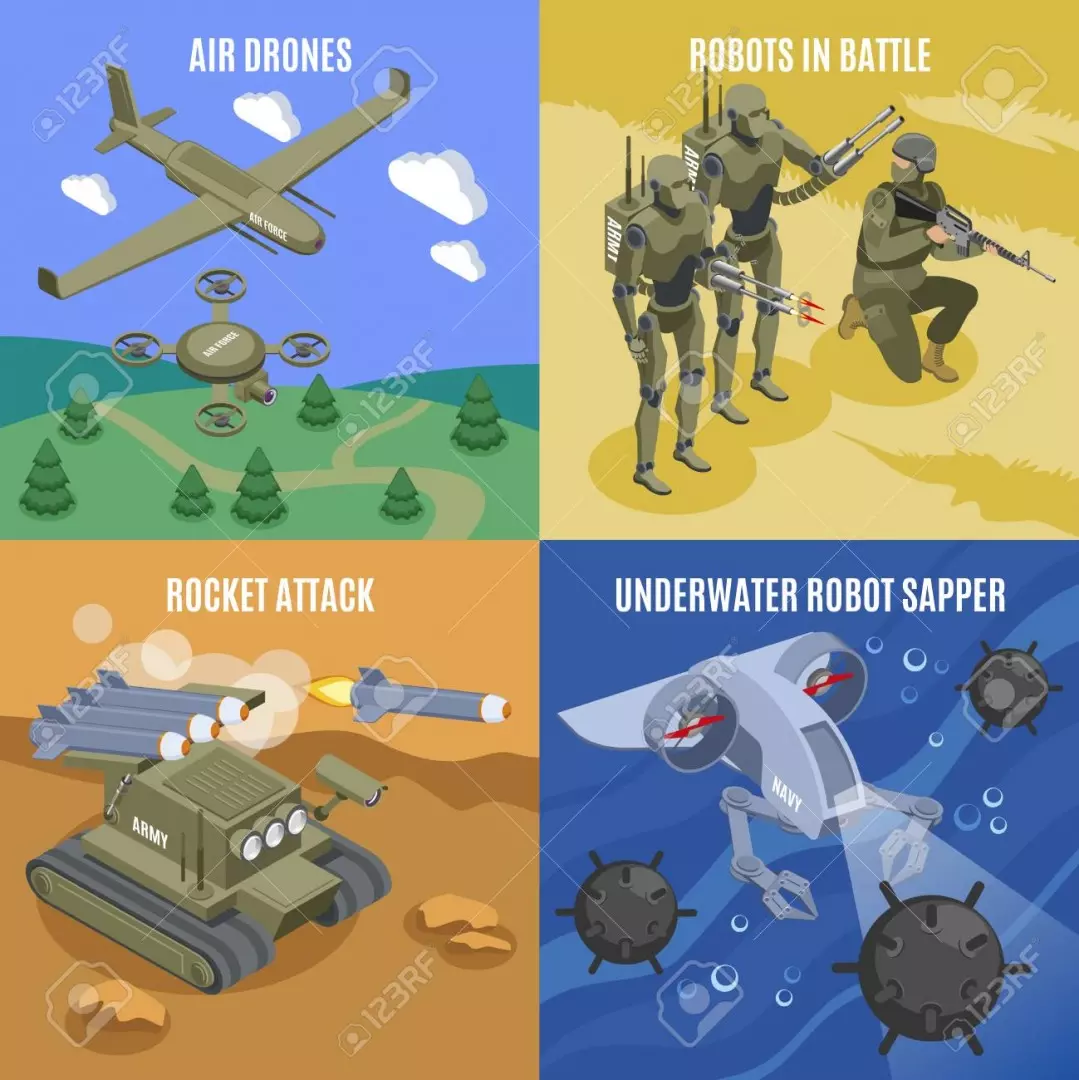

Military robots are autonomous robots designed for military applications ranging from transport to search and rescue to attack. One of the scariest potential uses of AI and robotics is the development of a robot soldier. Although many have campaigned to ban so-called "killer robots," the fact that the technology could soon power such robots is upsetting, to say the least. In an experiment conducted by scientists at the Laboratory of Intelligent Systems in Switzerland, robots were made to compete for a food source in a single area. The robots could communicate by emitting light, and after they found the food source, they began turning their lights off or using them to steer competitors away from it.

Source: 123RF

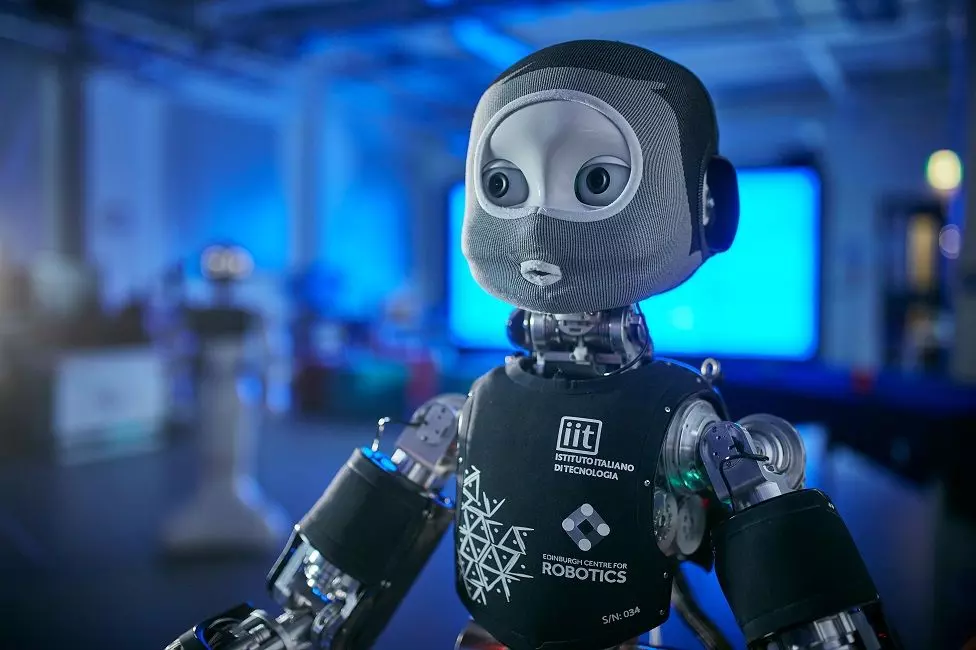

Source: National Police Chiefs' Council

Artificial intelligence could change policing. Police in certain US cities are experimenting with an AI algorithm that predicts which citizens are most likely to commit a crime in the future, and Hitachi announced a similar system back in 2015. Maybe the film *Minority Report* wasn't completely off base in its vision of the future?

Source: BBC

Healthcare is one of the biggest industries that AI could benefit. AI is already used in many fields of medicine, even helping doctors decide on treatment. But what if that AI system misses a critical aspect of your medical history or makes the wrong recommendation? Cases of discriminatory AI in healthcare have been frequently documented. Currently, AI-based suggestions are not considered superior to an experienced physician’s judgment. However, it is very likely that, at some point in the future, AI will set the standard for medical diagnosis, treatment recommendations, and treatment delivery.

Source: Daily Bits

Researchers at the University of Texas at Austin and Yale University taught a neural network called DISCERN a set of stories. To simulate an excess of dopamine and a process called hyperlearning, they made the system forget fewer details than it normally would. As a result, the system displayed schizophrenia-like symptoms and began inserting itself into the stories. It even claimed responsibility for a terrorist bombing in one of them.

Source: Autonomous Motion

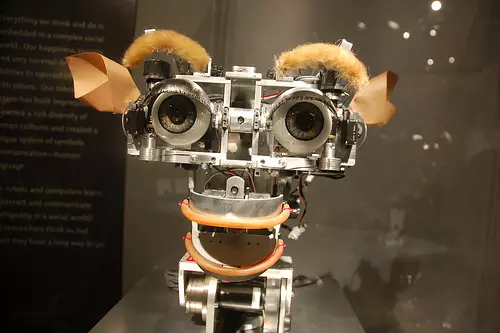

In many cases, robots and AI systems seem inherently trustworthy. Why would they have any reason to lie to or deceive others? Well, what if they were trained to do just that? Researchers at Georgia Tech have used the behavior of squirrels and birds to teach robots how to hide from and deceive one another. The military has reportedly shown interest in the technology.

Source: The Sun

Among the many ethical concerns posed by robots and the AI systems that power them is the idea that humans could love, or at least copulate with, a robot companion. Companies are already trying to make "sex robots" a reality, and opponents are fervently campaigning against them. Even a sophisticated machine will never replace genuine human feelings and emotions. Building a relationship with a robot is weird: the choices we make, the actions we take, and the perceptions we have are all influenced by the emotions we are experiencing at any given moment.

Source: Times Higher Education

Nautilus is a supercomputer that researchers have used to forecast events from news coverage. The self-learning system was fed millions of articles dating back to the 1940s and, working retrospectively, was able to place Osama bin Laden within 200 km of where he was found. Now, scientists are trying to see whether it can predict actual future events, not only ones that have already occurred.

Artificial intelligence is a great technological advance and promises to eventually dominate almost every domain. AI is seeping into many industries, revolutionizing the way they conduct business and manage their workforce.

AI will undoubtedly prove beneficial in the future, but a complete AI takeover is also highly likely if due measures aren’t taken now. Today, the use of AI has spread to almost every field of human activity. AI is like a mischievous child that can’t be left alone and needs constant adult supervision; carelessness in controlling the technology could lead to an AI takeover.
