Ethical artificial intelligence (AI) focuses on values, principles, and techniques that promote moral conduct and regulatory frameworks that benefit humanity as a whole.

It also aims to prevent the malicious use of AI technology to deepen inequalities and divides.

A roadmap to trusting artificial intelligence (AI) is important. Lack of trust is why many companies have not adopted AI into their business frameworks. The fear of the unknown runs deep – just as I felt on Vatnajökull – and the unknown behind technology is oftentimes immense, teeming with frightening possibilities. However, when we are faced with change, our views have the potential to widen, inspiring us to become more compassionate and forward-thinking. This, to me, has marked the advancement of humankind throughout history.

The possibilities for AI improving our lives are endless, especially if we are open to creating an impact. We can trust AI as much as ourselves. We have the privilege to make technology that reflects the best of our humanity. The next century will see even more accelerated advances in tech, some that we have yet to discover and others that are already underway, like greater connectivity and the expansive impact of big data.

To put it simply: AI is the future, and the future won’t wait. That is why creating robust, fair, and ethical AI is a priority for technology leaders. I see the path to ethical AI built upon three basic tenets.

AI trustworthiness and governability are among the most critical issues in ensuring that AI is sustainable and focused on being humane. The latter (humane AI) probably seems more challenging to define. How does a machine achieve humanity? While AI cannot compete with the workings of the human mind and our emotional world (even if it can surpass human intelligence), its ability to draw upon a wealth of information to form connections is unmatched. Its goal is not to compete with human intelligence but simply to augment it. But, as with anything, bias is possible. Inaccurate data and the substandard creation and execution of AI processes all result in undesired outcomes. And with those undesired outcomes comes a matter of ethics.

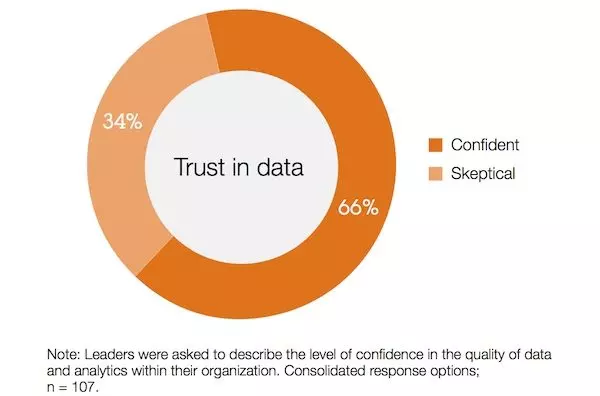

So, how do business leaders gain the confidence to utilize AI for the better?

First, they must be dedicated to data quality. AI cannot reflect the needs and demands of an ever-expanding market (and the people who comprise it) if the data it is built upon is not quality data. One cannot build a house without a foundation; it will simply fall apart. Similarly, AI modules that draw from available data cannot reach accurate conclusions without pure data. Partnering with talent, such as data scientists, during the development and implementation of AI is critical.

Secondly, cybersecurity and privacy must be a priority at every stage of AI’s life. Governance examines the regulations surrounding the fairness of AI adoption throughout every sector. Companies should not only try to comply with such standards but also incorporate what ethical AI looks like into their own practices. The IBM Cloud Pak for Data, for example, is a platform that addresses governance. To quote the Dalai Lama: “A lack of transparency results in distrust and a deep sense of insecurity.” AI shouldn’t feel like walking through the woods at midnight. Compliance and proper governance come by way of greater visibility into automated processes and clear documentation. See more, trust more.

Gartner created a model called the Model Operations, Security and Trustworthiness (MOST) Framework to better manage AI risk. It identifies an organization’s threat vectors, the types of potential damage from those threats, and possible risk management measures.

Lastly, in terms of trust, consider what is important in the trusted relationships you have in your own life. How do you go from knowing someone to trusting them? Oftentimes, they’ve earned your trust by showing up, following through or keeping a promise. The intent of AI is to deliver greater value across the organization. Chart that value along your organization’s AI journey.

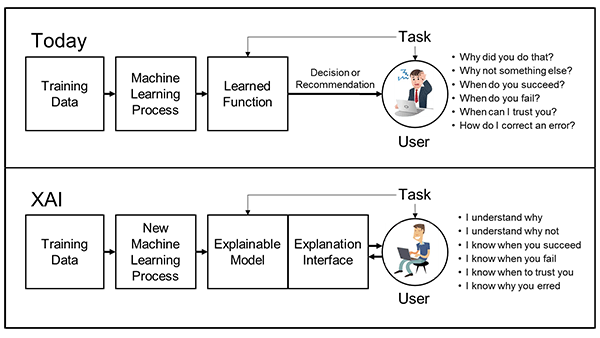

Business leaders may wonder why the need to understand AI is so important, citing reasons like budget or lack of talent for why explainability cannot be pursued for their particular business. Building transparent AI models and frameworks is something to work out with stakeholders, but there are options besides relying on available talent. IBM seeks to bring the trustworthiness of AI back into focus, offering expert advice to technology leaders or CEOs who may need it. There are leaders out there, like IBM, to help you build more explainable, governable, and ethical AI for the benefit of everyone involved.

Explainable AI mitigates risks. It is capable of selecting better data rather than repeating bias. Explainability makes tracing AI reasoning simpler, because programmers can determine why AI made a particular decision. It is not needed for all forms of AI, but it is indispensable for more complex and advanced models. For example, AI that uses deep neural networks may be more nebulous; therefore, explainability is essential.
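To make "determining why AI made a particular decision" concrete, here is a minimal sketch in Python. It assumes a deliberately simple linear scoring model; the feature names, weights, and the loan-style scenario are hypothetical and chosen only for illustration. Real explainability tooling for deep neural networks is considerably more involved, but the idea of attributing a decision to its inputs is the same.

```python
from typing import Dict, List, Tuple

# Hypothetical weights for an illustrative scoring model (not from any real system).
WEIGHTS: Dict[str, float] = {"income": 0.5, "debt": -0.75, "years_employed": 0.25}
BIAS: float = -0.5


def score(features: Dict[str, float]) -> float:
    """Return the model's raw score: bias plus the weighted sum of the features."""
    return BIAS + sum(WEIGHTS[name] * value for name, value in features.items())


def explain(features: Dict[str, float]) -> List[Tuple[str, float]]:
    """Return each feature's contribution to the score, largest magnitude first."""
    contributions = [(name, WEIGHTS[name] * value) for name, value in features.items()]
    return sorted(contributions, key=lambda item: abs(item[1]), reverse=True)


# Hypothetical applicant, with feature values in arbitrary illustrative units.
applicant = {"income": 4.0, "debt": 2.0, "years_employed": 2.0}
decision = "approve" if score(applicant) > 0 else "decline"

print(f"decision: {decision} (score {score(applicant):+.2f})")
for name, contribution in explain(applicant):
    print(f"  {name}: {contribution:+.2f}")
```

Because every contribution is visible, a reviewer can see which input pushed the decision in which direction, and can question a weight that appears to encode bias.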

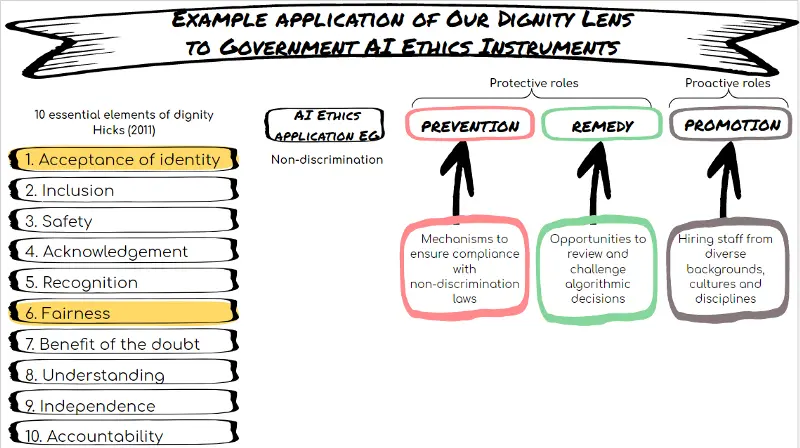

Source: Centre For Public Impact

As spectacular as AI’s impact may be, at its core, it is bits and bytes, zeros and ones. This is one reason the security and privacy of data are core issues in governing AI. Once systems are put into place to manage data throughout the entirety of an organization, however, AI becomes governable and less scary. We then not only begin to trust AI, but we also begin to trust our businesses and mission, and in turn, customers trust us.

AI trustworthiness starts with us as people. It is like a cyclical journey, where we trust each other, the technology we make, and the trust is then returned to us. This circle of trust honors something I hope we never lose sight of: human dignity. The term is defined by Human Rights Careers, a website that encourages careers in human rights and related areas, as “...the belief that all people hold a special value that’s tied solely to their humanity.”

I believe our innate quality to question, explore, adapt and protect will drive ethical AI. Trusting “us” leads to trusting the technology we use to better our lives, improve our businesses and evolve as a society.

Understanding the predictions of AI, but more importantly, the “why” behind such predictions, helps us navigate advancing technology in the world. And it is from this understanding that AI is then governable, sustainable, innovative, humane. Growth thrives at the intersection of tech and humanity.

Learning why something happened and where there is an area to improve expands our capability as people. AI must also be teachable, able to teach itself as well as us. When AI can improve upon its processes, recognize and “learn” from patterns at scale, it complements our skills and abilities as people. That should always be the goal: for technology not to detract from what makes us unique, interesting or empathetic, but to enhance and add greater value to what is already there. Then, and only then, will we reach higher ground.

From time to time, IBM collaborates with industry thought leaders to share their opinions and insights on current technology trends. The opinions in this article are my own, and do not necessarily reflect the views of IBM & BBN Times.

Helen Yu is a Global Top 20 thought leader in 10 categories, including digital transformation, artificial intelligence, cloud computing, cybersecurity, internet of things and marketing. She is a Board Director, Fortune 500 Advisor, WSJ Best Selling & Award Winning Author, Keynote Speaker, Top 50 Women in Tech and IBM Top 10 Global Thought Leader in Digital Transformation. She is also the Founder & CEO of Tigon Advisory, a CXO-as-a-Service growth accelerator, which multiplies growth opportunities from startups to large enterprises. Helen collaborated with prestigious organizations including Intel, VMware, Salesforce, Cisco, Qualcomm, AT&T, IBM, Microsoft and Vodafone. She is also the author of Ascend Your Start-Up.
