Artificial intelligence is at the top of many lists of the most important skills in today's job market.

In the last decade or so we have seen a dramatic transition from the “AI winter” (where AI had not lived up to its hype) to an “AI spring” (where machines can now outperform humans in a wide range of tasks).

Having spent the last 25 years as an AI researcher and practitioner, I'm often asked about the implications of this technology on the workforce.

I'm quite often disheartened by the amount of disinformation there is on the internet on this topic, so I've decided to share some of my own thoughts.

The difference between what I am about to write and what you may have read elsewhere is due to an inherent bias. Rather than being a pure “AI” practitioner, I have a PhD and background in Cognitive Science - the scientific study of how the mind works, spanning such areas as psychology, neuroscience, philosophy, and artificial intelligence. My area of research has been to look explicitly at how the human mind works, and to reverse engineer these processes in the development of artificial intelligence platforms. Hence, I probably have a better understanding than most of the differences and similarities between human and machine intelligence, and of how these may play out in the workforce (i.e. which jobs will and will not be replaceable in the future).

So let's begin.

A good place to start this discussion is with the work of Katja Grace and colleagues at the Future of Humanity Institute at the University of Oxford. A few years ago they surveyed the world's leading AI researchers about when they believed machines would outperform humans on a wide range of tasks. These results are below:

Evidently there were different predictions made as to when different types of work will be able to be performed by machines. But in general, there is consensus that there will be major shifts in the workforce in the next 20 years or so.

In the paper, they define “high-level machine intelligence” as being achieved when unaided machines can accomplish every task better and more cheaply than human workers. Aggregating the data, on average experts believe there is a 50% chance that this will be achieved within 45 years. That is, the leading experts in AI believe that there is a 50% chance that humanity will be fully redundant in 45 years.

This prediction is unimaginable to most of us. But is it realistic? In the next sections I will answer this question looking at the different “types” of work. But firstly, I will explain a little about recent AI advancements.

Up until recently we were very much in an AI “winter” (a term coined by relating it to a nuclear winter), where there were distinct phases of hype, followed by disappointment and criticism. The disillusionment was reflected in pessimism by the media, and severe cutbacks in funding, resulting in reduced interest in serious research.

This lull has changed in the last decade or so, with the success of “deep learning” - an AI paradigm that was inspired by how the brain processes information (in short, artificial neural networks that process information in parallel, as opposed to the typical serial processing we see in most computer CPUs).

Deep learning and neural networks have been around for some time. However, it is only recently that our computers have been powerful enough to run these algorithms on real-world problems, in real-time. For example, visual object recognition systems of today (e.g., Facebook’s face recognition system) use what are called “convolutional neural networks” that mimic how the human visual cortex works. Papers describing this approach started appearing in the early 1980s, such as Fukushima’s work on the Neocognitron. However, it was not until 2011 that our computers were able to run these algorithms at an appropriate speed to make them useful in practice.

What happened only around 10 years ago was the discovery that neural networks could run on computer graphics cards (GPUs - graphics processing units), as these cards were specifically designed to process large amounts of information in parallel - exactly what is needed for artificial neural networks. Most AI researchers these days still use high-performance graphics cards, and the capabilities of these cards have grown exponentially over time. Graphics cards today are roughly 16x more powerful than they were 10 years ago, and 4x what they were 5 years ago; that is, they double in computational power about every 2.5 years. And with growing interest in the area, we are sure to see ongoing rapid advancements in this technology.
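The arithmetic behind those figures can be checked with a short sketch. It assumes nothing beyond the numbers quoted above (16x over 10 years, 4x over 5 years); the function names are my own, chosen purely for illustration.

```python
import math


def growth_factor(years, doubling_period_years=2.5):
    """How many times more powerful hardware becomes after `years`,
    assuming power doubles every `doubling_period_years`."""
    return 2 ** (years / doubling_period_years)


def implied_doubling_period(years, factor):
    """Doubling period implied by growing `factor`-fold over `years`."""
    return years / math.log2(factor)


print(growth_factor(10))                # 16.0
print(growth_factor(5))                 # 4.0
print(implied_doubling_period(10, 16))  # 2.5
```

Both quoted figures are consistent with the same 2.5-year doubling period, which is what makes the growth exponential rather than linear.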

Beyond the fact that computers can now run these large scale networks in real-time, we also have a wealth of large data sources to train them on (n.b., neural networks learn from examples), and available programming platforms such as TensorFlow developed by Google that are openly available to anyone with an interest in machine learning.

As a result of the availability and success of deep learning approaches, AI has officially moved from its supposed winter, to a new season - spring.

What does all this mean for the workforce? Let’s continue...

Perhaps one of the “low hanging fruits” of robotics and AI is in automation… replacing repetitive manual labour with machines that can perform the same kind of task cheaply and more efficiently.

An example of this is in Alibaba’s smart warehouse, where robots perform 70% of the work:

I think the important thing to note when we think of AI replacing human workers is that machines do not have to do exactly the same work in order to make humans redundant.

Consider how Alibaba and Amazon have disrupted the retail sector, with an increasing number of shoppers heading for their screens to make their purchases rather than entering brick-and-mortar retail stores. The outcome is the same (i.e. a consumer making a purchase and receiving a product), but the process itself can be restructured in a way that uses automation to make the process cheaper and more efficient.

For example, Amazon (Prime Air) is trialling a drone delivery system to “safely deliver packages to customers in 30 minutes or less”, disrupting the standard way humans would make similar deliveries:

We are seeing much progress in the ability of machines to perform manual tasks in a wide range of areas, both in and outside of the factory. Take for example the task of laying bricks. Fastbrick has recently signed a contract in Saudi Arabia to build 55,000 homes by 2022, using automation:

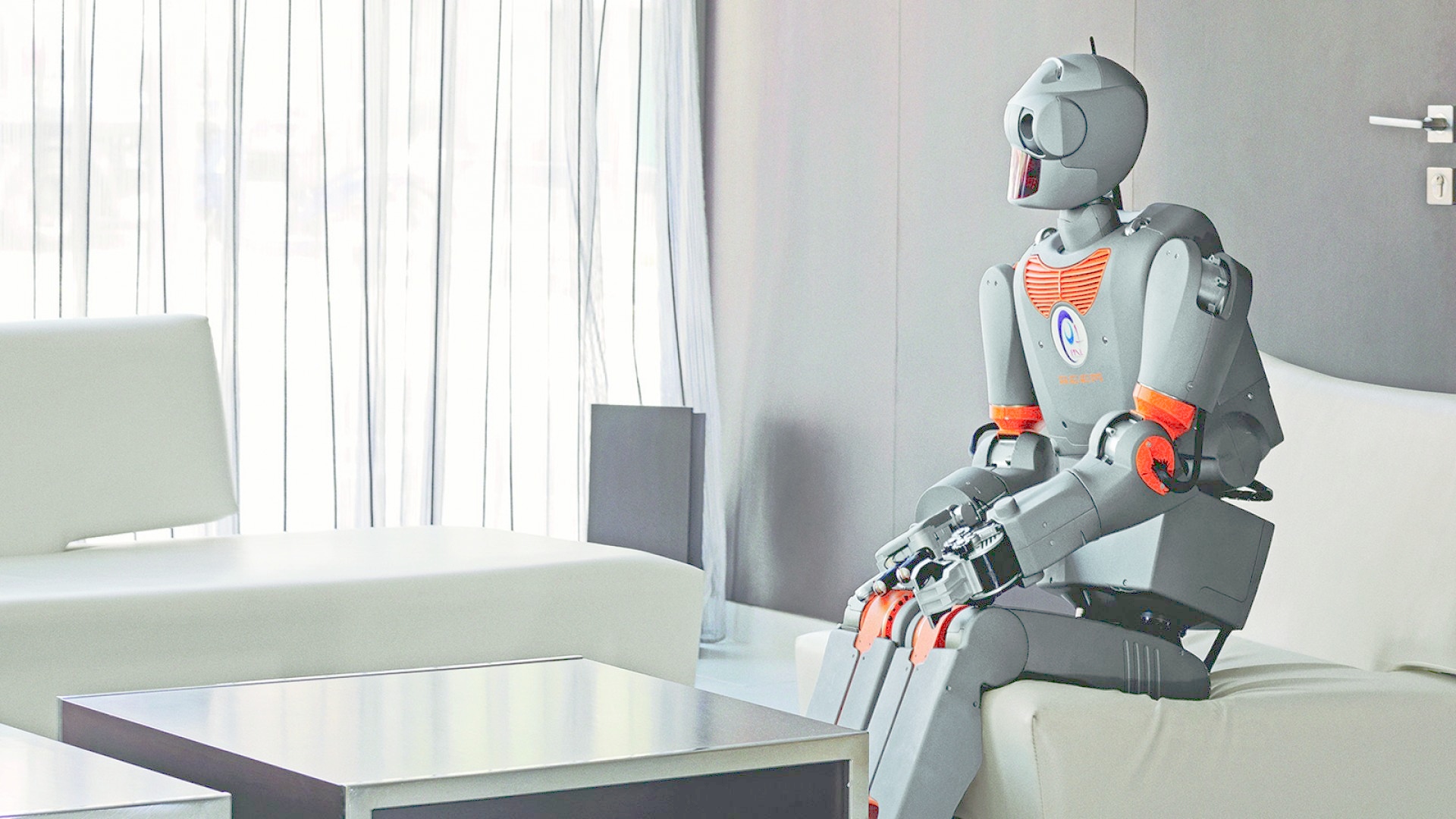

As a glimpse of the future, companies such as Boston Dynamics are capable of building robots with similar physical structures to humans, performing tasks that average humans can’t:

The point here is that robots in the near future will no doubt be able to replace low-skilled workers in menial and repetitive tasks, either by performing the tasks in a similar manner, or by changing the nature of the work itself and solving the same task in a different but more efficient manner.

I was speaking with a leader only last week who was about to replace his airport baggage handling staff with machines. He simply said that the robots will be cheaper and do a better job… so why wouldn’t he?

And the truth is, this is how these decisions are being made. To gain or maintain a competitive advantage, automation is indeed a rational choice. But what does this mean for the unskilled labourers?

What we currently know is that the gap between the rich and the poor is growing rapidly (e.g., the 3 richest people in the world possess more financial assets than the lowest 48 nations combined):

In the bottom percentiles the number of hours worked has decreased substantially, with the main reason being the demand and supply of skills.

Many argue that although machines will no doubt take on the low-skilled jobs, these workers will simply move to positions where more ‘human-like traits’ are required (e.g., emotional intelligence and creativity). We will delve into these areas to test this assumption in the following sections. But from research such as the above, the trend so far has been to replace workers without creating an equal number of opportunities elsewhere.

A level up from automation are jobs, or aspects of jobs, that require decision-making and problem-solving.

In terms of decision-making, AI is incredibly well-suited for statistical decision-making tasks. That is, given a description of the current situation, categorising the data into appropriate classes. Examples of this include speech-to-text recognition (where the auditory stream is classified into distinct words), language translation (converting one representation to another), object detection (e.g., finding objects or detecting faces in an image), medical diagnoses (e.g., detecting the presence of cancerous cells), exam grading, and modelling consumer behaviour. These systems are perfect for scenarios where there is a lot of data that can be used for training, and there are numerous examples (such as those I just listed) where machines now outperform their human counterparts.

I place problem-solving here in a slightly different category to decision-making. Problem-solving is more about how to get to a desired state given the current state, and may involve a number of steps to get there. Navigation is a perfect example of this. And we have seen how well technologies such as Google Maps have been integrated into our daily lives (e.g., calculating the fastest route given current traffic conditions, and modifying the recommendation should conditions change).

Deep learning has also had a major impact on AI approaches to problem-solving. Take for example chess. In 1997 Deep Blue, a chess-playing computer developed by IBM, beat Garry Kasparov, becoming the first computer system to defeat a reigning world champion. This system used a “brute force” approach, thinking ahead and evaluating 200 million positions per second. This is quite distinct from how human experts play chess: they play through “intuition” rather than exhaustively thinking through all possible moves.

With the advent of deep learning, AI problem-solving has become more human-like. Google’s AlphaZero for instance has beaten the world’s best chess-playing computer, teaching itself how to play in under 4 hours. Rather than using “brute force” AlphaZero uses deep learning (plus a few other tricks) to extract patterns of patterns that it can use to evaluate its next move. Thus, this is similar to human intuition, where it has a “feeling” how good a move is based on the global context. Similar to human intuition, one drawback of this approach is that it is often impossible to understand "how" the decision was made (as it is due to the combination of millions of features at different levels).

Besides chess, Google has also beaten the world champion at the ancient Chinese game of Go. This was a major achievement, as AI researchers had long regarded Go as an incredibly difficult task. In a game of chess, there are on average approximately 35 legal moves that a player can make on each turn. By comparison, the average branching factor for Go is 250, making a brute force search intractable. In 2016, AlphaGo won 4-1 against Lee Sedol, widely considered to be the greatest player of the past decade. AlphaGo’s successor, AlphaGo Zero, described in Nature, is “arguably the strongest Go player in history.”
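To get a feel for why the branching factor matters so much, here is a rough sketch. It assumes a naive search that looks at every move at every level, with no pruning or other shortcuts (real chess engines such as Deep Blue were smarter than this), and it borrows the 200 million positions per second figure quoted earlier purely as a yardstick. The function names are illustrative only.

```python
CHESS_BRANCHING = 35          # average legal moves per turn, as quoted above
GO_BRANCHING = 250            # average branching factor for Go, as quoted above
POSITIONS_PER_SECOND = 200_000_000  # Deep Blue's quoted evaluation speed


def positions_to_search(branching_factor, depth):
    """Positions reached when every move is explored `depth` turns ahead
    (assumes a naive, exhaustive search with no pruning)."""
    return branching_factor ** depth


def seconds_to_search(branching_factor, depth, rate=POSITIONS_PER_SECOND):
    """Time to evaluate all of those positions at a fixed rate."""
    return positions_to_search(branching_factor, depth) / rate


for depth in (2, 4, 6, 8):
    chess = seconds_to_search(CHESS_BRANCHING, depth)
    go = seconds_to_search(GO_BRANCHING, depth)
    print(f"depth {depth}: chess {chess:.3g} s, Go {go:.3g} s")
```

Every extra turn of look-ahead multiplies the work by 35 in chess but by 250 in Go, so the gap between the two games widens very quickly as the search deepens.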

So in short… again, there is a growing body of research and success in computer decision-making and problem-solving.

When talking about the future of work, there is often an argument that, although machines will replace many jobs, there will always be a “space between” what AI and humans can do. Accordingly, human work will simply move to areas that involve creativity and emotional intelligence - competencies that machines will never be good at. Let’s explore this argument, as it was the topic of my own PhD.

My own PhD work (gosh, around 20 years ago now) was inspired by Douglas Hofstadter and the Fluid Analogies Research Group (FARG) - a team of AI researchers investigating the fundamental processes underlying human perception and creativity.

Many of the models that FARG implemented seem trivial by today’s standards, but illustrate the core processes underlying human creativity.

One of the many examples of “creativity” that they looked at was the game “JUMBLE” - a simple newspaper game, where you are required to unscramble the given letters into a real word. Consider the scrambled letters “UFOHGT”.

Now, you are probably asking yourself what this trivial anagram task has to do with creativity. And the answer is EVERYTHING.

While trying to solve this problem, think about HOW you solve it.

Unlike Deep Blue, in solving anagrams, you will not search through every combination. But instead you will “create” word-like candidates - letter strings that follow the statistical properties of what words generally look like. E.g., you would not start with the letters “HG” together or “FG,” as statistically speaking, these are not how typical English words start.

You might instead start by chunking the letters “OU” “FT” and “GH” together, and arrange them in a sequence to create the word “GHOUFT”. But you discover that this is a non-word, and you pull it apart and try again.

Over time, you will try different combinations of word-like candidates until you come up with a real English word.

The “creative” aspect of this task lies in the fact that you generated a range of “word-like” options based on the statistical properties of English.
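The idea of generating only “word-like” candidates can be sketched in a few lines. This is a toy illustration, not the model from my thesis or from FARG: the set of plausible letter pairs and the one-word dictionary below are hand-picked, hypothetical stand-ins for real statistics of English.

```python
# Hypothetical, hand-picked letter pairs that "look like English".
# A real system would learn these from a large body of text.
PLAUSIBLE_PAIRS = {
    "FO", "OU", "UG", "GH", "HT", "HO", "UF", "FT",
    "TH", "TO", "OF", "GO", "HU", "UT", "OT",
}

# Hypothetical stand-in for a full English dictionary.
TINY_DICTIONARY = {"FOUGHT"}


def word_like_candidates(letters, prefix=""):
    """Build letter strings one letter at a time, abandoning any string
    as soon as two neighbouring letters do not form a plausible pair."""
    if not letters:
        yield prefix
        return
    for i, letter in enumerate(letters):
        if prefix and prefix[-1] + letter not in PLAUSIBLE_PAIRS:
            continue  # e.g. "HG" or "FG" never gets extended
        yield from word_like_candidates(letters[:i] + letters[i + 1:],
                                        prefix + letter)


def solve_jumble(letters):
    """Return (all word-like candidates, those that are real words)."""
    candidates = list(word_like_candidates(letters.upper()))
    real_words = [c for c in candidates if c in TINY_DICTIONARY]
    return candidates, real_words


candidates, real_words = solve_jumble("UFOHGT")
print(len(candidates), "word-like candidates out of 720 possible orderings")
print("Non-word that still looks word-like:", "GHOUFT" in candidates)
print("Real words found:", real_words)
```

The point of the sketch is that the search never touches most of the 720 possible orderings: strings that break the “statistics” are dropped early, while plausible non-words such as “GHOUFT” are generated, tested and discarded on the way to a real word.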

A demo of this (one of the many demos from my thesis) can be found below:

In short, most creativity can be viewed in this manner: there exist statistical regularities of things that “belong together”, and the creative process involves searching through a range of options until you find a global solution that is suitable. For example, music is not a random sequence of notes… but has inherent structure, with music composition exploring different combinations of notes that conform to these rules.

With the advent of deep learning, AI now is very good at extracting such statistical regularities from domains, and generating novel examples that follow the statistics of the domain.

An example of this is a system from Sony’s CSL Research Lab that can listen to music, extract the statistical regularities, and generate its own songs in the given style. As an example, the song below was generated “in the style of” the Beatles:

An example perhaps more illustrative of current advances is Google’s “drawing bot” that is capable of generating photo-realistic images, given a text description. This system was trained on captioned images, and once trained could generate its own images given a text based description.

For example, the following drawing was generated from the query “this bird is black and yellow with a short beak” (i.e. this bird does not exist in real life, and was “generated” by the algorithm rather than being retrieved):

This system can generate a range of images, including “ordinary pastoral scenes” such as grazing livestock, through to more abstract concepts such as a “floating double-decker bus.”

Another example of this is in computer programming - something that schools are focussing on, in the belief that it will be an essential skill of the future.

Enter Bayou...

Researchers from Rice University have created and are refining an application that writes code given a short verbal description from the user.

The software uses deep learning to "read the programmer's mind and predict the program they want."

So, in short... major disruption is potentially about to occur in this area as well. The future (and, as such, what we need to be teaching kids to prepare them for it) is very uncertain.

So - to answer the question that I posed above. Yes, in the short-term there may be spaces between what humans and machines are capable of, but in the near future these spaces will get smaller and smaller.

In the long-term, I certainly believe creativity is an area that could and will be outsourced to machines (particularly in the technical space of creating new ideas and solutions).

The final bastion that seems to protect humans (and our jobs) from complete redundancy, is our human emotions and emotional intelligence. Many people argue that this is a defining feature of humans that truly segregates us from machines.

Or does it?

If you believe in evolution, you should believe that humans have emotions for a reason - there is some evolutionary benefit.

Thought of in this way, most emotions are definitely here for a reason. They are our internal guidance system that tells us if we are getting things right or wrong. For example, pain and fear are incredibly important, as they prevent us from taking risks that could lead to harm. No doubt such “emotions” are useful for machines to have as well, and we already see early analogues of them in machines of today (e.g., “bump sensors”, or sensors to prevent your robot vacuum cleaner from falling down stairs - sensors that prevent them from doing things that could be self-harming).

Ok, so pain is an obviously important signal for machines to have, but what about something more complex like “happiness” - what could be the evolutionary benefit of that?

Well, I am glad you asked, as it has been part of my own research to look at pleasure centres in the brain, and develop their analogue in robots - yes, indeed “happy” robots.

You can check out some of my older research on this topic in the video below. In short, one of the many purposes of “happiness” is that it drives learning (i.e. we are naturally curious, and are as a result active participants in our own learning).

So, hopefully, watching the above video, you will understand the role of emotions, and how they are central to intelligence. So, I do definitely believe that in the near future machines will have their own emotions and drives that will increase in complexity over time. There is no real bastion that will be left standing in the end.

I have made many strong claims in the above text (i.e. that in the near future, all human jobs are in jeopardy), and I am sure that there will be more than a few people that may be skeptical at this point. Possibly because the advances in current AI are not visible in our lives - they are currently hidden away in our factories, mobile phones, and online shopping recommendations. But if you look under the hood, the technology is there, and progressing at an alarming rate.

A possible metaphor is that of the boiling frog - i.e. if you put a frog in boiling water it will jump out immediately, but if you put a frog in cool water that is brought slowly to boil it will not perceive the threat and be boiled alive (not scientifically true, but a nice metaphor).

As humans, we are used to slow and gradual change. In contrast, we are unfamiliar with exponential growth, in that something that we perceive as changing slowly today, may rapidly change tomorrow. As a result, rapid overnight changes are not something we naturally fear. But all the research suggests that advances in AI are following this exponential pattern, and there is a tipping point in the near future where changes will be rapid and unpredictable.

Ray Kurzweil, in his book “The Age of Spiritual Machines”, charts evidence of this exponential growth in terms of the increase in calculations per second of computers over time. Given the GPUs we are currently using for AI, the predictions he made with respect to where we are in 2018 are remarkably accurate.

What is scary about this graph is that if these trends continue, the average PC will have the computational power of the human brain by around 2030, and the computational power of the entire human population in around 2050.

I am not necessarily saying that these predictions are fully accurate, but I do believe that as individuals we are underestimating the rapid changes to our lives that are about to occur.

If you view AI as a species that is evolving, it is evolving at a pace unlike anything we have ever witnessed before, and in the last 10 years, progress has been remarkable.

As an interesting example of this, check out Google Duplex:

There is no doubt that there is a tsunami of change that is about to hit our shores. A tsunami that few people are expecting, with a ferocity and timescale potentially more threatening to our species than climate change.

The danger I believe, is not in the technology itself, but in how we are using it.

If we use AI unchecked for corporate competitive advantage, there is no doubt companies will choose the cheapest and most efficient option, and the employees at the lowest levels will be the first to be hit hard. But over time, it is highly likely that all of our opportunities at all levels will be washed away. And very soon.

But it is a tsunami that I do believe we can channel for the greater good if we choose to - if we don’t use it for corporate advantage, but instead use it to solve the biggest issues facing humanity such as education, poverty, famine, disease and climate change.

I also fear that this issue will be similar to climate change… that the leaders at the top will be reluctant to take action (e.g., it is unfathomable that some current world leaders are still denying that global warming is an issue, despite there being a 97% consensus by climate specialists).

So… what can be done?

They say that the Holocaust was allowed to occur in Nazi Germany because the good people sat back and did nothing. Some later justified their inaction claiming that they did not know where the trains were heading. Today, we do not have this excuse…. in terms of both climate change and AI, we know exactly where these trains are heading…. and these trains contain our children.

I think the major problem we face in stopping or redirecting these trains (i.e. pressuring the government to intervene) is what in the psychological literature is known as “bystander apathy” - the fact that people in a crowd are less likely to step in and help than individuals witnessing an atrocity alone.

With bystander apathy, people only step in to help when they:

1) notice that something is going on

2) interpret the situation as being an emergency

3) feel that they have a degree of responsibility (i.e. there is no-one else who is better suited)

4) know what to do to help.

So if it is really up to the people to upwardly manage our governments (to make sure companies act sustainably and in a way that is beneficial to humanity) - how do we avoid our own bystander apathy?

Perhaps we need some kind of global union, that is driven by good science, that aims to educate its members as to the upcoming emergencies that we need to act on. A union that people trust - a network full of local influencers, that when the time comes, can motivate others to take action, at once, in one voice, with clear instructions as to “what needs to be done” (e.g., global strikes come to mind). So the politicians hear the messages, and have no other option than to do what is right for humanity.

I am really not sure - I just know that what we are doing so far isn’t working out so well. And this does scare me terribly. I would love to hear your ideas.

Dr Scott Bolland has spent the last 25 years working in the field of Cognitive Science focussing on Artificial Intelligence platforms that mimic how the human mind works in areas such as learning, problem-solving and creativity.

He now splits his time between his 2 companies:

New Dawn Technologies - that focuses on ethical AI applications that are aimed at helping humanity

Aha Entertainment - teaching schools, businesses and teams about positive psychology - the science of human flourishing.

Dr Scott Bolland is an executive coach, international speaker, facilitator and futurist. His PhD and background (of 25 years) are in the area of Cognitive Science - the scientific study of how the mind works, spanning areas such as psychology, neuroscience, philosophy and artificial intelligence. His passion is playing in the intersection between these areas, in particular how to best prepare individuals, teams, schools and organisations to flourish in the digital age.
