Automation is perceived as a cost-saving shortcut, but until we can design better conversations with machines, firms can’t rely on robots to build trust in their services.

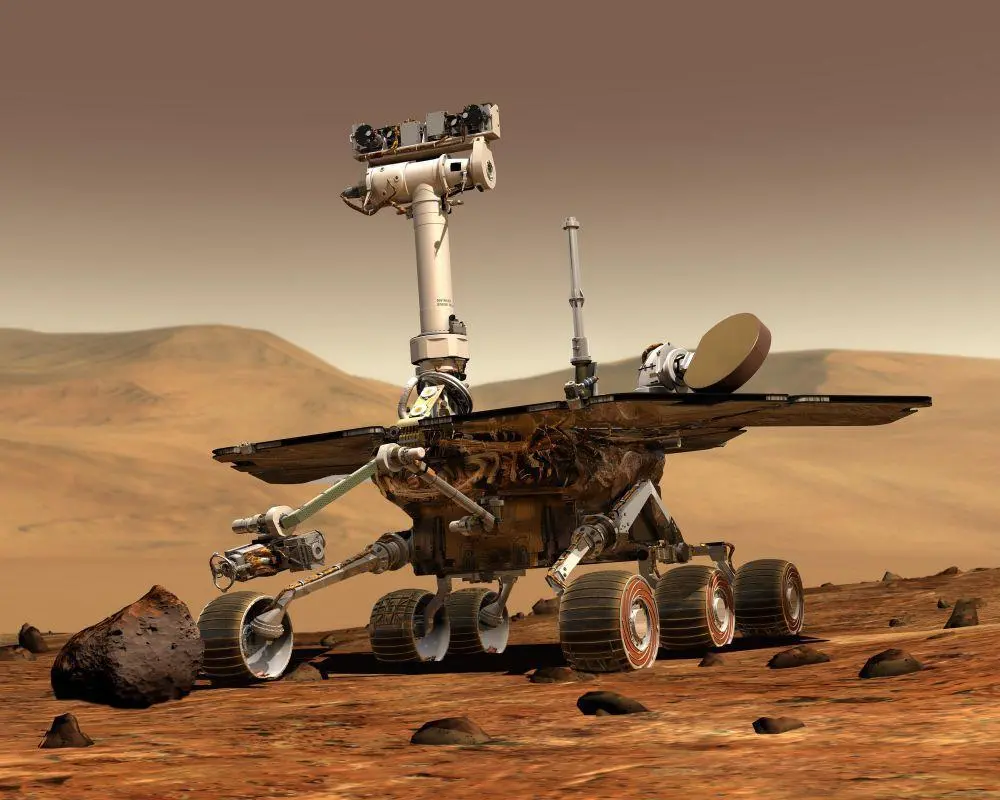

The robots are coming for us. Researchers at the University of Oxford have estimated that 47% of US jobs are at high risk of being automated by around 2033, while research by the Organisation for Economic Co-operation and Development (OECD) claims that almost 10% of jobs across its member countries are fully automatable. With robots moving from factories to offices, we need to make sure they’re used to improve our services, not just make them cheaper. In essence, we need to design better conversations with machines.

Smart assistants that use artificial intelligence (AI) have been sci-fi’s holy grail for decades, but it’s easy to feel underwhelmed with the technology now it’s here. To be frank, Alexa doesn’t come across as the smartest cookie in the jar, nor does she make the best company over a glass of wine in the evening. So where do we go from here?

Having studied human-machine interaction at university, I’ve watched developments in AI from innovative companies like Amazon and Google with interest over the past few years. How are the big players tackling the challenges we faced with tech more than a decade ago? How close are smart assistants to being able to understand the subtle ambiguities of natural language in 2017? Have today’s robots been taught to understand the sometimes vague needs and desires of consumers?

I’m sorry, I didn’t understand the question that I heard.

Amazon and Google’s product offerings may be the pinnacle of digital technology, but anyone who has interacted with Alexa or Home will know smart assistants are still fraught with frustration.

During periods of uncertainty, short-term thinking sets in, budgets are tightened and operational costs rise. Across the services sector, firms are looking for new ways to find efficiencies, and that’s why more and more of our clients have been talking to us about robots. Machines may well be the answer – at least in part – but they need to improve fast if they’re going to live up to the hype that surrounds them.

Imagine a hospital where you can’t see a GP because the robot secretary can’t understand your accent, or a bank where you can’t access your savings because there’s a typo in their records. Minor misunderstandings like these can easily be resolved by humans, but when we rely on machines to do the work, we have to play by their rules. Or at least design around their limitations. So how can we make robots not only usable, but natural and intuitive too?

While most people will be familiar with Siri or Alexa, you don’t need to be Apple or Amazon to start using this technology. Mycroft AI is an open-source alternative that enables anyone with a Raspberry Pi to cobble together their own personal assistant. Andrew Vavrek, the project’s community manager, believes it’s never been cheaper or easier for firms to build this technology into their services. He says:

“We're in a new era of development, with a growing focus on manufacturing devices to adapt to users rather than the other way around. Enabling this requires data collection, machine learning, and improving intent analysis of utterances. Essentially, our machines will continue to grow and learn along with us as we use them. And as the machines improve, so do the services we can build with them.”

At the moment, digital assistants never seem to know whether they’re being talked to or not. There’s nothing more uncanny than when Alexa eerily chimes into conversations with inane statements like: “That’s good to know.” These accidental wake-ups are only minor inconveniences, but each time they happen, people become more inclined to reach for the power plug.

Vavrek believes that trust is a key component of our relationships with machines, and that if we want machines to build that trust, we need to stop thinking visually:

“As we learn how to design better conversations, poking and prodding at screens will become obsolete and soon we’ll look back at keyboards and mice with nostalgia.”

For the past two decades, UX designers have been streamlining graphical user interfaces to make them simpler, flatter and more responsive. But these tricks only work for simple interactions. When it comes to designing more complex interactions with machines, we need to think carefully about how to use AI’s learning and improvisation abilities to better understand how people naturally speak.

The future of AI will depend heavily on designers and engineers identifying where machine learning processes can make the most impact. As the technology continues to evolve over the next couple of years, it will adapt to provide an ever-increasing number of services. For now, though, AI needs to learn to crawl before it can run.

Consumers want to feel like they’re being understood, not just listened to. Technology may seem like it’s advancing quickly, but so far few service providers have been able to deliver truly meaningful human-machine interactions. Get in touch if you’d like to co-design one in your industry.

Sarah is an entrepreneur and investor in early-stage start-ups. She is the founder of Nile, an award-winning service design firm. Her additional appointments have included elected Executive member of the British Interactive Media Association (BIMA) and advisor to the Scottish Financial Fintech Strategy group, the Design Council and the Number 10 Downing Street Digital Advisory Board. Her main areas of focus include the use of new technologies to improve the way we live and work, and the need to evolve company cultures to achieve sustainable change. She has been involved in designing future-proof currency for two countries, launched the first emergency cash service in Europe, and pioneered the world’s first digital health and symptom checkers. Her background is in human-computer interaction and behavioural psychology, and she has also served as a digital advisor to the British government. Sarah holds an MA in Psychology from the University of Aberdeen and an MSc in Human Computer Interaction from Heriot-Watt University.
